Hosting Native Docker Container on C9300

Powered by an x86 CPU, the application hosting solution on the Cisco® Catalyst® 9000 series switches provides the intelligence required at the edge. This gives administrators a platform for running their own tools and utilities, such as a security agent, an Internet of Things (IoT) sensor, or a traffic monitoring agent.

Application hosting on Cisco Catalyst 9000 family switches opens up new opportunities for innovation by converging network connectivity with a distributed application runtime environment, including hosting applications developed by partners and developers.

Cisco IOS XE 16.12.1 introduced native Docker container support on Catalyst 9300 series switches. With the Cisco IOS XE 17.1.1 release, the C9404 and C9407 models also support Docker containers. This enables users to build and bring their own applications without additional packaging. Developers don’t have to reinvent the wheel by rewriting applications every time the infrastructure changes. Once packaged within Docker, an application will work on any infrastructure that supports Docker containers.

In this blog, you will see how to host a native Docker image (iPerf) from Docker Hub on a Cisco Catalyst 9300 switch and use it to measure network performance.

Step-by-step installation and configuration

1. Download the iPerf Docker image to the local laptop.

Pull the latest iPerf image from Docker Hub. Docker Engine must be installed on the laptop for the pull to work.

MyPC$ docker pull mlabbe/iperf3

Save the downloaded iPerf Docker image as a tar archive:

MyPC$ docker save mlabbe/iperf3 > iPerf.tar
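Before copying the archive to the switch, it can be worth confirming that the file is a readable image archive. The following is a minimal sketch, assuming the archive was written by `docker save` and therefore carries a `manifest.json` at its root; the function name `list_image_tags` is hypothetical and not part of any Docker or Cisco tooling.

```python
import json
import tarfile


def list_image_tags(archive):
    """Return the repo tags recorded in a `docker save` archive.

    `archive` is either a file path or an open binary file object.
    Assumption: the archive has a top-level manifest.json.
    """
    if isinstance(archive, str):
        tar = tarfile.open(archive)
    else:
        tar = tarfile.open(fileobj=archive)
    with tar:
        # extractfile raises KeyError if manifest.json is missing.
        manifest = json.load(tar.extractfile("manifest.json"))
    tags = []
    for image in manifest:
        # RepoTags can be null for untagged images.
        tags.extend(image.get("RepoTags") or [])
    return tags


# Hypothetical usage on the laptop, with the archive saved above:
# list_image_tags("iPerf.tar")
```

If the call raises `KeyError` or `tarfile.ReadError`, the file is probably not a complete `docker save` archive and should be saved again before it is copied.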

2. Log in to the Catalyst 9300 and copy the iPerf.tar archive to the flash: drive.

copy usbflash0:iPerf.tar flash:

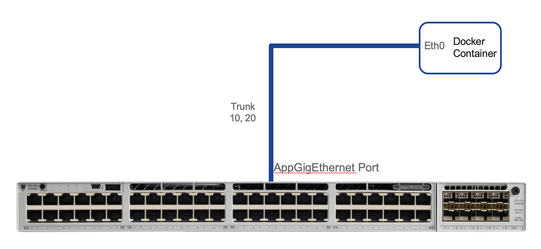

3. Configure network connectivity to the iPerf Docker container.

a. Create the VLAN and VLAN interface:

conf t

interface Vlan123

ip address 192.168.1.1 255.255.255.0

b. Configure the AppGigabitEthernet1/0/1 interface:

interface AppGigabitEthernet1/0/1

switchport mode trunk

A trunk port carries all VLANs by default, so the above configuration allows VLAN 123 on the AppGigabitEthernet port.

c. Map vNIC interface eth0 of the iPerf Docker container to VLAN 123 on the AppGigabitEthernet1/0/1 interface:

conf t

app-hosting appid iPerf

app-vnic AppGigabitEthernet trunk

guest-interface 0

vlan 123 guest-interface 0

guest-ipaddress 172.26.123.202 netmask 255.255.255.0

app-default-gateway 172.26.123.1 guest-interface 0
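A common mistake in this step is a default gateway that falls outside the guest interface's subnet. The sketch below checks this offline with Python's standard `ipaddress` module, using the addresses from the configuration above; the helper name `same_subnet` is hypothetical.

```python
import ipaddress


def same_subnet(guest_ip, netmask, gateway):
    """Return True if the gateway lies inside the guest interface's subnet."""
    network = ipaddress.ip_interface(f"{guest_ip}/{netmask}").network
    return ipaddress.ip_address(gateway) in network


# Values taken from the app-hosting configuration above.
print(same_subnet("172.26.123.202", "255.255.255.0", "172.26.123.1"))  # True
```

The check only validates the addressing plan. It does not confirm that a device on VLAN 123 actually answers at the gateway address.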

4. Enable and verify App Hosting.

a. Verify that the USB SSD-120G flash storage is used.

dir usbflash1:

Directory of usbflash1:/

11 drwx 16384 Mar 25 2019 22:32:36 +00:00 lost+found

118014062592 bytes total (105824313344 bytes free)

Note: The SSD-120G is shown as usbflash1: in the IOS XE CLI. The internal flash and the front-panel USB (usbflash0:) do not support application hosting.
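To see at a glance how much room the SSD has for hosted applications, the summary line of the `dir` output can be converted to gigabytes. This is a small sketch that assumes the summary line has the same layout as the sample above; `parse_dir_summary` is a hypothetical helper name.

```python
import re


def parse_dir_summary(line):
    """Extract (total_bytes, free_bytes) from the summary line of `dir` output."""
    match = re.search(r"(\d+) bytes total \((\d+) bytes free\)", line)
    if match is None:
        raise ValueError("no storage summary found")
    return int(match.group(1)), int(match.group(2))


# Summary line copied from the `dir usbflash1:` output above.
total, free = parse_dir_summary("118014062592 bytes total (105824313344 bytes free)")
print(f"{free / 1e9:.1f} GB free of {total / 1e9:.1f} GB")  # 105.8 GB free of 118.0 GB
```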

b. Enable IOx for App Hosting and verify that its services are running:

conf t

iox

show iox-service

IOx Infrastructure Summary:

---------------------------

IOx service (CAF) : Running

IOx service (HA) : Running

IOx service (IOxman) : Running

Libvirtd : Running

Dockerd : Running

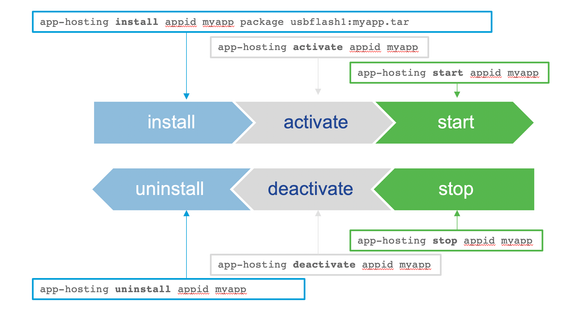
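When this check is scripted, the `show iox-service` output can be scanned for any service that is not reported as Running. The sketch below assumes the `name : state` layout shown above and treats every other state as needing attention; `iox_services_down` is a hypothetical helper name.

```python
SAMPLE = """\
IOx Infrastructure Summary:
---------------------------
IOx service (CAF) : Running
IOx service (HA) : Running
IOx service (IOxman) : Running
Libvirtd : Running
Dockerd : Running
"""


def iox_services_down(output):
    """Return the names of services whose state is anything but Running."""
    down = []
    for line in output.splitlines():
        name, sep, state = line.rpartition(":")
        state = state.strip()
        # Skip lines without a colon and the header, which has no state.
        if sep and state and state != "Running":
            down.append(name.strip())
    return down


print(iox_services_down(SAMPLE))  # []
```

An empty list means every listed service is running and the application can be installed.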

5. Install, activate, and start the iPerf Docker application.

a. Deploy the iPerf Docker application:

app-hosting install appid iPerf package flash:iPerf.tar

Installing package 'flash:iPerf.tar' for iPerf. Use 'show app-hosting list' for progress.

show app-hosting list

App id State

------------------------------------------------------

iPerf DEPLOYED

b. Activate the iPerf Docker application:

app-hosting activate appid iPerf

iPerf activated successfully

Current state is: ACTIVATED

c. Start the iPerf Docker application:

app-hosting start appid iPerf

iPerf started successfully

Current state is: RUNNING

d. Verify that the iPerf Docker application is running:

show app-hosting list

App id State

---------------------------------------------------------

iPerf RUNNING
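The lifecycle in this step moves through three states: DEPLOYED after install, ACTIVATED after activate, and RUNNING after start. The sketch below reads the state from `show app-hosting list` output and suggests the next command. It assumes the two-column layout shown above, and the names `NEXT_STEP`, `parse_app_states`, and `next_command` are hypothetical.

```python
# Forward lifecycle from step 5: each state maps to the command that advances it.
NEXT_STEP = {
    "DEPLOYED": "app-hosting activate appid {appid}",
    "ACTIVATED": "app-hosting start appid {appid}",
}


def parse_app_states(output):
    """Map app id to state from `show app-hosting list` output."""
    states = {}
    for line in output.splitlines():
        parts = line.split()
        # Data rows have exactly two fields: the app id and an upper-case state.
        if len(parts) == 2 and parts[1].isupper():
            states[parts[0]] = parts[1]
    return states


def next_command(output, appid):
    """Return the next lifecycle command for appid, or None if no step applies."""
    state = parse_app_states(output).get(appid)
    template = NEXT_STEP.get(state)
    return template.format(appid=appid) if template else None


listing = "App id State\n------------------------------\niPerf DEPLOYED\n"
print(next_command(listing, "iPerf"))  # app-hosting activate appid iPerf
```

For an application that is already RUNNING, or one that is not in the list, `next_command` returns `None`.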

6. Working with the app.

a. Check app details.

show app-hosting detail appid iperf

App id : iperf

Owner : iox

State : RUNNING

Application

Type : docker

Name : mlabbe/iperf3

Version : latest

Description :

Path : usbflash0:iperf3_sai.tar

Activated profile name : custom

Resource reservation

Memory : 2048 MB

Disk : 4000 MB

CPU : 7400 units

VCPU : 1 units

Attached devices

Type Name Alias

---------------------------------------------

serial/shell iox_console_shell serial0

serial/aux iox_console_aux serial1

serial/syslog iox_syslog serial2

serial/trace iox_trace serial3

Network interfaces

---------------------------------------

eth0:

MAC address : 52:54:dd:50:b5:ce

IPv4 address : 172.26.123.202

Network name : mgmt-bridge193

Docker

------

Run-time information

Command :

Entry-point : iperf3 -s

Run options in use :

Application health information

Status : 0

Last probe error :

Last probe output :

b. Check app utilization.

show app-hosting utilization appid iPerf

Application: iPerf

CPU Utilization:

CPU Allocation: 7400 units

CPU Used: 1.49 %

Memory Utilization:

Memory Allocation: 2048 MB

Memory Used: 893 KB

Disk Utilization:

Disk Allocation: 4000 MB

Disk Used: 0.00 MB

7. Measure performance between the laptop and the C9300.

MyPC$ iperf3 -c 172.26.123.202

Connecting to host 172.26.123.202, port 5201

[ 5] local 10.154.164.97 port 59408 connected to 172.26.123.202 port 5201

[ ID] Interval Transfer Bitrate

[ 5] 0.00-1.00 sec 7.51 MBytes 63.0 Mbits/sec

[ 5] 1.00-2.00 sec 8.24 MBytes 69.2 Mbits/sec

[ 5] 2.00-3.00 sec 10.0 MBytes 84.2 Mbits/sec

[ 5] 3.00-4.00 sec 9.52 MBytes 79.9 Mbits/sec

[ 5] 4.00-5.00 sec 9.36 MBytes 78.5 Mbits/sec

[ 5] 5.00-6.00 sec 10.8 MBytes 90.8 Mbits/sec

[ 5] 6.00-7.00 sec 10.1 MBytes 84.9 Mbits/sec

[ 5] 7.00-8.00 sec 9.62 MBytes 80.7 Mbits/sec

[ 5] 8.00-9.00 sec 10.9 MBytes 91.2 Mbits/sec

[ 5] 9.00-10.00 sec 4.51 MBytes 37.7 Mbits/sec

- - - - - - - - - - - - - - - - - - - - - - - - -

[ ID] Interval Transfer Bitrate

[ 5] 0.00-10.00 sec 90.6 MBytes 76.0 Mbits/sec sender

[ 5] 0.00-10.01 sec 90.1 MBytes 75.5 Mbits/sec receiver
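As a sanity check on the summary rows, the per-second bitrates can be averaged and compared with the reported sender rate. The sketch below parses the text output shown above. It assumes every interval is reported in MBytes and Mbits/sec, as in this run, and the names `INTERVAL` and `mean_interval_bitrate` are hypothetical.

```python
import re

# Matches rows such as "[ 5] 0.00-1.00 sec 7.51 MBytes 63.0 Mbits/sec".
# Summary rows carry a trailing "sender" or "receiver" label (group 3).
INTERVAL = re.compile(
    r"\[\s*\d+\]\s+[\d.]+-[\d.]+\s+sec\s+([\d.]+)\s+MBytes"
    r"\s+([\d.]+)\s+Mbits/sec(?:\s+(sender|receiver))?"
)

SAMPLE = """\
[ 5] 0.00-1.00 sec 7.51 MBytes 63.0 Mbits/sec
[ 5] 1.00-2.00 sec 8.24 MBytes 69.2 Mbits/sec
[ 5] 2.00-3.00 sec 10.0 MBytes 84.2 Mbits/sec
[ 5] 3.00-4.00 sec 9.52 MBytes 79.9 Mbits/sec
[ 5] 4.00-5.00 sec 9.36 MBytes 78.5 Mbits/sec
[ 5] 5.00-6.00 sec 10.8 MBytes 90.8 Mbits/sec
[ 5] 6.00-7.00 sec 10.1 MBytes 84.9 Mbits/sec
[ 5] 7.00-8.00 sec 9.62 MBytes 80.7 Mbits/sec
[ 5] 8.00-9.00 sec 10.9 MBytes 91.2 Mbits/sec
[ 5] 9.00-10.00 sec 4.51 MBytes 37.7 Mbits/sec
[ 5] 0.00-10.00 sec 90.6 MBytes 76.0 Mbits/sec sender
[ 5] 0.00-10.01 sec 90.1 MBytes 75.5 Mbits/sec receiver
"""


def mean_interval_bitrate(output):
    """Average the per-interval bitrates in Mbits/sec, skipping summary rows."""
    rates = []
    for line in output.splitlines():
        match = INTERVAL.search(line)
        if match and match.group(3) is None:
            rates.append(float(match.group(2)))
    if not rates:
        raise ValueError("no interval rows found")
    return sum(rates) / len(rates)


print(f"{mean_interval_bitrate(SAMPLE):.1f} Mbits/sec")  # 76.0 Mbits/sec
```

The mean of the ten intervals agrees with the 76.0 Mbits/sec sender summary, which suggests the capture is complete.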