Is a FI capable of making a loop?

02-07-2014 07:56 AM - last edited on 03-25-2019 01:38 PM by ciscomoderator

Hi there, guys! I have a question.

Is there any chance that a Fabric Interconnect 6296 in EHM can create a loop in a LAN?

A couple of weeks ago we tried to upgrade from a 6140XP to a 6296UP. When the first fabric interconnect (6296) was put into the cluster, one chassis went crazy and its fans started making a very loud noise. The whole network went into a loop and we had to roll back. When the 6140XP was put back into the cluster, chassis 3 went back to normal. The loop in the LAN stopped when I disconnected the uplinks from the 6296UP.

So I hope someone can answer my question and point me to a document or other information about the anti-loop mechanisms.

Greetings.

Labels: Unified Computing System (UCS)


02-07-2014 12:48 PM

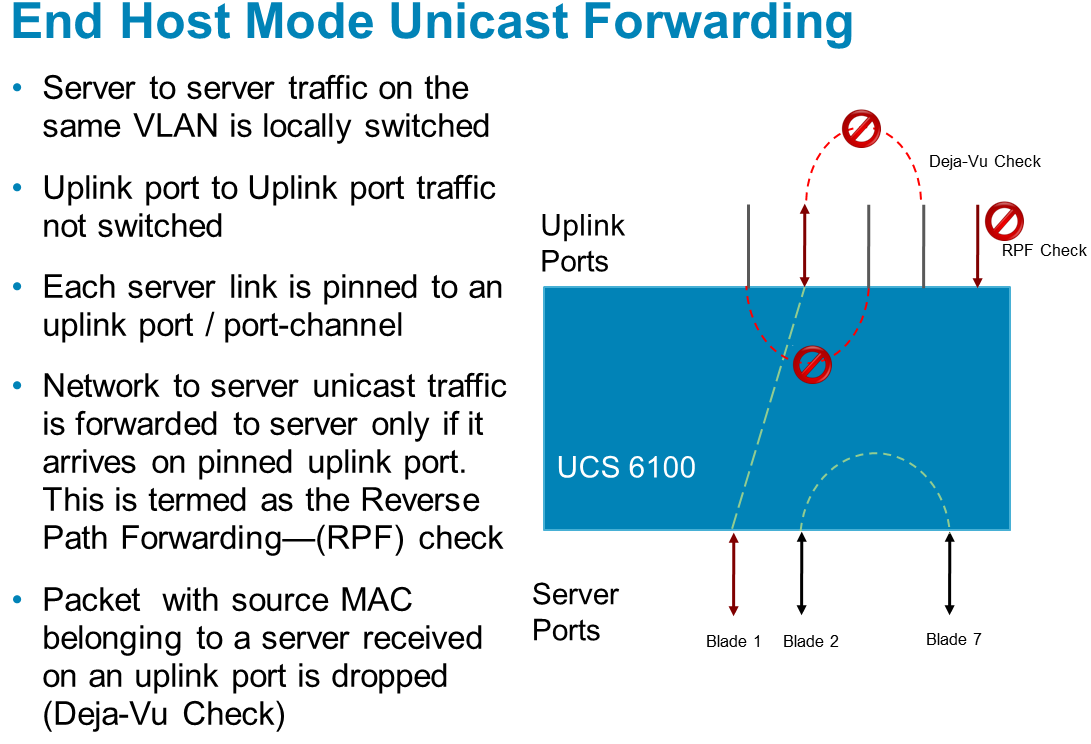

Can you please clarify what you mean by a loop in the LAN? In EHM there is no spanning tree, and a loop-avoidance mechanism is in place (see below).

The fact that the chassis fans are running at full power is not related to a loop!
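
For readers who have not seen the end-host-mode checks spelled out, here is a rough Python sketch of the commonly documented rules: frames arriving on an uplink are never forwarded to another uplink, a frame whose source MAC belongs to a local server is dropped (the "deja-vu" check), unicast is only accepted on the uplink the destination server is pinned to (the RPF check), and broadcast is only accepted on one designated uplink. This is a toy model, not Cisco code; the class, method names, MAC strings, and uplink names are all made up for illustration.

```python
from dataclasses import dataclass

BROADCAST = "ff:ff:ff:ff:ff:ff"


@dataclass
class EndHostModeFI:
    """Toy model of end-host-mode forwarding decisions (hypothetical, not Cisco code)."""

    pinning: dict[str, str]   # server MAC -> uplink that server is pinned to
    broadcast_listener: str   # the one uplink allowed to receive broadcast

    def accept_from_uplink(self, ingress_uplink: str, src_mac: str, dst_mac: str) -> tuple[bool, str]:
        """Decide whether a frame arriving from the LAN is accepted.

        Accepted frames only ever go to server ports, never to another uplink,
        which is why the FI cannot bridge two upstream switches together.
        """
        # Deja-vu check: our own server's frame came back from the network.
        if src_mac in self.pinning:
            return False, "deja-vu drop"
        if dst_mac == BROADCAST:
            # Broadcast is accepted on a single designated uplink only.
            if ingress_uplink != self.broadcast_listener:
                return False, "broadcast drop"
            return True, "flood to server ports"
        # Unknown unicast from the LAN is not flooded.
        if dst_mac not in self.pinning:
            return False, "unknown unicast drop"
        # RPF check: must arrive on the uplink the destination is pinned to.
        if self.pinning[dst_mac] != ingress_uplink:
            return False, "rpf drop"
        return True, "deliver to server port"

    def egress_for_server_frame(self, src_mac: str, dst_mac: str) -> str:
        """Pick the egress for a frame sent by a local server."""
        if dst_mac in self.pinning:
            return "local server port"    # switched locally inside the FI
        return self.pinning[src_mac]      # otherwise leave on the pinned uplink


fi = EndHostModeFI(
    pinning={"server-a": "uplink1", "server-b": "uplink2"},
    broadcast_listener="uplink1",
)
print(fi.accept_from_uplink("uplink2", "server-a", BROADCAST))
print(fi.accept_from_uplink("uplink2", "lan-host", "server-a"))
print(fi.accept_from_uplink("uplink1", "lan-host", "server-a"))
```

In this model no code path sends an uplink frame back out an uplink, so a healthy FI in EHM should not form a bridging loop by itself; it says nothing about what happens if the FI is misconfigured or not actually running in EHM.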


02-07-2014 01:21 PM

Hi wdey, thank you for responding.

While the 6296UP was connected, all services on my network were down, with no management through SSH/Telnet on the 6500 or the N7K.

What you are saying about the chassis is correct, but what is strange is that when the 6296 was connected, the chassis lost communication and the fans went to full power. At the time we did some troubleshooting, but the only way to solve the problem was to roll back.


02-07-2014 01:27 PM

Did you follow the procedure in http://www.cisco.com/en/US/docs/unified_computing/ucs/sw/upgrading/from1.4/to2.0/b_UpgradingCiscoUCSFrom1.4To2.0_chapter_0101.pdf ?

The upgrade described here is primarily for upgrading a Cisco UCS 6120 fabric interconnect to a Cisco UCS 6248. If you have a Cisco UCS 6140 fabric interconnect that has 48 or less physical ports connected, you will also be able to utilize these steps, the same principles will apply though you will have to manually map any ports connected to slot 3 on a UCS 6140 as the UCS 6248 has no slot 3. The same considerations will also apply when upgrading a Cisco UCS 6140 fabric interconnect to a Cisco UCS 6296, though as the 6296 has enough slots that no manual re-mapping will be necessary.

There might have been a problem with the port function assignment.


02-07-2014 01:39 PM

- We are migrating from a FI 6140XP to a full FI 6296UP, so the port mapping is not a problem.

- All the hardware is on the same version (v2.1).

- I followed the procedure described in the same document you provided.

The problems began the moment the first 6296UP was incorporated into the cluster: one chassis (we have 10 chassis) raised an alarm and its fans went to full power; we lost management access to the N7000 and 6500 switches; the UCSM console lost communication with the fabric several seconds after login; and pinging the FI cluster IP showed packet loss.

Greetings.


02-07-2014 02:59 PM

I still don't understand. If you swap, e.g., one FI for a 6296, you have to cable up the chassis and configure the server ports, the uplinks to your N7K and 6500, and the heartbeat links.

Maybe you swapped the FI master node, where UCSM was running, and thereby created a split-brain situation; this would explain the loss of the UCSM session.

I have done this procedure several times without problems: start with just one fabric, the subordinate (slave) FI, and then, once everything is running OK, do the other fabric.
