Is this a bug in hx_post_install?

09-16-2021 04:39 AM - edited 09-16-2021 08:12 PM

[Edit: 2021.09.17 Using @Steven Tardy's comprehensive answer, I was able to update the script to run properly. You can read about it on my blog. Sorry, I can't paste the link here because it's against the rules, but a search for "rednectar hyperflex hx_post_install fix" should get you close (it might take a day or two for the search engines to find it).]

Hi,

I ran the hx_post_install --vallidate script on a HyperFlex cluster today, and examined the output carefully.

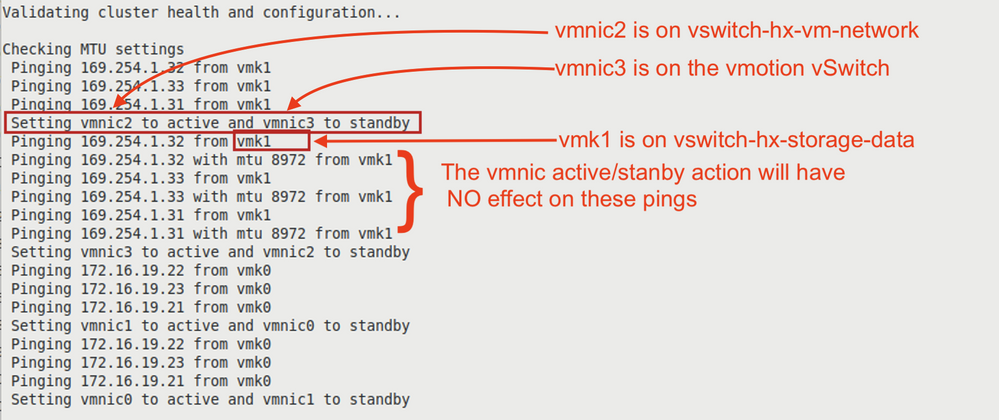

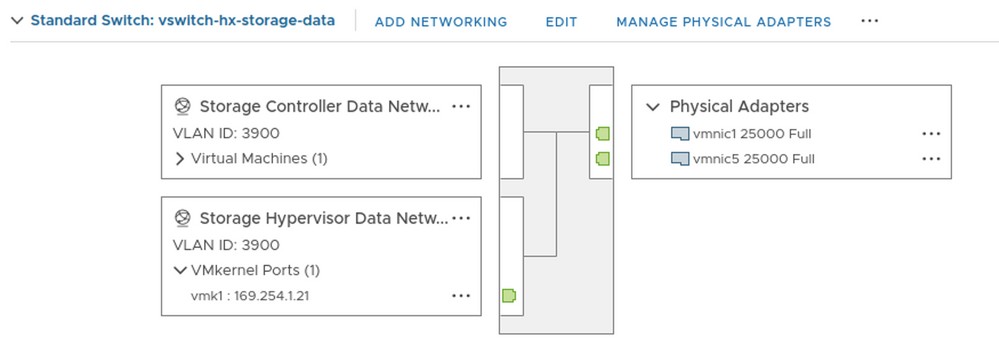

Until now (I've run this script at least 50 times), I had blindly assumed that the MTU test was testing the vswitch-hx-storage-data vSwitch by shutting down the primary vmnic on the vSwitch (vmnic5) and sending 8972-byte packets to the other vmk1 interface IPs.

But if the output of the command is to be believed, this is NOT what the script does.

What it (says it) does is set vmnic2 to active (vmnic2 is on vswitch-hx-vm-network and already active) and set vmnic3 to standby (vmnic3 is on the vmotion vSwitch)

And then it does the big packet ping via the vswitch-hx-storage-data vswitch

What a complete waste of time if the printed output is accurate. The vmnic active/standby action will have NO effect on these pings.
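For reference, the 8972-byte size follows from the 9000-byte MTU on the storage network: an unfragmented ping has to leave room for a 20-byte IPv4 header and an 8-byte ICMP header. The sketch below shows the arithmetic and how such a ping could be issued by hand with `vmkping`. The helper names and the target IP are hypothetical, and it is an assumption that the script's test is equivalent to this command.

```python
IP_HEADER = 20    # IPv4 header without options
ICMP_HEADER = 8   # ICMP echo header


def max_ping_payload(mtu):
    """Largest ICMP echo payload that fits in one unfragmented IPv4 packet."""
    return mtu - IP_HEADER - ICMP_HEADER


def vmkping_command(vmk, mtu, target_ip):
    """Build (but do not run) a don't-fragment vmkping for the given MTU."""
    # -I selects the VMkernel interface, -d sets the don't-fragment bit,
    # -s sets the ICMP payload size.
    return ["vmkping", "-I", vmk, "-d", "-s", str(max_ping_payload(mtu)), target_ip]


print(max_ping_payload(9000))  # 8972, storage data network
print(max_ping_payload(1500))  # 1472, management network
# 192.0.2.10 is a placeholder documentation address, not a real cluster IP.
print(" ".join(vmkping_command("vmk1", 9000, "192.0.2.10")))
```

A ping like this only exercises whichever uplink is currently active on the vSwitch that carries vmk1, which is why the choice of vmnic for the failover step matters.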

Now there are TWO possible explanations:

1. Either the script actually sets vmnic5 to standby and vmnic1 to active and actually tests the vSwitch properly, but has some error in the way it prints the information, or

2. the script is a total waste of time, and the printed information is accurate.

If 2 above is correct, it's actually worse than a total waste of time, because the script puts vmnic2 to standby, but it should remain active (both vmnic2 and vmnic6 are active on vswitch-hx-vm-network).

However, that standby action may actually fail. Certainly the vswitch-hx-vm-network still has both adapters active after running the script.

Now, I once had a case where I THOUGHT the script SHOULD fail, but it didn't, so I assumed that I was wrong. I'm beginning to think that maybe I wasn't wrong and should have checked closer. (I hope that customer never loses the connection to FI-B)

SO - could I ask one of the Cisco gurus who check this page from time to time to tell me exactly what the script does, and tell me if I'm wasting my time running the --vallidate option?

And while on the case: when I run the script WITHOUT the --vallidate option, the script asks if I want to do a health check, and even when I answer "yes" it does NOT do the SAME health check as running with the --vallidate option. That is confusing. It SHOULD run the whole health check, just to save me having to run it a second time and going through all the logins again.

Forum Tips: 1. Paste images inline - don't attach. 2. Always mark helpful and correct answers, it helps others find what they need.

Accepted Solutions


09-16-2021 06:03 AM

Looks like this is a vNIC order issue.

Within post_install the VMware vmnics are hard coded:

    vsList = [
        {'vswitch': 'vswitch-hx-storage-data', 'vmk': 'vmk1', 'active_vnic': 'vmnic3',
         'standby_vnic': 'vmnic2', 'mtu': 8972, 'ips': controllerList},
        {'vswitch': 'vswitch-hx-inband-mgmt', 'vmk': 'vmk0', 'active_vnic': 'vmnic0',
         'standby_vnic': 'vmnic1', 'mtu': 1472, 'ips': serverList},
    ]

This works for M4 with the VIC 1200 generation, because from ESXi the vNIC order is ABABABAB (storage-a is vmnic2 and storage-b is vmnic3):

    [root@hx-01a-esxi-1:~] esxcfg-nics -l
    Name    PCI           Driver  Link  Speed      Duplex  MAC Address        MTU   Description
    vmnic0  0000:05:00.0  nenic   Up    10000Mbps  Full    00:25:b5:c0:a1:01  1500  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic1  0000:06:00.0  nenic   Up    10000Mbps  Full    00:25:b5:c0:b2:01  1500  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic2  0000:07:00.0  nenic   Up    10000Mbps  Full    00:25:b5:c0:a3:01  9000  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic3  0000:08:00.0  nenic   Up    10000Mbps  Full    00:25:b5:c0:b4:01  9000  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic4  0000:09:00.0  nenic   Up    10000Mbps  Full    00:25:b5:c0:a5:01  1500  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic5  0000:0a:00.0  nenic   Up    10000Mbps  Full    00:25:b5:c0:b6:01  1500  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic6  0000:0b:00.0  nenic   Up    10000Mbps  Full    00:25:b5:c0:a7:01  9000  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic7  0000:0c:00.0  nenic   Up    10000Mbps  Full    00:25:b5:c0:b8:01  9000  Cisco Systems Inc Cisco VIC Ethernet NIC

But on M5 with the VIC 1300 generation, ESXi reorders the vNICs as AAAABBBB (storage-a is vmnic1 and storage-b is vmnic5):

    [root@HX-07-1:~] esxcfg-nics -l
    Name    PCI           Driver  Link  Speed      Duplex  MAC Address        MTU   Description
    vmnic0  0000:3f:00.0  nenic   Up    40000Mbps  Full    00:25:b5:c7:a1:01  1500  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic1  0000:3f:00.1  nenic   Up    40000Mbps  Full    00:25:b5:c7:a3:01  9000  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic2  0000:3f:00.2  nenic   Up    40000Mbps  Full    00:25:b5:c7:a5:01  1500  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic3  0000:3f:00.3  nenic   Up    40000Mbps  Full    00:25:b5:c7:a7:01  9000  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic4  0000:3f:00.4  nenic   Up    40000Mbps  Full    00:25:b5:c7:b2:01  1500  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic5  0000:3f:00.5  nenic   Up    40000Mbps  Full    00:25:b5:c7:b4:01  9000  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic6  0000:3f:00.6  nenic   Up    40000Mbps  Full    00:25:b5:c7:b6:01  1500  Cisco Systems Inc Cisco VIC Ethernet NIC
    vmnic7  0000:3f:00.7  nenic   Up    40000Mbps  Full    00:25:b5:c7:b8:01  9000  Cisco Systems Inc Cisco VIC Ethernet NIC
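One way to avoid depending on the vmnic numbering is to look the storage uplinks up by MAC address. In both listings above the fifth MAC octet is a3 on the storage-a vmnic and b4 on the storage-b vmnic, regardless of order. The following is a minimal sketch under that assumption. The function name and constants are hypothetical, this is not how post_install currently works, and the octet convention may not hold on clusters built with different MAC pools.

```python
import re

MAC_RE = re.compile(r"\b(?:[0-9a-f]{2}:){5}[0-9a-f]{2}\b", re.IGNORECASE)

# Assumption taken from the two listings above: fifth MAC octet a3 marks
# the storage-a vNIC and b4 marks the storage-b vNIC.
STORAGE_A_OCTET = "a3"
STORAGE_B_OCTET = "b4"


def storage_vmnics(esxcfg_nics_output):
    """Return (storage_a_vmnic, storage_b_vmnic) from `esxcfg-nics -l` text."""
    by_octet = {}
    for line in esxcfg_nics_output.splitlines():
        fields = line.split()
        if not fields or not fields[0].startswith("vmnic"):
            continue  # skip the prompt, the header row, and blank lines
        mac = MAC_RE.search(line)
        if mac is None:
            continue
        fifth_octet = mac.group(0).lower().split(":")[4]
        by_octet[fifth_octet] = fields[0]
    try:
        return by_octet[STORAGE_A_OCTET], by_octet[STORAGE_B_OCTET]
    except KeyError as missing:
        raise ValueError(f"no vmnic with fifth MAC octet {missing}") from None


# Two rows copied from the M5 listing above.
M5_SAMPLE = """\
vmnic1 0000:3f:00.1 nenic Up 40000Mbps Full 00:25:b5:c7:a3:01 9000 Cisco Systems Inc Cisco VIC Ethernet NIC
vmnic5 0000:3f:00.5 nenic Up 40000Mbps Full 00:25:b5:c7:b4:01 9000 Cisco Systems Inc Cisco VIC Ethernet NIC
"""

print(storage_vmnics(M5_SAMPLE))  # ('vmnic1', 'vmnic5')
```

Fed the M4 rows instead, the same function returns vmnic2 and vmnic3, which matches the hard-coded values in vsList.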

I will submit a defect against HyperFlex post_install.

09-16-2021 01:46 PM

Thanks @Steven Tardy,

I hope the upcoming M6 models use the same ordering.

And double thanks for the comprehensive explanation.
