Prevent broadcast storm from VMs under FI


06-21-2018 01:10 AM - edited 03-01-2019 01:35 PM

Hi,

We have a pair of Fabric Interconnect 9296 units.

The blades run ESXi, with VMs on top.

Yesterday we had network issues. After two hours of troubleshooting, we found that a VM on one of the ESXi hosts was acting as a virtual switch and was flooding the whole subnet with a broadcast storm. We powered off that VM, and everything is now back to normal.

How can we prevent this from happening in the future?

On our network devices, we configure a BPDU command on all user-facing interfaces to prevent this, but what can I do in a UCSM environment?

Thanks a lot in advance,




06-21-2018 03:27 AM - edited 06-21-2018 03:28 AM

Greetings.

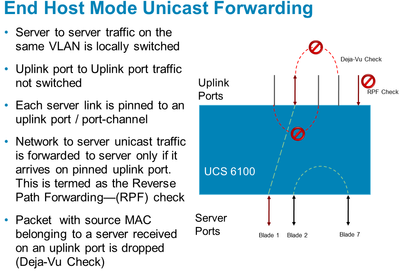

By design, the UCSM/FIs have several mechanisms, including the deja vu check and the RPF check, that prevent loops.

If you have misbehaving hosts or VMs that are continuously sending out broadcast traffic, the FIs are simply going to deliver the broadcast frames for that VLAN.

I would take a Wireshark capture of the traffic to confirm exactly what it is, and evaluate any guest VMs that have multiple NICs and any sort of bridging config.
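As a rough illustration of what to look for in such a capture, the sketch below counts broadcast frames per source MAC and VLAN. It assumes the capture was saved in the classic pcap format (not pcapng) with an Ethernet link type; the function names are made up for this example and are not part of Wireshark or any Cisco or VMware tool.

```python
import struct
from collections import Counter

BROADCAST = b"\xff" * 6   # destination MAC ff:ff:ff:ff:ff:ff
VLAN_TPID = 0x8100        # EtherType that marks an 802.1Q tag


def parse_frame(frame):
    """Return (dst, src, vlan_id or None) for an Ethernet frame, or None if too short."""
    if len(frame) < 14:
        return None
    dst, src = frame[0:6], frame[6:12]
    (ethertype,) = struct.unpack("!H", frame[12:14])
    vlan = None
    if ethertype == VLAN_TPID and len(frame) >= 18:
        (tci,) = struct.unpack("!H", frame[14:16])
        vlan = tci & 0x0FFF  # the low 12 bits of the tag carry the VLAN ID
    return dst, src, vlan


def iter_pcap_frames(data):
    """Yield raw frames from the bytes of a classic pcap file."""
    magic = data[:4]
    if magic == b"\xd4\xc3\xb2\xa1":
        endian = "<"
    elif magic == b"\xa1\xb2\xc3\xd4":
        endian = ">"
    else:
        raise ValueError("not a classic (microsecond) pcap file")
    offset = 24  # skip the 24-byte global header
    while offset + 16 <= len(data):
        _sec, _usec, incl_len, _orig_len = struct.unpack(
            endian + "IIII", data[offset:offset + 16]
        )
        offset += 16
        yield data[offset:offset + incl_len]
        offset += incl_len


def broadcast_talkers(data):
    """Count broadcast frames keyed by (source MAC string, VLAN ID or None)."""
    counts = Counter()
    for frame in iter_pcap_frames(data):
        parsed = parse_frame(frame)
        if parsed is None:
            continue
        dst, src, vlan = parsed
        if dst == BROADCAST:
            mac = ":".join(f"{b:02x}" for b in src)
            counts[(mac, vlan)] += 1
    return counts
```

With a capture file on disk (the name `capture.pcap` is only a placeholder), `broadcast_talkers(open("capture.pcap", "rb").read()).most_common(10)` would list the ten busiest broadcast sources.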

Thanks,

Kirk...


06-21-2018 05:09 AM

Hi, thanks for your reply,

When the broadcast storm happened, the whole subnet was down, as you said.

If I understand correctly, there is no way to prevent the broadcast storm on the VLAN before it happens?


06-21-2018 05:47 AM - edited 06-21-2018 07:19 AM

Some routers/switches have storm control.

The UCSM, which is designed to behave like an end host with maximum throughput and cut-through switching, is not designed to do filtering or packet analysis.

These kinds of issues are usually due to improper network design/config.

If the broadcast storms are emanating from a guest VM, you could have a loop configured if it has multiple NICs, or an issue with the guest VM OS/app that is simply spitting out broadcast traffic (not necessarily related to a loop).
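For readers unfamiliar with storm control on upstream switches, here is a deliberately simplified model of the idea: count broadcast frames per fixed interval and drop everything above a threshold until the next interval starts. The class name, the one-second interval, and the frame-count threshold are assumptions for illustration, not how any particular switch implements it.

```python
class StormControl:
    """Toy per-interval broadcast limiter (illustrative only)."""

    def __init__(self, max_frames_per_interval, interval_s=1.0):
        self.max_frames = max_frames_per_interval
        self.interval_s = interval_s
        self.window_start = None  # start time of the current interval
        self.count = 0            # broadcast frames seen in this interval

    def allow(self, timestamp):
        """Return True if a broadcast frame arriving at `timestamp` (seconds) is forwarded."""
        if self.window_start is None or timestamp - self.window_start >= self.interval_s:
            # A new measurement interval begins: reset the counter.
            self.window_start = timestamp
            self.count = 0
        self.count += 1
        return self.count <= self.max_frames


limiter = StormControl(max_frames_per_interval=3)
arrivals = [0.0, 0.1, 0.2, 0.3, 0.4, 1.0, 1.1]
print([limiter.allow(t) for t in arrivals])
# [True, True, True, False, False, True, True]
```

The point of the model is that the limiter caps the damage per port rather than finding the cause; the misbehaving source still has to be tracked down from a capture.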

Thanks,

Kirk...


06-23-2018 11:44 PM

Hi Kirk, thanks again

I hope I understood correctly...

So is there something I can configure on the SP's NIC that can minimize the impact?


06-24-2018 03:28 AM - edited 06-24-2018 05:50 AM

There's nothing you can directly change in the vNIC settings that will prevent you from creating loops/bridging at the guest VM level.

One best practice is to limit the VLANs allowed on the vNICs to only those that are absolutely required. This cuts down on the amount of traffic, especially broadcast traffic, that is received at the ESXi host VMNIC level.
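A quick way to check this is to compare what each vNIC allows against what the host actually needs. The sketch below assumes both lists have already been exported by hand or by some other tool; the vNIC names and VLAN IDs are hypothetical, and nothing here talks to UCSM.

```python
def excess_vlans(allowed_by_vnic, required_by_vnic):
    """Map each vNIC name to the sorted VLAN IDs it allows but does not need."""
    report = {}
    for vnic, allowed in allowed_by_vnic.items():
        extra = set(allowed) - set(required_by_vnic.get(vnic, ()))
        if extra:
            report[vnic] = sorted(extra)
    return report


# Hypothetical inventory: eth0 carries two VLANs that no port group uses.
allowed = {"eth0": {10, 20, 30, 40}, "eth1": {10, 20}}
required = {"eth0": {10, 20}, "eth1": {10, 20}}
print(excess_vlans(allowed, required))  # {'eth0': [30, 40]}
```

Any VLAN that shows up in the report is a broadcast domain the host receives traffic from without needing it.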

I see a lot of customers create service profiles early in the design phase of their UCSMs and allow virtually all the VLANs because they haven't sorted out exactly which VLANs are going to be used on which hosts. Misconfiguration of the vSwitch teaming hashing algorithm, incorrect disjoint Layer 2 config, and upstream switching problems can all contribute to loss of traffic leaving the FIs, and cause a given guest VM to ARP and re-ARP while trying to resolve basic MAC/IP mapping.

Again, you need to fire up Wireshark and find out exactly what the nature of the traffic is: the exact source MAC address, which VLAN, etc.

There is a good forum post on how to do the VMware-based captures at https://supportforums.cisco.com/t5/collaboration-voice-and-video/packet-capture-on-vmware-esxi-using-the-pktcap-uw-tool/ta-p/3165399

Thanks,

Kirk...


06-25-2018 02:38 AM

Thanks, Kirk!

We always allow only the needed VLANs on the NIC.

That is why only one subnet was affected.

Thanks for your time


12-06-2019 04:59 PM

Hi Robab,

I agree that this is not necessarily a loop. We just encountered an ARP storm from multiple VMs that hit our upstream gateway router at such a high rate that it started dropping ARP. This affected all VLANs, since the policer is not VLAN-specific. It also affected dynamic routing peering when the ARP table needed to be updated for the peer. We are not using FIs. We set storm-control to its lowest limit, which is still higher than the upstream Nexus 7K switch's ARP policer. We are now going to put in a custom policer that limits VMware MAC addresses at a lower threshold than the rest of the Nexus environment.
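To make the custom-policer idea concrete, here is a minimal sketch of a two-class ARP policer: frames whose source MAC starts with a VMware prefix share a stricter token bucket than everything else. This is only a model of the logic, not Nexus configuration. The OUI list is an assumption and should be checked against the IEEE registry, and the class names, rates, and burst size are invented for the example.

```python
# Assumed VMware OUI prefixes; verify against the IEEE OUI registry before relying on them.
VMWARE_OUIS = {"00:50:56", "00:0c:29", "00:05:69"}


def is_vmware_mac(mac):
    """Return True if the MAC's first three octets match an assumed VMware OUI."""
    return mac.lower().replace("-", ":")[:8] in VMWARE_OUIS


class TokenBucket:
    """Classic token bucket: refills at `rate_pps`, holds at most `burst` tokens."""

    def __init__(self, rate_pps, burst):
        self.rate = rate_pps
        self.burst = burst
        self.tokens = float(burst)
        self.last = None  # time of the previous packet

    def allow(self, now):
        if self.last is not None:
            refill = (now - self.last) * self.rate
            self.tokens = min(self.burst, self.tokens + refill)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class ArpPolicer:
    """One shared bucket for VMware-sourced ARP, another for all other sources."""

    def __init__(self, vmware_pps, default_pps, burst):
        self.buckets = {
            True: TokenBucket(vmware_pps, burst),
            False: TokenBucket(default_pps, burst),
        }

    def allow(self, src_mac, now):
        return self.buckets[is_vmware_mac(src_mac)].allow(now)
```

For example, `ArpPolicer(vmware_pps=1, default_pps=100, burst=2)` would let a physical host recover its full burst within half a second while a VM-sourced flood stays throttled to roughly one ARP per second.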

I would like to ask whether you had implemented NSX-T before seeing the storm. We had, and the hosts we have seen do this were on NSX-T port groups. Apparently, the hosts were not getting the ARP reply from the gateway, possibly because of a bug in NSX-T, and the host just kept ARPing for it. Why it needs to ARP at such an aggressive rate is beyond me. I'm not sure when NSX-T became available; it may be later than the time of your issue.
