- VMWare NPIV seems to not work with Cisco UCS Infrastructure !

10-12-2015 04:33 AM - edited 03-01-2019 12:24 PM

Hi everybody,

My system has a configuration like below:

- Blade Server: UCS B200 M2 (Model: N20-B6625-1) with mezzanine card M81KR

- Host OS: VMWare ESXi 5.0.0 - 623680

- Storage Connection: Blade Server --> Chassis --> Extender (FEX) --> Fabric Interconnect (UCS 6248UP) --> SAN Switch (Cisco MDS 9100) --> SAN Storage (EMC VNX5300)

Now we intend to attach an RDM hard disk to a virtual machine (VM) on the host blade via the NPIV feature. Up to now, we have:

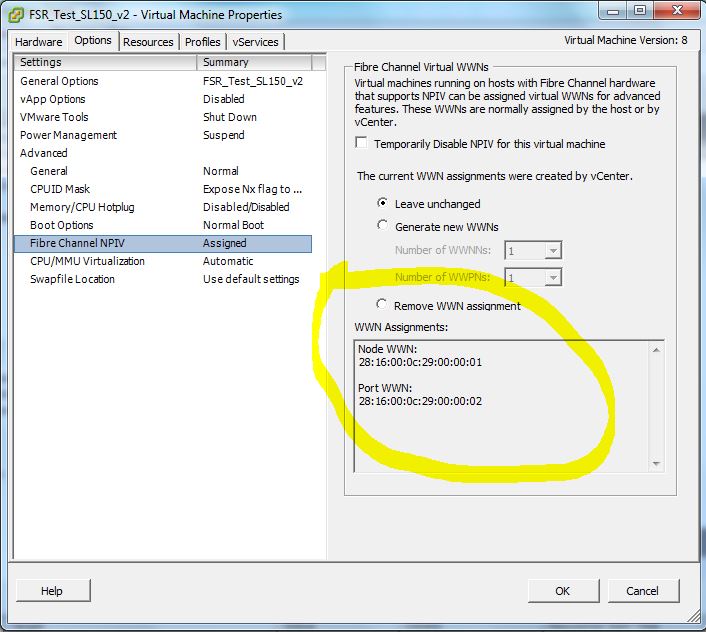

- Configured the NPIV setting of the VM and the VM displayed the generated WWPN and WWNN (NPIV status is Assigned)

- On the SAN Switch (Cisco MDS 9100), we have checked that feature NPIV is enabled (by command #show npiv status)

- LUN is created on EMC Storage

However, during the process of implementation, there are some issues as below:

1. When we reboot the VM and check /var/log/vmkernel.log, there is no entry about any NPIV event (we searched the log for the word "npiv").

2. After logging in to the ESXi host over SSH and looking at /proc/scsi, there is only one folder, named mptsas (/proc/scsi/mptsas). There is no folder that represents an FC HBA driver (Emulex: lpfc, QLogic: qla).

3. According to the URL:

Deployment Procedure --> 1. Enable NPIV on the Cisco MDS 9000 Family switch: We checked the licenses on Cisco UCS Manager and found that only one package, ETH_PORT_ACTIVATION_PKG, is activated. The output is as below:

UCS A/ license # show usage detail

License instance: ETH_PORT_ACTIVATION_PKG

Scope: A

Default: 12

Total Quant: 12

Used Quant: 12

State: License Ok

Peer Count Comparison: Matching

Grace Used: 0

License instance: ETH_PORT_ACTIVATION_PKG

Scope: B

Default: 12

Total Quant: 12

Used Quant: 12

State: License Ok

Peer Count Comparison: Matching

Grace Used: 0

Meanwhile, the document suggests that the Fabric Interconnect should show FC_FEATURES_PKG as licensed (Yes) --> Are we missing an FC license that NPIV needs in order to work correctly?

4. On the SAN switch, the command #show fcns database lists the WWPNs of all the hosts' vHBA ports, the NPV WWN of the Fabric Interconnect, and the WWNs of the EMC Storage Processor (SP) ports, but not the NPIV WWPN of the VM (which we generated earlier).

5. Although the SAN switch does not display the NPIV WWPN of the VM, we still tried to zone this NPIV WWPN manually into the same zone as the physical vHBA of the parent ESXi host and the storage SP port, as described in the following post:

https://blogs.vmware.com/vsphere/2011/11/npiv-n-port-id-virtualization.html

“FC Switch Configuration

On the FC switch, you will now have to create zones from the VM’s NPIV WWPN to the Storage Array ports WWPN. However, the VM’s NPIV WWPNs are currently not active, so they do not appear in the nameserver on the FC switch. Therefore they will need to be added manually. Once they are manually added to the FC switch, the WWPNs can then be placed in zones with the WWPN of the Storage Array ports.”

However, on the EMC storage management console, the expected new initiator (the NPIV WWPN of the VM) does not appear, so we cannot assign the RDM LUN to the VM via the NPIV feature.
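For anyone repeating the check in steps 4 and 5, here is a minimal Python sketch that tests whether a given WWPN appears anywhere in text captured from the switch (for example, the saved output of show fcns database). It only looks for colon-separated 8-byte WWNs and does not assume any particular column layout. The function names and the sample capture are hypothetical; only the WWN values come from this thread.

```python
import re

# Matches a colon-separated 8-byte WWN such as 20:a1:00:25:b5:00:00:5e.
WWN_RE = re.compile(r"\b(?:[0-9a-fA-F]{2}:){7}[0-9a-fA-F]{2}\b")


def wwns_in_text(text):
    """Return the set of WWNs found in the text, normalized to lowercase."""
    return {match.lower() for match in WWN_RE.findall(text)}


def missing_wwpns(expected, captured_output):
    """Return the expected WWPNs that do not appear in the captured output."""
    seen = wwns_in_text(captured_output)
    return sorted(w.lower() for w in expected if w.lower() not in seen)


if __name__ == "__main__":
    # Hypothetical capture: the layout is illustrative, not real switch output.
    captured = """
    20:a1:00:25:b5:00:00:5e
    20:b1:00:25:b5:00:00:5e
    """
    expected = ["20:A1:00:25:B5:00:00:5E", "28:16:00:0C:29:00:00:02"]
    print(missing_wwpns(expected, captured))
```

If the VM's NPIV WWPN is reported as missing, the port never logged in to the fabric, so zoning it manually will not make it appear on the storage array.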

In conclusion, we are wondering:

- Does Cisco UCS support the VMware NPIV feature?

- If our infrastructure does support the NPIV feature, is there any misconfiguration in our steps?

I would highly appreciate any suggestions for this case. Thank you so much!


Accepted Solutions

10-13-2015 04:24 AM

Hi Mike !

I got confirmation that NPIV on a VM (RDM) for VMware is indeed NOT supported and is NOT working. The corresponding fnic driver does NOT have this functionality and is blocking the flogi. As I mentioned, this feature is available under Hyper-V!

Walter.

10-12-2015 11:20 AM

Do you see any strange entries in the MDS log file (show logging log)?

A long time ago I saw situations where certain pwwn prefixes were rejected by the MDS. I don't know about 28:16........

Walter.

10-12-2015 09:31 PM

Hi Walter,

I'm sending you the MDS log file as an attachment.

We have investigated the MDS log, but there is no log entry related to any WWPN event, although we have rebooted the VM (with NPIV assigned) several times between 9 October and now.

2015 Oct 9 10:13:14 VPCP-PRI-SANA %AUTHPRIV-3-SYSTEM_MSG: pam_aaa:Authentication failed for user admin from 10.9.249.97 - sshd[24873]

2015 Oct 9 10:13:14 VPCP-PRI-SANA %DAEMON-3-SYSTEM_MSG: error: PAM: Authentication failure for admin from 10.9.249.97 - sshd[24870]

2015 Oct 9 10:13:19 VPCP-PRI-SANA %AUTHPRIV-3-SYSTEM_MSG: pam_aaa:Authentication failed for user admin from 10.9.249.97 - sshd[24870]

2015 Oct 9 10:43:02 VPCP-PRI-SANA %AUTHPRIV-3-SYSTEM_MSG: pam_aaa:Authentication failed for user admin from 10.9.249.90 - sshd[25178]

2015 Oct 9 11:31:55 VPCP-PRI-SANA %PORT-5-IF_UP: %$VSAN 66%$ Interface fc1/7 is up in mode F

2015 Oct 12 08:26:27 VPCP-PRI-SANA %AUTHPRIV-3-SYSTEM_MSG: pam_aaa:Authentication failed for user admin from 10.9.249.22 - sshd[24649]

2015 Oct 12 08:26:27 VPCP-PRI-SANA %DAEMON-3-SYSTEM_MSG: error: PAM: Authentication failure for admin from 10.9.249.22 - sshd[24645]

2015 Oct 13 04:26:07 VPCP-PRI-SANA %AUTHPRIV-3-SYSTEM_MSG: pam_aaa:Authentication failed for user admin from 10.9.249.98 - sshd[1298]

2015 Oct 13 04:26:07 VPCP-PRI-SANA %DAEMON-3-SYSTEM_MSG: error: PAM: Authentication failure for admin from 10.9.249.98 - sshd[1297]

VPCP-PRI-SANA# exit
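Most of the log above is SSH authentication noise, which makes it easy to overlook port events. As a rough sketch, the Python snippet below groups a saved log by the facility in the %FACILITY-SEVERITY-MNEMONIC tag and drops the facilities you are not interested in. It assumes the log has been saved to text with each message on a single line; the function names and the default list of ignored facilities are my own choices for illustration.

```python
import re
from collections import Counter

# Cisco-style syslog tag, e.g. %PORT-5-IF_UP:
TAG_RE = re.compile(r"%([A-Z0-9_]+)-(\d)-([A-Z0-9_]+):")


def facility_counts(lines):
    """Count log messages per facility (AUTHPRIV, DAEMON, PORT, ...)."""
    counts = Counter()
    for line in lines:
        match = TAG_RE.search(line)
        if match:
            counts[match.group(1)] += 1
    return counts


def without_facilities(lines, ignored=("AUTHPRIV", "DAEMON")):
    """Keep only tagged messages whose facility is not in the ignored list."""
    kept = []
    for line in lines:
        match = TAG_RE.search(line)
        if match and match.group(1) not in ignored:
            kept.append(line)
    return kept


if __name__ == "__main__":
    # Two messages copied from the log in this post.
    sample = [
        "2015 Oct 9 10:13:14 VPCP-PRI-SANA %AUTHPRIV-3-SYSTEM_MSG: "
        "pam_aaa:Authentication failed for user admin from 10.9.249.97 - sshd[24873]",
        "2015 Oct 9 11:31:55 VPCP-PRI-SANA %PORT-5-IF_UP: "
        "%$VSAN 66%$ Interface fc1/7 is up in mode F",
    ]
    print(dict(facility_counts(sample)))
    for line in without_facilities(sample):
        print(line)
```

With the authentication failures filtered out, the only remaining message in this excerpt is the fc1/7 interface coming up on 9 October, which matches the observation that nothing related to the VM's WWPN was logged.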

10-13-2015 12:06 AM

This is a nested NPV configuration, and as far as I remember, this is not supported.

http://www.ccierants.com/2013/06/ccie-dc-nested-npv.html

Nevertheless, I would try to change the initiator pwwn; this is what I was referring to:

http://www.cisco.com/c/en/us/products/collateral/storage-networking/mds-9500-series-multilayer-directors/white_paper_c11_586100.html

10-13-2015 12:43 AM

Hi Walter,

Do you mean that we have to change the WWN format of the NPIV port, or that of the host's physical vHBAs?

To make things clearer, here are some details of our configuration:

1. WWNs of the 2 physical vHBAs on the ESXi host (these WWNs are generated automatically from the pool)

- vHBA0:

- WWPN: 20:A1:00:25:B5:00:00:5E

- WWNN: 20:00:00:25:B5:00:00:5E

- vHBA1:

- WWPN: 20:B1:00:25:B5:00:00:5E

- WWNN: 20:00:00:25:B5:00:00:5E

2. WWN of NPIV on Virtual Machine:

- WWNN: 28:16:00:0C:29:00:00:01

- WWPN: 28:16:00:0C:29:00:00:02

Do you mean this WWN needs to be changed to the 20:00:00:25..... format?

3. We are also sending you the output of the show fcns database command on the MDS SAN switch, as below:

The MDS SAN switch does recognize the WWN of the Fabric Interconnect and the WWNs of the physical vHBAs of every ESXi host, but not the NPIV WWN.

10-13-2015 01:40 AM

Yes, I mean the VM's pwwn! Just use a prefix of 20:......

First you should see a flogi entry, and then you can do the zoning.

10-13-2015 02:19 AM

Thanks for your help, Walter.

Unfortunately, the NPIV WWN of the VM cannot be customized, because vSphere generates it automatically based on the VMware WWN OUI.

You can check the vendor OUI of a WWN via the following URL:

http://wintelguy.com/index.pl

The result shows that the NPIV WWN of the VM, 28:16:00:0C:29:00:00:01, belongs to VMware, and unfortunately we cannot change it to the aa:bb:00:25:B5:cc:dd:ee format that belongs to Cisco.
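The same OUI check can be done offline. Below is a small Python sketch that pulls the NAA type and the 24-bit OUI out of a colon-separated WWN. It handles only the NAA 1, 2 and 5 layouts, and the vendor table contains just the two OUIs discussed in this thread, so treat it as an illustration rather than a full lookup tool. The names wwn_oui and KNOWN_OUIS are hypothetical.

```python
# Vendor names for the two OUIs mentioned in this thread (not a full registry).
KNOWN_OUIS = {"00:0c:29": "VMware", "00:25:b5": "Cisco"}


def wwn_oui(wwn):
    """Return (naa, oui) for a colon-separated 8-byte WWN.

    Only the NAA 1, 2 and 5 layouts are handled in this sketch.
    """
    digits = wwn.replace(":", "").lower()
    if len(digits) != 16 or any(c not in "0123456789abcdef" for c in digits):
        raise ValueError(f"not an 8-byte WWN: {wwn!r}")
    naa = int(digits[0], 16)
    if naa in (1, 2):
        # NAA 1/2: 4-bit NAA, 12 further bits, then the 24-bit OUI.
        oui_hex = digits[4:10]
    elif naa == 5:
        # NAA 5: 4-bit NAA immediately followed by the 24-bit OUI.
        oui_hex = digits[1:7]
    else:
        raise ValueError(f"NAA type {naa} is not handled by this sketch")
    oui = ":".join(oui_hex[i:i + 2] for i in range(0, 6, 2))
    return naa, oui


if __name__ == "__main__":
    for wwn in ("28:16:00:0C:29:00:00:01", "20:A1:00:25:B5:00:00:5E"):
        naa, oui = wwn_oui(wwn)
        print(wwn, "NAA", naa, "OUI", oui, KNOWN_OUIS.get(oui, "unknown"))
```

Both WWNs are NAA type 2, so the OUI sits in bytes 3 to 5: 00:0c:29 for the VM's NPIV WWN and 00:25:b5 for the UCS vHBA. The leading 28:16 or 20:A1 is not part of the OUI.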

Anyway, our final goal is to create an NPIV port on the VM so that the Windows Server 2008 guest OS recognizes the new device, but nothing works --> Maybe I have to ask the VMware community for more ideas.

I'm exhausted from being stuck on this case!

If you have any further clues, don't hesitate to reply in this thread. I really appreciate your useful information :)

10-13-2015 02:54 AM

In the end, I would open a TAC case and hopefully get confirmation that it is supported and works. I know with 100% certainty that it works for Hyper-V, where this feature was used for cluster setup at the VM level.

Microsoft Clustering on VMware vSphere: Guidelines for supported configurations (1037959)

10-13-2015 03:20 AM

Dear Walter,

Thank you very much for your help.

So will you now open a TAC case with VMware to get confirmation of whether the NPIV feature provided by VMware ESXi is compatible with the Cisco UCS infrastructure?

If you do, please send me the URL so that we can follow along in that thread!

Sincerely!

10-13-2015 07:25 AM

Dear Walter,

Sorry to hear that, but I still really appreciate your help and confirmation.

Your statement saves me a lot of time, so I can think about another solution for my problem.

Best regards!

Mikel :)

10-12-2015 12:27 PM

Did you activate the zoneset after you added the new zones?

The license should not be a problem, as 2nd Gen FIs use Unified Ports that can be configured as Eth or FC, and FC does not require an additional license.

If you reboot one of the servers that face this issue, press F6 and use the commands here (lunlist and lunmap) : https://angryjesters.wordpress.com/2012/01/05/cisco-vic-boot-from-san-troubleshooting/

Paste the output here and we can try to see what you get from there...

-Kenny

10-12-2015 09:48 PM

Hi Kenny,

- We can confirm that we activated the zoneset after adding the new zones.

- Up to now, we have not rebooted the ESXi host server; we have only restarted the VM (with NPIV assigned) several times to generate event logs on the ESXi host or the MDS switch. When we SSH to the Fabric Interconnect and run lunmap and lunlist, the output is empty, as below:

VPCP-PRI-UCS-A# connect adapter 2/5/1

adapter 2/5/1 # connect

adapter 2/5/1 (top):1# attach-fls

adapter 2/5/1 (fls):1# vnic

---- ---- ---- ------- -------

vnic ecpu type state lif

---- ---- ---- ------- -------

19 1 fc active 16

20 2 fc active 17

adapter 2/5/1 (fls):2# login 19

adapter 2/5/1 (fls):3# lunmap 19

adapter 2/5/1 (fls):4# lunlist 19

adapter 2/5/1 (fls):5# login 20

adapter 2/5/1 (fls):6# lunmap 20

adapter 2/5/1 (fls):7# lunlist 20

adapter 2/5/1 (fls):8#

.............

We are sending you the complete output log.

I would remind you that our final goal is to deploy the NPIV feature on the VM to access an RDM LUN, but so far NPIV does not seem to work properly. The physical vHBAs of the B200 M2 blade are still working fine with the storage (the ESXi host still has datastores mounted from the EMC storage).
