How to troubleshoot voice quality issues in a UCM environment (bad sound, no audio)


05-29-2012 08:23 AM - edited 03-12-2019 09:47 AM

- Introduction

- Really understanding the problem

- Collecting additional details

- Narrowing down to a specific situation

- Troubleshooting Tools

Introduction

In this guide I will describe a structure to follow when troubleshooting voice issues such as choppy or clipping sound, hissing noise, dead air or silence, one-way audio, and others, rather than merely list technical recommendations that may fix them. The goal is to give you one more tool: when you have a TAC case open, it helps you provide us with more information, and even before opening a case it lets you quickly narrow down the problem, and maybe find a fast solution as well.

Really understanding the problem

The first thing we need to do is turn the problem description into a concrete, short statement. Just stating that "the sound is bad" or that "there are voice quality issues" won't help you or your TAC engineer conclude anything. Those statements do reflect what end users experience, and it is fine for them to express it that way; the person working on the problem is the one in charge of getting a clear understanding of the situation. Before even moving on to collecting details, let's ask the following questions:

How was the sound?

What did you hear?

Can you tell if the other party heard you?

Once we answer those, we will know what we are after later when checking configurations and logs. We will end up with a statement such as:

"There was a hissing noise"

"The audio was choppy, couldn't hear all the words, but we could both hear each other"

"She was able to hear me because she told me through chat later on, but I could not hear her at all"

"We were having normal conversation, and after she put me on hold, the music sounded all garbled and noisy"

Collecting additional details

Now it is time to fully understand the topology in which the problem is happening. Audio streams are just like any other data streams in the sense that they flow through cables or wireless networks from one device to another. That said, once the problem statement is ready, the first thing to do is to narrow down the possible causes to as few components as possible. This can only be done with a clear outline of the topology. Answer the following sample questions:

Does it happen with regular calls from one IP Phone to another IP Phone only?

Does it happen with calls from one IP Phone to an outside number only? (outside meaning a device on the public network)

Does it happen with both of the above?

Does it happen when connecting to an internal voice service like a contact center or voicemail system?

Is it occurring within the same Local Area Network?

Is it occurring between an IP Phone in one site, and an IP Phone in another site linked up by a MAN (Metropolitan Area Network) or a WAN (Wide Area Network)?

Are there security devices in between, like firewalls and the like?

Does it happen with all phone models?

At this point I would like to clarify what I mean when I say that voice or video streams "flow through a cable or through wireless networks from one device to another". There are 2 perspectives to take into account. Voice packets flow between phones, switches, routers, transcoders and other devices as bits, so every router, cable, switch and telephony device is a suspect for dropping packets or causing delays; that is the transport perspective. From the signaling perspective, you must always understand which devices are also voice devices, for example transcoders, Media Termination Points, Trusted Relay Points, RSVP agents, audio proxies and so forth. Why do I make this differentiation? Take a look at the following diagram:

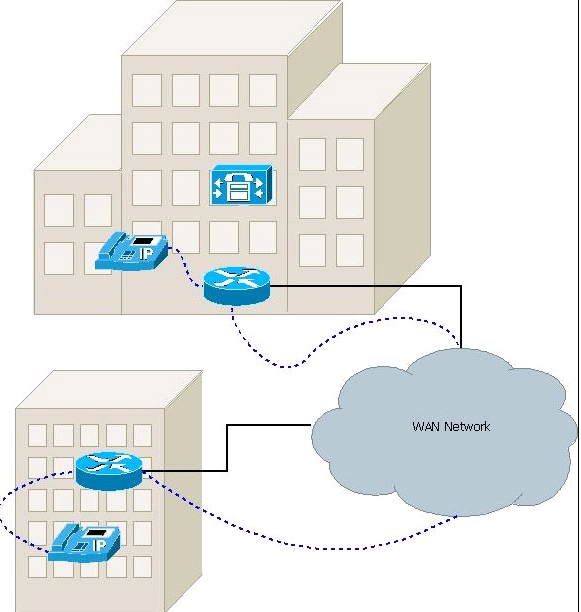

Following the blue dotted lines you can see how the RTP stream carrying the actual audio packets flows from one device to the other. It helps us determine which layer 1 through layer 3 paths are taken to deliver the information, and helps us focus on which devices to troubleshoot. However, let's imagine the topology above is not telling us the entire story. It turns out that the IP Phone in the remote site was configured with a setting called "Use Trusted Relay Point", which basically allocates an MTP resource to handle the audio. Our topology now transforms into this:

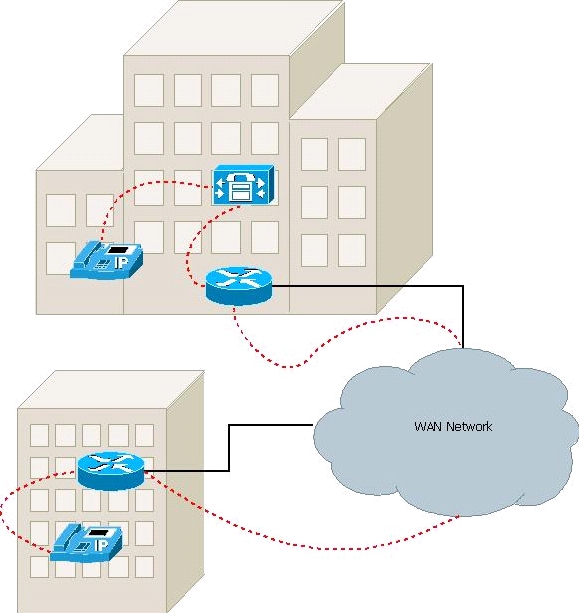

See how we now have an extra line from the HQ router to the UCM server? That is because the configuration of the phone in the remote site asks for an MTP to be allocated, placing an extra device in the audio path. From the transport perspective alone we wouldn't have guessed that the CallManager server was in the middle, but analyzing from the signaling perspective revealed it. Now imagine that the region associated with the remote phone is set to use G.711 toward the region associated with the MTP built into CallManager, and that the extra bandwidth this consumes across the WAN is causing the audio to be horribly choppy. Setting the regions to use G.729 could be the fix in this scenario.
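To put rough numbers on the G.711 versus G.729 difference, here is a small per-call bandwidth estimate. It is a sketch under assumptions that are not part of the original scenario: 20 ms packetization, uncompressed IPv4/UDP/RTP headers (40 bytes, no cRTP) and, optionally, 18 bytes of Ethernet header plus FCS per packet. The function name `call_bandwidth_bps` is hypothetical. Each call leg crossing the WAN carries one such stream in each direction.

```python
# Rough one-way bandwidth for a single RTP voice stream.
# Assumptions: 20 ms packetization, IPv4, no header compression (cRTP),
# and an optional 18 bytes of Ethernet header + FCS with no VLAN tag.

IP_UDP_RTP_HEADER_BYTES = 20 + 8 + 12   # IPv4 + UDP + RTP headers
ETHERNET_OVERHEAD_BYTES = 14 + 4        # Ethernet header + frame check sequence

# Codec payload bit rates in bits per second.
CODEC_BITRATE_BPS = {"G.711": 64_000, "G.729": 8_000}


def call_bandwidth_bps(codec: str, packet_ms: int = 20, layer2: bool = False) -> float:
    """Return the one-way bandwidth in bits per second for one stream."""
    packets_per_second = 1000 / packet_ms
    payload_bytes = CODEC_BITRATE_BPS[codec] * packet_ms / 1000 / 8
    packet_bytes = payload_bytes + IP_UDP_RTP_HEADER_BYTES
    if layer2:
        packet_bytes += ETHERNET_OVERHEAD_BYTES
    return packet_bytes * 8 * packets_per_second


if __name__ == "__main__":
    for codec in CODEC_BITRATE_BPS:
        ip_kbps = call_bandwidth_bps(codec) / 1000
        eth_kbps = call_bandwidth_bps(codec, layer2=True) / 1000
        print(f"{codec}: {ip_kbps:.1f} kbps at IP, {eth_kbps:.1f} kbps on Ethernet")
```

Under these assumptions a G.711 stream needs about 80 kbps at the IP layer against about 24 kbps for G.729, which is why moving the WAN leg to G.729 can relieve a congested link.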

So, with the last set of questions we have hopefully gathered an understanding of the transport topology. Here are some questions that will clarify the signaling topology:

Does it involve only IP Phones, or a mix of phones with CTI devices, a voicemail system, etc.?

What codec is used on the affected calls/devices?

Is there CAC (Call Admission Control) in place?

What type of CAC?

How many call legs are there?

What signaling protocols are involved on those call legs?

Narrowing down to a specific situation

Now we should have enough details to narrow it down a bit further. Once we find the affected call flows, we can start leaving some telephony devices or some transport devices out of scope. For example, say we answered the questions and found that clipping audio happens not only across the WAN, but also within the headquarters LAN. Oddly enough, it happens only with certain phone models not present in the branch office, and it does not necessarily happen on calls to the public telephone network. With that, we can leave certain devices out of the picture: the WAN routers and the VPN tunnel between them, the PSTN gateway, and the phones that have had no complaints. We can now focus on the phone model that persistently shows degraded sound quality. So what can we suspect from now on?

The hardware, the firmware running on it, and the underlying network. Typically a LAN is not subject to bottlenecks, delays and other network performance problems, but in some cases, hard as it is to believe, a local area network may be performing poorly and have a great impact on audio quality.
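The elimination in this example is essentially a set intersection: a component can only stay on the suspect list if it appears in every call flow that shows the symptom. Below is a minimal sketch of that reasoning. The flow and component names are hypothetical labels for the example, and the sketch assumes a single cause shared by all affected flows; it does not remove components just because they also appear in healthy flows, since LAN problems can be intermittent.

```python
# Hypothetical component names modelling the example above.
AFFECTED_FLOWS: dict[str, set[str]] = {
    "HQ model X phone <-> HQ phone of another model": {
        "model X phone", "HQ LAN", "other HQ phone",
    },
    "HQ model X phone <-> branch phone": {
        "model X phone", "HQ LAN", "HQ WAN router", "VPN tunnel",
        "branch WAN router", "branch LAN", "branch phone",
    },
}


def common_components(flows: dict[str, set[str]]) -> set[str]:
    """Components present in every affected flow: the remaining suspects."""
    component_sets = list(flows.values())
    if not component_sets:
        return set()
    return set.intersection(*component_sets)


def cleared_components(flows: dict[str, set[str]]) -> set[str]:
    """Components missing from at least one affected flow."""
    all_components = set().union(*flows.values())
    return all_components - common_components(flows)


if __name__ == "__main__":
    print("suspects:", sorted(common_components(AFFECTED_FLOWS)))
    print("left out:", sorted(cleared_components(AFFECTED_FLOWS)))
```

The suspects that remain are the model X phones and the HQ LAN, which matches the hardware, firmware and underlying network named above.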

Let's continue with our example. Say we have narrowed the problem down to either the network or the phones. Because we have already identified a suspicious phone model, the easiest step at this point is to check whether there is a newer firmware version. We could still think that the hardware is damaged, but would all the phones of that model be damaged? Unlikely, so we'll go with the firmware option. In our scenario, we were able to determine that the phones were affected by a bug: CSCtz54879. Upgrading to a fixed release solves the problem in this very particular scenario.

My goal here is to provide a structure, a methodology for thinking about the problem at hand and narrowing it down quickly, so that you can then use more advanced tools.

Troubleshooting Tools

Cisco equipment comes with an awesome set of troubleshooting tools. Let's discuss some of them.

The IP Phones

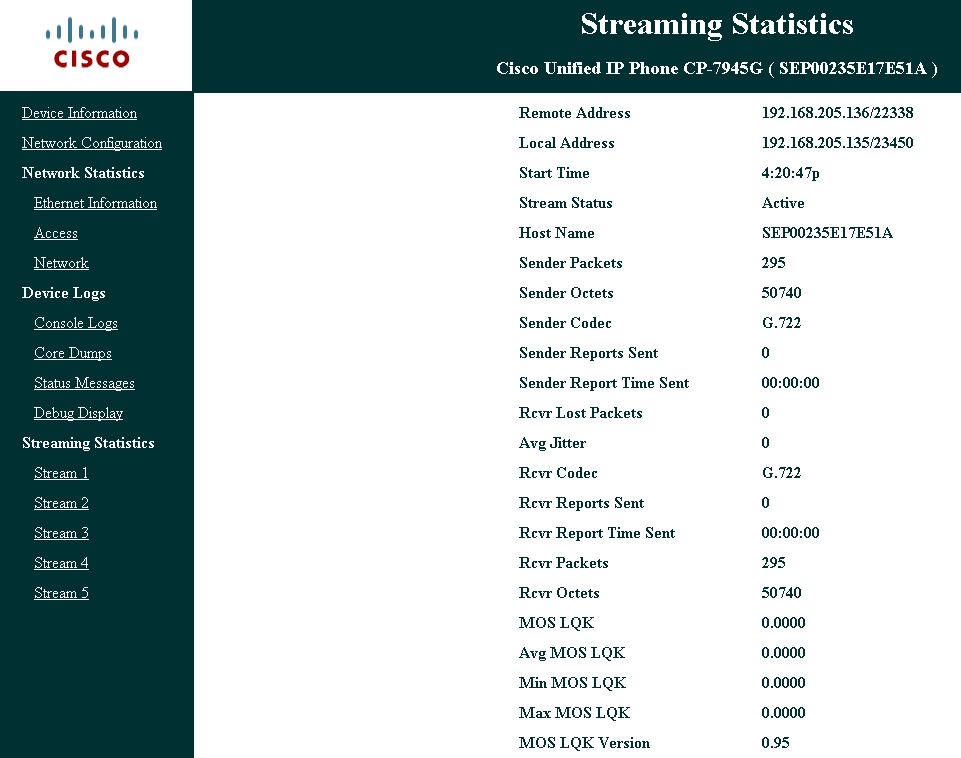

Many phone models come with an internal web server that will allow you to extract lots of information that can be used to troubleshoot audio quality problems. The picture below is the Stream 1 link on the left:

This page shows information about the call currently in progress on the phone, or about the last call if it has already ended. It is very useful for determining which IP address the phone was exchanging audio with, the negotiated codec, and the "Rcvr Packets" and "Sender Packets" counters. These last two deserve special mention because they show whether the phone is even getting audio (RTP) packets. How would this be useful in a one-way audio situation? Imagine you open the Stream 1 page on both phones involved, and you see the Sender Packets counter increase on phone A, but the Rcvr Packets counter does not increase on phone B. Is this a phone malfunction? Most likely not: packets are being dropped somewhere in between. There are other useful links on the phone's web pages, but they are not specific to audio issues.
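The counter comparison described above can be written down as a small decision helper. This is a sketch under stated assumptions: you copy the Sender Packets and Rcvr Packets values by hand from the Stream 1 page of each phone, twice, a few seconds apart. The class and function names, the 5% tolerance, and the verdict strings are hypothetical. Also check the remote address shown on each Stream 1 page first: if an MTP or other media resource sits in the path, phone A's far end is that resource, not phone B.

```python
from dataclasses import dataclass


@dataclass
class StreamCounters:
    """Counters copied by hand from a phone's Stream 1 page at one moment."""
    sender_packets: int
    rcvr_packets: int


def check_direction(tx_before: StreamCounters, tx_after: StreamCounters,
                    rx_before: StreamCounters, rx_after: StreamCounters,
                    tolerance: float = 0.05) -> str:
    """Judge one audio direction from two snapshots taken on each phone.

    Snapshots on the two phones cannot be perfectly simultaneous, so a
    small mismatch (tolerance, as a fraction of packets sent) is ignored.
    """
    sent = tx_after.sender_packets - tx_before.sender_packets
    received = rx_after.rcvr_packets - rx_before.rcvr_packets
    if sent <= 0:
        return "not_sending"    # the transmitting phone is not producing RTP
    if received <= 0:
        return "lost_in_path"   # packets leave one phone but never arrive
    if received < sent * (1 - tolerance):
        return "partial_loss"   # some packets are dropped along the way
    return "ok"


if __name__ == "__main__":
    # Phone A -> phone B, snapshots about 10 seconds apart (made-up values).
    a1, a2 = StreamCounters(1200, 1190), StreamCounters(1700, 1690)
    b1, b2 = StreamCounters(1195, 0), StreamCounters(1695, 0)
    print(check_direction(a1, a2, b1, b2))  # lost_in_path
```

Run it once per direction (A's Sender against B's Rcvr, then B's Sender against A's Rcvr) to see which side of a one-way audio call is losing packets.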

Packet captures

A packet sniffer is simply the most powerful tool a network engineer can have. And it's freeware. Remember that when troubleshooting audio issues we are after one specific portion of a possibly complex topology. Let's look at an example:

Outside (PSTN) callers stop hearing the audio during an otherwise normal 3-way conference: the audio quality progressively begins to degrade at around 15 seconds, but the internal users can hear them just fine from start to end. To make it even harder, let's imagine that the users report this happens when a user from a remote branch is involved. Let's work with the information provided and jump right to the diagnostic questions:

How was the sound? Good at the beginning; after about 15 s the audio degrades until it is dead air

What was heard? Garbled sound

Can you tell if the other party heard you? Internal users have no issues; the external participant can't hear the others

Does it happen with regular calls from one IP Phone to another IP Phone only? No

Does it happen with calls from one IP Phone to an outside number only? No

Does it happen when connecting to an internal voice service like a contact center or voicemail system? Yes, the conference bridge

Is it occurring within the same Local Area Network? No

Is it occurring between an IP Phone in one site, and an IP Phone in another site linked up by a MAN (Metropolitan Area Network) or a WAN (Wide Area Network)? One user in the conference resides on a remote site

Are there security devices in between, like firewalls and the like? Yes

Does it happen with all phone models? Yes

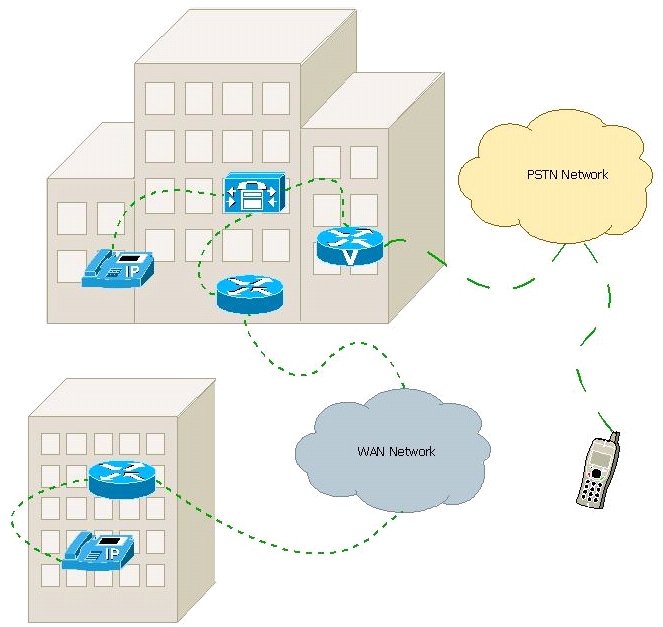

We can conclude that the topology looks something like this:

Let me say it right away: narrowing down this case is a piece of cake. Even though the user-provided details make the topology seem cumbersome to analyze, thanks to packet captures it turns out to be as simple as generating a packet capture file on the CallManager server, which in this particular scenario acts as the conference bridge for the call. See how all the audio segments end up connecting to the CallManager server above? That makes it extremely easy to determine which device is the culprit. Below you will find the steps to analyze the captures.

- What are you looking for? You have the packet captures now, but what are you after? Basically, we want to determine who is responsible for degrading the sound quality: whether one or more of the audio streams arrived at CallManager already degraded, so that the bridge sends polluted sound out to the other participants; whether all 3 streams arrive fine but the CallManager mixer is responsible; or whether the RTP packets enter and exit the server just fine, and some other device in between drops the ball.

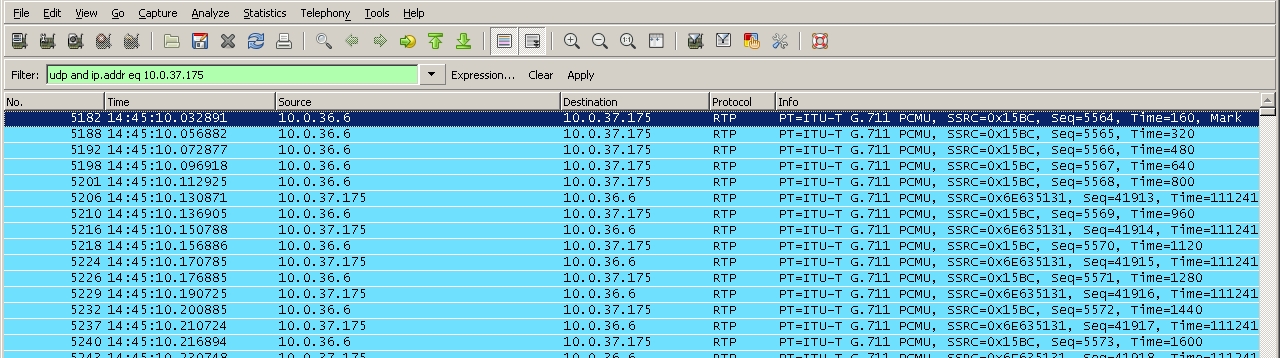

- Use Wireshark's filters. Explaining how to use this program is out of scope. The filter I would suggest is something like the following, with 10.0.X.X being one of the 3 endpoints involved in this call. Can you name them?

- rtp and ip.addr == 10.0.X.X

The 3 endpoints are the PSTN gateway and the 2 IP Phones, and the filter above leaves only RTP packets to and from that endpoint so that you can listen to the stream. Remember that the 2 WAN gateways are not layer 3 destinations, so there is no point filtering by their IP addresses. They are potential culprits, but only if we determine that a bad stream is coming in from the branch site.

- Analyze each stream. Wireshark can rebuild the RTP packets into an audio file so you can listen to the actual audio. There are guides out there on how to decode G.711 and even G.729 audio. This is how you can determine which stream was affected, and in which direction.

- Take a decision. By now, you should be able to determine whether the telephony devices, including the conference bridge, are responsible or not. In our particular case, all 3 streams were perfectly clean! What would you conclude from that? Decide for yourself based on this audio file rebuilt from the packet capture: just extract the content and play it with Banshee or Windows Media Player.

The stream belongs to the leg between the CallManager bridge and the PSTN gateway. Note how, at around second 40, the gentleman says he started experiencing degraded audio, and also mentions a timestamp of 12 seconds. By then it has been about 15 seconds since he was invited to the conference, but already 40 in absolute conference time. That is why the users reported that it starts after 15 seconds. Also note that the other 2 ladies continue speaking normally, and the audio sent out from CallManager is pretty good.

- Conclusion: the audio is impeccable on its way out to the gateway, so another device must be lowering the audio quality. This was a real case: the workaround was to adjust the jitter buffer of the PSTN gateway, and the permanent fix was an IOS upgrade.
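Listening to each stream is the most direct check, but Wireshark's RTP stream analysis also reports packet loss and jitter per stream, and jitter is worth a look here because the workaround in this case involved the gateway's jitter buffer. The sketch below computes both figures using the RFC 3550 definitions, then applies the localization reasoning from the steps above. Assumptions: packets are already extracted from the capture in arrival order as (sequence number, RTP timestamp, arrival time), the RTP clock is 8 kHz (as for G.711 and G.729), reordering across a sequence number wraparound is ignored, and the per-leg clean/degraded verdicts are yours to decide. `RtpPacket`, `stream_stats` and `locate_fault` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class RtpPacket:
    seq: int          # 16-bit RTP sequence number
    timestamp: int    # RTP timestamp, in sampling-clock units
    arrival_s: float  # capture timestamp in seconds


def stream_stats(packets: list[RtpPacket], clock_rate: int = 8000) -> tuple[int, float]:
    """Return (lost_packets, interarrival_jitter_ms) for one RTP stream."""
    if not packets:
        return 0, 0.0
    base = packets[0].seq
    highest = base
    cycles = 0
    prev_seq = base
    prev_transit = None
    jitter = 0.0
    for pkt in packets:
        if prev_seq - pkt.seq > 0x8000:  # sequence number wrapped 65535 -> 0
            cycles += 0x10000
        prev_seq = pkt.seq
        highest = max(highest, cycles + pkt.seq)
        # Relative transit time in timestamp units; the clock offset cancels out.
        transit = pkt.arrival_s * clock_rate - pkt.timestamp
        if prev_transit is not None:
            # RFC 3550 running estimate: J += (|D| - J) / 16
            jitter += (abs(transit - prev_transit) - jitter) / 16
        prev_transit = transit
    lost = (highest - base + 1) - len(packets)
    return lost, jitter / clock_rate * 1000


def locate_fault(inbound_clean: dict[str, bool], outbound_clean: dict[str, bool]) -> str:
    """Per-leg verdicts as seen at the bridge -> where to look next."""
    bad_in = [leg for leg, ok in inbound_clean.items() if not ok]
    if bad_in:
        return "degraded before reaching the bridge: " + ", ".join(bad_in)
    bad_out = [leg for leg, ok in outbound_clean.items() if not ok]
    if bad_out:
        return "degraded by the bridge on: " + ", ".join(bad_out)
    return "clean at the bridge both ways: suspect devices beyond it"


if __name__ == "__main__":
    # A synthetic 20 ms G.711 stream with packets 10 and 20 missing.
    pkts = [RtpPacket(i, 160 * i, 0.02 * i) for i in range(50) if i not in (10, 20)]
    print(stream_stats(pkts))
    legs = {"PSTN gateway": True, "HQ phone": True, "branch phone": True}
    print(locate_fault(legs, legs))
```

In the article's case every leg is clean in both directions at the bridge, so the last branch applies and attention moves to the PSTN gateway.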

Troubleshooting checklist

You may want to provide the following details to TAC when opening a Service Request on these matters:

- Is it a sound quality problem or a dead air problem?

- How can the sound be described?

- Are both parties experiencing dead air or just one of them? (dead air vs one-way audio)

- If one-way audio, please specify which user can hear and which one cannot

- Does it happen with regular calls from one IP Phone to another IP Phone only?

- Does it happen with calls from one IP Phone to an outside number only?

- Does it happen when connecting to an internal voice service like a contact center or voicemail system?

- Is it occurring within the same Local Area Network?

- Is it occurring between an IP Phone in one site, and an IP Phone in another site linked up by a MAN (Metropolitan Area Network) or a WAN (Wide Area Network)?

- Are there security devices in between, like firewalls and the like?

- Does it happen with all phone models?
