Basic NIC Bonding in RHEL

01-24-2016 03:55 PM - edited 03-01-2019 06:04 AM

To configure bonding you will need to stop and start network services, so you will need access to the console. While the normal KVM will work just fine, it is much easier if you have configured Serial over LAN (SoL), because you can then use the clipboard to copy and paste as well as view the kernel boot messages. (I will be adding a HowTo on setting up SoL soon.)

This document is based on the Cisco C-Series and contains examples from the C240 M4 with a 1225 MLOM and a 3160 with dual IOC. Many of the commands shown are the RHEL 6 forms rather than their systemd equivalents.

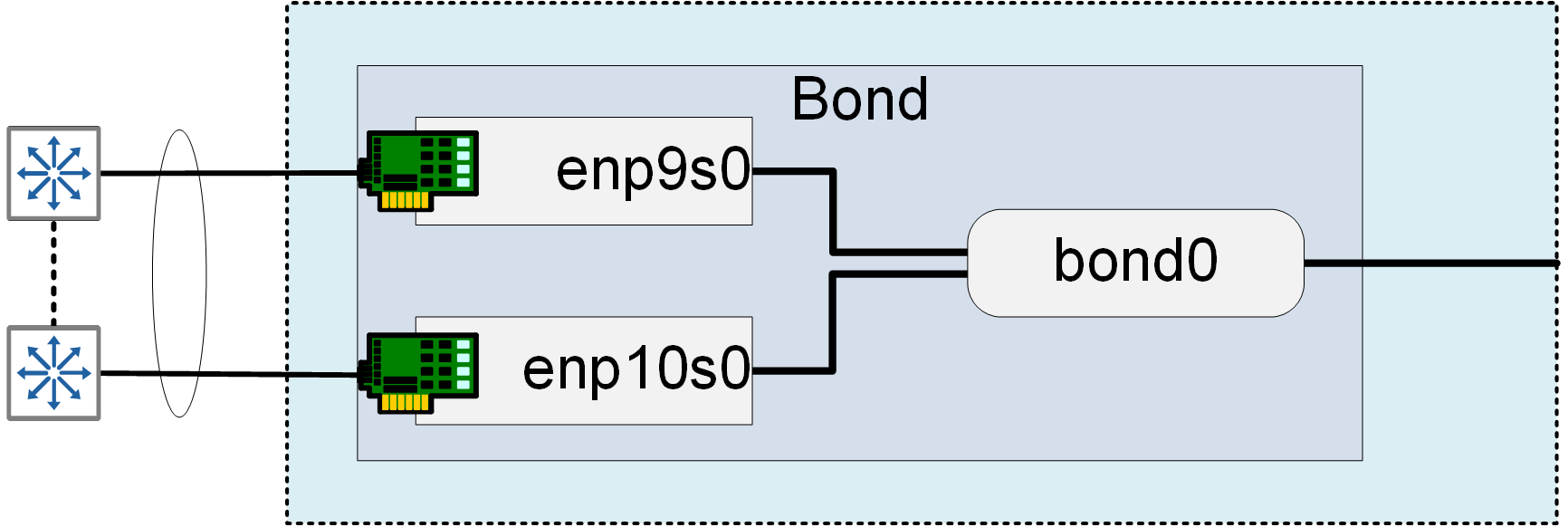

Basic bonding diagram:

- The following document describes how to configure the vNICs: Using vnics with the Cisco C Series Server in Stand Alone Mode

Step #1: Stop networking and disable NetworkManager

- You don't want to use NetworkManager here: it breaks many upstream tools and is only really useful for a desktop system. Turn it off and disable it. The "NM_CONTROLLED=no" directive disables it on a per-interface basis, but it is a good idea to disable it globally as well.

RHEL 6 - non-systemd:

# chkconfig NetworkManager off

# service NetworkManager stop

RHEL 7 - systemd:

# systemctl list-unit-files | grep Network

NetworkManager-dispatcher.service enabled

NetworkManager-wait-online.service enabled

NetworkManager.service enabled

# systemctl stop NetworkManager.service

# systemctl disable NetworkManager.service

# systemctl list-unit-files | grep Network

NetworkManager-dispatcher.service disabled

NetworkManager-wait-online.service disabled

NetworkManager.service disabled

# systemctl status NetworkManager.service

NetworkManager.service - Network Manager

Loaded: loaded (/usr/lib/systemd/system/NetworkManager.service; disabled; vendor preset: enabled)

Active: inactive (dead)

- Stop the networking service before you bring up the bond0 interface. If you don't, you will have issues getting the bond to come up cleanly:

# service network stop

or -

# systemctl stop network.service
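As a quick reference for this step, the sketch below prints (it does not run) the commands for a given RHEL major version so they can be reviewed before being pasted into a root shell. The function name `nm_disable_cmds` is a hypothetical helper, not part of RHEL, and the RHEL 7 list assumes you also want to stop the running NetworkManager service rather than only disable it.

```shell
# Print, without executing, the commands used in this step.
# nm_disable_cmds is a hypothetical helper name chosen for illustration.
nm_disable_cmds() {
    case "$1" in
        6)
            # RHEL 6: SysV init tools
            printf '%s\n' \
                'chkconfig NetworkManager off' \
                'service NetworkManager stop' \
                'service network stop'
            ;;
        7)
            # RHEL 7: systemd
            printf '%s\n' \
                'systemctl disable NetworkManager.service' \
                'systemctl stop NetworkManager.service' \
                'systemctl stop network.service'
            ;;
        *)
            echo "unsupported RHEL major version: $1" >&2
            return 1
            ;;
    esac
}
```

For example, `nm_disable_cmds 7` lists the three systemd commands, one per line.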

Step #2: Create a bond0 configuration file

Red Hat Linux stores network configuration in the /etc/sysconfig/network-scripts/ directory. First, you need to create the ifcfg-bond0 interface configuration file and populate it with the settings from the current primary interface. The name "bond0" is arbitrary; it is used here by convention and to stay consistent with the standard documentation. As long as you are consistent throughout, you can pick any name that makes sense. For example, you might name the bond after the VLAN configured on those ports.

Note: The Cisco VIC uses the enic driver and the interfaces will be named "enp##s0". Depending on the system they can start as low as enp6s0, or as high as enp14s0.

The easiest way to get the file configured is to copy the primary ifcfg interface file to the new bond0 file and edit the file down:

# cd /etc/sysconfig/network-scripts/

# cat ifcfg-enp9s0 > ifcfg-bond0

# vi /etc/sysconfig/network-scripts/ifcfg-bond0

Add the following lines to it:

DEVICE=bond0

NAME=bond0

TYPE=bond

BONDING_MASTER=yes

BONDING_OPTS="mode=802.3ad miimon=10 lacp_rate=1"

USERCTL=no

UCS related notes on bonding modes (see bottom of this document for summary of modes):

- UCS Managed servers and blades can only use modes 5, 6, 1, and 0 - "mode 4" is *not* supported when connected to a FI.

- Interfaces used with KVM bridges *require* mode 4.

- Which means, you cannot use bridged networking with KVM on UCS. You should stop now and just use Fabric Failover.

- Stand alone servers with VIC support all modes.

The merged file should look like this (PEERDNS=yes will populate /etc/resolv.conf so DNS resolution won't stop after a link failure). Your individual file may look slightly different depending on the system and use case.

DEVICE=bond0

NAME=bond0

TYPE=bond

BONDING_MASTER=yes

BONDING_OPTS="mode=802.3ad miimon=10 lacp_rate=1"

USERCTL=no

NM_CONTROLLED=no

BOOTPROTO=none

ONBOOT=yes

IPADDR=172.29.175.

NETMASK=255.255.255.192

GATEWAY=172.29.175.129

DEFROUTE=yes

PEERDNS=yes

DNS1=172.29.74.154

DNS2=172.29.74.155

IPV4_FAILURE_FATAL="no"

IPV6INIT="no"

* Replace the IP address and the other values above with your actual network configuration. Save the file and exit to the shell prompt.
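If you build several hosts, the bond file can also be generated rather than hand-edited. The following is a minimal sketch, assuming the same directives as the merged file above (without the DNS entries); `render_bond_cfg` is a hypothetical helper name and the addresses in the usage note are documentation placeholders, not values from this setup.

```shell
# render_bond_cfg NAME IPADDR NETMASK GATEWAY [BONDING_OPTS]
# Writes an ifcfg-style bond definition to stdout.
# Hypothetical helper; the default options mirror the example in this document.
render_bond_cfg() {
    local name="$1" ip="$2" mask="$3" gw="$4"
    local opts="${5:-mode=802.3ad miimon=10 lacp_rate=1}"
    cat <<EOF
DEVICE=$name
NAME=$name
TYPE=bond
BONDING_MASTER=yes
BONDING_OPTS="$opts"
USERCTL=no
NM_CONTROLLED=no
BOOTPROTO=none
ONBOOT=yes
IPADDR=$ip
NETMASK=$mask
GATEWAY=$gw
DEFROUTE=yes
IPV6INIT=no
EOF
}
```

Usage, redirecting into place once the output has been reviewed: `render_bond_cfg bond0 192.0.2.10 255.255.255.192 192.0.2.1 > /etc/sysconfig/network-scripts/ifcfg-bond0`.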

Step #3: Modify existing interface config files:

In later versions of the Linux kernel, MAC addresses are not required in the bond slave files, although most people still include them. Make backup copies of the originals before proceeding so the MAC addresses are preserved. Then edit the files to remove references to the underlying hardware. Since all bond members are virtually identical, edit the first one and copy it over the others, then change the "DEVICE=" parameter appropriately. This example is based on a system with the Cisco VIC, which uses the enic driver.

Older versions of RHEL may require the MAC to be included in the interface files; RHEL 7 does not.

# vi /etc/sysconfig/network-scripts/ifcfg-enp9s0

Modify or append the directives as follows:

DEVICE=enp9s0

NAME=bond0-slave

MASTER=bond0

TYPE=Ethernet

BOOTPROTO=none

ONBOOT=yes

NM_CONTROLLED=no

SLAVE=yes

Open the enp10s0 configuration file using the vi text editor:

# vi /etc/sysconfig/network-scripts/ifcfg-enp10s0

Make sure the file reads as follows for the enp10s0 interface:

DEVICE=enp10s0

NAME=bond0-slave

MASTER=bond0

TYPE=Ethernet

BOOTPROTO=none

ONBOOT=yes

NM_CONTROLLED=no

SLAVE=yes
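Because the slave files differ only in the "DEVICE=" line, they lend themselves to a loop. This is a sketch under the assumption that backups of the originals have already been made as described above; `render_slave_cfg` and `write_slave_cfgs` are hypothetical helper names.

```shell
# render_slave_cfg IFACE MASTER
# Prints a slave definition with the same directives as the examples above.
render_slave_cfg() {
    local iface="$1" master="$2"
    cat <<EOF
DEVICE=$iface
NAME=$master-slave
MASTER=$master
TYPE=Ethernet
BOOTPROTO=none
ONBOOT=yes
NM_CONTROLLED=no
SLAVE=yes
EOF
}

# write_slave_cfgs DIR MASTER IFACE...
# Writes DIR/ifcfg-IFACE for every interface listed, overwriting existing files.
write_slave_cfgs() {
    local dir="$1" master="$2" iface
    shift 2
    for iface in "$@"; do
        render_slave_cfg "$iface" "$master" > "$dir/ifcfg-$iface"
    done
}
```

Usage for the two interfaces in this example: `write_slave_cfgs /etc/sysconfig/network-scripts bond0 enp9s0 enp10s0`. Pointing the first argument at a scratch directory is a safe way to inspect the result first.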

Step #4: Restart network and verify configuration

- First, verify/load the bonding module:

[root@sj19-hh28-colusa ~]# lsmod | grep bond

bonding 129237 0

The module is loaded, which should be the case with any current Linux distribution. If it is not listed, load it with "modprobe bonding".

- Start networking service in order to bring up bond0 interface:

# service network start

Step #5: Troubleshooting and Verification

- View currently configured bonds in:

/proc/net/bonding/

- View the currently active mode for a bond

# cat /sys/class/net/bond0/bonding/mode

balance-rr 0

- Change the mode temporarily:

# ifdown bond0

# echo 1 > /sys/class/net/bond0/bonding/mode

# cat /sys/class/net/bond0/bonding/mode

active-backup 1

# ifup bond0
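The mode file holds a name and a number on one line, as the output above shows, so it is easy to read from a script. A minimal sketch follows; `read_bond_mode` is a hypothetical helper, and its optional second argument (the sysfs root) exists only so the function can be exercised against a scratch directory.

```shell
# read_bond_mode [BOND] [SYSFS_ROOT]
# Prints the active mode of a bond as "name=<name> number=<n>".
read_bond_mode() {
    local bond="${1:-bond0}" root="${2:-/sys/class/net}"
    local file="$root/$bond/bonding/mode" name num
    if [ ! -r "$file" ]; then
        echo "cannot read $file" >&2
        return 1
    fi
    # The file contains a single line such as "active-backup 1".
    read -r name num < "$file"
    echo "name=$name number=$num"
}
```

Running `read_bond_mode bond0` before and after the temporary change is a simple way to confirm that the new mode took effect.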

- You can view the configured bonds and interface parameters here:

/sys/class/net/

[root@sj19-hh28-colusa01 ~]# ls /sys/class/net/

bond0 bonding_masters enp15s0 enp16s0 enp6s0 enp7s0 lo

[root@sj19-hh28-colusa01 ~]# ls /sys/class/net/bond0

addr_assign_type broadcast duplex iflink operstate slave_enp15s0 speed type

address carrier flags link_mode phys_port_id slave_enp16s0 statistics uevent

addr_len dev_id ifalias mtu power slave_enp6s0 subsystem

bonding dormant ifindex netdev_group queues slave_enp7s0 tx_queue_len
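The same sysfs tree can be walked from a script. The sketch below reads the bonding_masters file shown in the listing above and, for each bond, the bonding/slaves file under it. `list_bonds` is a hypothetical helper name, and the optional argument is only there to allow testing against a scratch directory.

```shell
# list_bonds [SYSFS_ROOT]
# Prints one line per bond in the form "bond0: enp6s0 enp7s0".
list_bonds() {
    local root="${1:-/sys/class/net}" bond
    if [ ! -r "$root/bonding_masters" ]; then
        echo "no bonding_masters under $root (is the bonding module loaded?)" >&2
        return 1
    fi
    # bonding_masters holds the bond names separated by spaces.
    for bond in $(cat "$root/bonding_masters"); do
        echo "$bond: $(cat "$root/$bond/bonding/slaves")"
    done
}
```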

- Example of a working bond0 config on a Cisco 3160 with both IOC in a channel bond using mode 6

[root@sj19-hh28-colusa01 ~]# cat /proc/net/bonding/bond0

Ethernet Channel Bonding Driver: v3.7.1 (April 27, 2011)

Bonding Mode: adaptive load balancing

Primary Slave: None

Currently Active Slave: enp15s0

MII Status: up

MII Polling Interval (ms): 0

Up Delay (ms): 0

Down Delay (ms): 0

Slave Interface: enp15s0

MII Status: up

Speed: 10000 Mbps

Duplex: full

Link Failure Count: 0

Permanent HW addr: fc:5b:39:00:7d:9d

Slave queue ID: 0

Slave Interface: enp16s0

MII Status: up

Speed: 10000 Mbps

Duplex: full

Link Failure Count: 0

Permanent HW addr: fc:5b:39:00:7d:9e

Slave queue ID: 0

Slave Interface: enp7s0

MII Status: up

Speed: 10000 Mbps

Duplex: full

Link Failure Count: 0

Permanent HW addr: fc:5b:39:00:80:de

Slave queue ID: 0

Slave Interface: enp6s0

MII Status: up

Speed: 10000 Mbps

Duplex: full

Link Failure Count: 0

Permanent HW addr: fc:5b:39:00:80:dd

Slave queue ID: 0

- A misconfigured bonding configuration for bond1: the slave files were copied and the master was left as bond0 (C240 M4 using VIC 1225 MLOM):

[root@sj19-ii29-admin ~]# cat /proc/net/bonding/bond1

Ethernet Channel Bonding Driver: v3.7.1 (April 27, 2011)

Bonding Mode: IEEE 802.3ad Dynamic link aggregation

Transmit Hash Policy: layer2 (0)

MII Status: down

MII Polling Interval (ms): 0

Up Delay (ms): 0

Down Delay (ms): 0

802.3ad info

LACP rate: slow

Min links: 0

Aggregator selection policy (ad_select): stable

bond bond1 has no active aggregator

|_____________________|

|

|---- This usually means your slave interfaces are misconfigured somehow

Working now -

[root@sj19-ii29-admin ~]# cat /proc/net/bonding/bond1

Ethernet Channel Bonding Driver: v3.7.1 (April 27, 2011)

Bonding Mode: IEEE 802.3ad Dynamic link aggregation

Transmit Hash Policy: layer2 (0)

MII Status: up

MII Polling Interval (ms): 0

Up Delay (ms): 0

Down Delay (ms): 0

802.3ad info

LACP rate: slow

Min links: 0

Aggregator selection policy (ad_select): stable

Active Aggregator Info:

Aggregator ID: 1

Number of ports: 2

Actor Key: 33

Partner Key: 32781

Partner Mac Address: 00:23:04:ee:c0:94

Slave Interface: enp11s0

MII Status: up

Speed: 10000 Mbps

Duplex: full

Link Failure Count: 0

Permanent HW addr: 74:a0:2f:42:72:b2

Aggregator ID: 1

Slave queue ID: 0

Slave Interface: enp12s0

MII Status: up

Speed: 10000 Mbps

Duplex: full

Link Failure Count: 0

Permanent HW addr: 74:a0:2f:42:72:b1

Aggregator ID: 1

Slave queue ID: 0
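The two outputs above differ in only a few lines, so a small filter can flag the broken case. The following sketch summarizes a /proc/net/bonding file and returns a non-zero status when any link is not up or the bond reports no active aggregator. `bond_report` is a hypothetical helper, and it relies only on the line formats visible in the outputs shown here.

```shell
# bond_report [FILE]
# Summarizes a /proc/net/bonding/<bond> file; exit status 1 signals a problem.
bond_report() {
    awk '
        /^Bonding Mode:/ { sub(/^Bonding Mode: /, ""); print "mode: " $0 }
        /has no active aggregator/ { print "problem: no active aggregator"; bad = 1 }
        /^Slave Interface:/ { slave = $3; next }
        /^MII Status:/ {
            # Before the first "Slave Interface" line this is the bond itself.
            label = (slave == "") ? "bond" : "slave " slave
            print label ": " $3
            if ($3 != "up") bad = 1
        }
        END { exit bad }
    ' "${1:-/proc/net/bonding/bond0}"
}
```

For example, `bond_report /proc/net/bonding/bond1 || echo "check the slave files"` would have caught the misconfigured bond1 shown earlier.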

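- If things aren't working at all:

Verify that the bonding driver/module is loadable and configured (this may not be required on current releases).

Make sure the bonding module is loaded when the channel-bonding interface (bond0) is brought up. To do that, modify the kernel module configuration file. On RHEL 5 and earlier this is /etc/modprobe.conf; on RHEL 6 and later, use a file in the /etc/modprobe.d/ directory instead, for example /etc/modprobe.d/bonding.conf:

# vi /etc/modprobe.conf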

Append following two lines:

alias bond0 bonding

options bond0 mode=balance-alb miimon=100

Save the file and exit to the shell prompt. You can learn more about all bonding options in the kernel source documentation file (Documentation/networking/bonding.txt).

- Verify port channel configuration on switch

Reference Links:

Driver:

http://www.linuxfoundation.org/collaborate/workgroups/networking/bonding

HowTo:

http://www.cyberciti.biz/howto/question/static/linux-ethernet-bonding-driver-howto.php

Bonding Background:

Sometimes referred to as "port trunking", but be careful: switch-focused engineers will think you mean 802.1Q VLAN trunking if you call it that. What is bonding for, and why do it? Its purpose is to make n physical NICs (uplinks) function as one logical port. Why? To increase bandwidth when using 1Gb interfaces, to provide HA failover of uplinks, and to distribute network traffic across the uplinks.

In the bond interface config file the "mode=" directive can use either the number or the descriptive name.

Either of the following is valid:

BONDING_OPTS="mode=802.3ad miimon=10 lacp_rate=1"

BONDING_OPTS="mode=4 miimon=10 lacp_rate=1"
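Since both spellings are accepted, scripts that compare modes usually normalize them first. A minimal sketch of that mapping, using the seven modes listed in the table in this document (`bond_mode_number` is a hypothetical helper name):

```shell
# bond_mode_number MODE
# Accepts a mode name or number and prints the mode number.
bond_mode_number() {
    case "$1" in
        balance-rr|0)    echo 0 ;;
        active-backup|1) echo 1 ;;
        balance-xor|2)   echo 2 ;;
        broadcast|3)     echo 3 ;;
        802.3ad|4)       echo 4 ;;
        balance-tlb|5)   echo 5 ;;
        balance-alb|6)   echo 6 ;;
        *)
            echo "unknown bonding mode: $1" >&2
            return 1
            ;;
    esac
}
```

With this, `bond_mode_number 802.3ad` and `bond_mode_number 4` both print 4, so the two BONDING_OPTS lines above compare as equal.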

Types of bonds in Linux

| # | Mode | Description |
|---|---|---|
| 0 | balance-rr | Transmits packets through the network interface cards in sequential order. Packets are transmitted in a loop that begins with the first available network interface card in the bond and ends with the last available network interface card in the bond. All subsequent loops then start with the first available network interface card. Mode 0 offers fault tolerance and balances the load across all network interface cards in the bond. NOTE - Mode 0 cannot be used in conjunction with bridges, and is therefore not compatible with virtual machine logical networks. This mode provides load balancing and fault tolerance. |
| 1 | active-backup | Only one slave in the bond is active. A different slave becomes active if, and only if, the active slave fails. The bond's MAC address is externally visible on only one port (network adapter) to avoid confusing the switch. In bonding version 2.6.2 or later, when a failover occurs in active-backup mode, bonding will issue one or more gratuitous ARPs on the newly active slave. One gratuitous ARP is issued for the bonding master interface and each VLAN interface configured above it, provided that the interface has at least one IP address configured. Gratuitous ARPs issued for VLAN interfaces are tagged with the appropriate VLAN id. This mode provides fault tolerance. |
| 2 | balance-xor | Transmits based on the selected transmit hash policy. The default policy is a simple [(source MAC address XOR'd with destination MAC address) modulo slave count]. Alternate transmit policies may be selected via the xmit_hash_policy option. This mode provides load balancing and fault tolerance. |
| 3 | broadcast | Transmits all packets to all network interface cards. This mode provides fault tolerance. |
| 4 | 802.3ad | Creates aggregation groups that share the same speed and duplex settings. Utilizes all slaves in the active aggregator according to the 802.3ad specification. Slave selection for outgoing traffic is done according to the transmit hash policy, which may be changed from the default simple XOR policy via the xmit_hash_policy option, documented below. Note that not all transmit policies may be 802.3ad compliant, particularly in regards to the packet mis-ordering requirements of section 43.2.4 of the 802.3ad standard. Differing peer implementations will have varying tolerances for noncompliance. Prerequisites: (1) ethtool support in the base drivers for retrieving the speed and duplex of each slave; (2) a switch that supports IEEE 802.3ad dynamic link aggregation. This mode provides load balancing and fault tolerance. |
| 5 | balance-tlb | Adaptive transmit load balancing: channel bonding that does not require any special switch support. The outgoing traffic is distributed according to the current load (computed relative to the speed) on each slave. Incoming traffic is received by the current slave. If the receiving slave fails, another slave takes over the MAC address of the failed receiving slave. Prerequisite: ethtool support in the base drivers for retrieving the speed of each slave. NOTE - Mode 5 cannot be used in conjunction with bridges, therefore it is not compatible with virtual machine logical networks. This mode provides load balancing and fault tolerance. |
| 6 | balance-alb | Adaptive load balancing: includes balance-tlb plus receive load balancing (rlb) for IPv4 traffic, and does not require any special switch support. The receive load balancing is achieved by ARP negotiation. The bonding driver intercepts the ARP Replies sent by the local system on their way out and overwrites the source hardware address with the unique hardware address of one of the slaves in the bond, such that different peers use different hardware addresses for the server. Receive traffic from connections created by the server is also balanced. When the local system sends an ARP Request, the bonding driver copies and saves the peer's IP information from the ARP packet. When the ARP Reply arrives from the peer, its hardware address is retrieved and the bonding driver initiates an ARP reply to this peer, assigning it to one of the slaves in the bond. A problematic outcome of using ARP negotiation for balancing is that each time an ARP request is broadcast it uses the hardware address of the bond. Hence, peers learn the hardware address of the bond and the balancing of receive traffic collapses to the current slave. This is handled by sending updates (ARP Replies) to all the peers with their individually assigned hardware address, such that the traffic is redistributed. Receive traffic is also redistributed when a new slave is added to the bond and when an inactive slave is re-activated. The receive load is distributed sequentially (round robin) among the group of highest speed slaves in the bond. When a link is reconnected or a new slave joins the bond, the receive traffic is redistributed among all active slaves in the bond by initiating ARP Replies with the selected MAC address to each of the clients. The updelay parameter must be set to a value equal to or greater than the switch's forwarding delay so that the ARP Replies sent to the peers will not be blocked by the switch. Prerequisites: (1) ethtool support in the base drivers for retrieving the speed of each slave; (2) base driver support for setting the hardware address of a device while it is open. NOTE - Mode 6 cannot be used in conjunction with bridges, therefore it is not compatible with virtual machine logical networks. This mode provides load balancing and fault tolerance. |
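To make the default balance-xor policy concrete, here is a worked sketch of "(source MAC XOR destination MAC) modulo slave count". It is a simplification that uses only the last octet of each address; actual kernel versions may mix in additional fields, so treat the result as an illustration of the idea rather than a prediction for a given kernel. `l2_hash_slave` is a hypothetical helper name.

```shell
# l2_hash_slave SRC_MAC DST_MAC SLAVE_COUNT
# Prints the index of the slave a flow would map to under a simplified
# layer2 hash: (last octet of SRC XOR last octet of DST) mod SLAVE_COUNT.
l2_hash_slave() {
    local src_last="${1##*:}" dst_last="${2##*:}" count="$3"
    echo $(( (0x$src_last ^ 0x$dst_last) % $count ))
}
```

Using two addresses that appear in the outputs earlier in this document: `l2_hash_slave fc:5b:39:00:7d:9d 00:23:04:ee:c0:94 2` prints 1, because 0x9d XOR 0x94 is 9 and 9 modulo 2 is 1. Every frame between the same pair of MAC addresses lands on the same slave, which is why a single flow never exceeds one link's bandwidth.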

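| BONDING_OPTS Parameter | Description |
|---|---|
| miimon= | Specifies (in milliseconds) how often MII link monitoring occurs. This is useful if high availability is required because MII is used to verify that the NIC is active. To verify that the driver for a particular NIC supports the MII tool, type the following command as root: `ethtool <interface-name>`. In this command, replace `<interface-name>` with the name of the device interface (such as enp9s0), not the bond interface; if MII is supported, the output includes "Link detected: yes". If using a bonded interface for high availability, the module for each NIC must support MII. Setting the value to 0 (the default) turns this feature off. |
| lacp_rate= | Specifies the rate at which link partners should transmit LACPDU packets in 802.3ad mode. Possible values are: slow or 0 (the default), partners transmit LACPDUs every 30 seconds; fast or 1, partners transmit LACPDUs every 1 second. |
| xmit_hash_policy= | Selects the transmit hash policy used for slave selection in balance-xor and 802.3ad modes. Possible values include layer2 (the default), layer2+3, and layer3+4. |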
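When auditing existing hosts it can help to pull a single parameter out of a BONDING_OPTS string. A minimal sketch, assuming the space-separated key=value form used throughout this document (`bonding_opt` is a hypothetical helper name):

```shell
# bonding_opt "OPTS STRING" KEY
# Prints the value of KEY from a BONDING_OPTS string, or returns 1 if absent.
bonding_opt() {
    local opts="$1" key="$2" pair
    # Word splitting on $opts is intentional: options are space separated.
    for pair in $opts; do
        case "$pair" in
            "$key"=*)
                echo "${pair#*=}"
                return 0
                ;;
        esac
    done
    return 1
}
```

For example, `bonding_opt "mode=802.3ad miimon=10 lacp_rate=1" miimon` prints 10.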


Hello, I have a server with a bonding interface, bond1, which I want to attach to a Cisco switch. Can I use mode 1 with port-security activated on the switch, or should I disable port security? Bond1 uses the same MAC address on the master and on the slaves eth1 and eth2. The first port on the switch secures this MAC, so can the second port not have the same MAC secured too?
