How to Setup a TransitVNET in Azure with CSR 1000v and Azure Internal Load Balancer (ILB)


07-23-2019 02:47 PM - edited 07-23-2019 03:43 PM

This how-to is a step-by-step guide to setting up a so-called TransitVNET in Microsoft Azure with CSR 1000v and an Azure Internal Load Balancer (ILB).

Solution Description

The goal of this solution is to provide a scalable and highly available way of interconnecting VNETs in Azure with each other and with an on-premises network (e.g. via IPsec). This is achieved by placing an Internal Load Balancer between the application / spoke VNETs and the CSR 1000v virtual routers in the TransitVNET.

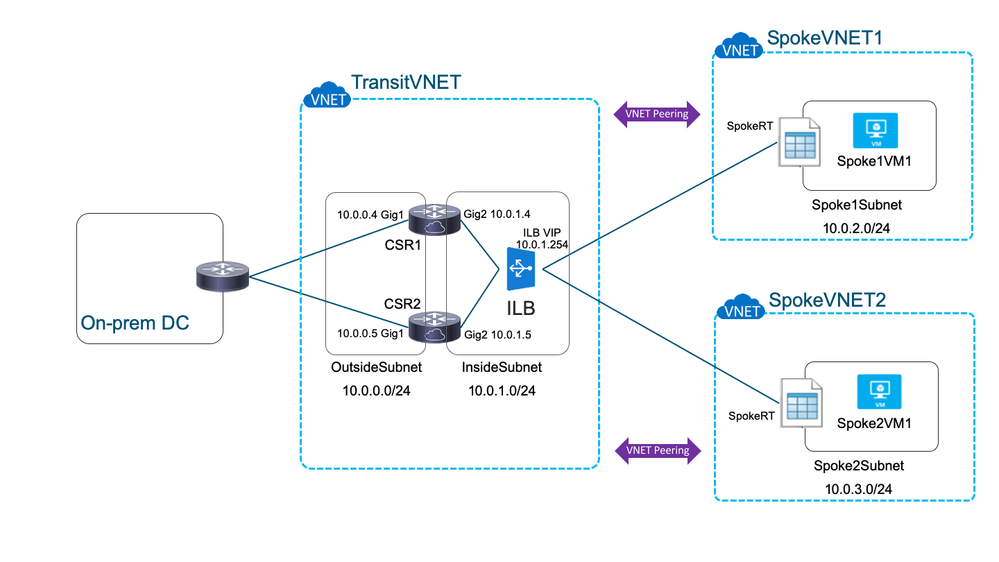

Please consider the following diagram for the specific setup used in this how-to:

- Two spoke VNETs (SpokeVNET1 & SpokeVNET2), each housing one VM.

- One TransitVNET housing two CSR 1000v routers (CSR1 & CSR2) and one Internal Load Balancer (ILB).

- One on-premises datacenter housing an arbitrary (Cisco) router (e.g. ASR 1000 or ISR 4000).

  - Note: Configuration and setup of the on-premises router and the IPsec connection are not covered in this how-to and are left as an exercise for the reader.

- VNET peering is configured between each spoke VNET and the TransitVNET. This means that there is direct IP connectivity between each spoke VNET and the TransitVNET and that no tunneling is needed between them. The address ranges of the peered VNETs must not overlap.

- A UDR (user-defined route table) is set up for each spoke VNET. The next hop of the route to the on-premises network (10.0.0.0/8 in this case) points to the VIP (virtual IP) of the ILB.

- The ILB is set up so that it forwards all protocols and ports arriving on the VIP to the CSRs using load balancing. This makes the solution scalable from one CSR to multiple CSRs.

- The ILB performs a health check of each CSR by periodically connecting to port 80/TCP. If a CSR is not reachable, it is taken out of service. This makes the solution highly available.

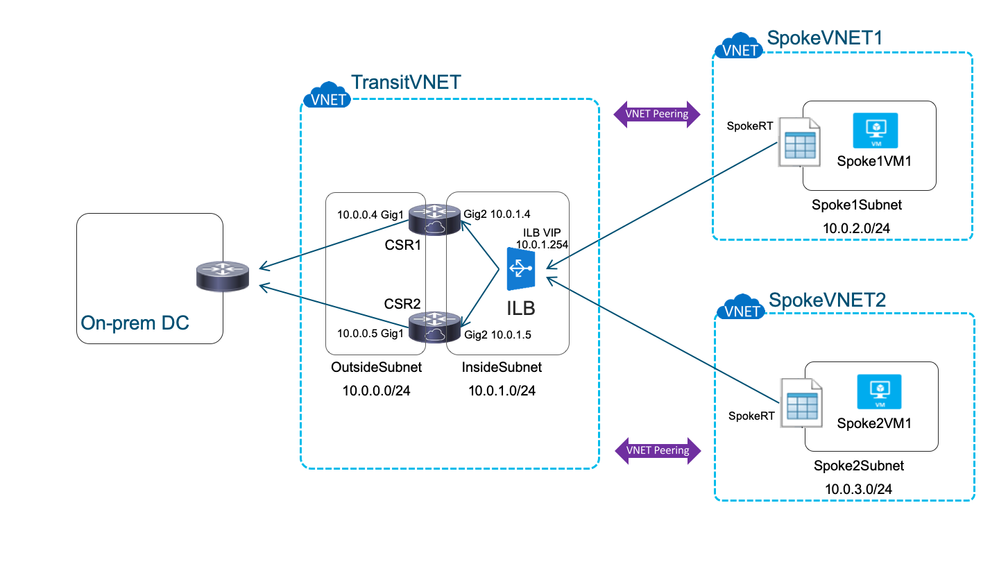

Traffic flow from spoke VNET to the on-premise DC is via the ILB:

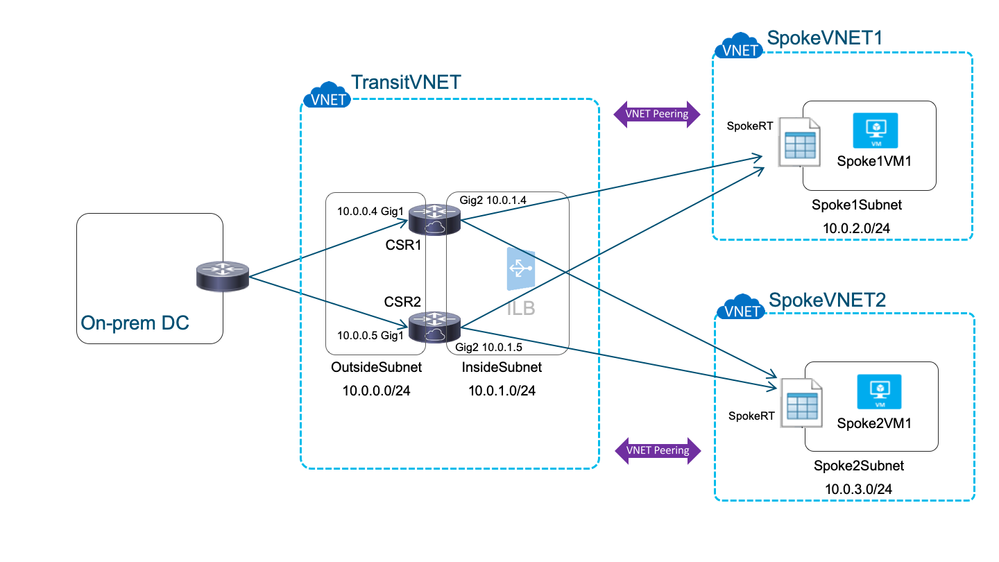

Traffic flow from the on-premise DC to the spoke VNETs is direct, leveraging the VNET peering and bypassing the ILB:

Prerequisites

- A valid Azure subscription account. If you don’t have one, you can create a free Azure account (https://azure.microsoft.com/en-us/free/) today.

- Bash shell. If you are using Windows 10, you can install Bash shell on Ubuntu on Windows (http://www.windowscentral.com/how-install-bash-shell-command-line-windows-10).

- Azure CLI 2.0. Follow these instructions to install it: https://docs.microsoft.com/en-us/cli/azure/install-azure-cli

- Basic knowledge of Azure networking.

- Basic knowledge of Cisco IOS or IOS-XE.

- An SSH private/public key pair set up in the default location.

Step-by-step instructions to set up TransitVNET, ILB & CSRs

Note: To help set this solution up as quickly as possible for testing or reference, a shell script is attached to this post. Please see the section "Automating the setup" below for details.

1. Create resource group, TransitVNET and two subnets

echo Creating resource group...

az group create --name TVNET --location EastUS

echo Creating VNET...

az network vnet create --name TransitVNET --resource-group TVNET --address-prefix 10.0.0.0/23

echo Creating outside subnet...

az network vnet subnet create --address-prefix 10.0.0.0/24 --name OutsideSubnet --resource-group TVNET --vnet-name TransitVNET

echo Creating inside subnet...

az network vnet subnet create --address-prefix 10.0.1.0/24 --name InsideSubnet --resource-group TVNET --vnet-name TransitVNET

2. Create the internal load balancer with HA port

echo Creating Azure Internal Load Balancer...

az network lb create --name TVNET_ILB --resource-group TVNET --sku Standard --private-ip-address 10.0.1.254 --vnet-name TransitVNET --subnet InsideSubnet --backend-pool-name ILBBackendpool

echo Creating ILB health probe to connect to port 80 with minimal possible interval...

az network lb probe create --resource-group TVNET --lb-name TVNET_ILB --name ILBHealthProbe --protocol tcp --port 80 --interval 5 --threshold 2

echo Creating ILB rule to forward all packets to the CSRs...

az network lb rule create --resource-group TVNET --lb-name TVNET_ILB --name ILBPortsRule --protocol All --frontend-port 0 --backend-port 0 --backend-pool-name ILBBackendpool --probe-name ILBHealthProbe

3. Create NSGs (Network Security Groups)

echo Creating network security group for outside subnet...

az network nsg create --resource-group TVNET --name OutsideSubnetNSG --location EastUS

echo Creating nsg rule Allow_IPSEC1 ...

az network nsg rule create --resource-group TVNET --nsg-name OutsideSubnetNSG --name Allow_IPSEC1 --access Allow --protocol udp --direction Inbound --priority 100 --source-address-prefix '*' --source-port-range '*' --destination-address-prefix '*' --destination-port-range 500

echo Creating nsg rule Allow_IPSEC2...

az network nsg rule create --resource-group TVNET --nsg-name OutsideSubnetNSG --name Allow_IPSEC2 --access Allow --protocol udp --direction Inbound --priority 110 --source-address-prefix '*' --source-port-range '*' --destination-address-prefix '*' --destination-port-range 4500

echo Creating nsg rule Allow_SSH...

az network nsg rule create --resource-group TVNET --nsg-name OutsideSubnetNSG --name Allow_SSH --access Allow --protocol tcp --direction Inbound --priority 120 --source-address-prefix Internet --source-port-range '*' --destination-address-prefix '*' --destination-port-range 22

echo Creating nsg rule Allow_All_Out...

az network nsg rule create --resource-group TVNET --nsg-name OutsideSubnetNSG --name Allow_All_Out --access Allow --protocol '*' --direction Outbound --priority 140 --source-address-prefix '*' --source-port-range '*' --destination-address-prefix '*' --destination-port-range '*'

echo Creating network security group for inside subnet...

az network nsg create --resource-group TVNET --name InsideSubnetNSG --location EastUS

echo "Creating nsg rule Allow_All_Out (2)..."

az network nsg rule create --resource-group TVNET --nsg-name InsideSubnetNSG --name Allow_All_Out --access Allow --protocol '*' --direction Outbound --priority 140 --source-address-prefix '*' --source-port-range '*' --destination-address-prefix '*' --destination-port-range '*'
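The two IPsec rules differ only in name, priority and port (UDP 500 for IKE, UDP 4500 for NAT traversal), so they can be generated in a loop. The following is a minimal sketch, not taken from the attached script: the function name `create_ipsec_rules` and the `AZ` override variable are assumptions made for illustration. Note that `*` is quoted so the shell does not expand it against file names in the current directory.

```shell
# AZ defaults to the real CLI; set AZ=echo to preview the commands instead of running them.
# (AZ and create_ipsec_rules are hypothetical names used only in this sketch.)
AZ=${AZ:-az}

# Create one inbound UDP allow rule per IPsec port (IKE on 500, NAT-T on 4500).
create_ipsec_rules() {
  priority=100
  n=1
  for port in 500 4500; do
    $AZ network nsg rule create --resource-group TVNET --nsg-name OutsideSubnetNSG \
      --name "Allow_IPSEC$n" --access Allow --protocol udp --direction Inbound \
      --priority "$priority" --source-address-prefix '*' --source-port-range '*' \
      --destination-address-prefix '*' --destination-port-range "$port"
    priority=$((priority + 10))
    n=$((n + 1))
  done
}
```

Calling `AZ=echo create_ipsec_rules` prints the two commands without touching the subscription; calling `create_ipsec_rules` alone runs them.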

4. Create file with initial IOS-XE config

cat >csr_customdata_temp.txt <<endofcustemdata
Section: IOS configuration
! Azure sources the ILB probes from 168.63.129.16
ip route 168.63.129.16 255.255.255.255 10.0.1.1
! Static routing towards the spoke VNETs pointing out the internal interface
!! Do not forget to create new static routes if you create new spoke VNETs !!
ip route 10.0.2.0 255.255.255.0 10.0.1.1
ip route 10.0.3.0 255.255.255.0 10.0.1.1
endofcustemdata
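Because every new spoke VNET needs its own static route on the CSRs, the `ip route` lines can be generated from a list of spoke prefixes. This is a minimal sketch under stated assumptions: `prefix_to_mask` and `spoke_routes` are hypothetical helper names that are not part of the attached script, and 10.0.1.1 is the inside-subnet gateway used in this how-to.

```shell
# Hypothetical helpers (not part of the attached script).

# Convert a prefix length (0-32) into a dotted-decimal netmask.
prefix_to_mask() {
  bits=$(( (0xffffffff << (32 - $1)) & 0xffffffff ))
  printf '%d.%d.%d.%d\n' \
    $(( (bits >> 24) & 255 )) $(( (bits >> 16) & 255 )) \
    $(( (bits >> 8) & 255 )) $(( bits & 255 ))
}

# Print one IOS static route per spoke prefix given in CIDR notation.
# $1 = next hop, remaining arguments = spoke prefixes.
spoke_routes() {
  next_hop=$1
  shift
  for cidr in "$@"; do
    printf 'ip route %s %s %s\n' \
      "${cidr%/*}" "$(prefix_to_mask "${cidr#*/}")" "$next_hop"
  done
}

# Reproduces the two spoke routes used in this how-to.
spoke_routes 10.0.1.1 10.0.2.0/24 10.0.3.0/24
```

The output can be pasted into the custom data file or into the running configuration of each CSR when a spoke is added later.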

5. Create the two CSRs

(including public IPs, NICs and availability set)

echo Creating public IP for CSR1...

az network public-ip create --name CSR1PublicIP --resource-group TVNET --idle-timeout 30 --allocation-method Static --sku standard

echo Creating public IP for CSR2...

az network public-ip create --name CSR2PublicIP --resource-group TVNET --idle-timeout 30 --allocation-method Static --sku standard

echo Creating outside interface for CSR1...

az network nic create --name CSR1OutsideInterface -g TVNET --subnet OutsideSubnet --vnet TransitVNET --public-ip-address CSR1PublicIP --private-ip-address 10.0.0.4 --ip-forwarding true --network-security-group OutsideSubnetNSG --accelerated-networking true

echo Creating inside interface for CSR1...

az network nic create --name CSR1InsideInterface -g TVNET --subnet InsideSubnet --vnet TransitVNET --ip-forwarding true --private-ip-address 10.0.1.4 --network-security-group InsideSubnetNSG --lb-name TVNET_ILB --lb-address-pools ILBBackendpool --accelerated-networking true

echo Creating outside interface for CSR2...

az network nic create --name CSR2OutsideInterface -g TVNET --subnet OutsideSubnet --vnet TransitVNET --public-ip-address CSR2PublicIP --private-ip-address 10.0.0.5 --ip-forwarding true --network-security-group OutsideSubnetNSG --accelerated-networking true

echo Creating inside interface for CSR2...

az network nic create --name CSR2InsideInterface -g TVNET --subnet InsideSubnet --vnet TransitVNET --ip-forwarding true --private-ip-address 10.0.1.5 --network-security-group InsideSubnetNSG --lb-name TVNET_ILB --lb-address-pools ILBBackendpool --accelerated-networking true

echo Creating availability set...

az vm availability-set create --resource-group TVNET --name CSRAvailabiliySet --platform-fault-domain-count 2 --platform-update-domain-count 2

echo Creating CSR1...

az vm create --resource-group TVNET --location EastUS --name CSR1 --size Standard_DS4_v2 --nics CSR1OutsideInterface CSR1InsideInterface --image cisco:cisco-csr-1000v:16_10-byol:16.10.120190108 --authentication-type ssh --generate-ssh-keys --availability-set CSRAvailabiliySet --custom-data csr_customdata_temp.txt

echo Creating CSR2...

az vm create --resource-group TVNET --location EastUS --name CSR2 --size Standard_DS4_v2 --nics CSR2OutsideInterface CSR2InsideInterface --image cisco:cisco-csr-1000v:16_10-byol:16.10.120190108 --authentication-type ssh --generate-ssh-keys --availability-set CSRAvailabiliySet --custom-data csr_customdata_temp.txt

Step-by-step instructions to set up the spoke VNETs and attach them to the ILB

1. Create two new spoke VNETs

(including subnets and Ubuntu VMs)

echo Creating VNET for Spoke1...

az network vnet create --name Spoke1VNET --resource-group TVNET --address-prefix 10.0.2.0/24 --output json

echo Creating subnet for Spoke1...

az network vnet subnet create --address-prefix 10.0.2.0/24 --name Spoke1Subnet --resource-group TVNET --vnet-name Spoke1VNET --output json

echo Creating VNET for Spoke2...

az network vnet create --name Spoke2VNET --resource-group TVNET --address-prefix 10.0.3.0/24

echo Creating subnet for Spoke2...

az network vnet subnet create --address-prefix 10.0.3.0/24 --name Spoke2Subnet --resource-group TVNET --vnet-name Spoke2VNET

echo Creating instance Spoke1...

az vm create -n Spoke1VM1 -g TVNET --image UbuntuLTS --vnet-name Spoke1VNET --size Standard_DS4_v2 --subnet Spoke1Subnet --accelerated-networking true

echo Creating instance Spoke2...

az vm create -n Spoke2VM1 -g TVNET --image UbuntuLTS --vnet-name Spoke2VNET --size Standard_DS4_v2 --subnet Spoke2Subnet --accelerated-networking true

2. Set up peering between each spoke VNET and the TransitVNET

echo Setting up peering between TransitVNET and Spoke1...

az network vnet peering create -g TVNET -n TransitVNETtoSpoke1 --vnet-name TransitVNET --remote-vnet Spoke1VNET --allow-vnet-access

echo Setting up peering between Spoke1 and TransitVNET...

az network vnet peering create -g TVNET -n TransitVNETtoSpoke1 --vnet-name Spoke1VNET --remote-vnet TransitVNET --allow-vnet-access --allow-forwarded-traffic

echo Setting up peering between TransitVNET and Spoke2...

az network vnet peering create -g TVNET -n TransitVNETtoSpoke2 --vnet-name TransitVNET --remote-vnet Spoke2VNET --allow-vnet-access

echo Setting up peering between Spoke2 and TransitVNET...

az network vnet peering create -g TVNET -n TransitVNETtoSpoke2 --vnet-name Spoke2VNET --remote-vnet TransitVNET --allow-vnet-access --allow-forwarded-traffic

3. Set up UDR in spoke VNETs

echo Creating route table for Spokes...

az network route-table create --name SpokeRT -g TVNET --location EastUS

echo Configuring route via the ILB...

az network route-table route create -g TVNET --route-table-name SpokeRT -n ViaILB --next-hop-type VirtualAppliance --address-prefix 10.0.0.0/8 --next-hop-ip-address 10.0.1.254

echo Attaching route table to Spoke1Subnet...

az network vnet subnet update --name Spoke1Subnet --resource-group TVNET --vnet-name Spoke1VNET --route-table SpokeRT

echo Attaching route table to Spoke2Subnet...

az network vnet subnet update --name Spoke2Subnet --resource-group TVNET --vnet-name Spoke2VNET --route-table SpokeRT

Note: In this how-to we only route the 10.0.0.0/8 private network via the ILB to retain the ability to directly connect to the VMs from the Internet via ssh and to have direct internet access for the VMs.
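The peering and route-table steps repeat unchanged for every additional spoke, so they can be wrapped in a function. The following is a sketch only: `attach_spoke`, the `AZ` override variable and the `<spoke>toTransitVNET` peering name are assumptions for illustration and do not appear in the attached script. It assumes the spoke VNET and subnet already exist and that the route table SpokeRT has been created as shown above.

```shell
# AZ defaults to the real CLI; set AZ=echo to preview the commands instead of running them.
# (AZ and attach_spoke are hypothetical names used only in this sketch.)
AZ=${AZ:-az}

# Peer a spoke VNET with the TransitVNET in both directions and attach the
# shared route table. $1 = spoke VNET name, $2 = spoke subnet name.
attach_spoke() {
  spoke_vnet=$1
  spoke_subnet=$2
  $AZ network vnet peering create -g TVNET -n "TransitVNETto$spoke_vnet" \
    --vnet-name TransitVNET --remote-vnet "$spoke_vnet" --allow-vnet-access
  $AZ network vnet peering create -g TVNET -n "${spoke_vnet}toTransitVNET" \
    --vnet-name "$spoke_vnet" --remote-vnet TransitVNET \
    --allow-vnet-access --allow-forwarded-traffic
  $AZ network vnet subnet update --name "$spoke_subnet" --resource-group TVNET \
    --vnet-name "$spoke_vnet" --route-table SpokeRT
}
```

For example, `attach_spoke Spoke3VNET Spoke3Subnet` would attach a hypothetical third spoke. The CSRs still need a matching static route for the new spoke prefix.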

4. Query all assigned IP addresses

(so we know where to connect to)

echo Getting IP addresses...

az vm list-ip-addresses -g TVNET --output table

VirtualMachine PrivateIPAddresses PublicIPAddresses

---------------- -------------------- -------------------

CSR1 10.0.1.4

CSR1 10.0.0.4 52.151.239.79

CSR2 10.0.1.5

CSR2 10.0.0.5 52.151.239.88

Spoke1VM1 10.0.2.4 40.114.8.96

Spoke2VM1 10.0.3.4 40.114.49.237
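To reuse these addresses in later commands, the table can be filtered by VM name. The sketch below is an illustration, not part of the attached script: `public_ip_of` is a hypothetical helper, and it assumes the three-column table layout shown above, where rows for NICs without a public address have only two columns.

```shell
# Print the public IP of a VM from `az vm list-ip-addresses --output table`
# text on stdin. Rows without a public address have two columns and are skipped.
# (public_ip_of is a hypothetical helper name.)
public_ip_of() {
  awk -v vm="$1" '$1 == vm && NF >= 3 { print $3 }'
}

# Sample input copied from the table in this how-to.
sample='VirtualMachine PrivateIPAddresses PublicIPAddresses
---------------- -------------------- -------------------
CSR1 10.0.1.4
CSR1 10.0.0.4 52.151.239.79
CSR2 10.0.1.5
CSR2 10.0.0.5 52.151.239.88'

printf '%s\n' "$sample" | public_ip_of CSR2
```

Against a live deployment the same function would be fed from the CLI, for example `az vm list-ip-addresses -g TVNET --output table | public_ip_of CSR1`.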

Verify

Perform the following steps to verify the setup

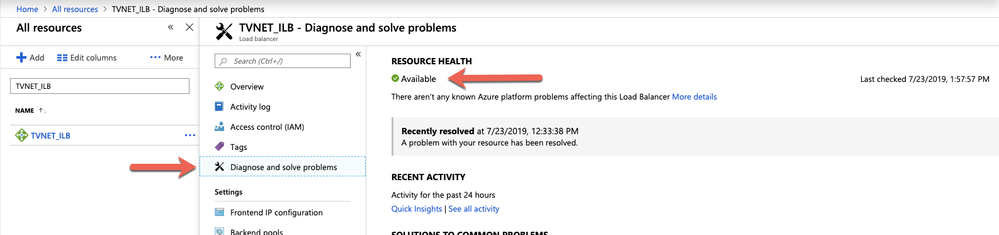

Verify the ILB resource health in the Azure portal:

Please see the following documentation for more options to check the health of your ILB: https://docs.microsoft.com/en-us/azure/load-balancer/load-balancer-standard-diagnostics

Verify that the ILB is probing the CSRs on port 80 by checking the HTTP server statistics on each CSR:

thulsdau$ ssh 52.151.239.79 "show ip http server statistics" ; echo
HTTP server statistics:
Accepted connections total: 769
thulsdau$ ssh 52.151.239.88 "show ip http server statistics" ; echo
HTTP server statistics:
Accepted connections total: 736
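The health probe itself is just a TCP connection attempt to port 80, which can be approximated by hand from a VM that can reach the CSR inside interfaces. This is a rough sketch under stated assumptions: `tcp_probe` and `check_csrs` are hypothetical helper names, and bash (for its `/dev/tcp` pseudo-device) and the coreutils `timeout` command are assumed to be available. The real probe is sourced from 168.63.129.16, so a manual check from another address only approximates it.

```shell
# Return 0 if a TCP connection to host:port succeeds within 3 seconds.
# Relies on bash's /dev/tcp pseudo-device and the coreutils `timeout` command.
# (tcp_probe and check_csrs are hypothetical helper names.)
tcp_probe() {
  timeout 3 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# Report "up" or "down" for port 80 on each address passed as an argument.
check_csrs() {
  for ip in "$@"; do
    if tcp_probe "$ip" 80; then
      echo "$ip up"
    else
      echo "$ip down"
    fi
  done
}
```

Run from Spoke1VM1, `check_csrs 10.0.1.4 10.0.1.5` would print one line per CSR.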

Log into Spoke1VM1 and ping Spoke2VM1 as well as the CSRs:

thulsdau$ ssh 40.114.8.96
The authenticity of host '40.114.8.96 (40.114.8.96)' can't be established.
ECDSA key fingerprint is SHA256:smahT708NMluJYneNVZ9UhajLYrt+LjnhHLrq/duodg.
Are you sure you want to continue connecting (yes/no)? yes
Warning: Permanently added '40.114.8.96' (ECDSA) to the list of known hosts.
Welcome to Ubuntu 18.04.2 LTS (GNU/Linux 4.18.0-1024-azure x86_64)

thulsdau@Spoke1VM1:~$ for IP in 10.0.1.4 10.0.1.5 10.0.2.4 10.0.3.4 ; do ping -c 2 $IP ; done
PING 10.0.1.4 (10.0.1.4) 56(84) bytes of data.
64 bytes from 10.0.1.4: icmp_seq=1 ttl=255 time=2.38 ms
64 bytes from 10.0.1.4: icmp_seq=2 ttl=255 time=1.76 ms
--- 10.0.1.4 ping statistics ---
2 packets transmitted, 2 received, 0% packet loss, time 1001ms
rtt min/avg/max/mdev = 1.763/2.075/2.388/0.315 ms

PING 10.0.1.5 (10.0.1.5) 56(84) bytes of data.
64 bytes from 10.0.1.5: icmp_seq=1 ttl=255 time=2.74 ms
64 bytes from 10.0.1.5: icmp_seq=2 ttl=255 time=1.85 ms
--- 10.0.1.5 ping statistics ---
2 packets transmitted, 2 received, 0% packet loss, time 1001ms
rtt min/avg/max/mdev = 1.852/2.298/2.744/0.446 ms

PING 10.0.2.4 (10.0.2.4) 56(84) bytes of data.
64 bytes from 10.0.2.4: icmp_seq=1 ttl=64 time=0.023 ms
64 bytes from 10.0.2.4: icmp_seq=2 ttl=64 time=0.027 ms
--- 10.0.2.4 ping statistics ---
2 packets transmitted, 2 received, 0% packet loss, time 1031ms
rtt min/avg/max/mdev = 0.023/0.025/0.027/0.002 ms

PING 10.0.3.4 (10.0.3.4) 56(84) bytes of data.
64 bytes from 10.0.3.4: icmp_seq=1 ttl=63 time=3.82 ms
64 bytes from 10.0.3.4: icmp_seq=2 ttl=63 time=3.02 ms
--- 10.0.3.4 ping statistics ---
2 packets transmitted, 2 received, 0% packet loss, time 1002ms
rtt min/avg/max/mdev = 3.026/3.426/3.826/0.400 ms
thulsdau@Spoke1VM1:~$

Verify with tracepath that traffic between the Ubuntu VMs traverses the CSRs (note that both CSRs are listed, indicating that load balancing is working as expected):

thulsdau@Spoke1VM1:~$ tracepath 10.0.3.4

1?: [LOCALHOST] pmtu 1500

1: 10.0.1.4 2.189ms

1: 10.0.1.5 2.164ms

2: 10.0.3.4 2.946ms reached

Resume: pmtu 1500 hops 2 back 2

thulsdau@Spoke1VM1:~$
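The load-balancing check can also be scripted by counting the distinct routers reported at hop 1. The following is a small sketch, with `first_hops` as a hypothetical helper name and the sample input copied from the tracepath output above; it assumes the hop number is the first whitespace-separated field, as in that output.

```shell
# List the distinct routers seen at hop 1 in tracepath output read from stdin.
# (first_hops is a hypothetical helper name.)
first_hops() {
  awk '$1 == "1:" { print $2 }' | sort -u
}

# Sample input copied from the tracepath run in this how-to.
sample=' 1?: [LOCALHOST] pmtu 1500
 1: 10.0.1.4 2.189ms
 1: 10.0.1.5 2.164ms
 2: 10.0.3.4 2.946ms reached'

printf '%s\n' "$sample" | first_hops
```

Two addresses in the result indicate that both CSRs forwarded traffic; on a live VM the function would be used as `tracepath 10.0.3.4 | first_hops`.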

Automating the setup

To help set up the whole solution described in this article as quickly as possible, and to serve as a starting point for custom automation, a shell script (in az_create_ilb_transitvnet.zip) is attached to this document.

All parameters are defined as variables to make adaptation simple.

Please make sure that Azure CLI is working on your computer before running the script (see section "Prerequisites").

Running the script is straightforward:

thulsdau$ ./az_create_ilb_transitvnet.sh
*** Setting up TransitVNET with Cisco CSR and Azure Internal Load Balancer ***
Creating resource group...
Creating VNET...
Creating inside subnet...
Creating outside subnet...
Creating Azure Internal Load Balancer...
Creating ILB probe...
Creating ILB rule...
Creating network security group for outside subnet...
Creating nsg rule Allow_IPSEC1 ...
Creating nsg rule Allow_IPSEC2...
Creating nsg rule Allow_SSH...
Creating nsg rule Allow_All_Out...
Creating network security group for inside subnet...
Creating nsg rule Allow_All_Out (2)...
Creating public IP for CSR1...
Creating public IP for CSR2...
Creating outside interface for CSR1...
Creating inside interface for CSR1...
Creating outside interface for CSR2...
Creating inside interface for CSR2...
Creating availability set...
Creating CSR1...
Creating CSR2...
Creating VNET for Spoke1...
Creating subnet for Spoke1...
Creating VNET for Spoke2...
Creating subnet for Spoke2...
Creating instance Spoke1...
Creating instance Spoke2...
Setting up peering between TransitVNET and Spoke1...
Setting up peering between Spoke1 and TransitVNET...
Setting up peering between TransitVNET and Spoke2...
Setting up peering between Spoke2 and TransitVNET...
Creating route table for Spokes...
Configuring route via the ILB...
Attaching route table to Spoke1Subnet...
Attaching route table to Spoke2Subnet...
Getting IP addresses...
VirtualMachine PrivateIPAddresses PublicIPAddresses
---------------- -------------------- -------------------
CSR1 10.0.1.4
CSR1 10.0.0.4 52.151.239.79
CSR2 10.0.1.5
CSR2 10.0.0.5 52.151.239.88
Spoke1VM1 10.0.2.4 40.114.8.96
Spoke2VM1 10.0.3.4 40.114.49.237
Script started: Tue Jul 23 12:15:12 PDT 2019
Script finished: Tue Jul 23 12:34:31 PDT 2019
*** TransitVNET created ***
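As a starting point for custom automation, the variable-driven approach can be combined with a dry-run switch so the commands can be reviewed before anything is created. This is a minimal sketch and not the attached script: the variable names (`RG`, `LOCATION`, `TRANSIT_VNET`, `TRANSIT_PREFIX`, `DRY_RUN`) and the functions `run` and `create_transit_vnet` are assumptions made for illustration.

```shell
# Hypothetical variable names; the attached script may use different ones.
RG=${RG:-TVNET}
LOCATION=${LOCATION:-EastUS}
TRANSIT_VNET=${TRANSIT_VNET:-TransitVNET}
TRANSIT_PREFIX=${TRANSIT_PREFIX:-10.0.0.0/23}

# Run a command, or only print it (prefixed with "+") when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '+ %s\n' "$*"
  else
    "$@"
  fi
}

# First step of the how-to, expressed with the variables above.
create_transit_vnet() {
  run az group create --name "$RG" --location "$LOCATION"
  run az network vnet create --name "$TRANSIT_VNET" --resource-group "$RG" \
    --address-prefix "$TRANSIT_PREFIX"
}
```

`DRY_RUN=1 create_transit_vnet` prints the two `az` commands; without `DRY_RUN` they are executed against the current subscription.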

Deleting the setup

To delete the whole setup, we can simply delete the resource group and Azure will take care of deleting all associated resources:

$ time az group delete -g TVNET --yes
real 19m37.327s
user 0m2.447s
sys 0m0.394s

(The timing is given only as an indication of how long the operation typically takes.)

Planned improvements to this how-to

- Add ability for CSR to take itself 'out-of-service' if its connection to the on-premise DC is down

- Include performance tests


Acknowledgments

The idea for the format of this how-to, specifically the use of Azure CLI instead of GUI screenshots, came from the labs of GitHub user jwrightazure: https://github.com/jwrightazure/lab


Question:

For the communication from Spoke VM to on premise, the request will hit iLB VIP. While forwarding the traffic to CSRs won't the iLB change the destination IP from on prem server to CSR IP address? And if it does that then how does the CSR know what the destination is and where to forward the traffic?

Thank you


Is there a downloadable Visio for the images in this solution?


Thank you for this guide, very helpful. Regarding the planned improvement "Add ability for CSR to take itself 'out-of-service' if its connection to the on-premises DC is down": is there any news to share on that? This would be extremely helpful.
