- Cisco Software Defined Access Case Studies

06-04-2019 11:44 AM - edited 06-10-2019 03:27 PM

Imran Bashir

May 2019

- Introduction

- About This Document

- Cisco Software-Defined Solution Overview

- Cisco Software-Defined Access Components

- Cisco Software-Defined Access Solution Operations

- Appendix A: Troubleshooting

- Appendix B: Cisco Software-Defined Access Fabric Design

Introduction

About Cisco Software Defined Access (SDA)

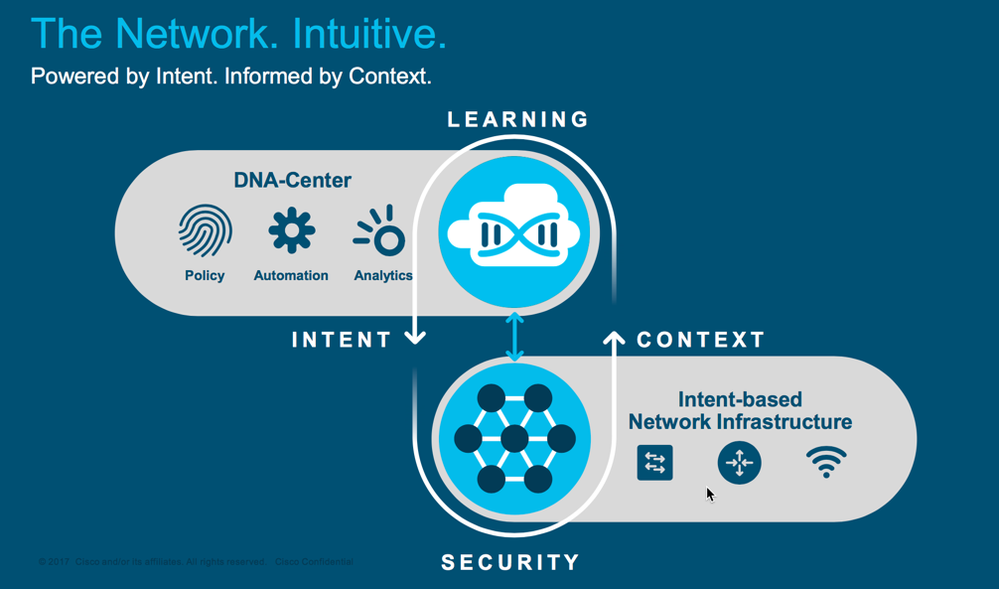

Cisco® Software-Defined Access (SD-Access) eases network management by providing a single network fabric from the edge to the cloud. You can set policy-based automation for users, devices, and things, and provide access to any application without compromising on security, all while gaining awareness of what is hitting your network. With automatic segmentation of users, devices, and applications, you can deploy and secure services faster.

The solution is made up of the following components:

- Cisco DNA Center™ software for designing, provisioning, applying policy, and facilitating the creation of an intelligent campus wired and wireless network with assurance.

- The SDA Fabric, which enables wired and wireless campus networks with programmable overlays and easy-to-deploy network virtualization, permitting a physical network to host one or more logical networks as required to meet the design intent.

- Cisco Identity Services Engine (ISE), to enable micro-segmentation in the Fabric.

What is Covered in This Document?

This document provides an overview of the SD-Access physical components, the logical architecture, and how an SD-Access network functions. It then walks through the steps and case studies of building the network, growing it, and enabling the new capabilities it provides.

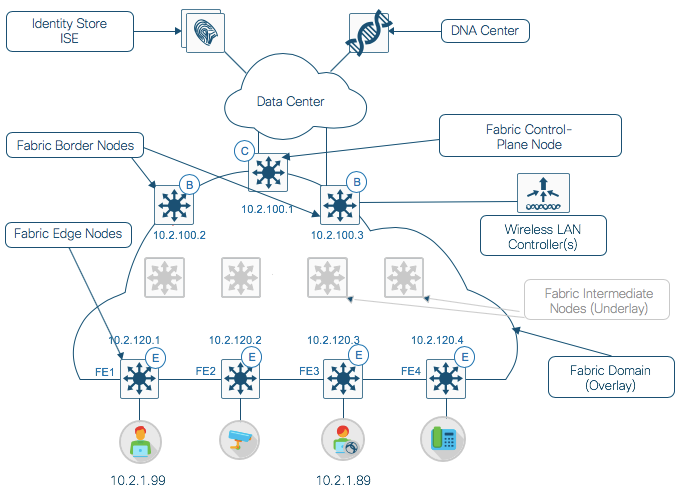

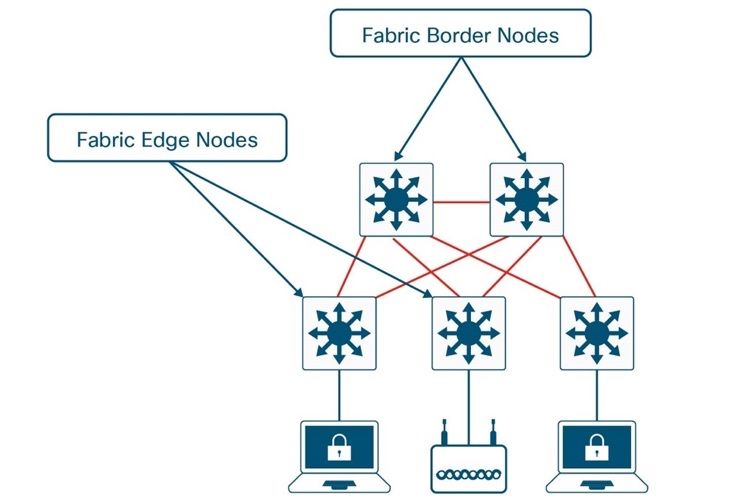

Figure 2: Fabric components

The following are the solution components described in this document:

| Term | Description |
|---|---|
| Cisco DNA Center | Cisco DNA Center is a software solution that resides on the Cisco DNA Center appliance. The solution receives data in the form of streaming telemetry from every device (switch, router, access point, and wireless access controller) on the network. |
| ISE | AAA Policy Server: Cisco Identity Services Engine (ISE) is a network administration product that enables the creation and enforcement of security and access policies for endpoint devices connected to the company's routers and switches. The purpose is to simplify identity management across diverse devices and applications. |
| Wireless LAN Controller | A wireless LAN (WLAN) controller is used in combination with the Lightweight Access Point Protocol (LWAPP) to manage lightweight access points in large quantities by the network administrator or network operations center. The wireless LAN controller is part of the Data Plane within the Cisco Wireless Model. |
| Fabric Domain | A logical (administrative) construct consisting of one or more Fabrics and any Transits that interconnect them. Multiple independent Fabrics are connected to each other using a Transit. |
| Fabric Control Node | The SD-Access fabric control plane node is based on the LISP Map-Server (MS) and Map-Resolver (MR) functionality combined on the same node. The control plane database tracks all endpoints in the fabric site and associates the endpoints to fabric nodes, decoupling the endpoint IP address or MAC address from the location (closest router) in the network. |
| Fabric Border | The location where traffic exits the fabric. A border that serves as the default path to all other networks is an external border. |
| Fabric Edge Nodes | The SD-Access fabric edge nodes are the equivalent of an access layer switch in a traditional campus LAN design. The edge nodes implement a Layer 3 access design. |
| Fabric Intermediate Node | The fabric intermediate nodes are part of the Layer 3 network used for interconnections between the edge nodes and the border nodes. |

What is Not Covered in This Document?

Although this is a case study document for Cisco Software-Defined Access, design considerations for Cisco DNA Center, Cisco ISE, and the switch platforms are not covered here. For more information about these, see the Cisco DNA design guide, the Cisco ISE Design and Integration Guides, and the platforms page.

Cisco SDA setup and configuration are also not covered. Please refer to the Cisco Validated Design documents.

About This Document

This guide explains how the Cisco Software-Defined Access solution works and provides packet-level walks of wired and wireless users connecting to the network, covering various endpoint onboarding scenarios.

Figure 3: What is in this document

This document contains three major sections:

- The Solution Overview section describes the problem statement and what the solution offers.

- The Solution Components section defines the various elements of the Cisco SDA solution.

- The Operate section provides packet walks for wired and wireless users and devices connecting to the Cisco SDA fabric. It does not include any configurations. Please reference the Cisco Validated Design (CVD) or the configuration guide for SDA setup and configuration guidelines.

Cisco Software-Defined Solution Overview

This guide provides an overview of the SD-Access physical components, the logical architecture, and how an SD-Access network functions. The guide then steps through case studies of building the network, growing it, and enabling the new capabilities it provides.

Within the SD-Access solution, a fabric site is composed of an independent set of fabric control plane nodes, edge nodes, intermediate (transport only) nodes, and border nodes. Wireless integration adds fabric WLC and fabric mode AP components to the fabric site. Fabric sites can be interconnected using an SD-Access transit network to create a larger fabric domain. This section describes the functionality for each role, how the roles map to the physical campus topology, and the components required for solution management, wireless integration, and policy application.

Control plane node

Users and devices connect to the Edge node in a fabric. Think of the Edge node as an access switch in a traditional network. The difference between Fabric and non-fabric is that the communication from the Edge to the Border (the exit point from the Fabric) is now Layer 3, instead of Layer 2 as in a non-fabric environment.

The connectivity between the clients (users or devices) still runs at Layer 2, which means that ARP still works for clients on the same switch. However, the network in the Fabric now runs at Layer 3 between the Fabric Edge and the Border node, so the traditional ARP lookup for clients on different switches will not work.

The control plane provides the location of the clients by using the LISP protocol. Within the control plane, the host tracking database (HTDB) is used to track and look up which clients are connected to which fabric edge (FE) switches.

The SD-Access fabric control plane node is based on the LISP Map-Server (MS) and Map-Resolver (MR) functionality combined on the same node. The control plane database tracks all endpoints in the fabric site and associates the endpoints to fabric nodes, decoupling the endpoint IP address or MAC address from the location (closest router) in the network.

The control plane node enables the following functions:

-

Host tracking database—The host tracking database (HTDB) is a central repository of EID-to-fabric-edge node bindings.

-

Map server—The LISP MS is used to populate the HTDB from registration messages from fabric edge devices.

-

Map resolver—The LISP MR is used to respond to map queries from fabric edge devices requesting RLOC mapping information for destination EIDs.
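The three functions above can be illustrated with a small Python model. This is only a sketch under stated assumptions: the class and method names (`ControlPlaneNode`, `map_register`, `map_request`) are hypothetical and are not Cisco or LISP library APIs, and real LISP messages carry far more state (TTLs, authentication, multiple RLOCs). The instance ID, EID, and RLOC values are taken from the CP output shown later in this document.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

# Key of the toy host tracking database: (LISP instance ID, EID prefix).
# All names in this sketch are illustrative, not a Cisco API.
EidKey = Tuple[int, str]


@dataclass
class ControlPlaneNode:
    """Toy model of a combined LISP Map-Server / Map-Resolver."""

    htdb: Dict[EidKey, str] = field(default_factory=dict)

    def map_register(self, instance_id: int, eid: str, rloc: str) -> None:
        """Map-Server role: record (or move) an EID-to-RLOC binding."""
        self.htdb[(instance_id, eid)] = rloc

    def map_request(self, instance_id: int, eid: str) -> Optional[str]:
        """Map-Resolver role: return the RLOC for an EID, or None if unknown."""
        return self.htdb.get((instance_id, eid))


cp = ControlPlaneNode()
# A fabric edge with RLOC 10.2.120.1 registers a client it has detected.
cp.map_register(4099, "10.2.1.99/32", "10.2.120.1")
print(cp.map_request(4099, "10.2.1.99/32"))  # 10.2.120.1
print(cp.map_request(4099, "10.2.1.50/32"))  # None (no registration yet)
```

Keying the database on the instance ID as well as the EID reflects that the same address may exist in more than one virtual network.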

Border node

As mentioned above, the communication between the FE and the Border is Layer 3, and the control plane protocol is LISP. Traffic outside the Fabric might not be using LISP, so we need an exit point that can convert the fabric-encapsulated packets back into native IP packets. Also, if micro-segmentation is enabled within the fabric and the upstream devices are not TrustSec/SGT enabled, these packets might get dropped.

The fabric border nodes serve as the gateway between the SD-Access fabric site and the networks external to the fabric. The fabric border node is also responsible for network virtualization interworking and SGT propagation from the fabric to the rest of the network. The fabric border nodes can operate as the gateway for specific network addresses, such as a shared services or data center network, or can be a common exit point from a fabric, such as for the rest of an enterprise network along with the Internet. You can also use the same Border node in a combined role (gateway for specific networks and/or the rest of the world).

The control plane node functionality can be colocated with a border node or can use dedicated nodes for scale, and between two and six nodes are used for resiliency. Border and edge nodes register with and use all control plane nodes, so the resilient nodes chosen should be of the same type for consistent performance.

Border nodes implement the following functions:

- Advertisement of Endpoint Identifier (EID) subnets: The mapping and resolving of endpoints requires a control plane protocol, and SD-Access uses Locator/ID Separation Protocol (LISP) for this task. LISP brings the advantage of routing based not only on the IP address or MAC address as the Endpoint Identifier (EID) for a device but also on an additional IP address that it provides as a Routing Locator (RLOC) to represent the network location of that device. The RLOC address resides on the Fabric Edge node (FE).

- Fabric domain exit point: As mentioned above, the fabric border is the gateway of last resort for the fabric edge nodes. This is implemented using LISP Proxy Tunnel Router functionality. A border node can also be connected to networks with a well-defined set of IP subnets (for example, private data centers), which adds the requirement to advertise those subnets into the fabric.

- Mapping of LISP instance to VRF: The fabric border can extend network virtualization from inside the fabric to outside the fabric (SDA-Transit) by using external VRF instances in order to preserve the virtualization.

- Policy mapping: The fabric border node also maps SGT information from within the fabric to be appropriately maintained when exiting that fabric. SGT information is propagated from the fabric border node to the network external to the fabric either by transporting the tags to Cisco TrustSec-aware devices using SGT Exchange Protocol (SXP) or by directly mapping SGTs into the Cisco metadata field in a packet, using inline tagging capabilities implemented for connections to the border node.

Note: The roles of borders are expanding to scale to larger distributed campus deployments with local site services interconnected with a transit control plane and managed by Cisco DNA Center. Check your release notes for general availability of these new roles and features.

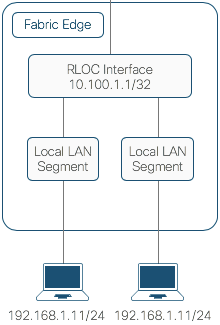

Edge node

The SD-Access fabric edge nodes are the equivalent of an access layer switch in a traditional campus LAN design. The edge nodes implement a Layer 3 access design with the addition of the following fabric functions:

- Endpoint registration: As discussed earlier, the Control Plane node (CP) holds entries for all endpoints in its HTDB, but the CP relies on the FE to populate these entries. When an endpoint connects to or is detected by the fabric edge, it is added to a local host tracking database on the FE called the EID-table. The edge device then issues a LISP map-register message to inform the CP of the endpoint detection so that the CP can populate the HTDB.

- Mapping of user to virtual network: Endpoints are placed into virtual networks by assigning the endpoint to a VLAN associated with a LISP instance. The assignment of endpoints to VLANs can be done statically, but the recommendation is dynamic assignment by a AAA server (Cisco ISE) using MAB, 802.1X, or WebAuth. An SGT can also be assigned to provide micro-segmentation and policy enforcement at the fabric edge.

Note: Cisco IOS® Software enhances 802.1X device capabilities with Cisco Identity Based Networking Services (IBNS) 2.0. For example, concurrent authentication methods and interface templates have been added. Likewise, Cisco DNA Center has been enhanced to aid with the transition from IBNS 1.0 to 2.0 configurations, which use Cisco Common Classification Policy Language (commonly called C3PL). See the release notes and updated deployment guides for additional configuration capabilities. For more information about IBNS, see: https://cisco.com/go/ibns

- Anycast Layer 3 gateway: A common gateway (IP and MAC addresses) can be used at every node that shares a common EID subnet, providing optimal forwarding and mobility across different RLOCs. In other words, endpoints can move across the FEs in a Fabric without requiring a change of IP address.

- LISP forwarding: Because the fabric has an alternate way to look up an endpoint, the fabric edge nodes do not make a typical routing-based decision. Instead, they query the map server to determine the RLOC associated with the destination EID and use that information as the traffic destination.

In case of a failure to resolve the destination RLOC, the traffic is sent to the default fabric border in which the global routing table is used for forwarding. The response received from the map server is stored in the LISP map-cache, which is merged to the Cisco Express Forwarding (CEF) table and installed in hardware.

If traffic is received at the fabric edge for an endpoint not locally connected, a LISP solicit-map-request is sent to the sending fabric edge in order to trigger a new map request; this addresses the case where the endpoint may be present on a different fabric edge switch.

- VXLAN encapsulation/de-encapsulation: Traffic between the FE and the Border node is encapsulated in VXLAN headers, so endpoints can keep the same IP address (within the VXLAN encapsulation) while moving between FEs. This is possible because the FE uses the RLOC address associated with the destination IP address to encapsulate the traffic with VXLAN headers.

Similarly, VXLAN traffic received at a destination RLOC is de-encapsulated. Again, this encapsulation and de-encapsulation of traffic enables the location of an endpoint to change and be encapsulated with a different edge node and RLOC in the network, without the endpoint having to change its address within the encapsulation.
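The LISP forwarding and VXLAN encapsulation behaviour described above can be sketched in Python. This is a simplified illustration, not how the switch implements it: the function names and the `resolve` callback are hypothetical, the header follows the basic 8-byte layout from RFC 7348 (the SGT that SD-Access also carries in the header is not modeled), and using the LISP instance ID 4099 as the VNI is an assumption made for the example.

```python
import struct
from typing import Callable, Dict, Optional

VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag defined in RFC 7348


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header: flags, 24 reserved bits, 24-bit VNI, 8 reserved bits."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from a VXLAN header (the de-encapsulation side)."""
    _flags_word, vni_word = struct.unpack("!II", header[:8])
    return vni_word >> 8


def select_rloc(
    dest_eid: str,
    map_cache: Dict[str, str],
    resolve: Callable[[str], Optional[str]],
    default_border_rloc: str,
) -> str:
    """Pick the outer destination: cached RLOC, resolved RLOC, or the default border."""
    rloc = map_cache.get(dest_eid)
    if rloc is None:
        rloc = resolve(dest_eid)  # stands in for a LISP map-request
        if rloc is None:
            return default_border_rloc  # unresolved: send to the default border
        map_cache[dest_eid] = rloc  # remember the answer for later packets
    return rloc


# Hypothetical data: one known endpoint; the border RLOC is made up.
known = {"10.2.1.99": "10.2.120.1"}
cache: Dict[str, str] = {}
print(select_rloc("10.2.1.99", cache, known.get, "10.2.120.254"))  # 10.2.120.1
print(select_rloc("8.8.8.8", cache, known.get, "10.2.120.254"))    # 10.2.120.254
print(vxlan_header(4099).hex())                                     # 0800000000100300
```

Because only the outer destination (the RLOC) changes when an endpoint moves, the inner packet and the endpoint's own address stay untouched.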

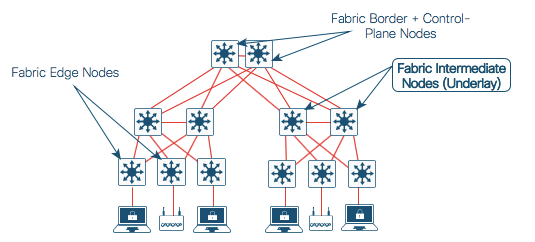

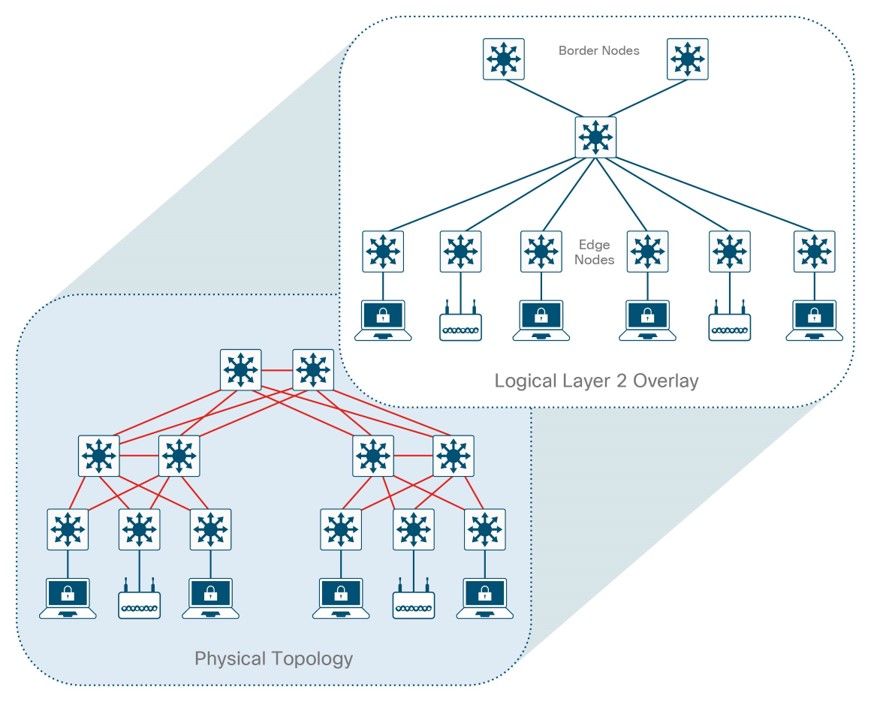

Cisco SDA Physical topologies

The design principles and concepts for the underlying campus network are similar for fabric and non-fabric architectures, i.e. a hierarchical design that splits the network into modular groups. The following example shows the physical topology of a three-tier campus design in which all nodes are dual-homed with equal-cost links that provide load balancing, redundancy, and fast convergence. The border nodes can be at the campus core, or the border can be configured separately from the core at another aggregation point.

Figure 4: SDA 3-Tier Physical Topology

For smaller deployments, an SD-Access fabric can be implemented using a two-tier design. The same design principles should be applied but without the need for an aggregation layer implemented by intermediate nodes.

Figure 5: SDA 2-Tier Physical Topology

In general, SD-Access topologies should be deployed as hub-and-spoke networks, with the fabric border node at the exit point acting as the hub for the spokes, although other physical topologies can be used.

Topologies in which the fabric is a transit network (connecting multiple SDA Fabrics via IP or SDA transit) should be planned carefully in order to ensure optimal forwarding. If the border node is implemented at a node that is not the aggregation point for exiting traffic, sub-optimal routing results when traffic exits the fabric at the border and then doubles back to the actual aggregation point.

Intermediate node

The fabric intermediate nodes are part of the Layer 3 network used for interconnections between the edge nodes and the border nodes. In a three-tier campus design using core, distribution, and access layers, the intermediate nodes are the equivalent of distribution switches, though the number of intermediate nodes is not limited to a single layer of devices. Intermediate nodes route and transport IP traffic inside the fabric. No VXLAN encapsulation/de-encapsulation or LISP control plane messages are required from an intermediate node. Its only additional fabric requirement is an MTU large enough to accommodate the larger IP packets encapsulated with VXLAN information.
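The MTU requirement can be made concrete with a little arithmetic. The sketch below assumes an IPv4 underlay, the basic 8-byte VXLAN header, and no 802.1Q tag on the inner frame; the function name is made up for illustration. Actual platform recommendations may call for a larger value, so check the design guides for the number to configure.

```python
# Bytes added around the endpoint's original IP packet (assumed: IPv4 underlay,
# untagged inner Ethernet frame, standard 8-byte VXLAN header).
INNER_ETHERNET = 14
VXLAN = 8
UDP = 8
OUTER_IPV4 = 20


def required_underlay_ip_mtu(endpoint_ip_mtu: int = 1500) -> int:
    """Smallest underlay IP MTU that carries a full-size endpoint packet unfragmented."""
    return endpoint_ip_mtu + INNER_ETHERNET + VXLAN + UDP + OUTER_IPV4


print(required_underlay_ip_mtu())  # 1550
```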

Cisco Software-Defined Access Components

The SD-Access architecture is supported by fabric technology implemented for the campus, which enables the use of virtual networks (overlay networks) running on a physical network (underlay network).

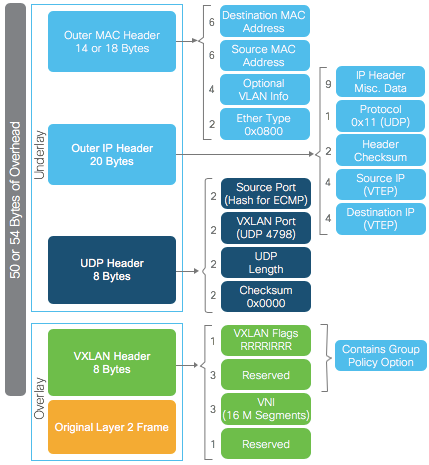

Overlay and Underlay

So far, we have covered the communication between the FE, the Border, and the control plane. Let's now look at how a packet is carried across the Fabric, that is, the underlay and overlay encapsulation.

We can also take a high-level look at the functions of the underlay and overlay:

| Underlay Network | Overlay Network |
|---|---|
| Routing Locator (RLOC): IP address of the LISP router facing ISP | Endpoint Identifier (EID): IP address of a host; VRF; Instance ID; Dynamic EID; VLAN |

In summary, Overlay networks in data center fabrics are commonly used to provide Layer 2 and Layer 3 logical networks with virtual machine mobility (examples: Cisco ACI™, VXLAN/EVPN, and FabricPath). Overlay networks are also used in wide-area networks to provide secure tunneling from remote sites (examples: MPLS, DMVPN, and GRE).

SDA Across multiple Sites

Note: This section gives an overview of connecting multiple SDA sites and is drawn from the Cisco SDA book. See: https://www.cisco.com/c/dam/en/us/products/se/2018/1/Collateral/nb-06-software-defined-access-ebook-en.pdf

An SD-Access fabric may be composed of multiple sites. Each site may require different aspects of scale, resiliency, and survivability. The overall aggregation of sites (i.e. the fabric) must also be able to accommodate a very large number of endpoints, scale horizontally by aggregating sites, and keep the local state contained within each site.

Multiple fabric sites corresponding to a single fabric will be interconnected by a transit network area. The transit network area may be defined as a portion of the fabric which interconnects the borders of individual fabrics, and which has its own control plane nodes — but does not have edge nodes. Furthermore, the transit network area shares at least one border node from each fabric site that it interconnects. The following diagram depicts a multi-site fabric.

In general terms, a transit network area exists to connect to the external world. There are several approaches to external connectivity, such as:

-

IP transit

-

Software-Defined WAN transit (SD-WAN)

-

SD-Access transit (native)

In the fabric multi-site model, all external connectivity (including internet access) is modeled as a transit network. This creates a general construct that allows connectivity to any other sites and/or services.

The traffic across fabric sites, and to any other type of site, uses the control plane and data plane of the transit network to provide connectivity between these networks. A local border node is the handoff point from the fabric site, and the traffic is delivered across the transit network to other sites. The transit network may use additional features. For example, if the transit network is a WAN, then features such as performance routing may also be used.

To provide end-to-end policy and segmentation, the transit network should be capable of carrying the endpoint context information (VRF and SGT) across this network. Otherwise, a re-classification of the traffic will be needed at the destination site border.

Cisco Software-Defined Access Solution Operations

SD-Access Wired and Wireless client on-boarding operation

This section focuses on how clients connect to the SDA Fabric. It also covers connecting access points and wireless users.

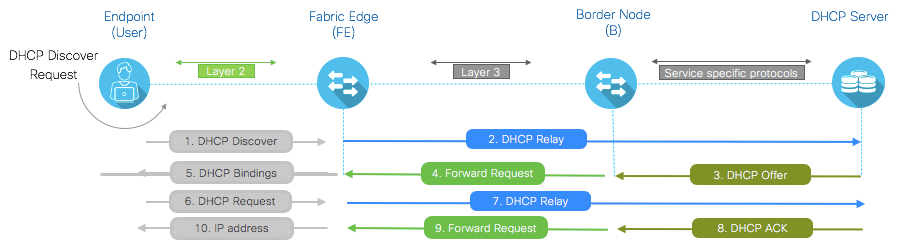

DHCP Operations in Fabric

Once the Fabric is configured and the Edge nodes, Border nodes, and Control plane nodes are operational, you can start connecting your clients (users and devices) to the Edge nodes.

The main difference between a traditional network and a Fabric-enabled network is the requirement for your DHCP server to support Option 82. In summary, the Option 82 Remote-ID suboption is a string encoded as "SRLOC IPv4 address" and "VXLAN L3 VNI ID" associated with the client segment.

The connected clients will start sending DHCP requests to obtain an IP address. The DHCP flow in the Fabric is fundamentally different from that in traditional networks, so let's go through the process step by step.

Figure 8: Wired DHCP flow in Fabric

The connectivity between the clients (users or devices) still runs at Layer 2. However, the network in the Fabric now runs at Layer 3 between the Fabric Edge and the Border node, and the protocol between the Border node and a server is specific to the service, e.g. NTP, DNS, or DHCP.

The steps are explained in more detail below:

- DHCP Discover: The client sends a DHCP Discover message to the Fabric Edge node:

FE#show ip dhcp snooping binding

MacAddress IpAddress Lease(sec) Type VLAN Interface

------------------ --------------- ---------- ------------- ---- --------------------

00:13:a9:1f:b2:b0 10.1.2.99 691197 dhcp-snooping 1021 TenGigabitEthernet1/0/23

FE#debug ip dhcp snooping ?

H.H.H DHCP packet MAC address

agent DHCP Snooping agent

event DHCP Snooping event

packet DHCP Snooping packet

redundancy DHCP Snooping redundancy

Received DHCP Discover

015016: *Feb 26 00:07:35.296: DHCP_SNOOPING: received new DHCP packet from input interface (GigabitEthernet4/0/3)

015017: *Feb 26 00:07:35.296: DHCP_SNOOPING: process new DHCP packet, message type: DHCPDISCOVER, input interface: Gi4/0/3, MAC da: ffff.ffff.ffff, MAC sa: 00ea.bd9b.2db8, IP da: 255.255.255.255, IP sa: 0.0.0.0, DHCP ciaddr: 0.0.0.0, DHCP yiaddr: 0.0.0.0, DHCP siaddr: 0.0.0.0, DHCP giaddr: 0.0.0.0, DHCP chaddr: 00ea.bd9b.2db8, efp_id: 374734848, vlan_id: 1022

- type - DHCPDISCOVER message sent by the client

- MAC - Source and destination MAC addresses of the packet (MAC sa is the client, MAC da is the broadcast address).

- DHCP Relay: The FE uses DHCP Snooping to add its RLOC (Circuit ID and Remote ID) in Option 82, which identifies the port, line card, and RLOC the request is coming from. It also sets the giaddr to the Anycast SVI address. Using DHCP Relay, the request is forwarded to the Border.

Adding Relay Information Option

015018: *Feb 26 00:07:35.296: DHCP_SNOOPING: add relay information option.

015019: *Feb 26 00:07:35.296: DHCP_SNOOPING: Encoding opt82 CID in vlan-mod-port format

015020: *Feb 26 00:07:35.296: :VLAN case : VLAN ID 1022

015021: *Feb 26 00:07:35.296: VRF id is valid

015022: *Feb 26 00:07:35.296: LISP ID is valid, encoding RID in srloc format

015023: *Feb 26 00:07:35.296: DHCP_SNOOPING: binary dump of relay info option, length: 22 data:

0x52 0x14 0x1 0x6 0x0 0x4 0x3 0xFE 0x4 0x3 0x2 0xA 0x3 0x8 0x0 0x10 0x3 0x1 0xC0 0xA8 0x3 0x62

015024: *Feb 26 00:07:35.296: DHCP_SNOOPING: bridge packet get invalid mat entry: FFFF.FFFF.FFFF, packet is flooded to ingress VLAN: (1022)

015025: *Feb 26 00:07:35.296: DHCP_SNOOPING: bridge packet send packet to cpu port: Vlan1022.

- 0x52 - DHCP Option 82

- 0x3 0xFE 0x4 0x3 - 0x3 0xFE = 0x3FE = VLAN ID 1022, 0x4 = Module 4, 0x3 = Port 3

- 0x0 0x10 0x3 - LISP Instance-id 4099

- 0xC0 0xA8 0x3 0x62 - RLOC IP 192.168.3.98

Continue with Option 82

015026: *Feb 26 00:07:35.297: DHCPD: Reload workspace interface Vlan1022 tableid 2.

015027: *Feb 26 00:07:35.297: DHCPD: tableid for 1.1.2.1 on Vlan1022 is 2

015028: *Feb 26 00:07:35.297: DHCPD: client's VPN is Campus.

015029: *Feb 26 00:07:35.297: DHCPD: No option 125

015030: *Feb 26 00:07:35.297: DHCPD: Option 125 not present in the msg.

015031: *Feb 26 00:07:35.297: DHCPD: Option 125 not present in the msg.

015032: *Feb 26 00:07:35.297: DHCPD: Sending notification of DISCOVER:

015033: *Feb 26 00:07:35.297: DHCPD: htype 1 chaddr 00ea.bd9b.2db8

015034: *Feb 26 00:07:35.297: DHCPD: circuit id 000403fe0403

015035: *Feb 26 00:07:35.297: DHCPD: table id 2 = vrf Campus

015036: *Feb 26 00:07:35.297: DHCPD: interface = Vlan1022

015037: *Feb 26 00:07:35.297: DHCPD: class id 4d53465420352e30

- Circuit ID = 0x3 0xFE = 0x3FE = VLAN ID 1022, 0x4 = Module 4, 0x3 = Port 3

- DHCP Offer: The DHCP server replies to the Border node with an offer addressed to the Anycast SVI.

Sending Discover to DHCP server

015040: *Feb 26 00:07:35.297: DHCPD: Looking up binding using address 1.1.2.1

015041: *Feb 26 00:07:35.297: DHCPD: setting giaddr to 1.1.2.1.

015042: *Feb 26 00:07:35.297: DHCPD: BOOTREQUEST from 0100.eabd.9b2d.b8 forwarded to 192.168.12.240.

015043: *Feb 26 00:07:35.297: DHCPD: BOOTREQUEST from 0100.eabd.9b2d.b8 forwarded to 192.168.12.241.

- giaddr = Gateway IP address field of the DHCP packet. The giaddr provides the DHCP server with information about the IP address subnet in which the client resides.

- Forward Request: The Border node now inspects the packet for Option 82 to find out which RLOC it needs to forward the packet to. In other words, it uses the Remote ID in Option 82 to forward the packet to the Fabric Edge node.

Forwarding ACK

015089: *Feb 26 00:07:35.302: DHCPD: Reload workspace interface LISP0.4099 tableid 2.

015090: *Feb 26 00:07:35.302: DHCPD: tableid for 1.1.7.4 on LISP0.4099 is 2

015091: *Feb 26 00:07:35.302: DHCPD: client's VPN is .

015092: *Feb 26 00:07:35.302: DHCPD: No option 125

015093: *Feb 26 00:07:35.302: DHCPD: forwarding BOOTREPLY to client 00ea.bd9b.2db8.

015094: *Feb 26 00:07:35.302: DHCPD: Forwarding reply on numbered intf

015095: *Feb 26 00:07:35.302: DHCPD: Option 125 not present in the msg.

015096: *Feb 26 00:07:35.302: DHCPD: Clearing unwanted ARP entries for multiple helpers

015097: *Feb 26 00:07:35.303: DHCPD: src nbma addr as zero

015098: *Feb 26 00:07:35.303: DHCPD: creating ARP entry (1.1.2.13, 00ea.bd9b.2db8, vrf Campus).

015099: *Feb 26 00:07:35.303: DHCPD: egress Interfce Vlan1022

015100: *Feb 26 00:07:35.303: DHCPD: unicasting BOOTREPLY to client 00ea.bd9b.2db8 (1.1.2.13).

015101: *Feb 26 00:07:35.303: DHCP_SNOOPING: received new DHCP packet from input interface (Vlan1022)

015102: *Feb 26 00:07:35.303: No rate limit check because pak is routed by this box

015103: *Feb 26 00:07:35.304: DHCP_SNOOPING: process new DHCP packet, message type: DHCPACK, input interface: Vl1022, MAC da: 00ea.bd9b.2db8, MAC sa: 0000.0c9f.f45d, IP da: 1.1.2.13, IP sa: 1.1.2.1, DHCP ciaddr: 0.0.0.0, DHCP yiaddr: 1.1.2.13, DHCP siaddr: 0.0.0.0, DHCP giaddr: 1.1.2.1, DHCP chaddr: 00ea.bd9b.2db8, efp_id: 374734848, vlan_id: 1022

- DHCP Bindings: Once the Fabric Edge node receives the packet from the Border node, it sends the packet back to the client.

- As per the DHCP protocol, the client can now request the offered IP address by sending a DHCP Request packet.

- Just like Step 2, the FE uses DHCP Snooping to add its RLOC (Circuit ID and Remote ID) in Option 82, which identifies the port, line card, and RLOC the request is coming from. It also sets the giaddr to the Anycast SVI address. Using DHCP Relay, the request is forwarded to the Border.

- The DHCP server replies to the Border node with a reply addressed to the Anycast SVI.

- The Border node inspects the packet for Option 82 to find out which RLOC it needs to forward the packet to. In other words, it uses the Remote ID in Option 82 to forward the packet to the Fabric Edge node.

- The client now has an IP address.
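The binary dump of the relay information option shown in the DHCP Relay step can be decoded with a few lines of Python. This is a sketch that assumes the byte layout implied by the debug output and the explanation above: suboption 1 (Circuit ID) carries VLAN, module, and port, and suboption 2 (Remote ID) carries the LISP instance ID followed by an address-family byte and the RLOC IPv4 address. The function name is hypothetical.

```python
import ipaddress
from typing import Dict

# Dump copied from the DHCP_SNOOPING debug output in the DHCP Relay step.
DUMP = (
    "0x52 0x14 0x1 0x6 0x0 0x4 0x3 0xFE 0x4 0x3 "
    "0x2 0xA 0x3 0x8 0x0 0x10 0x3 0x1 0xC0 0xA8 0x3 0x62"
)


def decode_option82(dump: str) -> Dict[str, object]:
    """Decode the Option 82 dump into VLAN, module, port, instance ID, and RLOC."""
    data = bytes(int(token, 16) for token in dump.split())
    if data[0] != 0x52 or data[1] != len(data) - 2:
        raise ValueError("not a well-formed Option 82 dump")

    # Walk the suboptions: each is <code> <length> <value...>.
    subopts: Dict[int, bytes] = {}
    i = 2
    while i < len(data):
        code, length = data[i], data[i + 1]
        subopts[code] = data[i + 2 : i + 2 + length]
        i += 2 + length

    circuit_id, remote_id = subopts[1], subopts[2]
    return {
        # Circuit ID value: <type> <len> <vlan hi> <vlan lo> <module> <port>
        "vlan": int.from_bytes(circuit_id[2:4], "big"),
        "module": circuit_id[4],
        "port": circuit_id[5],
        # Remote ID value: <type> <len> <3-byte instance ID> <addr family> <IPv4 RLOC>
        "instance_id": int.from_bytes(remote_id[2:5], "big"),
        "rloc": str(ipaddress.IPv4Address(remote_id[6:10])),
    }


print(decode_option82(DUMP))
# {'vlan': 1022, 'module': 4, 'port': 3, 'instance_id': 4099, 'rloc': '192.168.3.98'}
```

The decoded values match the debug output: the request arrived on Gi4/0/3 in VLAN 1022, and the Border can return the reply to the edge whose RLOC is 192.168.3.98.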

Wired and Wireless Host Onboarding

Once the DHCP flow is operational, the next step is to understand how clients are on-boarded onto the network. We will take a quick look at the Fabric design before getting into the details of the host on-boarding steps.

As clients connect to the network, they go through the following steps for host on-boarding:

Figure 9: Host On-boarding flow

| Term | Description |
|---|---|
| Host Registration | On-boarding the types of clients on the network (wired, wireless, and access points). Think of APs as special wired clients. This is where the hosts are registered to the control plane nodes. |
| Host Resolution | A method for clients to get address resolution for a client on another Fabric Edge. |
| External Connectivity | When clients are communicating with destinations outside of the Fabric. |
| East-West Traffic | Clients are communicating within the Fabric. After host on-boarding, the devices (hosts) are learned by the Control Plane and by both Fabric Edges. |
| Host Mobility | Leveraging wired IP host mobility functionality. |

Host Registration

As clients start connecting to the network, the first step is Host Registration, which registers the host with the control plane (map-server).

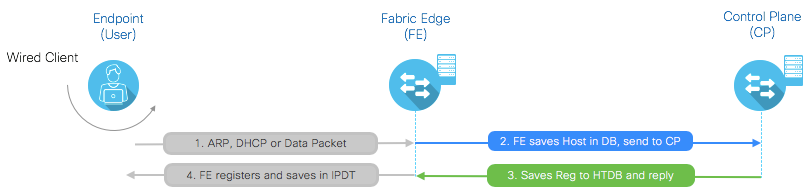

Figure 10: Host Registration

The steps are explained in more detail below:

- ARP, DHCP or Data Packet: Once the client connects to the Fabric Edge (FE), the FE detects the device as soon as the device starts sending traffic. This could be any type of traffic, e.g. ARP, DHCP, or any data packet.

FE1#show mac address

1021 0013.a91f.b2b0 DYNAMIC Te1/0/23

Note: If you don’t see the MAC address entry, then it’s a SILENT HOST.

FE2050#show ip arp vrf BruEsc

Protocol Address Age (min) Hardware Addr Type Interface

Internet 192.168.1.1 - 0000.0c9f.f45c ARPA Vlan1021

Internet 192.168.1.8 4 001e.f635.5341 ARPA Vlan1021

FE2050#show ip cef vrf BruEsc adjacency vlan 1021 192.168.1.8 detail

IPv4 CEF is enabled for distributed and running

VRF BruEsc

15 prefixes (15/0 fwd/non-fwd)

Table id 0x2

Database epoch: 1 (15 entries at this epoch)

192.168.1.8/32, epoch 1, flags [attached, subtree context]

SC owned,sourced: LISP local EID -

SC inherited: LISP cfg dyn-EID - LISP configured dynamic-EID

LISP EID attributes: localEID Yes, c-dynEID Yes, d-dynEID Yes

SC owned,sourced: LISP generalised SMR - [disabled, not inheriting, 0xFFA756E668 locks: 1]

Adj source: IP adj out of Vlan1021, addr 192.168.1.8 FF9A925960

Dependent covered prefix type adjfib, cover 192.168.1.0/24

2 IPL sources [no flags]

nexthop 192.168.1.8 Vlan1021

- 1021 - VLAN

- 0013.a91f.b2b0 - Source MAC address of the client.

- Fabric Edge device registration: Because the FE has now seen the device, it saves the host information in its local database and also sends the registration message to the CP (map-server).

FE1#show arp vrf Campus

Protocol Address Age (min) Hardware Addr Type Interface

Internet 10.2.1.99 0 0013.a91f.b2b0 ARPA Vlan1021

- 10.2.1.99 - IP address assigned to the client

- Vlan1021 - VLAN assigned to the client.

- CP Saves Registration: The control plane node registers and maintains an entry for every client IP address that connects to the network, since all lookups are performed against the control plane first and the RIB second.

The control plane registers the IP address in the host tracking database (HTDB) and then sends the reply back to the Fabric Edge (FE).

CP#show lisp site instance-id 4099

Site Name Last Up Who Last Inst EID Prefix

Register Registered ID

site_sjc never no -- 4099 10.2.1.0/24

3d23h yes# 10.2.120.1 4099 10.2.1.99/32

-

10.2.120.1 – FE1 RLOC

-

4099 – Instance ID, which identifies the table that the switch (FE) populates locally

-

10.2.1.99 – EID
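When many clients are registered, it can help to turn the registration table into structured records. The following is a minimal sketch in Python, assuming the whitespace-separated layout shown in the output above; the names `SiteEntry` and `parse_lisp_site` are hypothetical, and real device output may wrap columns differently.

```python
from dataclasses import dataclass
from typing import List, Optional

# Sample copied from the CP output above (column headers omitted).
SAMPLE = """\
site_sjc never no -- 4099 10.2.1.0/24
3d23h yes# 10.2.120.1 4099 10.2.1.99/32
"""


@dataclass
class SiteEntry:
    site: str
    last_register: str
    up: bool
    registered_by: Optional[str]  # RLOC of the registering FE, None if never registered
    instance_id: int
    eid_prefix: str


def parse_lisp_site(text: str) -> List[SiteEntry]:
    """Parse data rows of the flattened 'show lisp site' output."""
    entries: List[SiteEntry] = []
    site = ""
    for line in text.splitlines():
        tokens = line.split()
        # Data rows have 5 fields, or 6 when the site name is present.
        if len(tokens) not in (5, 6):
            continue
        # Data rows end with "<instance-id> <prefix/len>"; skip anything else.
        if "/" not in tokens[-1] or not tokens[-2].isdigit():
            continue
        if len(tokens) == 6:
            site, tokens = tokens[0], tokens[1:]
        last, up, who, iid, prefix = tokens
        entries.append(
            SiteEntry(site, last, up.startswith("yes"),
                      None if who == "--" else who, int(iid), prefix)
        )
    return entries


for entry in parse_lisp_site(SAMPLE):
    print(entry.eid_prefix, "registered by", entry.registered_by)
```

Rows without a site name inherit the most recent one, which mirrors how the table groups host routes under the site's subnet.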

The show mac address-table and show arp vrf Campus commands above work the same way in any traditional network design. What differentiates a fabric from a non-fabric network is the device tracking database, which is used to check whether a device is still present on the network or has disconnected. Once a device disconnects and is cleared from the device tracking database, it is removed from all the other tables. The device tracking database is also used for assurance.

FE1#show device-tracking database

Network Layer Address Link Layer Address Interface vlan

ARP 10.2.1.99 0013.a91f.b2b0 Te1/0/23 1021

Note: The Fabric Edge can learn the IP address from ARP, DHCP, or data packets. If the device tracking entry is missing, check whether the client received an IP address.

FE2050#show mac ad int gi 1/0/12

1021 001e.f635.5341 DYNAMIC Gi1/0/12

FE2050#show ip arp vrf BruEsc

Protocol Address Age (min) Hardware Addr Type Interface

Internet 192.168.1.1 - 0000.0c9f.f45c ARPA Vlan1021

Internet 192.168.1.8 4 001e.f635.5341 ARPA Vlan1021

FE2050#show device-tracking database

Binding Table has 2 entries, 1 dynamic

Codes: L - Local, S - Static, ND - Neighbor Discovery, ARP - Address Resolution Protocol,

DH4 - IPv4 DHCP, DH6 - IPv6 DHCP

, PKT - Other Packet, API - API created

Preflevel flags (prlvl):

0001:MAC and LLA match 0002:Orig trunk 0004:Orig access

0008:Orig trusted trunk 0010:Orig trusted access 0020:DHCP assigned

0040:Cga authenticated 0080:Cert authenticated 0100:Statically assigned

Network Layer Address Link Layer Address Interface vlan prlvl age state Time left

DH4 192.168.1.8 001e.f635.5341 Gi1/0/12 1021 0025 3mn REACHABLE 115s try 0(6334s)

L 192.168.1.1 0000.0c9f.f45c Vl1021 1021 0100 2623mn REACHABLE

-

10.2.1.99 – IP address assigned to the client

-

1021 – VLAN assigned to the client.
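The prlvl column in the longer device-tracking output above can be read against the "Preflevel flags" legend. The sketch below assumes the column is a hexadecimal bitmask, which the legend suggests (the values step from 0008 to 0010); the function name `decode_prlvl` is hypothetical.

```python
from typing import List

# Flag values and names copied from the "Preflevel flags (prlvl)" legend.
PRLVL_FLAGS = {
    0x0001: "MAC and LLA match",
    0x0002: "Orig trunk",
    0x0004: "Orig access",
    0x0008: "Orig trusted trunk",
    0x0010: "Orig trusted access",
    0x0020: "DHCP assigned",
    0x0040: "Cga authenticated",
    0x0080: "Cert authenticated",
    0x0100: "Statically assigned",
}


def decode_prlvl(prlvl: str) -> List[str]:
    """Return the legend names for every bit set in a prlvl value.

    Assumption: prlvl is printed in hexadecimal.
    """
    value = int(prlvl, 16)
    return [name for bit, name in PRLVL_FLAGS.items() if value & bit]


print(decode_prlvl("0025"))  # the DH4 entry for 192.168.1.8
print(decode_prlvl("0100"))  # the local gateway entry 192.168.1.1
```

Under that assumption, 0025 breaks down as 0020 + 0004 + 0001: a DHCP-assigned address seen on an access port with matching MAC and link-layer address.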

-

Fabric Edge (FE) Registration: In the last step, the FE registers the client and saves it in the IP device tracking database.

Step 1 and Step 2 are the same as in any traditional network design. What differentiates a fabric from a non-fabric network is the device tracking database, which is used to check whether a device is still present on the network or has disconnected. Once a device disconnects and is cleared from the device tracking database, it is removed from all the other tables. The device tracking database is also used for assurance.

FE1#show device-tracking database

Network Layer Address Link Layer Address Interface vlan

ARP 10.2.1.99 0013.a91f.b2b0 Te1/0/23 1021

Note: The Fabric Edge can learn the IP address from ARP, DHCP, or data packets. If the device tracking entry is missing, check whether the client received an IP address.

-

10.2.1.99 – IP address assigned to the client

-

1021 – VLAN assigned to the client.

Additionally, we can see the LISP control sessions on the FE:

FE1#show ip lisp instance-id 4099 database

LISP ETR IPv4 Mapping Database for EID-table vrf Campus (IID 4099)

LSBs: 0x1 Entries total 3, no-route 0, inactive 0

10.2.1.99/32, dynamic-eid 10_2_1_0-Campus, locator-set rloc_021

Locator Pri/Wgt Source State

10.2.120.1 10/10 cfg-intf site-self, reachable

-

10.2.1.99 – IP address assigned to the client

-

1021 – VLAN assigned to the client.

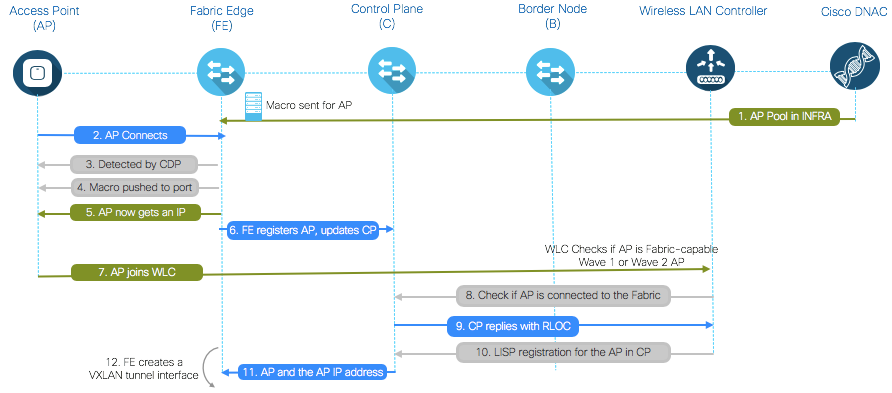

Host Onboarding – Access Point

Today, the same Wireless LAN Controller (WLC) can serve both fabric and non-fabric deployments; that is, a single WLC can have one set of SSIDs in the fabric and another set outside it. This lets customers plan their migration to the fabric without installing a separate WLC for each type of deployment.

Because the same WLC can be part of both fabric and non-fabric deployments, checks are needed when Access Points (APs) connect to the FEs, for example whether the AP is part of the fabric. APs in a fabric are therefore treated as special wired clients. ISE profiling can be used to dynamically profile an access point and place it in the AP VLAN (IP pool). Alternatively, when AP onboarding is configured through Cisco DNAC, the FE ports where the APs connect can be placed in an Access Point IP pool that maps to a no-authentication mode. This is all handled by the underlay network.

In addition, the system pushes macros to the switch port to identify the APs as they connect to the network.

Let’s go through the step-by-step AP on-boarding process.

-

The admin configures the AP pool in Cisco DNA Center in INFRA_VN. Cisco DNA Center pre-provisions a configuration macro on all the FEs

-

AP is plugged in and powers up.

-

FE discovers it’s an AP via CDP and applies the macro to assign the switch port to the right VLAN

-

Macro is pushed by the FE (to provision AP)

-

AP gets an IP address via DHCP in the overlay

-

Fabric Edge registers AP’s IP address and MAC (EID) and updates the Control Plane (CP)

-

AP learns WLC’s IP and joins using traditional methods. Fabric AP joins in Local mode

-

WLC checks if AP is fabric-capable (Wave 2 or Wave 1 APs)

-

If AP is supported, WLC queries the CP to know if AP is connected to Fabric. Control Plane (CP) replies to WLC with RLOC. This means AP is attached to Fabric and will be shown as “Fabric enabled”

-

WLC does a L2 LISP registration for the AP in CP (a.k.a. AP “special” secure client registration). This is used to pass important metadata information from WLC to the FE

-

In response to this proxy registration, the Control Plane (CP) notifies the Fabric Edge and passes the metadata received from the WLC (a flag that says it’s an AP, and the AP IP address)

-

Fabric Edge processes the information, learns it’s an AP, and creates a VXLAN tunnel interface to the specified IP (optimization: the switch side is ready for clients to join).

Host Resolution

Once the clients are on-boarded to the fabric and have an IP address, their entries would be in the Fabric Edge and the control plane nodes.

FE1#show ip lisp map-cache instance-id 4099

LISP IPv4 Mapping Cache for EID-table vrf Campus (IID 4099), 5 entries

10.2.1.89/32, uptime: 00:05:16, expires: 23:57:59, via map-reply, complete

Locator Uptime State Pri/Wgt

10.2.120.3 00:04:23 up 10/10

CP#show lisp site instance-id 4099

Site Name Last Up Who Last Inst EID Prefix

Register Registered ID

site_sjc never no -- 4099 10.2.1.0/24

3d23h yes# 10.2.120.1 4099 10.2.1.99/32

3d23h yes# 10.2.120.3 4099 10.2.1.89/32

If your network has silent hosts, you will not see their MAC addresses. The easiest solution is Layer 2 flooding, which can be configured from Cisco DNAC. Under Host On-boarding there is a check box that, once enabled, sets up the required configuration for L2 flooding. It can be set per IP pool, and ARP messages are then flooded within that IP pool.

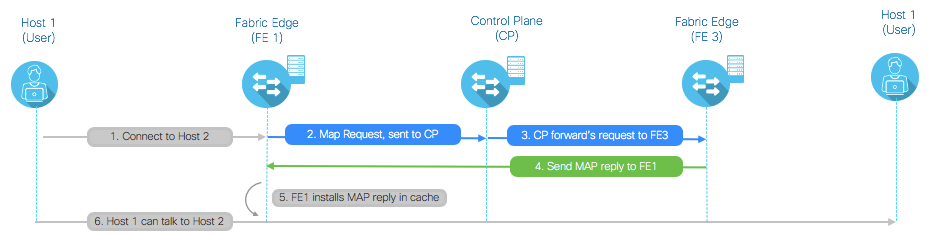

How clients get resolution for the other client on another FE

-

A client wants to establish communication with Host2

-

There is no local map-cache entry for Host2 on FE1, so a Map-Request is sent to the CP (Map-Resolver)

-

CP (Map Server) forwards the original Map-Request to the FE3(ETR) that last registered the EID subnet

-

FE3(ETR) sends to the FE1(ITR) a Map-Reply containing the requested mapping information

-

FE1(ITR) installs the mapping information in its local map-cache

-

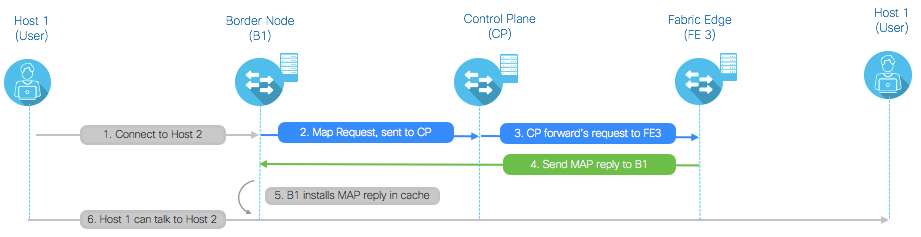

If a host needs to go through the border nodes, the border node performs the same lookup against the control plane, just like an FE.

Figure 13: Wired Host Resolution across border node
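The resolution steps above can be sketched as a toy model. This is a simplification under stated assumptions, not how LISP is implemented: the class names `ControlPlane` and `FabricEdge` are hypothetical, and the Map-Request forwarding to the ETR and the Map-Reply from it are collapsed into a single lookup on the control plane.

```python
from typing import Dict, Optional


class ControlPlane:
    """Toy map-server/map-resolver: EID -> RLOC of the FE that registered it."""

    def __init__(self) -> None:
        self.registrations: Dict[str, str] = {}

    def register(self, eid: str, rloc: str) -> None:
        self.registrations[eid] = rloc

    def resolve(self, eid: str) -> Optional[str]:
        return self.registrations.get(eid)


class FabricEdge:
    def __init__(self, name: str, rloc: str, cp: ControlPlane) -> None:
        self.name, self.rloc, self.cp = name, rloc, cp
        self.map_cache: Dict[str, str] = {}
        self.cp_queries = 0  # counts Map-Requests sent to the control plane

    def attach_host(self, eid: str) -> None:
        """Host onboarding: register the EID with this FE's RLOC."""
        self.cp.register(eid, self.rloc)

    def lookup(self, eid: str) -> Optional[str]:
        """Return the RLOC for an EID, asking the CP only on a cache miss."""
        if eid in self.map_cache:
            return self.map_cache[eid]
        self.cp_queries += 1
        rloc = self.cp.resolve(eid)
        if rloc is not None:
            self.map_cache[eid] = rloc  # install the mapping locally
        return rloc


cp = ControlPlane()
fe1 = FabricEdge("FE1", "10.2.120.1", cp)
fe3 = FabricEdge("FE3", "10.2.120.3", cp)
fe1.attach_host("10.2.1.99")
fe3.attach_host("10.2.1.89")

first = fe1.lookup("10.2.1.89")   # cache miss, resolved through the CP
second = fe1.lookup("10.2.1.89")  # served from FE1's map-cache
print(first, second, fe1.cp_queries)
```

The point of the sketch is the caching behavior: only the first packet toward a new destination involves the control plane, and later lookups are answered from the local map-cache.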

External Connectivity

This covers the scenarios where the hosts are communicating with destinations outside of the network. The look-up is similar to the previous scenarios.

Let’s take a look at a few things. First, verify in the control plane that there is a route to send traffic to outside destinations.

CP#show lisp site instance-id 4099

Site Name Last Up Who Last Inst EID Prefix

Register Registered ID

site_sjc never no -- 4099 10.2.1.0/24

3d23h yes# 10.2.120.3 4099 10.2.1.89/32

At the Fabric Edge, verify that you have a route inside LISP for the destination. In the fabric this is called the proxy ETR, which means the traffic is sent to the border. Any destination outside of the fabric is sent to the border node.

FE3#show ip lisp map-cache instance-id 4099

LISP IPv4 Mapping Cache for EID-table vrf Campus (IID 4099), 5 entries

32.0.0.0/4, uptime: 00:01:30, expires: 00:00:21, via map-reply, forward-native

Encapsulating to proxy ETR

Finally, at the border node, the address would be learned already and the border knows to send the packet to the destination address.

BDR#show ip lisp map-cache instance-id 4099

LISP IPv4 Mapping Cache for EID-table vrf Campus (IID 4099), 5 entries

10.2.1.89/32, uptime: 00:05:16, expires: 23:57:59, via map-reply, complete

Locator Uptime State Pri/Wgt

10.2.120.3 00:04:23 up 10/10
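The way a map-cache entry is selected for a destination can be illustrated with a longest-prefix match. The sketch below uses Python's standard `ipaddress` module; the combined cache is a hypothetical illustration that merges the two kinds of entries shown above, and the destination 40.1.1.1 is an arbitrary example address.

```python
import ipaddress
from typing import Dict, Optional, Tuple

# Hypothetical cache combining the entry types seen in the outputs above.
MAP_CACHE: Dict[str, str] = {
    "10.2.1.89/32": "10.2.120.3",        # fabric host behind FE3
    "32.0.0.0/4": "proxy-ETR (border)",  # outside destinations go to the border
}


def longest_match(dest: str, cache: Dict[str, str]) -> Optional[Tuple[str, str]]:
    """Return (prefix, action) of the most specific entry covering dest."""
    addr = ipaddress.ip_address(dest)
    best = None
    for prefix, action in cache.items():
        net = ipaddress.ip_network(prefix)
        if addr in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, action)
    return None if best is None else (str(best[0]), best[1])


print(longest_match("40.1.1.1", MAP_CACHE))   # covered by 32.0.0.0/4
print(longest_match("10.2.1.89", MAP_CACHE))  # exact /32 host entry
print(longest_match("10.2.1.50", MAP_CACHE))  # no entry, would need a Map-Request
```

A destination with no covering entry returns `None`, which corresponds to the case where the FE has to query the control plane first.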

East-West Traffic

In this case, the hosts communicate within the fabric. After host onboarding, the hosts are learned by the control plane and by both Fabric Edges.

First, let’s take a look at the control plane

CP#show lisp site instance-id 4099

Site Name Last Up Who Last Inst EID Prefix

Register Registered ID

site_sjc never no -- 4099 10.2.1.0/24

2d05h yes# 10.2.120.1 4099 10.2.1.99/32

2d02h yes# 10.2.120.2 4099 10.2.1.89/32

4d02h yes# 10.2.120.2 4099 10.2.1.88/32

If any host IP is missing, run through the host registration flow described above.

On the Fabric Edge, verify that the host entries are in the local table, which confirms that the clients are connected to that FE.

Entry in FE 1 for Host 10.2.1.99

FE1#show ip lisp instance-id 4099 database

10.2.1.99/32, locator-set rloc_021a8c01-5c45-4529-addd-b0d626971a5f

Locator Pri/Wgt Source State

10.2.120.1 10/10 cfg-intf site-self, reachable

Entries in FE 3 for Host 10.2.1.89

FE3#show ip lisp instance-id 4099 database

10.2.1.89/32, locator-set rloc_021a8c01-5c45-4529-addd-b0d626971a5f

Locator Pri/Wgt Source State

10.2.120.3 10/10 cfg-intf site-self, reachable

Next, verify that each Fabric Edge has a route to the remote host.

Entry in FE 1 for remote Host 10.2.1.89

FE1#show ip lisp map-cache instance-id 4099

10.2.1.89/32, uptime: 00:00:06, expires: 23:59:53, via map-reply, complete

Locator Uptime State Pri/Wgt

10.2.120.3 00:00:06 up 10/10

Entries in FE 3 for remote Host 10.2.1.99

FE3#show ip lisp map-cache instance-id 4099

10.2.1.99/32, uptime: 00:00:06, expires: 23:59:53, via map-reply, complete

Locator Uptime State Pri/Wgt

10.2.120.1 00:00:06 up 10/10
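The east-west checks above amount to confirming that each FE's map-cache points at the RLOC of the FE that actually holds the host locally. A minimal sketch of that cross-check follows, using the values from the outputs above; the data layout and the function name `stale_entries` are hypothetical.

```python
from typing import Dict, List, Tuple

# FE name -> {locally attached EID: that FE's own RLOC} (from "database" output).
LOCAL_DB: Dict[str, Dict[str, str]] = {
    "FE1": {"10.2.1.99/32": "10.2.120.1"},
    "FE3": {"10.2.1.89/32": "10.2.120.3"},
}

# FE name -> {remote EID: RLOC it will encapsulate to} (from "map-cache" output).
EW_MAP_CACHE: Dict[str, Dict[str, str]] = {
    "FE1": {"10.2.1.89/32": "10.2.120.3"},
    "FE3": {"10.2.1.99/32": "10.2.120.1"},
}


def stale_entries(
    local_db: Dict[str, Dict[str, str]],
    map_cache: Dict[str, Dict[str, str]],
) -> List[Tuple[str, str, str, str]]:
    """Return (fe, eid, cached_rloc, actual_rloc) for every mismatch."""
    owner: Dict[str, str] = {}
    for eids in local_db.values():
        owner.update(eids)
    problems = []
    for fe, cache in map_cache.items():
        for eid, rloc in cache.items():
            if eid in owner and owner[eid] != rloc:
                problems.append((fe, eid, rloc, owner[eid]))
    return problems


print(stale_entries(LOCAL_DB, EW_MAP_CACHE))
```

An empty result means both directions of the flow agree with the local databases; any tuple returned identifies the FE whose cache still points at the wrong RLOC.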

Host Mobility

In this scenario, we go through the use case of a host moving from one Fabric Edge to another. Examples include special devices in hospitals or industrial companies, where changing the IP address of a host is not feasible and hosts can move from one switch port to another.

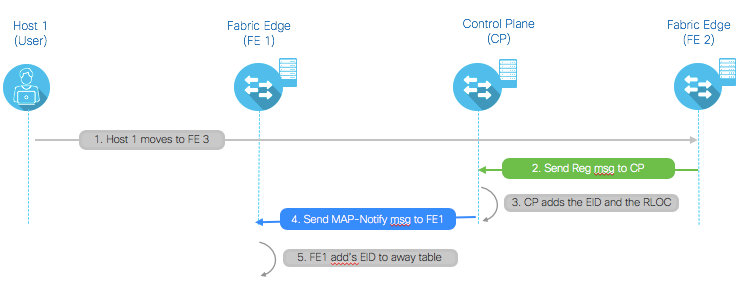

Figure 15: Host Mobility

-

Host1 moves from FE1 to FE2

-

FE2 saves the host info in its local database and sends the registration message to the control plane

-

The Map-Server adds to the database the entry for the specific EID, associated to the RLOCs

-

The Map-Server sends a Map-Notify message to FE1, the last FE that registered the 10.2.1.99/32 prefix

-

FE1 receives the Map-Notify message from the CP and adds a route associated with the 10.2.1.99 EID to the away table

Once FE 1 receives the Map-Notify from the CP, the host (which has now moved to FE 2) is placed in the away table, where it stays for 4 hours.

Let's take a look at the local tables on FE 1 and FE 2. Because the client has moved to FE 2, FE 1 places it in the away table, while FE 2 now has the entry for the client.

FE1#show ip lisp away instance-id 4099

LISP Away Table for router lisp 0 (Campus) IID 4099

Entries: 1

Prefix Producer

10.2.1.99/32 local EID

FE2#show ip lisp instance-id 4099 database

10.2.1.99/32, locator-set rloc_021a8c01-5c45-4529-addd-b0d626971a5f

Locator Pri/Wgt Source State

10.2.120.2 10/10 cfg-intf site-self, reachable

Now suppose another host (Host 2) on another Fabric Edge (FE 3) tries to connect to Host 1, which has moved to FE 2. The entry on FE 3 still points to FE 1 for Host 1, because the map-cache on FE 3 has not been updated.

Figure 16: Host Mobility – Cache update

Map Request Message flow

-

The LISP process on FE1, on receiving the first data packet, creates a control plane message (SMR) and sends it to the remote FE3 (ITR) that generated the packet

-

FE3 sends a new Map-Request for the desired destination (10.2.1.99) to the Map-Server

-

The Map-Request is forwarded by the Map-Server to FE2, which last registered the /32 EID address

-

FE2 replies with updated mapping information to the remote FE3

-

FE3 updates the information in its map-cache, adding the specific /32 EID address associated to the xTRs deployed in the East site (10.2.120.1 and 10.2.120.2).
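The mobility and cache-update flows above can be walked through with a small simulation. This is a toy model under stated assumptions: the classes `MapServer` and `Edge` are hypothetical, the 4-hour away timer is not modeled, and the Map-Request and Map-Reply exchange triggered by the SMR is collapsed into a direct read of the map-server database.

```python
from typing import Dict, Optional, Set


class MapServer:
    def __init__(self) -> None:
        self.db: Dict[str, str] = {}

    def register(self, eid: str, rloc: str) -> Optional[str]:
        """Store the new RLOC; return the previous RLOC if the host moved."""
        previous = self.db.get(eid)
        self.db[eid] = rloc
        return previous if previous not in (None, rloc) else None


class Edge:
    def __init__(self, rloc: str, ms: MapServer, fabric: Dict[str, "Edge"]) -> None:
        self.rloc, self.ms, self.fabric = rloc, ms, fabric
        self.local: Set[str] = set()   # locally attached EIDs
        self.away: Set[str] = set()    # EIDs that moved to another FE
        self.map_cache: Dict[str, str] = {}
        fabric[rloc] = self

    def host_attached(self, eid: str) -> None:
        """Register the host; the old FE (if any) receives a Map-Notify."""
        self.local.add(eid)
        self.away.discard(eid)
        old_rloc = self.ms.register(eid, self.rloc)
        if old_rloc is not None:
            self.fabric[old_rloc].on_map_notify(eid)

    def on_map_notify(self, eid: str) -> None:
        self.local.discard(eid)
        self.away.add(eid)

    def on_data_packet(self, eid: str, sender: "Edge") -> bool:
        """Return True if the host is local; send an SMR if it moved away."""
        if eid in self.local:
            return True
        if eid in self.away:
            sender.on_smr(eid)
        return False

    def on_smr(self, eid: str) -> None:
        # Simplification: refresh straight from the map-server database.
        self.map_cache[eid] = self.ms.db[eid]


ms = MapServer()
fabric: Dict[str, Edge] = {}
fe1 = Edge("10.2.120.1", ms, fabric)
fe2 = Edge("10.2.120.2", ms, fabric)
fe3 = Edge("10.2.120.3", ms, fabric)
HOST1 = "10.2.1.99/32"

fe1.host_attached(HOST1)
fe3.map_cache[HOST1] = ms.db[HOST1]  # FE3 learned Host1 while it was behind FE1
fe2.host_attached(HOST1)             # Host1 moves from FE1 to FE2
stale = fe3.map_cache[HOST1]         # FE3 still points at FE1
delivered = fe1.on_data_packet(HOST1, sender=fe3)  # triggers the SMR
print(stale, "->", fe3.map_cache[HOST1])
```

The sketch shows why the remote cache is corrected lazily: FE3 only learns about the move when it actually sends traffic to the old location.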

Appendix A: Troubleshooting

The following sections provide troubleshooting information for identifying and resolving problems that you may experience when you deploy the Cisco SDA solution.

Cisco DNAC Services involved:

-

orchestration-engine-service

-

spf-device-manager-service

-

spf-service-manager-service

-

apic-em-network-programmer-service

DHCP Troubleshooting

-

FE# show ip dhcp snooping binding

-

FE# debug ip dhcp snooping ?

Host-Onboarding Troubleshooting

-

FE1# show arp vrf Campus

-

FE1# show device-tracking database

-

FE1# show ip lisp instance-id Number database

-

CP# show lisp site instance-id Number
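The Number placeholder in the commands above stands for the LISP instance ID, and the VRF name changes per virtual network. A small helper can fill in both so the same checklist is reused for each VN. This is only a sketch; the function name `onboarding_checks` is hypothetical and the command strings are the ones listed above.

```python
from typing import List, Tuple


def onboarding_checks(vrf: str, instance_id: int) -> List[Tuple[str, str]]:
    """Return (device role, command) pairs for the host-onboarding checks."""
    return [
        ("FE", f"show arp vrf {vrf}"),
        ("FE", "show device-tracking database"),
        ("FE", f"show ip lisp instance-id {instance_id} database"),
        ("CP", f"show lisp site instance-id {instance_id}"),
    ]


for role, command in onboarding_checks("Campus", 4099):
    print(f"{role}# {command}")
```

The order follows the onboarding flow: ARP entry first, then device tracking, then the local LISP database, and finally the registration on the control plane.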

Verification for registration message

-

debug lisp control-plane map-server-registration FE1 RLOC

-

debug lisp forwarding eligibility-process-switching

Cisco IOS Troubleshooting

Some of the Cisco IOS show and debug commands that help you understand and troubleshoot ISE operations are:

-

show running-config aaa - Displays AAA configuration in the running configuration.

-

show authentication sessions/show access-session – Displays current authentication manager sessions like interface, mac, method, session-id & status.

-

show dot1x all – Displays 802.1x status for all interfaces

-

show aaa servers – Displays status and number of packets that are sent to and received from all AAA servers.

-

show device-sensor cache all – Displays Protocol, Name/Len/Value of TLV for the cached entries

-

show device-tracking database – Displays entries in the ip device tracking table.

-

show platform hardware fed switch active fwd-asic resource tcam utilization – Displays device-specific hardware resource usage information

-

show platform software fed switch active acl usage – Displays number of ACL entries used for different ACL feature types

Cisco SDA Troubleshooting

Refer to the following links for details on SDA troubleshooting:

https://learningnetwork.cisco.com/docs/DOC-35366

Lesson 1: SDA Fabric Overview and Authentication with Cisco DNA Center

Lesson 2: After Authentication with ISE

Lesson 3: How Wireless On-Boarding Works in Cisco DNA Center

Lesson 4: Cisco DNA Center Wireless Client On-Boarding to SDA Fabric

Lesson 5: Verification on Control Plane in Cisco DNA Center

Appendix B: Cisco Software-Defined Access Fabric Design

This section provides an overview of SD-Access design components, covering the underlay, the overlay, and the fabric. It is an optional section; for design guidance, refer to the Cisco Validated Design (CVD).

Underlay network design

The underlay network is defined by the physical switches and routers that are used to deploy the SD-Access network. All network elements of the underlay must establish IP connectivity via the use of a routing protocol. Instead of using arbitrary network topologies and protocols, the underlay implementation for SD-Access uses a well-designed Layer 3 foundation inclusive of the campus edge switches (also known as a routed access design), to ensure performance, scalability, and high availability of the network.

The Cisco DNA Center LAN Automation feature is an alternative to manual underlay deployments for new networks and uses an IS-IS routed access design. Though there are many alternative routing protocols, the IS-IS selection offers operational advantages such as neighbor establishment without IP protocol dependencies, peering capability using loopback addresses, and agnostic treatment of IPv4, IPv6, and non-IP traffic. In the latest versions of Cisco DNA Center, LAN Automation uses Cisco Network Plug and Play features to deploy both unicast and multicast routing configuration in the underlay, aiding traffic delivery efficiency for services built on top.

In SD-Access, the underlay switches support the end-user physical connectivity. However, end-user subnets are not part of the underlay network—they are part of a programmable Layer 2 or Layer 3 overlay network.

Having a well-designed underlay network will ensure the stability, performance, and efficient utilization of the SD-Access network. Automation for deploying the underlay is available using Cisco DNA Center.

Underlay networks for the fabric have the following design requirements:

-

Layer 3 to the access design—The use of a Layer 3 routed network for the fabric provides the highest level of availability without the need to use loop avoidance protocols or interface bundling techniques.

-

Increase default MTU—The VXLAN header adds 50 and optionally 54 bytes of encapsulation overhead. Some Ethernet switches support a maximum transmission unit (MTU) of 9216 while others may have an MTU of 9196 or smaller. Given that server MTUs typically go up to 9,000 bytes, enabling a network wide MTU of 9100 ensures that Ethernet jumbo frames can be transported without any fragmentation inside and outside of the fabric.

-

Use point-to-point links—Point-to-point links provide the quickest convergence times because they eliminate the need to wait for the upper layer protocol timeouts typical of more complex topologies. Combining point-to-point links with the recommended physical topology design provides fast convergence after a link failure. The fast convergence is a benefit of quick link failure detection triggering immediate use of alternate topology entries preexisting in the routing and forwarding table. Implement the point-to-point links using optical technology and not copper, because optical interfaces offer the fastest failure detection times to improve convergence. Bidirectional Forwarding Detection should be used to enhance fault detection and convergence characteristics.

-

Dedicated IGP process for the fabric—The underlay network of the fabric only requires IP reachability from the fabric edge to the border node. In a fabric deployment, a single area IGP design can be implemented with a dedicated IGP process implemented at the SD-Access fabric. Address space used for links inside the fabric does not need to be advertised outside of the fabric and can be reused across multiple fabrics.

-

Loopback propagation—The loopback addresses assigned to the underlay devices need to propagate outside of the fabric in order to establish connectivity to infrastructure services such as fabric control plane nodes, DNS, DHCP, and AAA. As a best practice, use /32 host masks. Apply tags to the host routes as they are introduced into the network. Reference the tags to redistribute and propagate only the tagged loopback routes. This is an easy way to selectively propagate routes outside of the fabric and avoid maintaining prefix lists.
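The MTU requirement in the list above is simple arithmetic: the endpoint payload plus the VXLAN overhead has to fit within the underlay MTU. The sketch below works through the numbers quoted in the text (50 bytes, optionally 54, a 9,000-byte server MTU, and a 9100-byte fabric MTU); the function names are hypothetical.

```python
VXLAN_OVERHEAD = 50      # bytes added by the VXLAN encapsulation
VXLAN_OVERHEAD_MAX = 54  # the optional larger case mentioned in the text


def headroom(payload_mtu: int, fabric_mtu: int, overhead: int) -> int:
    """Bytes left over after encapsulation; negative means fragmentation or drops."""
    return fabric_mtu - (payload_mtu + overhead)


def fits(payload_mtu: int, fabric_mtu: int, overhead: int = VXLAN_OVERHEAD_MAX) -> bool:
    return headroom(payload_mtu, fabric_mtu, overhead) >= 0


# Jumbo frames from a server with a 9000-byte MTU over a 9100-byte underlay.
print(headroom(9000, 9100, VXLAN_OVERHEAD))      # 50
print(headroom(9000, 9100, VXLAN_OVERHEAD_MAX))  # 46
# A standard 1500-byte packet over an underlay left at the 1500-byte default.
print(fits(1500, 1500))                          # False
```

The last case is the reason the default MTU has to be raised: even ordinary 1500-byte client traffic no longer fits once the encapsulation header is added.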

Overlay network design

An overlay network is created on top of the underlay to create a virtualized network. The data plane traffic and control plane signaling is contained within each virtualized network, maintaining isolation among the networks in addition to independence from the underlay network. The SD-Access fabric implements virtualization by encapsulating user traffic in overlay networks using IP packets that are sourced and terminated at the boundaries of the fabric. The fabric boundaries include borders for ingress and egress to a fabric, fabric edge switches for clients, and fabric APs for wireless clients. The details of the encapsulation and fabric device roles are covered in later sections. Overlay networks can run across all or a subset of the underlay network devices. Multiple overlay networks can run across the same underlay network to support multitenancy through virtualization. Each overlay network appears as a virtual routing and forwarding (VRF) instance for connectivity to external networks. You preserve the overlay separation when extending the networks outside of the fabric by using VRF-lite, maintaining the network separation within devices connected to the fabric and also on the links between VRF-enabled devices.

In earlier versions of SD-Access, IPv4 multicast forwarding within the overlay operates using headend replication of multicast packets into the fabric for both wired and wireless endpoints. Recent versions of SD-Access use underlay multicast capabilities, configured manually or by using LAN Automation, for more efficient delivery of traffic to interested edge switches versus using headend replication. The multicast is encapsulated to interested fabric edge switches, which de-encapsulate the multicast, replicating the multicast to all the interested receivers on the switch. If the receiver is a wireless client, the multicast (just like unicast) is encapsulated by the fabric edge towards the AP with the multicast receiver. The multicast source can exist either within the overlay or outside of the fabric. For PIM deployments, the multicast clients in the overlay use an RP at the fabric border that is part of the overlay endpoint address space. Cisco DNA Center configures the required multicast protocol support.

Note: The SD-Access 1.2 solution supports both PIM SM and PIM SSM, and the border node must include the IP multicast rendezvous point (RP) configuration. For available multicast underlay optimizations, see the release notes.

Multicast support in the underlay network enables Layer 2 flooding capability in overlay networks. SD-Access now supports overlay flooding of ARP frames, broadcast frames, and link-local multicast frames, which addresses some specific connectivity needs for silent hosts, requiring receipt of traffic before communicating, and mDNS services.

Layer 2 overlays

Layer 2 overlays emulate a LAN segment to transport Layer 2 frames, carrying a single subnet over the Layer 3 underlay. Layer 2 overlays are useful in emulating physical topologies and depending on the design can be subject to Layer 2 flooding. SD-Access supports transport of IP frames without Layer 2 flooding of broadcast and unknown multicast traffic. Without broadcasts from the fabric edge, ARP functions by using the fabric control plane for MAC-to-IP address table lookups.

Figure 6: Layer 2 overlay—connectivity logically switched

Layer 3 overlays

Layer 3 overlays abstract IP-based connectivity from physical connectivity and allow multiple IP networks as part of each virtual network. Overlapping IP address space across different Layer 3 overlays is outside the scope of validation, and should be approached with the awareness that the network virtualization must be preserved for communications outside of the fabric, while addressing any IP address conflicts.

Note: The SD-Access 1.2 solution supports IPv4 overlays. Overlapping IP addresses are not supported for wireless clients on the same WLC. For IPv6 overlays, see the release notes for your software version to verify support.

Figure 7:Layer 3 overlay—connectivity logically routed

Fabric design

In the SD-Access fabric, the overlay networks are used for transporting user traffic within the fabric. The fabric encapsulation also carries scalable group information that can be used for traffic segmentation inside the overlay. The following design considerations apply when deploying virtual networks:

-

Virtualize as needed for network requirements—Segmentation using SGTs allows for simple-to-manage group-based policies and enables granular data plane isolation between groups of endpoints within a virtualized network, accommodating many network policy requirements. Using SGTs also enables scalable deployment of policy, without having to do cumbersome updates for policies based on IP addresses, which can be prone to breakage. VNs support the transport of SGTs for group segmentation. Use virtual networks when requirements dictate isolation at both the data plane and control plane. For those cases, if communication is required between different virtual networks, you use an external firewall or other device to enable inter-VN communication. You can choose either or both options to match your requirements.

-

Reduce subnets and simplify DHCP management—In the overlay, IP subnets can be stretched across the fabric without flooding issues that can happen on large Layer 2 networks. Use fewer subnets and DHCP scopes for simpler IP addressing and DHCP scope management. Subnets are sized according to the services that they support versus being constrained by the location of a gateway. Enabling optional broadcast flooding features can limit the subnet size based on the additional bandwidth and endpoint processing requirements for the traffic mix within a specific deployment.

-

Avoid overlapping IP subnets—Different overlay networks can support overlapping address space, but be aware that most deployments require shared services across all VNs and other inter-VN communication. Avoid overlapping address space so that the additional operational complexity of adding a network address translation device is not required for shared services and inter-VN communication.
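The recommendation to avoid overlapping subnets can be checked before IP pools are assigned to virtual networks. The following sketch uses Python's standard `ipaddress` module; the VN names Campus and BruEsc and their pools come from the examples earlier in this document, while the Guest VN and its 10.2.0.0/16 pool are hypothetical and added only to produce an overlap.

```python
import ipaddress
from itertools import combinations
from typing import Dict, List, Tuple

POOLS: Dict[str, List[str]] = {
    "Campus": ["10.2.1.0/24"],
    "BruEsc": ["192.168.1.0/24"],
    "Guest": ["10.2.0.0/16"],  # hypothetical pool that overlaps Campus
}


def find_overlaps(pools: Dict[str, List[str]]) -> List[Tuple[str, str, str, str]]:
    """Return (vn_a, pool_a, vn_b, pool_b) for every pair of overlapping pools."""
    flat = [
        (vn, ipaddress.ip_network(prefix))
        for vn, prefixes in pools.items()
        for prefix in prefixes
    ]
    return [
        (vn_a, str(net_a), vn_b, str(net_b))
        for (vn_a, net_a), (vn_b, net_b) in combinations(flat, 2)
        if net_a.overlaps(net_b)
    ]


for vn_a, pool_a, vn_b, pool_b in find_overlaps(POOLS):
    print(f"{vn_a} {pool_a} overlaps {vn_b} {pool_b}")
```

Any pair reported here would need address translation for shared services or inter-VN communication, which is the operational complexity the guidance above is trying to avoid.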

Fabric control plane design

The fabric control plane contains the database used to identify endpoint location for the fabric elements. This is a central and critical function for the fabric to operate. A control plane that is overloaded and slow to respond results in application traffic loss on initial packets. If the fabric control plane is down, endpoints inside the fabric fail to establish communication to remote endpoints that do not already exist in the local database.

Cisco DNA Center automates the configuration of the control plane functionality. For redundancy, you should deploy two control plane nodes to ensure high availability of the fabric, as a result of each node containing a duplicate copy of control plane information. The devices supporting the control plane should be chosen to support the HTDB, CPU, and memory needs for an organization based on fabric endpoints.

If the chosen border nodes support the anticipated endpoint scale requirements for a fabric, it is logical to collocate the fabric control plane functionality with the border nodes. However, if the collocated option is not possible (example: Nexus 7700 borders lacking the control plane node function or endpoint scale requirements exceeding the platform capabilities), then you can add devices dedicated to this functionality, such as physical routers or virtual routers at a fabric site.

Fabric border design

The fabric border design is dependent on how the fabric is connected to the outside network. VNs inside the fabric should map to VRF-Lite instances outside the fabric. Depending on where shared services are placed in the network the border design will have to be adapted. For more information, see "End-to-End Virtualization Considerations," later in this guide.

Larger distributed campus deployments with local site services are possible when interconnected with a transit control plane. You can search for guidance for this topic after these new roles are a generally available feature.
