ASR9000/XR Feature Order of Operation


on 04-13-2013 06:35 AM

Introduction

This document provides an overview of the order in which features and forwarding are applied in the ASR9000 microcode (ucode) architecture.

After reading this document, you will better understand when packets are subject to a particular classification or action.

You will also better understand how packets-per-second (PPS) rates are affected when certain features are enabled.

Feature order of operation

The following picture gives a (simplified) overview of how packets are handled from ingress to egress.

INGRESS

The following main building blocks make up the ingress feature order of operation:

I/F Classification

When a packet is received the TCAM is queried to see what (sub)interface this packet belongs to.

The flexible VLAN matching rules apply here; you can read more about them in the EVC architecture document.

Once we know which (sub)interface the packet belongs to, we derive the uIDB (micro IDB, Interface Descriptor Block), and from it we know which features need to be applied.

ACL Classification

If an ACL is applied to the interface, a set of keys is built and sent to the TCAM to find out whether this packet is permitted or denied by the configured ACL. Only the result is returned; no action is taken yet.

QOS Classification

If a QOS policy is applied, the TCAM is queried with the set of keys to find out whether a particular class-map is matched. The result returned is effectively an identifier for the class of the policy-map, so we know what functionality to apply to this packet when the QOS actions are executed.

NOTE:

As you can see, enabling either ACL or QOS results in a TCAM lookup for a match. Enabling only an ACL results in a performance degradation of X percent; enabling only QOS results in a degradation of Y percent (X is not equal to Y).

Enabling both ACL and QOS does not give you an X+Y PPS degradation, because the TCAM lookups for both are done in parallel, which saves that overhead. Two separate TCAM lookups are not done.
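To illustrate why ACL and QOS can share one key build and one lookup, here is a minimal software model of ternary (value/mask) matching. It assumes a 32-bit key built from the destination address only. The entries, class names and the `classify` helper are hypothetical. A real TCAM compares all entries at once in hardware; the loops here only reproduce the result, not the parallelism.

```python
from typing import List, Tuple

# A ternary entry: (value, mask, result). Bits set in the mask must match;
# cleared mask bits are "don't care", as in a TCAM.
Entry = Tuple[int, int, str]


def ternary_lookup(table: List[Entry], key: int, default: str) -> str:
    """Return the result of the first entry whose cared-about bits match."""
    for value, mask, result in table:
        if (key & mask) == (value & mask):
            return result
    return default


def classify(key: int, acl: List[Entry], qos: List[Entry]) -> Tuple[str, str]:
    """Build the key once and return both the ACL verdict and the QOS class.

    Only the results are returned; the ACL and QOS actions run in later
    stages of the pipeline.
    """
    acl_verdict = ternary_lookup(acl, key, default="deny")  # implicit deny
    qos_class = ternary_lookup(qos, key, default="class-default")
    return acl_verdict, qos_class


# Hypothetical example: permit 10.0.0.0/8 and put 10.1.0.0/16 in a voice class.
ACL = [(0x0A000000, 0xFF000000, "permit")]
QOS = [(0x0A010000, 0xFFFF0000, "class-voice")]
```

For example, `classify(0x0A010203, ACL, QOS)` (10.1.2.3) yields the ACL verdict and the QOS class from the same key.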

BGP flowspec, OpenFlow and CLI-based PBR use the PBR lookup, which logically happens between the ACL and QOS lookups.

Forwarding lookup

The ingress forwarding lookup is rather simple. We don't traverse the whole forwarding tree here; we only try to find out which egress interface, or better put, which egress LC is to be used for forwarding. The reason is that the 9k has a distributed architecture, so the ingress linecard has to do some form of FIB lookup to find the egress LC.

Also, when bundles are in play and the members are spread over different linecards, we need to compute the hash to identify the member that the egress LC will choose. That way, we forward the packet only to the linecard that serves the member that will actually transmit it.
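The bundle case above can be sketched as follows. This assumes a 5-tuple flow key and uses CRC32 as a stand-in hash. The ASR9000 uses its own hash algorithm, and the member names and linecard mapping here are hypothetical. The point is that the ingress side computes the same deterministic member choice the egress side would, so only one linecard receives the packet.

```python
import zlib
from typing import Dict, List, Tuple

# Hypothetical 5-tuple: (src, dst, src_port, dst_port, protocol).
Flow = Tuple[str, str, int, int, str]


def pick_member(flow: Flow, members: List[str]) -> str:
    """Pick a bundle member for a flow. CRC32 is a stand-in hash, not the
    ASR9000 algorithm; what matters is that it is deterministic per flow."""
    digest = zlib.crc32("|".join(map(str, flow)).encode())
    return members[digest % len(members)]


def egress_lc_for(flow: Flow, members: List[str], member_lc: Dict[str, str]) -> str:
    """Ingress side: resolve the member first, then send the packet over the
    fabric only to the linecard that owns that member."""
    return member_lc[pick_member(flow, members)]


MEMBERS = ["Gi0/1/0/0", "Gi0/2/0/0"]  # hypothetical bundle members
MEMBER_LC = {"Gi0/1/0/0": "0/1/CPU0", "Gi0/2/0/0": "0/2/CPU0"}
```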

NOTE:

If uRPF is enabled, a full ingress FIB lookup (the same as on egress) is done. This is intensive, so uRPF has a relatively larger impact on PPS.
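The extra work uRPF adds can be sketched as a second longest-prefix match, this time on the source address. This is a minimal strict-mode model. The route table and interface names are hypothetical, and the linear scan only stands in for the hardware FIB.

```python
import ipaddress
from typing import Dict, Optional


def lpm(fib: Dict[str, str], addr: str) -> Optional[str]:
    """Longest-prefix match: interface of the most specific covering route."""
    ip = ipaddress.ip_address(addr)
    best_len, best_iface = -1, None
    for prefix, iface in fib.items():
        net = ipaddress.ip_network(prefix)
        if ip in net and net.prefixlen > best_len:
            best_len, best_iface = net.prefixlen, iface
    return best_iface


def urpf_strict_ok(fib: Dict[str, str], src: str, in_iface: str) -> bool:
    """Strict-mode uRPF: the route back to the source must point out of the
    interface the packet arrived on. This is an extra full lookup, on the
    source address, in the ingress pipeline."""
    return lpm(fib, src) == in_iface


FIB = {  # hypothetical routes
    "10.0.0.0/8": "Gi0/0/0/0",
    "10.1.0.0/16": "Gi0/0/0/1",
    "0.0.0.0/0": "Gi0/0/0/2",
}
```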

IFIB Lookup

In this stage we determine whether the packet is for us and, if so, where it needs to go. For instance, ARP and NetFlow are handled by the LC, but BGP and OSPF are handled by the RSP. The iFIB lookup tells us where the packet needs to go internally when we are the recipient.
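As a rough illustration, the iFIB decision can be thought of as a dispatch table keyed on what the packet is. The protocol entries below come from the examples in the text. The string keys, the `Dest` enum and `ifib_lookup` are hypothetical and do not reflect the real iFIB key format.

```python
from enum import Enum
from typing import Optional


class Dest(Enum):
    LC_CPU = "LC CPU"
    RSP_CPU = "RSP CPU (via fabric)"


# Examples taken from the text; keys are illustrative only.
IFIB = {
    "arp": Dest.LC_CPU,
    "netflow": Dest.LC_CPU,
    "bgp": Dest.RSP_CPU,
    "ospf": Dest.RSP_CPU,
}


def ifib_lookup(for_us: bool, protocol: str) -> Optional[Dest]:
    """Return where a locally destined packet is delivered internally.

    None means the packet is not for us (keep forwarding) or, in this toy
    table, that there is no matching entry.
    """
    if not for_us:
        return None
    return IFIB.get(protocol)
```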

Security ACL action

If the packet is subject to an ACL deny, for instance, it is dropped during this stage.

QOS Policer action

Any policer action, as well as marking, is done during this stage. QOS shaping and buffering are done by the traffic manager, which is a separate stage in the NPU.
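A generic way to picture "police and mark in one stage" is a single-rate, two-color token bucket that re-marks conforming packets and drops the rest. This is a sketch under that assumption, not the NPU's implementation. The rate, burst and DSCP values are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Policer:
    """Single-rate, two-color token-bucket policer with conform re-marking.

    A generic sketch. Exceeding packets are dropped here and never reach
    the later queuing/shaping stage.
    """
    rate_bps: float                     # committed rate in bits per second
    burst_bytes: float                  # bucket depth in bytes
    conform_dscp: Optional[int] = None  # re-mark value; None leaves DSCP as is
    tokens: float = field(default=0.0, init=False)
    last: float = field(default=0.0, init=False)

    def __post_init__(self) -> None:
        self.tokens = self.burst_bytes  # start with a full bucket

    def police(self, now: float, size: int, dscp: int) -> Optional[int]:
        """Return the (possibly re-marked) DSCP, or None if dropped."""
        refill = (now - self.last) * self.rate_bps / 8  # bits -> bytes
        self.tokens = min(self.burst_bytes, self.tokens + refill)
        self.last = now
        if size <= self.tokens:
            self.tokens -= size
            return dscp if self.conform_dscp is None else self.conform_dscp
        return None
```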

L2 rewrite

During the L2 rewrite in the ingress stage, we apply the fabric header.

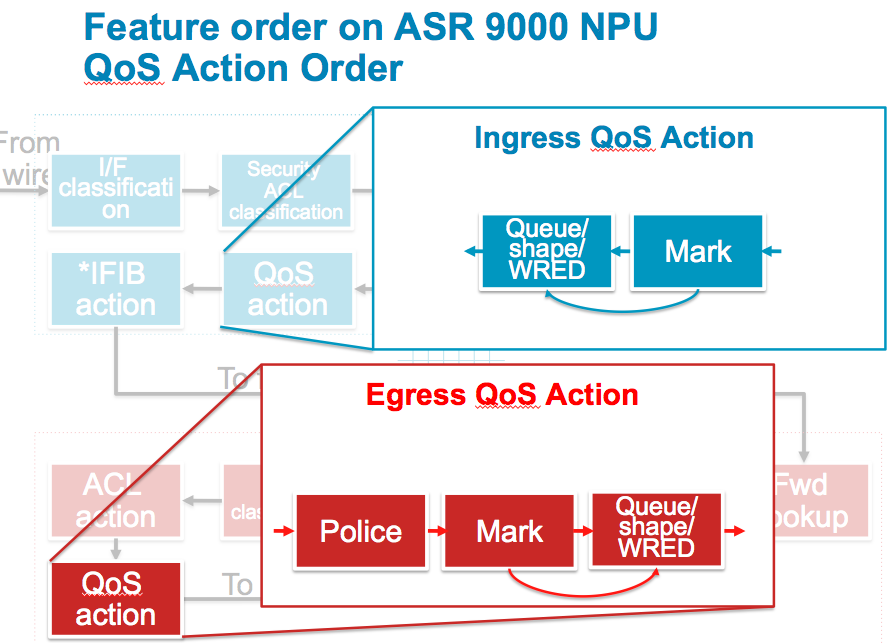

QOS Action

Any other QOS actions, such as queuing, shaping and WRED, are executed during this stage. Note that packets that were previously policed or dropped by an ACL are no longer seen in this stage. It is important to understand that dropped packets are removed from the pipeline. If you think there are counter discrepancies, the packets may have been processed or dropped by an earlier feature.

See also the QOS action notes section below for more details on the different QOS actions.
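The counter effect described above can be modeled as an ordered list of stages, where a drop ends the packet's trip. This is a minimal sketch. The stages, drop reasons and packet fields are hypothetical and simplified from the ingress order described in this document.

```python
from collections import Counter
from typing import Any, Callable, Dict, List, Optional, Tuple

Packet = Dict[str, Any]
# A stage returns None to pass the packet on, or a drop-reason string.
Stage = Tuple[str, Callable[[Packet], Optional[str]]]


def run_pipeline(packets: List[Packet], stages: List[Stage]) -> Counter:
    """Run packets through the stages in order and count what each stage sees.

    A dropped packet leaves the pipeline at once, so later stages never
    count it. This is why per-feature counters need not add up.
    """
    counters: Counter = Counter()
    for pkt in packets:
        for name, stage in stages:
            counters[f"{name}_seen"] += 1
            reason = stage(pkt)
            if reason is not None:
                counters[reason] += 1
                break
    return counters


# Hypothetical stages in the ingress order described above.
STAGES: List[Stage] = [
    ("acl", lambda p: "ACL_DENY" if p["src"] == "10.0.0.66" else None),
    ("policer", lambda p: "POLICE_DROP" if p["size"] > 1000 else None),
    ("queuing", lambda p: None),
]
```

With three packets where one is ACL-denied and one is policed, the queuing stage sees only one packet.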

iFIB action

Either the packet is forwarded over the fabric or handed over to the LC CPU here. If the packet is destined for the RSP CPU, it is forwarded over the fabric to the RSP destination. Remember that the RSP is a linecard from a fabric point of view. The RSP requests fabric access to inject packets in the same fashion an LC would.

General

The ingress linecard also decrements the TTL of the packet. If the TTL expires, the packet is punted to the LC CPU to generate an ICMP TTL-exceeded message. The number of packets we can punt is subject to LPTS policing.
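The TTL handling above can be sketched as a decrement plus a rate-limited punt. This sketch assumes a simple fixed one-second punt budget as a stand-in for LPTS policing. LPTS itself is more elaborate, and `PuntLimiter` and `ingress_ttl` are hypothetical names.

```python
from typing import Tuple


class PuntLimiter:
    """Hypothetical per-second punt budget standing in for LPTS policing."""

    def __init__(self, pps: int) -> None:
        self.pps = pps
        self.window = -1  # current one-second window
        self.sent = 0

    def allow(self, now: float) -> bool:
        second = int(now)
        if second != self.window:  # a new one-second window starts
            self.window, self.sent = second, 0
        if self.sent < self.pps:
            self.sent += 1
            return True
        return False


def ingress_ttl(ttl: int, now: float, limiter: PuntLimiter) -> Tuple[str, int]:
    """Decrement the TTL on ingress. Expired packets are punted to the LC CPU
    (for ICMP TTL-exceeded) only while the punt budget allows."""
    if ttl <= 1:
        return ("punt" if limiter.allow(now) else "drop"), 0
    return "forward", ttl - 1
```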

EGRESS

The following section describes the forwarding stages of the egress linecard.

Forwarding lookup

The egress linecard does a full FIB lookup down to the leaf to get the rewrite string. This full FIB lookup provides everything needed to route the packet, such as the egress interface, encapsulation, and adjacency information.
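Putting the ingress and egress forwarding lookups side by side, the two-stage split can be sketched as two tables: the ingress one only resolves the egress LC, and the egress one resolves the full adjacency. All prefixes, linecards, interfaces and rewrite strings below are hypothetical. The linear longest-prefix scan only stands in for the hardware FIB.

```python
import ipaddress
from dataclasses import dataclass
from typing import Dict, TypeVar

V = TypeVar("V")


@dataclass(frozen=True)
class Adjacency:
    interface: str
    next_hop: str
    rewrite: str  # L2 rewrite string (placeholder text here)


def longest_match(table: Dict[str, V], dst: str) -> V:
    """Return the value of the most specific prefix covering dst."""
    ip = ipaddress.ip_address(dst)
    best_len, best = -1, None
    for prefix, value in table.items():
        net = ipaddress.ip_network(prefix)
        if ip in net and net.prefixlen > best_len:
            best_len, best = net.prefixlen, value
    if best is None:
        raise LookupError(f"no route to {dst}")
    return best


def ingress_stage(ingress_fib: Dict[str, str], dst: str) -> str:
    """Ingress LC: resolve only which egress LC gets the packet."""
    return longest_match(ingress_fib, dst)


def egress_stage(egress_fib: Dict[str, Adjacency], dst: str) -> Adjacency:
    """Egress LC: full lookup down to the leaf, yielding the adjacency."""
    return longest_match(egress_fib, dst)


# Hypothetical tables.
INGRESS_FIB = {"192.0.2.0/24": "0/3/CPU0", "0.0.0.0/0": "0/1/CPU0"}
EGRESS_FIB_LC3 = {
    "192.0.2.0/24": Adjacency("Gi0/3/0/1", "198.51.100.1", "<dmac><smac><vlan>"),
}
```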

L2 rewrite

With the information from the forwarding lookup, we can rewrite the packet headers, applying the Ethernet header, VLANs, etc.

Security ACL classification

During the FIB lookup we determined the egress interface, so we know which features are applied.

As on ingress, we build the keys and query the TCAM for an ACL result based on the ACL applied to the egress interface.

QOS Classification

As on ingress, the TCAM is queried to identify the QOS class-map that this packet matches in the egress QOS policy.

The same note as above applies to applying ACL and/or QOS to the interface.

ACL action

The ACL action is executed.

QOS action

The QOS action is executed; see the QOS action notes section below for more details on the QOS actions.

General

MTU verification is done only on the egress linecard, to determine whether fragmentation is needed.

The egress linecard punts packets that need fragmentation to its own LC CPU.

Remember that fragmentation is done in software, and no features are applied to these packets on the egress linecard.

The number of packets that can be punted for fragmentation is bound by the NPU and limited by LPTS.
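Since fragmentation happens in software on the LC CPU, it may help to see the arithmetic involved. This is a minimal sketch that assumes plain IPv4 with a 20-byte header and no options, and it ignores the DF bit. The helper names are hypothetical.

```python
import math


def needs_fragmentation(total_len: int, mtu: int) -> bool:
    """Egress MTU check: the IPv4 packet (header + payload) exceeds the MTU."""
    return total_len > mtu


def fragment_count(total_len: int, mtu: int, ihl: int = 20) -> int:
    """Number of IPv4 fragments the LC CPU would have to build.

    Every fragment except the last carries a payload that is a multiple of
    8 bytes, because the fragment offset field counts 8-byte units.
    """
    if not needs_fragmentation(total_len, mtu):
        return 1
    per_fragment = (mtu - ihl) // 8 * 8
    return math.ceil((total_len - ihl) / per_fragment)
```

For example, a 4000-byte packet leaving a 1500-byte MTU interface carries 3980 bytes of payload at up to 1480 bytes per fragment, so it becomes three fragments.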

QOS action notes

The above picture expands on the different QOS actions that can be taken and how they intersect. What is important to understand from this picture is that WRED (which is precedence- or DSCP-aware) uses the rewritten values from the packet headers.

Almost the same applies on egress: shaping, queuing and WRED reuse the rewritten PREC/DSCP values from either ingress or the egress policer/remarker.

Packets that were subject to a police drop action will not be seen anymore by the shaper.
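To make the point about rewritten values concrete, here is a sketch of a classic WRED drop curve selected by DSCP. The profiles, thresholds and the fallback to the DSCP 0 profile are hypothetical. The point is only that the profile is chosen from the DSCP the packet carries after any re-marking.

```python
from typing import Dict, Tuple

# Hypothetical WRED profiles keyed by DSCP:
# (min_threshold, max_threshold, max_drop_probability), thresholds in
# packets of average queue depth.
PROFILES: Dict[int, Tuple[int, int, float]] = {
    46: (80, 100, 0.05),  # EF: start dropping late and rarely
    0: (20, 60, 0.20),    # best effort: start dropping early
}


def wred_drop_probability(avg_depth: float, dscp: int) -> float:
    """Classic WRED curve for the profile selected by the packet's current
    DSCP, i.e. the value left after any ingress or egress re-marking.
    Unknown DSCP values fall back to the DSCP 0 profile."""
    min_th, max_th, max_p = PROFILES.get(dscp, PROFILES[0])
    if avg_depth < min_th:
        return 0.0
    if avg_depth >= max_th:
        return 1.0
    return max_p * (avg_depth - min_th) / (max_th - min_th)
```

A packet that arrived as EF (46) but was re-marked to 0 by a policer is judged against the best-effort curve, not the EF one.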

Conclusion

From the above a few conclusions can be drawn:

1) Ingress processing is more intense than egress processing.

2) Enabling more features will affect the total PPS performance of the NPU, as more cycles are needed in a particular stage of forwarding.

3) Packets denied on ingress will not go over the fabric.

4) Packets permitted by QOS or ACL on ingress go over the fabric and might be dropped by an egress ACL or QOS policy. This means fabric bandwidth can be wasted if there is a very restrictive ACL or a low-rate policer/shaper on egress and a high input rate on ingress. (Note that oversubscription results in back pressure, so the ingress FIA will hold the packets back from the fabric; see the head-of-line blocking discussion in the ASR9000 QOS architecture document.)

5) Packets that are remarked on ingress will have their QOS (or ACL) applied based on the REWRITTEN values from ingress.

6) Netflow will account for ACL denied packets (not specifically called out in the feature order of operation)

7) WRED uses the rewritten values from ingress or egress policers

8) The ingress linecard is not aware of features configured on the egress linecard (remember the qos priority propagation from the ASR9000 quality of service architecture document)

Related Information

Xander Thuijs CCIE #6775

Principal Engineer ASR9000


hi piyush, afaik you can't exclude that. what you are referring to is a functionality called flexible netflow, whereby you can use some directives as to what is subject to sampling; this is in the works. for now any packet is subject to sampling.

The flag I was referring to is the forwarding status, you see something like this:

| IPV4SrcAddr | IPV4DstAddr | L4SrcPort | L4DestPort | IPV4Prot | IPV4TOS | InputInterface | ForwardStatus | ByteCount | PacketCount | Dir |
|---|---|---|---|---|---|---|---|---|---|---|
| 17.1.1.2 | 99.99.99.99 | 3357 | 3357 | udp | 0 | Gi0/1/0/39 | DropACLDeny | 415396224 | 8654088 | Ing |
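If you export such records as text, picking out the ACL-denied flows is a simple filter on the forwarding status. This is a minimal sketch. It assumes one whitespace-separated record per line in the column order of the output above, with no column value containing spaces. `FlowRecord` and the helpers are hypothetical, not part of any IOS XR tool.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FlowRecord:
    src: str
    dst: str
    sport: int
    dport: int
    proto: str
    tos: int
    in_iface: str
    forward_status: str
    byte_count: int
    packet_count: int
    direction: str


def parse_record(line: str) -> FlowRecord:
    """Parse one record in the column order shown above."""
    f = line.split()
    return FlowRecord(f[0], f[1], int(f[2]), int(f[3]), f[4], int(f[5]),
                      f[6], f[7], int(f[8]), int(f[9]), f[10])


def acl_denied(records: List[FlowRecord]) -> List[FlowRecord]:
    """Flows dropped by an ACL deny that NetFlow still accounted for."""
    return [r for r in records if r.forward_status == "DropACLDeny"]
```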


Hello Xander,

Thanks for explaining order of operation mechanics so beautifully. I have two questions if you could please help answer.

a) In older platforms like the 7600, we had an internal mapping which used to put traffic into various hardware queues ("show interface queuing <interface name>"). So for example, if a peer router sent a BGP update with CS6, it used to fall into the respective priority queue and the packet was sent to the CPU.

I saw your Cisco Live video which discusses three queues: P1, P2 and best effort. Do we have some sort of internal mapping on the ASR9K as well, and is there a command to check/change it?

b) In the above order of operation, we have the L2 rewrite on the ingress line card, where the fabric header is attached. Is there a document explaining how this mechanism works? Can we see this header value on the ingress and egress line cards by some command, trace, etc.? Is there a table maintained for it?

Can we see some sort of adjacency pointers like we had on 7600 (seen in ELAM) ?

Thanks in advance.


hi amandes! thanks!:) got some answers for you!

a) on a9k the system injects with "inject to wire" which means that all locally generated traffic (except for icmp) is bypassing any features and gets ahead of everything for transmission. this is like ios's pak priority.

b) the fab header is not that interesting for us to see; it contains info for the fabric and FIAs to understand where the packet needs to go. there is no cmd or trace that can dump the packet on the fabric to see the fab hdr, but I don't think there is much use for it either. We can capture packets like ELAM via the NP packet capture facility (a doc on the support forum exists: "how to capture dropped or lost packets"), and there is more detail in Cisco Live session ID 2904, which I think we did in San Francisco (and some extra words in San Diego 2016).

We're also working on a more sophisticated packet tracer like the one the ASR1000 has, but that is still in the development stages.

cheers!

xander


On IOS XR, LPTS (Local Packet Transport System) takes care of classifying/policing the packets destined to the router. You can read about this in the BRKSPG-2904 slides from Cisco live in 2013/2014.

Before LPTS receives the packet, the NP will ensure that all control plane packets are classified on ingress as high priority and placed into the P1 ingress queue.

The purpose of the fabric header is to have the packet delivered to the desired egress NP. There are no commands or features that you could use to dump the fabric header of any given frame because there is really no need for that in any real life troubleshooting scenario. The adjacencies are a feature of the egress NP because asr9k is a two-stage forwarding platform. You can use the "sh adjacency" or "sh cef .... hardware ..." to see the SW and HW adjacency. This is also nicely explained in the same BRKSPG-2904 deck.

Hope this helps,

/Aleksandar


Hello Aleksandar,

Thanks for this valuable information.

Regards

Aman


Hello Xander,

Thanks a lot for your reply. Just another query that is making me very curious.

When an MPLS packet with a single IGP label hits the ingress line card and needs a SWAP operation, we look at the hardware entry on ingress to find:

1) Egress LC

2) NP on egress LC.

So question is to understand the order of operation:

1) Ingress LC does the forward lookup and then POPs the label.

2) Native IP packet is put into fabric headers and packet is exported to the EGRESS LC.

3) Egress LC imposes the label (as per MPLS SWAP) and sends the packet out.

Is this the correct order of operation? I am curious which LC imposes the new label, and whether the packet travels from the ingress LC to the egress LC as a native IP packet or as an MPLS packet.

Sorry for hijacking this post with an "MPLS query", but I feel this question of mine is again closely related to understanding the order of operation, just with MPLS packets.

Regards

Aman


hi aman, the label swap happens on the ingress LC. the forwarding entry on ingress will tell us what the label mapping is and that swap operation is and can be done right there. this makes sense since the outgoing label also provides info for the egress interface.

xander


Xander,

I truly appreciate such a prompt answer and your commitment to have people's technical queries answered.

Regards

Aman


Hello Xander,

Thank you for the well explained post and for all the awesome documentation you have written in this forum. A couple of questions if you don't mind:

a) At which point are packets failing the uRPF check dropped?

b) In case a packet will match an ACL drop rule, and fail the uRPF check too, which NP counter is going to be incremented? RSV_DROP_ACL_DENY or RSV_DROP_IPV4_URPF_CHK?

Thank you in advance,

Alessandro


hi alessandro! thank you :) some answers also:

a) the search stage finds out the (reverse) next hop and in resolve we will take the rpf action in case it is not a match, that is where the actual drop occurs.

b) the ACL check is done before the route lookup, so if a packet is subject to both drops, only the ACL deny will be seen. I also just tested this to make sure it matches, and indeed it does!

rpf only:

171 RSV_DROP_IPV4_URPF_CHK 112899 985

rpf and acl:

45 PARSE_ENET_RECEIVE_CNT 2113278 987

171 RSV_DROP_IPV4_URPF_CHK 129862 0

854 PUNT_ACL_DENY 104027 987

ps. the PUNT_ACL_DENY counter increments because the denied packet is now punted for ICMP unreachable generation.

cheers!

xander


Hello xander,

Do we have any numerical figures (percentage wise) on how bad of a performance impact uRPF would add to the NP?

Would it be reasonable to assume that uRPF practically halves the PPS rate, given that the whole system has to do the FIB lookup twice (once on the ingress NP to find the source match, and again on egress)?

James


hi james, for Typhoon it is nearly a 50% hit; for Tomahawk linecards it is a little less.

it is hard to give solid numbers, as one feature may have cost X and another feature may have cost Y, but together they are not necessarily X+Y.

cheers

xander


Hi Xander,

An NPU always serves traffic in both directions, ingress and egress, right?

So if it needs to perform several tasks in the pipeline in both directions and we make just one of these tasks more onerous, I would not expect it to decrease the overall performance by half.

Unless, however, the full lookup is very onerous relative to the overall NPU performance budget, or compared to the partial lookup or other tasks/features in the pipeline.

So for the 50% performance hit from uRPF to hold true, would the full lookup have to cost 60% of the performance budget, compared with 10% for the partial lookup?

And since Tomahawk is a better NPU, is the impact of the full lookup less than 60%?

Is the below true please?

If NPU needs to do:

WAN to Fabric: partial lookup(-10%)

Fabric to WAN: full lookup(-60%)

----------------

= line-rate performance

(because the NPU is built with a +70% performance budget so that it can perform at line rate (at a given packet size) doing just a simple IP lookup, no features)

If NPU needs to do uRPF on all WAN input traffic:

WAN to Fabric: full lookup(-60%) (extra 50% load resulting from full ingress lookup)

Fabric to WAN: full lookup(-60%)

----------------

= 50% line-rate performance

If NPU needs to do:

WAN to Fabric: ingress-feature(-5%) + partial lookup(-10%)

Fabric to WAN: egress-feature(-5%) + full lookup(-60%)

----------------

= 90% line-rate performance

If NPU needs to do:

WAN to Fabric: ingress-feature(-5%) + partial lookup(-60%)

Fabric to WAN: egress-feature(-5%) + full lookup(-60%)

----------------

= 40% line-rate performance

Thank you very much

adam
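The arithmetic in the post above can be reproduced in a few lines. This sketch uses only the hypothetical percentages from the post, not measured figures. `linear_model` encodes the post's reading, where each point of load over the 70-point budget costs one point of line rate. `proportional_model` is an alternative assumption, not from the thread, where throughput scales as budget divided by load. The two readings diverge for the heavier scenarios.

```python
from typing import Dict, List

BUDGET = 70  # the post's assumed NPU budget (% units) for line rate, no features


def linear_model(total: int, budget: int = BUDGET) -> float:
    """The post's reading: each % of load above the budget costs 1 % of line rate."""
    return 100 - max(0, total - budget)


def proportional_model(total: int, budget: int = BUDGET) -> float:
    """Alternative assumption: throughput scales as budget / load above the budget."""
    return 100 * min(1.0, budget / total)


# Per-direction costs from the post (% of NPU budget, hypothetical figures).
SCENARIOS: Dict[str, List[int]] = {
    "plain forwarding": [10, 60],
    "uRPF on WAN input": [60, 60],
    "features": [5, 10, 5, 60],
    "features + uRPF": [5, 60, 5, 60],
}

for name, costs in SCENARIOS.items():
    total = sum(costs)
    print(f"{name:18} load={total:3}  linear={linear_model(total):5.1f}%  "
          f"proportional={proportional_model(total):5.1f}%")
```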


Hello Xander,

Some further checks on the two-stage lookup. My understanding is that a packet (MPLS/IPv4) is looked up on the ingress LC and an NHID value is imposed on the packet on the ingress card, which facilitates the two-stage lookup. For example:

Ingress LC0 / A9K-2x100GE-SE/ Typhoon

Egress LC3 / A9K-MOD160-SE/Typhoon

1> Test route:

RP/0/RSP0/CPU0:ASR9006-B#show route 1.1.1.1/32

Thu Nov 17 18:09:43.838 UTC

Routing entry for 1.1.1.1/32

Known via "ospf MHORSFIE", distance 110, metric 20, type extern 2

Installed Nov 15 14:48:32.687 for 2d03h

Routing Descriptor Blocks

207.47.115.26, from 49.44.1.193, via GigabitEthernet0/3/0/1

Route metric is 20

No advertising protos.

2> When traffic arrives on ingress LC0/Typhoon, the CEF/hardware lookup is done and an NHID is imposed on the packet.

RP/0/RSP0/CPU0:ASR9006-B#show cef 1.1.1.1/32 location 0/0/cpu0

Thu Nov 17 18:07:07.314 UTC

1.1.1.1/32, version 9669, internal 0x1000001 0x0 (ptr 0x860e94a8) [1], 0x0 (0x860ac20c), 0xa20 (0x899ea060)

Updated Nov 16 23:07:12.980

remote adjacency to GigabitEthernet0/3/0/1

Prefix Len 32, traffic index 0, precedence n/a, priority 3

via 207.47.115.26/32, GigabitEthernet0/3/0/1, 4 dependencies, weight 0, class 0 [flags 0x0]

path-idx 0 NHID 0xc4 [0x886f1688 0x886f156c] >> NHID Imposed 0xc4

next hop 207.47.115.26/32

remote adjacency

local label 24034 labels imposed {ImplNull}

RP/0/RSP0/CPU0:ASR9006-B#show rib nhid

Thu Nov 17 18:02:31.907 UTC

| Nhid | NH Address | IF | Chkpt_id | Refcount |
|---|---|---|---|---|
| 0xc4 | 207.47.115.26 | 0xa000100 | 0x40002e78 | 265 |

3> Now egress LC3/Typhoon maintains a database, and the NHID value imposed on the ingress LC helps the egress LC choose the correct exit interface and next hop.

RP/0/RSP0/CPU0:ASR9006-B#show cef nhid location 0/3/cpu0 >> Egress LC

Thu Nov 17 18:13:03.765 UTC

| NHID | Interface name | If-handle | Next-hop |
|---|---|---|---|
| 0xc4 | GigabitEthernet0/3/0/1 | 0xa000100 | 207.47.115.26 |

This answers the two-stage lookup question. But what I am observing is that no Trident card maintains this NHID->next-hop database.

For example:

RP/0/RSP0/CPU0:ASR9006-B#show platform

Thu Nov 17 18:15:55.517 UTC

| Node | Type | State | Config State |
|---|---|---|---|
| 0/RSP0/CPU0 | A9K-RSP440-SE(Active) | IOS XR RUN | PWR,NSHUT,MON |
| 0/RSP1/CPU0 | A9K-RSP440-SE(Standby) | IOS XR RUN | PWR,NSHUT,MON |
| 0/0/CPU0 | A9K-2x100GE-SE | IOS XR RUN | PWR,NSHUT,MON |
| 0/1/CPU0 | A9K-40GE-B | IOS XR RUN | PWR,NSHUT,MON |
| 0/2/CPU0 | A9K-36x10GE-SE | IOS XR RUN | PWR,NSHUT,MON |
| 0/3/CPU0 | A9K-MOD160-SE | IOS XR RUN | PWR,NSHUT,MON |
| 0/3/0 | A9K-MPA-20X1GE | OK | PWR,NSHUT,MON |
| 0/3/1 | A9K-MPA-8X10GE | OK | PWR,NSHUT,MON |

RP/0/RSP0/CPU0:ASR9006-B#show cef nhid location 0/1/cpu0

Thu Nov 17 18:16:08.594 UTC

----- Nothing displayed -----

- Please help me understand whether the lookup and forwarding scheme is different on Trident compared to Typhoon/Tomahawk.

- What does an egress Trident LC do with the NHID header imposed by the ingress LC?

I hope I am able to clearly explain my question.

Regards

Aman


hi aman, in non-agg label situations, the ingress LC merely does a prefix lookup to find the egress LC.

the egress LC will do the label swap and everything for the transmission.

in the case of an agg label, the ingress LC will do the pop, do a prefix lookup and determine the egress LC identification.

trident and typhoon/tomahawk are no different in that regard, although the impact of an agg label on trident is larger (due to its capabilities) than it would be for TY/TH...

cheers!

xander
