Ask Me Anything- Migrating Existing Networks to Cisco ACI

11-11-2019 01:06 PM - last edited on 11-11-2019 01:10 PM by Hilda Arteaga

This topic is a chance to discuss the options for migrating from existing network designs to Cisco Application Centric Infrastructure (ACI). In this session the expert will answer questions that help clarify the design considerations associated with the different migration options.

In addition, Tuan will help clarify and expand on core Cisco ACI concepts such as Tenant, BD, EPG, Service Graph, L2Out and L3Out, among others.

To participate in this event, please use the button below to ask your questions.

Ask questions from Monday 11th to Friday 22nd of November, 2019

Tuan Nguyen is a technical instructor with over 20 years of experience as a consultant, systems engineer and Certified Cisco Systems Instructor. He is a Technical Instructor at Sunset Learning Institute and teaches courses across the routing and switching, security, service provider, Data Center and Voice curricula. Tuan specializes in Cisco routers and Cisco IOS. He has extensive knowledge of LAN and WAN technologies, including design, implementation and support of Cisco IP Unified Communications, IP Multicasting, MPLS, Frame Relay, Routing and Switching, Cisco ISP and Cisco Security. He is also proficient in interconnectivity, data communications, network analysis, baselining and troubleshooting, router configuration, multi-protocol routing, protocol analysis, security and firewall configuration. Prior to joining Sunset Learning Institute, he worked as a Senior Internetwork Engineer and Cisco technical instructor at TBN Consulting, providing technical and consulting services. He also worked as a Senior Network Engineer at the Defense Information Systems Agency (DISA), designing, developing, testing and evaluating Data Center and Networking technologies. Tuan holds a Bachelor of Science degree in Electrical Engineering from the University of Colorado at Denver. He holds several certifications: CCDA, CCDP, CCNAs, CCNPs, CCIP, CCSP, CCVP, CCSI and a CCIE R&S (#2135).

**Helpful votes encourage participation!**

Please be sure to rate the Answers to Questions

11-18-2019 04:28 PM

After doing some research: yes, the fabric policy model can be queried about an existing flow between two endpoints that have a default (open) contract between them, so you can determine which TCP or UDP ports are actually in use.

11-19-2019 08:25 AM

Thank you for looking into that question Tuan. Appreciate that!

I'll do some research to find out what policy model objects are involved.

Regards,

Simon

11-22-2019 06:19 AM

Hello Spreed,

You can find the answer to your question at the link below, as it is based on the syslog definition for contracts:

https://unofficialaciguide.com/2018/08/11/logging-acl-contract-permits-and-denies-with-aci/

11-14-2019 07:02 AM - edited 11-14-2019 07:19 AM

Hi there,

We are about to migrate from a traditional DC to an ACI fabric (network-centric model first), so I'm glad that I can get some advice from the experts.

There would be an L2 trunk-all interface (a vPC/port-channel, rather) connecting the greenfield to the brownfield Core switches, assigned as a static port in all possible EPGs. Those legacy Core switches would eventually be replaced by ACI as they're approaching End of Support. For Day-1 preparation, how would you go about:

1. Migrating hosts with Etherchannel / MLAG connections from brownfield switches (non-Nexus) to the ACI leaves? How much downtime should we expect for each of these LAG / MLAG migrations?

2. Can the migration vPC interface (L2 Trunk vPC interface) be used for both EPG static port and L3Out SVI? For migration purpose, as devices having OSPF neighbourship with our Core switches would eventually be migrated to ACI.

--- I read from a Cisco document that if we're using the same encap for interfaces within an L3Out Interface Profile, then it would essentially create an External BD, making OSPF neighbourship formed between ACI, Core switches and outer devices in the same segment. We don't really care whichever would be the DR/BDR as it would just be a temporary setup.

3. In a network-centric model, the current gateway MAC (on our firewall) would also be used by the BD as we migrate the default gateway to the ACI fabric. How long should we expect for the downtime? (The firewall's gonna be inserted as PBR unmanaged eventually)

In version 4.2 (or later than 3.2) what's your opinion on using vzAny-vzAny contract, its subject has common/default filter (with PBR to redirect to firewall)? Would it work, or do we have to use a more detailed filter (like IP traffic being redirected, non-IP bypassing the firewall, etc.)?

Thanks for your assistance.

11-14-2019 01:22 PM

For question #1 (migrating hosts with Etherchannel / MLAG connections from non-Nexus brownfield switches to the ACI leaves): the typical downtime should be between half an hour and 45 minutes. That would give you time to move the hosts and test connectivity and traffic.

For question #2 (can the migration vPC interface, the L2 trunk vPC interface, be used for both EPG static port and L3Out SVI?): yes, it can be used for both.

For question #3 (in a network-centric model, the current gateway MAC on the firewall would also be used by the BD as the default gateway migrates to the ACI fabric): the typical downtime should be about half an hour.

For the last question (on version 4.2, or anything later than 3.2, using a vzAny-vzAny contract whose subject has the common/default filter, with PBR to redirect to the firewall): it would work. But for requirements such as redirecting IP traffic while non-IP traffic bypasses the firewall, you should use a more detailed filter.

11-15-2019 06:41 AM

Hi @tuanguyen1,

I'm glad to get a response from you.

The reason I was asking about vzAny-vzAny contract is because:

1. There are too many EPGs as we use network-centric approach.

2. This white paper, in the section on contracts and filtering rule priorities, mentions that a vzAny-vzAny contract with common/default would be programmed with a priority value of 21 (the same as the implicit deny). That would mean the implicit deny actually takes precedence, since deny wins over permit and redirect at the same priority. Our shipment hasn't arrived, and I couldn't set this up to test on dCloud (configuration is allowed, but there's no actual endpoint to test that use case).
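The concern in point 2 can be sketched as a small model. This is not how the fabric programs zoning rules; it only encodes the two assumptions stated in the post (a lower priority value is evaluated first, and deny wins a tie), and it does not confirm that the two entries really share priority 21. The rule names and the priority value 9 are hypothetical.

```python
from dataclasses import dataclass

# Tie-break order when two rules share a priority value, as described in the
# post: deny beats redirect and permit. The relative order of redirect and
# permit is an assumption made only to get a total ordering.
ACTION_RANK = {"deny": 0, "redirect": 1, "permit": 2}


@dataclass(frozen=True)
class Rule:
    name: str
    priority: int  # assumption: lower value is evaluated first
    action: str    # "deny", "redirect" or "permit"


def winning_rule(rules):
    """Return the rule that wins under this simplified model."""
    return min(rules, key=lambda r: (r.priority, ACTION_RANK[r.action]))


implicit_deny = Rule("implicit-deny", 21, "deny")
vzany_redirect = Rule("vzAny-to-vzAny common/default", 21, "redirect")
specific_permit = Rule("EPG-to-EPG specific filter", 9, "permit")  # hypothetical

print(winning_rule([implicit_deny, vzany_redirect]).name)
print(winning_rule([implicit_deny, vzany_redirect, specific_permit]).name)
```

If the two entries turn out to have different priority values on a real fabric, the tie-break never applies and the lower value simply wins.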

Also, for Port-channel migration, what would happen if you keep one cable on the brownfield switch, and the other to one of the ACI vPC? Would the existing Port-channel flap?

Thank you very much.

11-15-2019 09:34 PM

For port-channel migration, if you keep one cable on the brownfield switch and move the other to the ACI vPC, the existing port-channel would not flap. Once all endpoints in the brownfield have been migrated over to the ACI vPC, you can tear down the vPC links in the brownfield.

Also, the Bridge Domain settings are important. Make sure that you temporarily enable flooding of L2 unknown unicast and enable ARP flooding. After the migration, put the Bridge Domain settings back to their defaults.
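As a rough illustration of toggling those two Bridge Domain settings, the sketch below only builds the JSON body for an APIC REST update and prints it; it sends nothing. The attribute names (`unkMacUcastAct`, `arpFlood`) and the `uni/tn-.../BD-...` DN format are taken from the APIC object model as I understand it and should be checked against your APIC version. The tenant and BD names are hypothetical.

```python
import json


def bd_flood_payload(tenant, bd, migrating):
    """Build the DN and JSON body for an fvBD update (no request is sent)."""
    attrs = {
        "name": bd,
        # Flood L2 unknown unicast during migration, hardware proxy afterwards.
        "unkMacUcastAct": "flood" if migrating else "proxy",
        # Flood ARP during migration, switch it back off afterwards.
        "arpFlood": "yes" if migrating else "no",
    }
    dn = f"uni/tn-{tenant}/BD-{bd}"
    return dn, {"fvBD": {"attributes": attrs}}


# Hypothetical tenant and bridge domain names.
dn, body = bd_flood_payload("Prod", "BD_VLAN10", migrating=True)
print(f"POST /api/mo/{dn}.json")
print(json.dumps(body, indent=2))
```

Calling the same function with `migrating=False` produces the body that puts the two settings back after the cutover.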

11-15-2019 05:40 AM

Hi there

We are planning to move from a Nexus 7000 network to a new ACI network (network-centric).

Are there any best practices for DHCP and PXE boot inside an ACI network?

Regards, Mickey

11-15-2019 09:06 PM

DHCP Relay Overview

While ACI fabric-wide flooding is disabled by default, flooding within a bridge domain is enabled by default. Because flooding within a bridge domain is enabled by default, clients can connect to DHCP servers within the same EPG. However, when the DHCP server is in a different EPG, BD, or context (VRF) than the clients, DHCP Relay is required. Also, when Layer 2 flooding is disabled, DHCP Relay is required.
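The rule above can be written down as a small decision helper. This is only a sketch of the logic as stated in this overview; the `Placement` type and the example names are hypothetical and not part of any Cisco API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    """Where an endpoint lives in the fabric (illustrative model only)."""
    vrf: str
    bd: str
    epg: str


def dhcp_relay_required(client, server, l2_flooding_enabled=True):
    """Apply the rule from the overview above.

    Relay is needed when Layer 2 flooding is disabled, or when the server
    sits in a different EPG, BD, or VRF than the client.
    """
    if not l2_flooding_enabled:
        return True
    return client != server  # dataclass equality compares vrf, bd and epg


client = Placement("VRF1", "BD_VLAN10", "EPG_VLAN10")
same_epg_server = Placement("VRF1", "BD_VLAN10", "EPG_VLAN10")
other_epg_server = Placement("VRF1", "BD_VLAN20", "EPG_VLAN20")

print(dhcp_relay_required(client, same_epg_server))
print(dhcp_relay_required(client, other_epg_server))
print(dhcp_relay_required(client, same_epg_server, l2_flooding_enabled=False))
```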

The attached document has all of the details related to "DHCP Relay in ACI" and how to decode Option 82.

11-19-2019 02:46 AM

Hi Tuan,

I'm planning to migrate our F5 load balancers to ACI. It's a simple one-arm design with SNAT. My only concern is that the VIP subnet and the interconnection subnet (between ACI and F5) are not the same. I found a few solutions on the Internet:

1. static routes on BD (https://community.cisco.com/t5/application-centric/virtual-ips-load-balancer-and-cisco-aci/td-p/3330513)

2. L3out EPG for the F5

3. configure the VIP subnet and interconnection subnet under the same BD

but they are not very detailed.

Could you please advise what the recommended solution is for this migration? Thanks a lot.

Best regards,

Quang

11-19-2019 09:59 AM

The recommended solution for this migration is to deploy a Layer 4 through Layer 7 (L4-L7) service graph. Using the service graph, Cisco ACI can redirect traffic between security zones to a load balancer without the load balancer needing to be the default gateway for the servers. In one-arm mode, the default gateway for the servers is the server-side bridge domain, and the L4-L7 device is configured for source NAT (SNAT).

In this one-arm design, one VRF instance is created and associated with all three bridge domains: one bridge domain for the outside, or client side (BD1); one for the inside, or server side (BD2); and one for connectivity with the load balancer (BD3). With this setup, you can optimize flooding on BD2.

In one-arm mode, the default gateway for the servers is the upstream router (here, the ACI fabric). The load balancer is connected with one VLAN to the same router, which is also the default gateway for the load balancer itself. The load balancer uses source NAT for the traffic from the clients to the servers to help ensure receipt of the return traffic.

The default gateway for the servers is the subnet on BD2. The default gateway for the load balancer is the subnet on BD3.

The contract is established between the external EPG and the server EPG, and it is associated with the service graph.

On BD3 the load balancer forwards traffic from the clients to the servers by routing it through the Cisco ACI fabric. Therefore, you need to make sure that the only addresses learned in BD3 are the ones that belong to the BD3 subnet: that is, the virtual addresses announced by the load balancer and the NAT addresses.
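A quick way to sanity-check that requirement before migrating is to compare the load balancer's VIP and SNAT addresses against the BD3 subnet. The sketch below uses Python's standard `ipaddress` module; the gateway and addresses are illustrative values, not taken from any real configuration.

```python
import ipaddress


def addresses_outside_subnet(bd_subnet, addresses):
    """Return the addresses that do not fall inside the BD subnet.

    bd_subnet is the gateway address with prefix length, in the form a BD
    subnet is usually written (for example "172.16.1.254/24").
    """
    network = ipaddress.ip_interface(bd_subnet).network
    return [a for a in addresses if ipaddress.ip_address(a) not in network]


# Illustrative values only: a BD3 gateway plus one VIP and one SNAT address
# inside the subnet, and one VIP outside it.
bd3_gateway = "172.16.1.254/24"
lb_addresses = ["172.16.1.100", "172.16.1.10", "172.16.2.100"]

print(addresses_outside_subnet(bd3_gateway, lb_addresses))
```

Any address this returns would not be covered by the BD3 subnet and would need one of the other approaches raised in this thread.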

As additional information: in an LB-PBR scenario, your VIPs will typically be part of the LB service BD itself, so the VIP subnet is directly connected to ACI.

https://www.cisco.com/c/en/us/solutions/data-center-virtualization/application-centric-infrastructure/white-paper-c11-739971.html

In the meantime, I am searching for more detailed configurations for your scenarios and, if any are available, will forward them to you.

11-19-2019 05:53 PM

Hi Tuan,

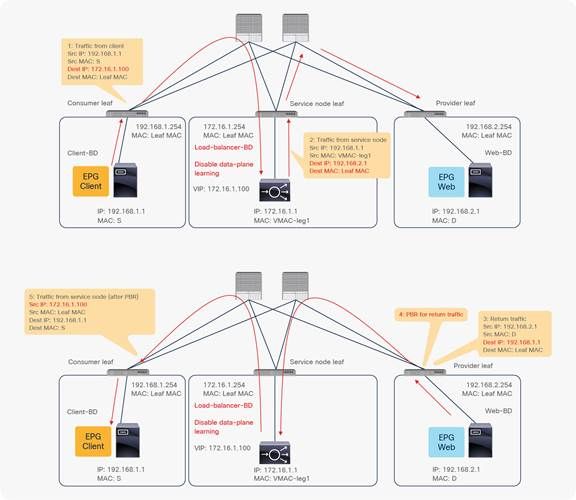

Thank you so much for your quick reply. I think I understand what you explained about the scenario when the VIPs are part of the LB BD subnet. But what if they are not? Let me take an example from the PBR white paper (see the picture): what should I do if the VIP here is not 172.16.1.100 but 172.16.2.100? I suppose that the client will not know how to reach that IP. Can I simply add, for example, a subnet 172.16.2.254/24 to the BD to make it work? I hope my question is clear. Thank you for your advice.

11-20-2019 04:11 PM

11-22-2019 07:12 AM - edited 11-22-2019 07:13 AM

How do you define this "application-centric" approach ACI tries to evangelize, in terms of VRF/BD/EPG configuration, how is it different and beneficial to modern applications, and why would a network-centric approach not suffice under that scenario? A follow-up question from developers is potential drawbacks of having several networks in the same BD which goes in the opposite direction of what a traditional network model does.

11-22-2019 08:35 PM

First of all, both the Application Centric Design and the Network Centric Design are valid design strategies, and you can read more of the official Cisco view on the URL link:

https://www.cisco.com/c/en/us/td/docs/switches/datacenter/aci/apic/sw/1-x/ACI_Best_Practices/b_ACI_Best_Practices/b_ACI_Best_Practices_chapter_010.html#id_26532

Pros of an Application Centric Design vs Network Centric Design

1. Application developers don't need to be tied to the Network team for the allocation of new subnets and VLANs to be able to implement policy between groups of servers (EPGs). Once an initial Bridge Domain and Subnet has been allocated, they can peel off as many EPGs as they want until they run out of IPs. And even then...

2. ...if required, the same Bridge Domain can be allocated multiple IP Addresses across multiple subnets, and if this is done...

3. ...there is no restriction about which servers are in which subnet - the only restrictions come via the Contracts between the EPGs.

Cons of an Application Centric Design vs Network Centric Design

1. A single Bridge Domain is still a single Broadcast Domain, just as a single VLAN is a broadcast domain in a traditional network - apart from the fact that ACI can reduce ARP broadcast traffic and some BUM traffic (Broadcast, Unknown Unicast and Multicast traffic). So, you need to remember that...

2. ...just like since forever, you should be wary of having broadcast domains that are too big. How big is too big? In part it depends on how the following options are set on your Bridge Domain:

* L2 Unknown Unicast [Not flooded by default - instead sent to the proxy]

* L3 Unknown Multicast Flooding [Flooded by default. Can be Optimized so only Multicast Router ports receive L3 Unknown Multicast]

* Multi-Destination Flooding. [Flooded in BD by default]

* ARP Flooding [Disabled by default. ARP traffic is routed based on the Target IP address]
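To make the four options easier to reason about, here is a minimal model with the defaults listed above. The field names and value strings are my own labels for illustration, not APIC attribute names.

```python
from dataclasses import dataclass


@dataclass
class BridgeDomainForwarding:
    """The four BD options listed above, with the defaults as stated there."""
    l2_unknown_unicast: str = "proxy"        # or "flood"
    l3_unknown_multicast: str = "flood"      # or "optimized"
    multi_destination: str = "flood-in-bd"   # or "flood-in-encap", "drop"
    arp_flooding: bool = False

    def flooded_in_bd(self):
        """List the traffic classes this BD floods to every port in the BD."""
        flooded = []
        if self.l2_unknown_unicast == "flood":
            flooded.append("L2 unknown unicast")
        if self.l3_unknown_multicast == "flood":
            flooded.append("L3 unknown multicast")
        if self.multi_destination == "flood-in-bd":
            flooded.append("multi-destination")
        if self.arp_flooding:
            flooded.append("ARP")
        return flooded


defaults = BridgeDomainForwarding()
migration = BridgeDomainForwarding(l2_unknown_unicast="flood", arp_flooding=True)

print(defaults.flooded_in_bd())
print(migration.flooded_in_bd())
```

Comparing the two lists shows how much extra BUM traffic a BD takes on when the optimized values are changed.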

Assuming you have left the options above at their defaults (which is what happens if you specify Optimize as the Forwarding method when you create the bridge domain) then you will be able to create a larger Bridge Domain than the traditional Broadcast Domain you would have been able to create using traditional networking methods. (Switches/VLANs). But, there are some limitations on those optimized values, and if you need to change them in the future, you may find that your BD suddenly has to cope with more BUM traffic than before. Specifically:

* L2 Unknown Unicast - not much to worry about there, you should never have any valid Unknown L2 traffic, so let it be sent to the Proxy

* L3 Unknown Multicast Flooding - Not sure why you wouldn't set this to Optimized Flood, but again, you are unlikely to have to worry about too much unknown Multicast traffic. However, if you DO optimize L3 Unknown Multicast traffic, you are limited to optimizing 50 BDs per switch. That should be plenty in most cases but might be a pain to troubleshoot if exceeded.

* Multi-Destination Flooding - This is one that can get a little out of control. Multi-Destination means L2 Multicasts and Broadcasts that aren't consumed by the Leaf Switch. (E.g. LLDP Multicasts are consumed by the Leaf Switch). Capture a few minutes of traffic on a given switch port on your own corporate network (if you are allowed to do that without breaching company policy) and look at all the weird traffic you capture that is NOT being sent to your PC. This is your typical Multi-Destination traffic. Amongst it you will likely see some NetBIOS broadcasts as well as lots of ARP requests.

* If you tell ACI to Drop Multi-Destination frames, you may break some protocols - I can't think of any in particular at the moment, but my first suspects would be file sharing applications, like Windows Filesharing or Apple File Sharing and even DropBox.

* IMPORTANT In a single BD with Multiple EPGs, each EPG is defined by a different VLAN encapsulation. IF those VLANs extend into your legacy non-ACI network, then (by default) these frames will leak from one VLAN to another - which is probably NOT what you want, which is why there is a Multi-Destination Flooding option to Flood in Encapsulation - to prevent leakage from one EPG to another - but again, this may break some protocols. But before setting your BD to Flood in Encapsulation re-read the IF statement above.

* ARP Flooding is the most important of these options. There are times when you need to enable ARP flooding, such as if you have a silent host on a subnet that does not exist in the ACI environment (if your BD has an IP address on the same subnet as the silent host, ACI will encourage the silent host to speak by sending it an ARP request). The quintessential case for this is when one of your EPs is in fact a router port or a firewall interface. So, just remember that IF you have such a situation, it may be worth moving the Firewall/Router into a separate BD if possible.

* Application Centric Designs do not follow the normal expectations of network engineers who have to troubleshoot networking issues, so troubleshooting may be more difficult.

Pros of a Network Centric Design vs Application Centric Design

1. If your situation is one of migrating an existing network to ACI, a Network Centric approach is much more aligned to the existing structure and therefore much easier to implement. This is pretty much an overriding reason why most ACI implementations end up with at least some part of the design being Network Centric.

2. A Network Centric approach looks much more like a traditional design. This gives some people a lot of comfort, especially Network engineers who can't handle the new-fangled software-designed thingy that might threaten their life. The more like the old network it is, the better they like it.

3. There are some cases, such as when leaking routes between VRFs, that are easier to implement in a network centric design.

4. When working with Layer 4-7 devices that require traffic to be directed to an IP address that is not part of the ACI Fabric (think Firewall), a Network Centric design is easier to work with.
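As an illustration of why a Network Centric migration maps so directly onto the existing structure, the sketch below turns a legacy VLAN list into a one-VLAN, one-BD, one-EPG plan. The naming scheme (`BD_VLAN<n>`, `EPG_VLAN<n>`), the tenant and application profile names, and the VLAN data are all hypothetical; the DN formats follow the APIC object model as I understand it.

```python
def network_centric_plan(tenant, app_profile, vlans):
    """Map each legacy VLAN to one BD and one EPG (one-to-one).

    vlans maps a VLAN ID to the gateway address it will have on the BD.
    """
    plan = []
    for vlan_id, gateway in sorted(vlans.items()):
        bd = f"BD_VLAN{vlan_id}"
        epg = f"EPG_VLAN{vlan_id}"
        plan.append({
            "vlan": vlan_id,
            "bd_dn": f"uni/tn-{tenant}/BD-{bd}",
            "epg_dn": f"uni/tn-{tenant}/ap-{app_profile}/epg-{epg}",
            "encap": f"vlan-{vlan_id}",  # encapsulation used on static ports
            "gateway": gateway,
        })
    return plan


# Hypothetical legacy VLANs and gateways.
legacy_vlans = {20: "10.0.20.1/24", 10: "10.0.10.1/24"}

for entry in network_centric_plan("Prod", "Legacy", legacy_vlans):
    print(entry["vlan"], entry["bd_dn"], entry["epg_dn"], entry["encap"])
```

An Application Centric plan would instead hang several EPGs off one BD, so there is no such mechanical mapping from the old VLAN list.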

Cons of a Network Centric Design vs Application Centric Design

1. A network centric design requires more careful IP subnet planning - much the same as it is now.

2. Application designers and writers of orchestration applications will probably find it harder to work within the restraints of a Network Centric Design. Think about it. If you are writing an Orchestration Application that is going to spin up half a dozen VMs with policies between them, is it easier to put all servers in the same subnet (Application Centric), or easier to find say 3 different subnets to put the servers in (Network Centric)?

Conclusion

One approach is not necessarily better than the other.

When migrating existing VLANs/Subnets, a Network Centric approach is recommended.

When writing an Orchestration Application, an Application Centric approach is recommended.

If neither is more important, and each is equally easy to implement, the recommendation is to stick with a Network Centric approach because it gives you better flexibility to later implement L4-7 services and control BUM traffic.
