Understanding and Deploying UCS B-Series LAN Networking

Posted on 11-16-2009 11:27 AM - edited on 03-25-2019 01:23 PM by ciscomoderator

Introduction

This document describes the concepts and procedure for configuring UCS networking on your LAN. A LAN environment may contain many VLANs configured for different applications and purposes. For example, one VLAN may be used for VMware ESXi server management and access, and another for the server application that runs as a virtual machine on the VMware ESXi server.

In UCS, the VLAN implementation is influenced by the concept of End-host mode, which is the default mode of the UCS system. In End-host mode, the UCS 6100 Series Fabric Interconnect appears to the external LAN as an end station with many adapters.

Make sure you have read and understood the following document. It provides the foundation for the concepts discussed here:

Deploying UCS Blade Server with UCS Manager for Virtualization

Network Adapters

IP networking between the virtual machines is done through the network adapters that are part of the blade server. Four types of network adapters are supported to provide the functionality required for the diverse workloads in a data center.

- UCS 82598KR-CI 10 Gigabit Ethernet Adapter

- UCS M81KR Virtual Interface Card

- UCS M71KR-E Emulex Converged Network Adapter

- UCS M71KR-Q QLogic Converged Network Adapter

Follow the links above for a detailed description of each adapter.

The UCS 82598KR-CI is a 10 Gigabit Ethernet card that provides dual-port Ethernet connectivity and is designed for efficient, high-performance Ethernet transport. Note that this card does not provide a Fibre Channel port.

The UCS M71KR-E provides a dual-port connection to the midplane of the blade server chassis. This card combines a 10 Gbps Ethernet controller and a 4 Gbps Fibre Channel controller for Fibre Channel traffic on the same mezzanine card. The UCS M71KR-E presents two discrete Fibre Channel HBA ports and two 10 Gbps Ethernet network ports to the operating system.

The UCS M71KR-Q provides a dual-port connection to the midplane of the blade server chassis. This card combines a 10 Gbps Ethernet controller and a 4 Gbps Fibre Channel controller for Fibre Channel traffic on the same mezzanine card. The UCS M71KR-Q presents two discrete Fibre Channel HBA ports and two 10 Gbps Ethernet network ports to the operating system.

The only difference between the M71KR-E and the M71KR-Q is that the former has an Emulex Fibre Channel controller and the latter has a QLogic Fibre Channel controller.

The UCS M81KR is a dual-port 10 Gbps Ethernet mezzanine card that supports up to 128 PCIe virtual interfaces that can be dynamically configured.

This document and the concepts presented here are based on the UCS M71KR Converged Network Adapter.

VLAN Implementation

In the Unified Computing System (UCS), each VLAN is identified by a unique numeric ID. A VLAN can be created so that it is visible on either one fabric interconnect or both. It is also possible to create separate VLANs with the same name on the two fabric interconnects but with different VLAN IDs. When a VLAN is created in UCS it is also assigned a name, but the name is known only within the UCS environment; outside UCS, the VLAN is represented by its unique ID.
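The relationship between VLAN names, VLAN IDs, and fabric visibility can be sketched in a few lines of Python. This is only an illustrative model, not how UCS Manager stores VLANs internally; the `Vlan` class, the `resolve_vlan_id` helper, and the "Backup" VLAN with IDs 200 and 201 are hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Vlan:
    name: str      # known only inside the UCS environment
    vlan_id: int   # the ID that represents the VLAN outside UCS
    fabric: str    # "A", "B", or "dual" (visible on both fabric interconnects)


def resolve_vlan_id(vlans: Iterable[Vlan], name: str, fabric: str) -> Optional[int]:
    """Return the VLAN ID that `name` maps to on fabric "A" or "B", or None."""
    for vlan in vlans:
        if vlan.name == name and vlan.fabric in (fabric, "dual"):
            return vlan.vlan_id
    return None


vlans = [
    Vlan("CVP_TestBed_VLAN", 433, "dual"),  # common/global VLAN
    Vlan("Backup", 200, "A"),               # hypothetical: same name, fabric A only
    Vlan("Backup", 201, "B"),               # hypothetical: same name, different ID on fabric B
]

print(resolve_vlan_id(vlans, "CVP_TestBed_VLAN", "A"))
print(resolve_vlan_id(vlans, "Backup", "B"))
```

The point of the sketch is that the uplink switch only ever sees the numeric ID, so the same name can safely map to different IDs on the two fabrics.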

Guidelines and Requirements

The configuration and options presented in this document assume that the following guidelines are met and understood.

- The UCS 6120XP Fabric Interconnect should be in End Host mode. Switch mode is not discussed in this document.

- This document presents only the UCS Manager GUI-based configuration option.

- There may be multiple ways to accomplish the same task in the UCS Manager GUI.

- In End Host mode, the blade servers and their interfaces attached to the 6120XP look like a group of NICs to the external LAN.

- There is inherent pinning between each blade server and the uplink Ethernet switch (CAT6K or Nexus5K).

- When a VLAN is created in UCS, it is automatically allowed as well.

- A vNIC in the UCS system is a host-presented PCI device that is managed centrally by UCS Manager.
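The pinning idea from the guidelines above can be illustrated with a minimal Python sketch. It assumes a simple round-robin assignment purely for illustration; this document does not describe the selection logic the fabric interconnect actually uses, and the vNIC and uplink port names below are hypothetical.

```python
from itertools import cycle
from typing import Dict, List


def pin_vnics(vnics: List[str], uplinks: List[str]) -> Dict[str, str]:
    """Assign each server vNIC to one uplink port, round-robin (illustrative only)."""
    if not uplinks:
        raise ValueError("at least one uplink port is required")
    uplink_cycle = cycle(uplinks)
    return {vnic: next(uplink_cycle) for vnic in vnics}


# Hypothetical vNIC and uplink port names.
pinning = pin_vnics(
    ["blade1/eth0", "blade1/eth1", "blade2/eth0"],
    ["Eth1/19", "Eth1/20"],
)
for vnic, uplink in pinning.items():
    print(f"{vnic} -> {uplink}")
```

Each vNIC's traffic leaves through exactly one uplink, which is why the fabric interconnect looks like an end station with many adapters rather than a switch.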

Uplink Switch Configuration

Make sure that the appropriate VLANs are created on the uplink switch (e.g., CAT6500).

The following snippet shows a CAT6500 configuration:

interface TenGigabitEthernet5/2

description Connected To: UCS-6120-XP Fabric Interconnect Port 20

switchport

switchport trunk encapsulation dot1q

switchport mode trunk

spanning-tree portfast edge

show vlan

VLAN Name Status

---- -------------------------------- ---------

433 CVP_TestBed_VLAN active

100 Management VLAN active
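A quick way to confirm that the required VLANs exist on the uplink switch is to check captured `show vlan` output against a list of expected IDs. The following Python sketch assumes the output has been saved as text in the abbreviated form shown above (real output may include additional columns such as Ports); the function names are hypothetical.

```python
import re
from typing import Iterable, List, Set

# Matches rows such as "433 CVP_TestBed_VLAN active"; the VLAN name may contain spaces.
ROW = re.compile(r"^(\d+)\s+(.+?)\s+(active|suspended)(?:\s|$)")


def active_vlan_ids(show_vlan_output: str) -> Set[int]:
    """Return the IDs of all VLANs reported as active."""
    ids = set()
    for line in show_vlan_output.splitlines():
        match = ROW.match(line.strip())
        if match and match.group(3) == "active":
            ids.add(int(match.group(1)))
    return ids


def missing_vlans(show_vlan_output: str, required_ids: Iterable[int]) -> List[int]:
    """Return the required VLAN IDs that are not active on the switch."""
    return sorted(set(required_ids) - active_vlan_ids(show_vlan_output))


SAMPLE = """\
VLAN Name Status
---- -------------------------------- ---------
433 CVP_TestBed_VLAN active
100 Management VLAN active
"""

print(missing_vlans(SAMPLE, [100, 433]))
```

An empty list means every VLAN that UCS expects is present and active on the uplink switch.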

UCS Fabric Interconnect Configuration using UCS Manager

It is highly recommended to create all the necessary VLANs before configuring the Service Profile.

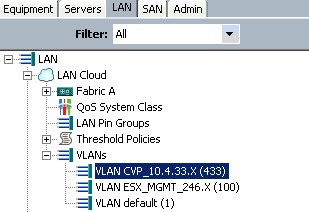

- Create a common/global VLAN under the LAN tab in UCS Manager, as shown in the following picture.

- A common/global VLAN, as the name suggests, is visible to both fabric interconnects.

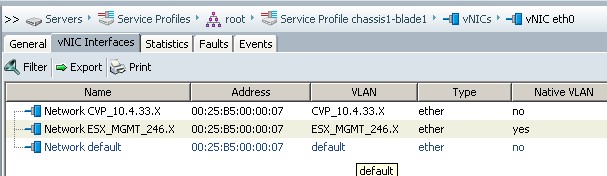

- This VLAN (for example, VLAN 433) must be visible under the Servers tab. Also make sure that the correct VLAN ID is populated.

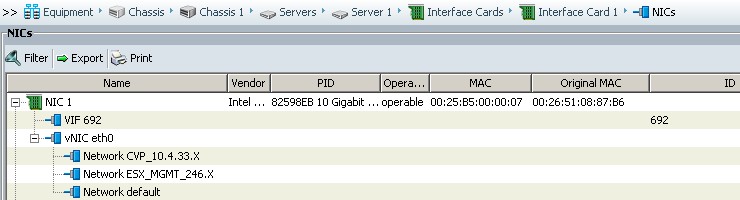

- Further verification can be done under the Equipment tab in UCS Manager by making sure that the VLANs are visible under the appropriate vNIC, as shown in the following picture.

- With the above configuration, the virtual machine should be able to ping the default gateway, and IP connectivity should be complete.

Creating VLANs After Creating Service Profile

As mentioned earlier, it is recommended to create all the VLANs before creating a Service Profile. However, UCS is flexible enough to accommodate new VLANs and apply them to existing Service Profiles as well. In this situation, a few considerations apply.

If you configure a VLAN after creating the Service Profile and notice that the "new" VLAN is not bound to the vNIC on the blade server, modify the vNIC configuration and add the new VLAN to it.

Under the Servers tab in UCS Manager, check whether the "new" VLAN is visible. The following picture shows that the "new" VLAN 433 is not visible.

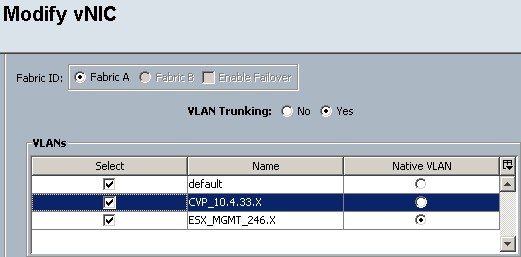

- Click "Modify" on the vNIC configuration option (shown in the above picture).

- The Modify vNIC screen is displayed.

- Make sure that the VLAN trunking option is checked and that the native VLAN is selected correctly per your IP network policy. Then check the "new" VLAN (VLAN 433 in this case).

- This adds the new VLAN to the vNIC.
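The check-and-add procedure above amounts to comparing the VLANs defined in UCS with the VLANs bound to a vNIC. The Python sketch below models that comparison only; it does not talk to UCS Manager, and the `VnicConfig` class, the helper names, and the vNIC name `eth0` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Iterable, List, Set


@dataclass
class VnicConfig:
    name: str
    native_vlan: int
    vlans: Set[int] = field(default_factory=set)  # VLAN IDs bound to this vNIC


def unbound_vlans(defined_ids: Iterable[int], vnic: VnicConfig) -> List[int]:
    """Return VLAN IDs that exist in UCS but are not yet bound to the vNIC."""
    return sorted(set(defined_ids) - vnic.vlans)


def bind_vlan(vnic: VnicConfig, vlan_id: int) -> None:
    """Model the 'check the new VLAN' step on the Modify vNIC screen."""
    vnic.vlans.add(vlan_id)


# VLAN 100 was bound when the Service Profile was created; VLAN 433 was added later.
vnic = VnicConfig(name="eth0", native_vlan=100, vlans={100})
for vlan_id in unbound_vlans([100, 433], vnic):
    bind_vlan(vnic, vlan_id)

print(sorted(vnic.vlans))
```

The native VLAN is left untouched; only the set of trunked VLANs grows when a new VLAN is checked.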

Next:

Understanding and Deploying UCS B-Series Storage Area Networking


Shahzad, a couple of comments:

You mention, a couple of times, about adding VLANs before creating service profiles in case "VLANs will not bind to vNICs" and "so you don't have to reboot the switch". I think this confuses people a little... I appreciate you're trying to suggest a best practice, but the reality is that many customers _will_ have to add/edit/delete VLANs and update their service profiles later, and this is always possible and never requires a reboot.

So perhaps it's a language thing but if I was a customer reading this doc I'd get the impression that UCS isn't as flexible as it actually is... so instead of saying it's difficult/problematic to change VLANs, I'd suggest writing how easy it can be with Updating vNIC templates, perhaps?

Keep up the good work!

Steve


Thanks, Steve, for the feedback. I will fix the document accordingly.


Specific to VMware or other similar clustered environments: illustrating the use of vNIC templates for consistent, easy management of several nodes from one control point would be beneficial. We use vNIC templates exclusively for our internal ESX cluster network configurations.


Hi Ken,

I agree completely that vNIC templates and service profile templates are great for ease of management in terms of configuration. I will try to have that illustrated in this doc. I deliberately skipped that part because I wanted to keep this doc very simple. The intention is that this deployment guide will be referenced by Unified Communications customers/engineers/admins in the future. And typically this is the level of detail that they would need to understand the Data Center pieces of the puzzle.

Thanks,

Shahzad
