Problem with FCoE in our environment

10-31-2014 05:04 AM

Hi all,

Since the beginning of the implementation in our datacentre, we have had many problems and degraded performance on our FCoE infrastructure.

We have done a lot of troubleshooting and checked the configuration at least 100 times on the Cisco Nexus 4k, Cisco Nexus 5k and MDS. No problems were found by us, by our external support or, in particular, by Cisco TAC.

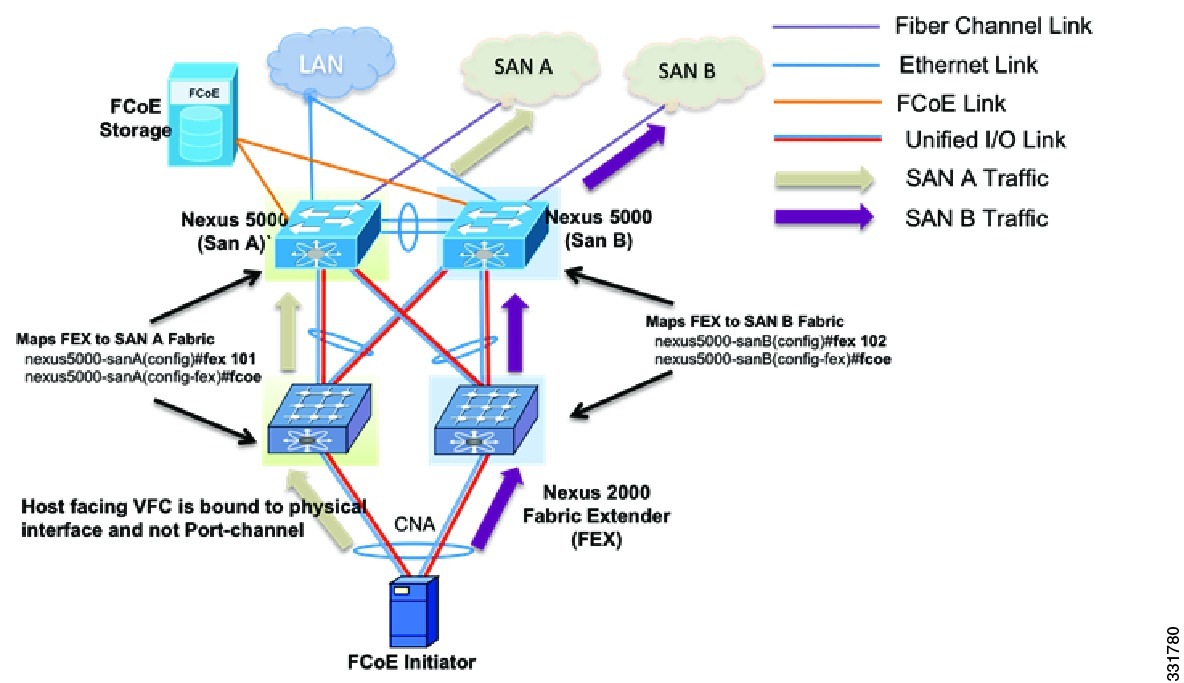

The diagram above is an example of our topology. In our case we have Nexus 4k instead of Nexus 2k, more MDS switches in front of the storage, and the Nexus 5k in NPV mode.

We opened a case with Cisco, but after almost a month and two Webex sessions we have received no good news from their side.

They asked us to upgrade the Nexus 5000 and Nexus 4k to the latest software version.

That is done, but immediately after the upgrade many pause frames were again detected on the interfaces between the IBM blade servers and the Nexus 4k. This causes service interruptions and faults, packet loss, and servers that cannot boot from SAN.

Searching the internet and investigating the root cause ourselves, we found that something is wrong in the DCBX negotiation between the Nexus 4k and the QLogic CNAs in our IBM servers.

We configured the standard queuing policy (50% Ethernet and 50% FCoE) on all the active channels and devices involved in the flows.

The Nexus 5k has no issue completing the negotiation with a directly connected server fitted with a QLogic CNA.

---

DCBX TLV (Type-Length-Value) Data

=================================

DCBX Parameter Type and Length

DCBX Parameter Length: 13

DCBX Parameter Type: 2

DCBX Parameter Information

Parameter Type: Current (currently configured on the CNA)

Pad Byte Present: Yes

DCBX Parameter Valid: Yes

Reserved: 0

DCBX Parameter Data

Priority Group ID of Priority 1: 0

Priority Group ID of Priority 0: 0

Priority Group ID of Priority 3: 1

Priority Group ID of Priority 2: 0

Priority Group ID of Priority 5: 0

Priority Group ID of Priority 4: 0

Priority Group ID of Priority 7: 0

Priority Group ID of Priority 6: 0

Priority Group 0 Percentage: 50

Priority Group 1 Percentage: 50

Priority Group 2 Percentage: 0

Priority Group 3 Percentage: 0

Priority Group 4 Percentage: 0

Priority Group 5 Percentage: 0

Priority Group 6 Percentage: 0

Priority Group 7 Percentage: 0

Number of Traffic Classes Supported: 2

DCBX Parameter Information

Parameter Type: Remote

Pad Byte Present: Yes

DCBX Parameter Valid: Yes

Reserved: 0

DCBX Parameter Data

Priority Group ID of Priority 1: 0

Priority Group ID of Priority 0: 0

Priority Group ID of Priority 3: 1

Priority Group ID of Priority 2: 0

Priority Group ID of Priority 5: 0

Priority Group ID of Priority 4: 0

Priority Group ID of Priority 7: 0

Priority Group ID of Priority 6: 0

Priority Group 0 Percentage: 50

Priority Group 1 Percentage: 50

Priority Group 2 Percentage: 0

Priority Group 3 Percentage: 0

Priority Group 4 Percentage: 0

Priority Group 5 Percentage: 0

Priority Group 6 Percentage: 0

Priority Group 7 Percentage: 0

Number of Traffic Classes Supported: 2

DCBX Parameter Information

Parameter Type: Local

Pad Byte Present: Yes

DCBX Parameter Valid: Yes

Reserved: 0

DCBX Parameter Data

Priority Group ID of Priority 1: 0

Priority Group ID of Priority 0: 0

Priority Group ID of Priority 3: 1

Priority Group ID of Priority 2: 0

Priority Group ID of Priority 5: 0

Priority Group ID of Priority 4: 0

Priority Group ID of Priority 7: 0

Priority Group ID of Priority 6: 0

Priority Group 0 Percentage: 50

Priority Group 1 Percentage: 50

Priority Group 2 Percentage: 0

Priority Group 3 Percentage: 0

Priority Group 4 Percentage: 0

Priority Group 5 Percentage: 0

Priority Group 6 Percentage: 0

Priority Group 7 Percentage: 0

---

Conversely, ALL the blades connected directly to the Nexus 4k negotiate very strange values (88/12), even though QoS is properly configured.

These values can be seen in the CNA report below and in a tcpdump taken directly on the Nexus 4k.
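For scale, here is a minimal sketch of what the two splits mean on a 10GbE port such as the QMI8142. It assumes the percentages act as ETS-style minimum bandwidth guarantees that apply under congestion, and that FCoE rides on priority 3, which the reports map to Priority Group 1. The function name is purely illustrative.

```python
# Illustrative arithmetic only. Assumes ETS-style minimum guarantees
# on a 10 Gb/s link; nothing here is read from a real device.
LINK_GBPS = 10.0  # the QMI8142 is a 10GbE card


def guaranteed_gbps(percent, link_gbps=LINK_GBPS):
    """Minimum bandwidth (Gb/s) a priority group is guaranteed under congestion."""
    return link_gbps * percent / 100.0


# Assumed mapping: Priority Group 0 = Ethernet, Priority Group 1 = FCoE.
splits = {
    "configured (50/50)": (50, 50),
    "negotiated via Nexus 4k (88/12)": (88, 12),
}

for label, (eth_pct, fcoe_pct) in splits.items():
    print(
        f"{label}: Ethernet {guaranteed_gbps(eth_pct):.1f} Gb/s, "
        f"FCoE {guaranteed_gbps(fcoe_pct):.1f} Gb/s"
    )
```

If those assumptions hold, the FCoE class is guaranteed only 1.2 Gb/s instead of 5 Gb/s when the link is congested.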

---

DCBX TLV (Type-Length-Value) Data

=================================

DCBX Parameter Type and Length

DCBX Parameter Length: 13

DCBX Parameter Type: 2

DCBX Parameter Information

Parameter Type: Current (currently configured on the CNA)

Pad Byte Present: Yes

DCBX Parameter Valid: Yes

Reserved: 0

DCBX Parameter Data

Priority Group ID of Priority 1: 0

Priority Group ID of Priority 0: 0

Priority Group ID of Priority 3: 1

Priority Group ID of Priority 2: 0

Priority Group ID of Priority 5: 0

Priority Group ID of Priority 4: 0

Priority Group ID of Priority 7: 0

Priority Group ID of Priority 6: 0

Priority Group 0 Percentage: 88

Priority Group 1 Percentage: 12

DCBX Parameter Information

Parameter Type: Remote (sent by the Cisco 4k)

Pad Byte Present: Yes

DCBX Parameter Valid: Yes

Reserved: 0

DCBX Parameter Data

Priority Group ID of Priority 1: 0

Priority Group ID of Priority 0: 0

Priority Group ID of Priority 3: 1

Priority Group ID of Priority 2: 0

Priority Group ID of Priority 5: 0

Priority Group ID of Priority 4: 0

Priority Group ID of Priority 7: 0

Priority Group ID of Priority 6: 0

Priority Group 0 Percentage: 88

Priority Group 1 Percentage: 12

Priority Group 2 Percentage: 0

Priority Group 3 Percentage: 0

Priority Group 4 Percentage: 0

Priority Group 5 Percentage: 0

Priority Group 6 Percentage: 0

Priority Group 7 Percentage: 0

Number of Traffic Classes Supported: 2

DCBX Parameter Information

Parameter Type: Local (parameters configured manually on the CNA, but ignored if parameters are received via DCBX)

Pad Byte Present: Yes

DCBX Parameter Valid: Yes

Reserved: 0

DCBX Parameter Data

Priority Group ID of Priority 1: 0

Priority Group ID of Priority 0: 0

Priority Group ID of Priority 3: 1

Priority Group ID of Priority 2: 0

Priority Group ID of Priority 5: 0

Priority Group ID of Priority 4: 0

Priority Group ID of Priority 7: 0

Priority Group ID of Priority 6: 0

Priority Group 0 Percentage: 50

Priority Group 1 Percentage: 50

Priority Group 2 Percentage: 0

Priority Group 3 Percentage: 0

Priority Group 4 Percentage: 0

Priority Group 5 Percentage: 0

Priority Group 6 Percentage: 0

Priority Group 7 Percentage: 0

---
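The comparison made by eye between the two reports can also be scripted across many blades. Below is a minimal sketch, assuming the CNA report is available as plain text in the format shown above. The function names and the abbreviated `SAMPLE` string are illustrative and not part of any QLogic or Cisco tool.

```python
import re

# "Parameter Type: Current (...)" starts a section; "DCBX Parameter Type: 2"
# is deliberately not matched because the pattern is anchored at line start.
TYPE_RE = re.compile(r"^\s*Parameter Type:\s*(\w+)")
PCT_RE = re.compile(r"^\s*Priority Group (\d) Percentage:\s*(\d+)")


def parse_pg_percentages(report):
    """Return {parameter_type: {group_id: percent}} from a DCBX TLV text report."""
    parsed = {}
    current = None
    for line in report.splitlines():
        m = TYPE_RE.match(line)
        if m:
            current = m.group(1)
            parsed[current] = {}
            continue
        m = PCT_RE.match(line)
        if m and current is not None:
            parsed[current][int(m.group(1))] = int(m.group(2))
    return parsed


def nonzero(groups):
    """Drop groups at 0% so a missing entry and an explicit 0 compare equal."""
    return {g: p for g, p in groups.items() if p}


def find_mismatches(parsed, reference="Local"):
    """Return the sections whose bandwidth split differs from the reference one."""
    ref = nonzero(parsed.get(reference, {}))
    return {
        ptype: nonzero(groups)
        for ptype, groups in parsed.items()
        if ptype != reference and nonzero(groups) != ref
    }


# Abbreviated version of the Nexus 4k report above (illustrative input).
SAMPLE = """\
Parameter Type: Current
Priority Group 0 Percentage: 88
Priority Group 1 Percentage: 12
Parameter Type: Remote
Priority Group 0 Percentage: 88
Priority Group 1 Percentage: 12
Parameter Type: Local
Priority Group 0 Percentage: 50
Priority Group 1 Percentage: 50
"""

if __name__ == "__main__":
    for ptype, groups in find_mismatches(parse_pg_percentages(SAMPLE)).items():
        print(f"{ptype} differs from Local: {groups}")
```

Run against the report from a blade behind the Nexus 5k, where all three sections show 50/50, `find_mismatches` would return an empty dictionary.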

We have been waiting 10 days for Cisco to troubleshoot this. Technically, these are the checks I would expect them to carry out themselves during the Webex!

In any case, this honestly looks like a problem caused by one of the following:

- a wrong queue configuration (which would be very strange: more than 20 people and Cisco technicians have reviewed the config over the last year)

- a bug or incompatibility between the Nexus 4k and the CNA (which has also been updated to the latest firmware version)

HBA Model : QMI8142

HBA Description : QMI8142 QLogic PCI Express 10GbE Expansion Card (CFFh) for IBM BladeCenter (FCoE)

HBA ID : 0-QMI8142

Has anyone ever seen something similar in their environment?

Samuele

11-03-2014 02:26 PM

Are you using twinax cables at some point? Which ones?
