Howdy out there in automation land! Well, look here... a quick blog turnaround. I am happy to post more often; I just like to wait until I find things of true value. This week I did! In an effort to see whether it was possible, I was able to hook a Hadoop cluster database into CPO via the JDBC adapter and a JDBC driver. This gave me a great opportunity to talk about how you can use the JDBC adapter to plug into *ANY* database that has a JDBC driver. The expansion opportunities are near endless! So what should the movie poster of this blog be... Reloaded... hmmm

how about?

DVD? Does anyone out there still use that? :)

Either way, let's talk JDBC drivers and CPO, and more specifically the Hadoop/Hive setup. Here are some steps to follow (with links), and of course I have a video below...

- First, acquire the JDBC driver for your database (MySQL, DB2, Hadoop, etc.). In our case, you can find the Cloudera Hadoop/Hive JDBC driver here: https://www.cloudera.com/downloads/connectors/hive/jdbc/2-5-4.html

- The above driver is the one I used and it works fine. Download it and extract it to your CPO node(s). NOTE: If you have multiple nodes, you need to repeat these steps for *EACH* node.

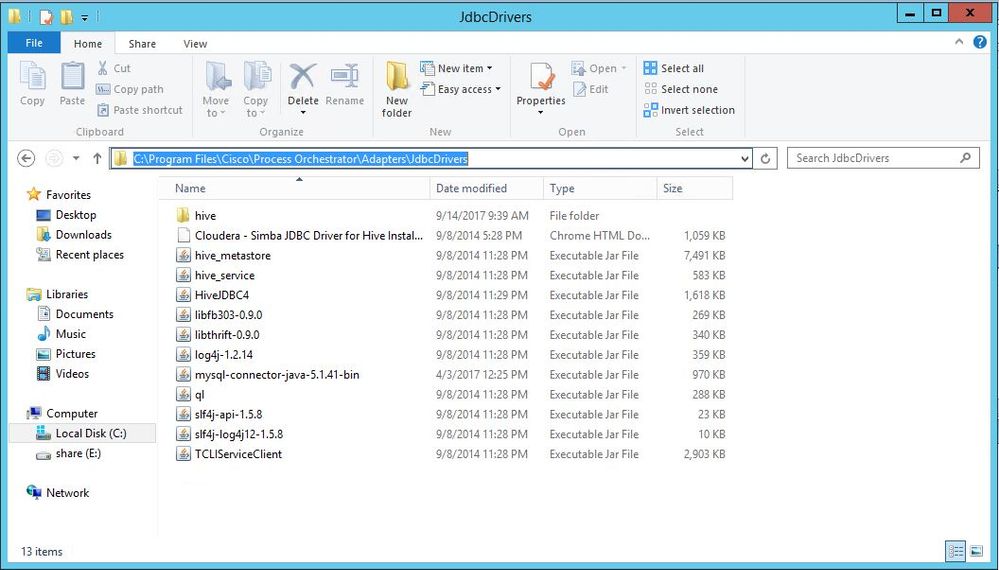

- Copy all the files into your JDBC driver directory in CPO. By default this is C:\Program Files\Cisco\Process Orchestrator\Adapters\JdbcDrivers

- The above step applies to any/all JDBC drivers. The next step applies to Hadoop/Hive only; the Cloudera documentation is at http://www.cloudera.com/documentation/other/connectors/hive-jdbc/2-5-4/Cloudera-JDBC-Driver-for-Apache-Hive-Install-Guide-2-5-4.pdf

- For Hadoop/Hive you need to add all the above files (.JARs) to your CLASSPATH for *EACH* CPO server. This is a bit of a pain but it is a must. To do this, follow below...

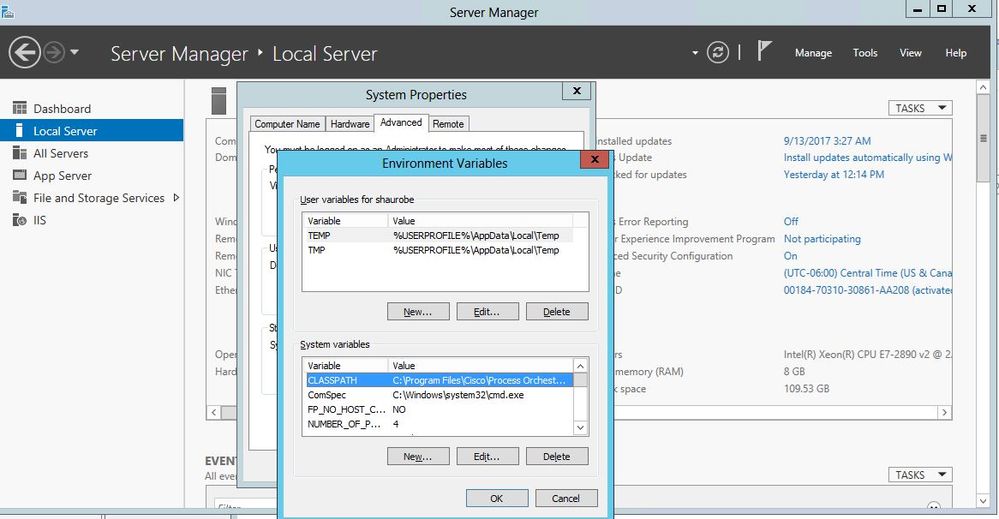

- Click on the server manager, and then click on the machine name on the Local Server area.

- On the new dialog, go to the Advanced tab and click Environment Variables

- If you do not have CLASSPATH, add it and then add the values. In some cases you might have to add them to your PATH variable as well. I did not, but it's possible.

- The CLASSPATH you want (for this) is as follows:

C:\Program Files\Cisco\Process Orchestrator\Adapters\JdbcDrivers\HiveJDBC4.jar;C:\Program Files\Cisco\Process Orchestrator\Adapters\JdbcDrivers\TCLIServiceClient.jar;C:\Program Files\Cisco\Process Orchestrator\Adapters\JdbcDrivers\hive_metastore.jar;C:\Program Files\Cisco\Process Orchestrator\Adapters\JdbcDrivers\hive_service.jar;C:\Program Files\Cisco\Process Orchestrator\Adapters\JdbcDrivers\libfb303-0.9.0.jar;C:\Program Files\Cisco\Process Orchestrator\Adapters\JdbcDrivers\libthrift-0.9.0.jar;C:\Program Files\Cisco\Process Orchestrator\Adapters\JdbcDrivers\log4j-1.2.14.jar;C:\Program Files\Cisco\Process Orchestrator\Adapters\JdbcDrivers\ql.jar;C:\Program Files\Cisco\Process Orchestrator\Adapters\JdbcDrivers\slf4j-api-1.5.8.jar;C:\Program Files\Cisco\Process Orchestrator\Adapters\JdbcDrivers\slf4j-log4j12-1.5.8.jar

- Copy the above into your NEW or already existing classpath.

- If you had to change your CLASSPATH (for any JDBC driver), you will need to restart your server (each node). If you only had to add the .JAR files to the directory, then you just need to bounce the CPO service, again on each node.

- Now go into the JDBC adapter: open the Administration workspace in CPO, then Adapters. Right click -> Properties on your JDBC adapter, then select the JDBC driver tab.

- Click New and create a new JDBC driver

- On the JDBC driver tab you will fill out the following information:

  - Jar File - click the "..." and select the main JAR file. In the Hadoop case that would be "HiveJDBC4.jar"

  - Driver Class Full Name - type in the full class name. Most of the time you can find this in the driver's documentation. In my case I used hive2, so I used "com.cloudera.hive.jdbc4.HS2Driver" for Hadoop

  - Provider Name - give it a provider name (who wrote the JDBC driver)

  - Default Port - the default port for the DB connections

  - Connection URL - this will be the default URL to connect with. You can use CPO variables to help you construct it

- Now you can click OK and save it.

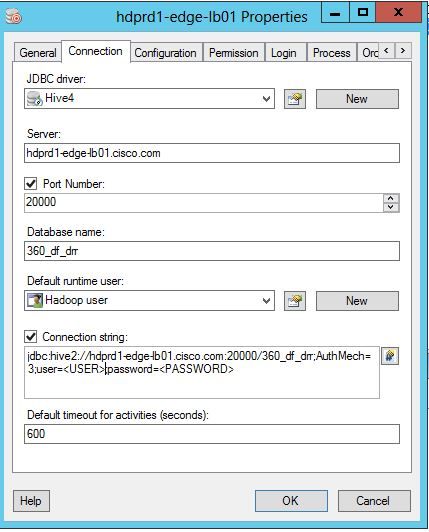
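If you want to sanity-check the two fiddly bits from the steps above (the long CLASSPATH value and the driver class name) before you rely on them, here is a minimal Java sketch. It is not part of CPO: the class `JdbcDriverSetupCheck` and its two helper methods are names I made up for illustration. It assumes all the driver JARs sit directly in one folder, and it joins them with `;` because that is the Windows CLASSPATH separator. The class check only tells you whether the JVM running the sketch can see the class, so run it with the driver JARs on the classpath.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Hypothetical helper, not shipped with CPO or the Cloudera driver.
public class JdbcDriverSetupCheck {

    // Default CPO driver folder named in the steps above.
    static final String DEFAULT_DIR =
            "C:\\Program Files\\Cisco\\Process Orchestrator\\Adapters\\JdbcDrivers";

    // Joins every .jar directly under driverDir into one Windows-style
    // CLASSPATH value (entries sorted, separated by ';').
    public static String buildClasspath(Path driverDir) throws IOException {
        try (Stream<Path> entries = Files.list(driverDir)) {
            return entries
                    .filter(p -> p.getFileName().toString().toLowerCase().endsWith(".jar"))
                    .map(p -> p.toAbsolutePath().toString())
                    .sorted()
                    .collect(Collectors.joining(";"));
        }
    }

    // Returns true if the named class can be found by this JVM's class loader.
    // The class is located but not initialized.
    public static boolean isDriverLoadable(String className) {
        try {
            Class.forName(className, false, JdbcDriverSetupCheck.class.getClassLoader());
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            return false;
        }
    }

    public static void main(String[] args) throws IOException {
        Path dir = Paths.get(args.length > 0 ? args[0] : DEFAULT_DIR);
        if (!Files.isDirectory(dir)) {
            System.out.println("Not a directory: " + dir);
            return;
        }
        System.out.println("CLASSPATH=" + buildClasspath(dir));
        // Driver class name taken from the step above (hive2 / HiveServer2).
        System.out.println("Driver class loadable: "
                + isDriverLoadable("com.cloudera.hive.jdbc4.HS2Driver"));
    }
}
```

Pass the driver folder as the first argument if yours is not in the default location. If the last line prints `false`, the JVM running the sketch could not find the driver class, which usually points at a missing JAR or a typo in the class name.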

- Next you can go to your Targets area and create your JDBC database connection!

- In targets you can right click->New and select JDBC database and begin to fill out the information

- You will select your JDBC driver you created above and then fill out the connection information

- If you need to use a more "custom" connection string, you can override it there

- In my case for Hadoop it looks like this:

Pretty neat huh? Now you can make select/insert/update/delete calls to your JDBC based databases! Reload them and use them!
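If a target refuses to connect, it can help to try the same driver and URL outside of CPO first. Below is a minimal standalone Java sketch of that idea. To be clear, this is not the connection string from my setup: the class `HiveJdbcSmokeTest`, the `hive2Url` helper, and the host you pass in are all placeholders for illustration. It assumes the generic HiveServer2 URL shape `jdbc:hive2://host:port/schema`, the usual HiveServer2 port of 10000, and a cluster that accepts the connection without extra authentication settings (yours may well need more).

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;

// Hypothetical smoke test, run outside CPO with the driver JARs on the classpath.
public class HiveJdbcSmokeTest {

    // Builds a HiveServer2-style JDBC URL. Host, port and schema are supplied
    // by the caller; nothing here comes from a real environment.
    public static String hive2Url(String host, int port, String schema) {
        return "jdbc:hive2://" + host + ":" + port + "/" + schema;
    }

    public static void main(String[] args) {
        if (args.length < 1) {
            System.out.println("usage: java HiveJdbcSmokeTest <hive-host>");
            return;
        }
        // Assumption: HiveServer2 on port 10000 and the "default" schema.
        String url = hive2Url(args[0], 10000, "default");
        try {
            // Same driver class that was entered in the CPO JDBC driver dialog.
            Class.forName("com.cloudera.hive.jdbc4.HS2Driver");
            try (Connection conn = DriverManager.getConnection(url);
                 Statement stmt = conn.createStatement();
                 ResultSet rs = stmt.executeQuery("SHOW TABLES")) {
                while (rs.next()) {
                    System.out.println(rs.getString(1));
                }
            }
        } catch (ClassNotFoundException e) {
            System.out.println("Driver class not found; check the JARs on the classpath.");
        } catch (SQLException e) {
            System.out.println("Connection or query failed: " + e.getMessage());
        }
    }
}
```

If this lists your tables, the driver and URL are good and any remaining trouble is on the CPO side (driver definition, target settings, or a node that was not restarted).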

As always I have a good VOD for you to review... so

ONTO THE VIDEO:

Standard End-O-Blog Disclaimer:

Thanks as always to all my wonderful readers and those who continue to stick with and use CPO. Big things are on the horizon and I hope that you will continue to use CPO and find great uses for it! If you have a really exciting automation story, please email it to me! (see below) I would love to compile some stories and feature customers or individual stories in an upcoming blog!

AUTOMATION BLOG DISCLAIMER: As always, this is a blog and contains my (Shaun Roberts) thoughts on CPO and automation, my thoughts on best practices, and my experiences with the product and customers. The above views are in no way representative of Cisco or any of its partners. None of these views are supported, and this is not a place to find standard product support. If you need standard product support, please get it via the current call-in numbers on Cisco.com or email tac@cisco.com

Thanks and Happy Automating!!!

--Shaun Roberts

shaurobe@cisco.com