GRE Tunnel MTU, Interface MTU, and Fragmentation


07-24-2018 10:07 AM - edited 07-24-2018 02:22 PM

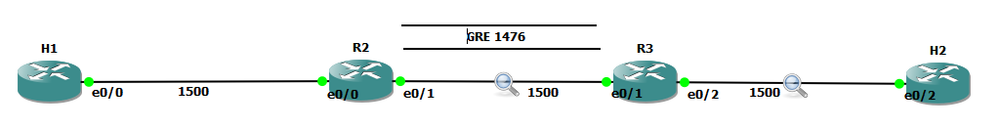

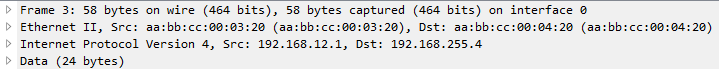

Whenever we create a tunnel interface, the GRE IP MTU is automatically set 24 bytes lower than the MTU of the outbound physical interface. Ethernet interfaces have a default MTU of 1500 bytes, so tunnel interfaces default to an IP MTU of 1476 bytes, 24 bytes less than the physical interface.

Why does the tunnel MTU need to be at least 24 bytes lower than the interface MTU? Because GRE adds a 4-byte GRE header and another 20-byte (outer) IP header. If the outbound physical interface is Ethernet, the frame that crosses the wire is expected to be 14 bytes larger still, or 18 bytes larger if the link uses 802.1Q encapsulation. If the traffic source sends a 1476-byte packet, the GRE tunnel interface adds 24 bytes of overhead before handing it down to the physical interface for transmission. The physical interface sees a total of 1500 bytes ready for transmission and adds the L2 header (14 bytes for Ethernet, 18 bytes for 802.1Q). This scenario does not lead to fragmentation. Life is good.

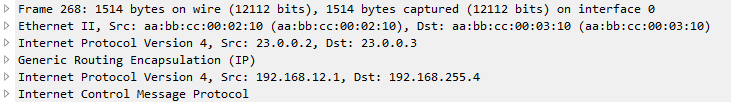

GRE traffic captured between R2 and R3 with a total of 1514 bytes
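The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, assuming a basic 4-byte GRE header (no key, sequence number, or checksum fields), an outer IPv4 header without options, and an Ethernet header counted without the FCS, as in the 1514-byte frames captured above. The function names are hypothetical.

```python
# Header sizes in bytes, as described above.
GRE_HEADER = 4          # basic GRE header, no optional fields
OUTER_IP_HEADER = 20    # outer IPv4 header, no options
GRE_OVERHEAD = GRE_HEADER + OUTER_IP_HEADER  # 24 bytes added by the tunnel
ETHERNET_HEADER = 14    # Ethernet II header (FCS not counted, as in Wireshark)
DOT1Q_HEADER = 18       # Ethernet header plus the 4-byte 802.1Q tag


def tunnel_ip_mtu(physical_mtu: int) -> int:
    """Largest inner packet that fits in one GRE packet without fragmentation."""
    return physical_mtu - GRE_OVERHEAD


def frame_size_on_wire(inner_packet: int, dot1q: bool = False) -> int:
    """Frame size seen on the physical link for one GRE-encapsulated packet."""
    l2_header = DOT1Q_HEADER if dot1q else ETHERNET_HEADER
    return inner_packet + GRE_OVERHEAD + l2_header


if __name__ == "__main__":
    mtu = tunnel_ip_mtu(1500)
    print(mtu)                                   # 1476
    print(frame_size_on_wire(mtu))               # 1514
    print(frame_size_on_wire(mtu, dot1q=True))   # 1518
```

The 1514-byte result matches the frame size in the R2-R3 capture; the 802.1Q case only changes the L2 header, not the tunnel MTU.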

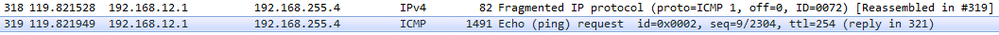

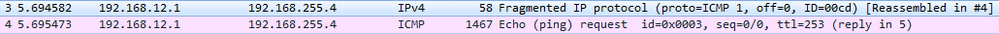

What if H1 sends a 1477-byte packet? When the router (R2 in this case) receives the packet and routes it out of the GRE tunnel interface, it sees that the packet is larger than the tunnel interface IP MTU of 1476 bytes, so it fragments the packet. When a GRE tunnel fragments a packet, each fragment is encapsulated with its own GRE and outer IP headers before being handed over for frame (Ethernet) encapsulation. (Wireshark displays the inner IP header, not the outer IP header of the GRE packet.)

| Layer (bytes) | Frame 319 (1491 bytes) | Frame 318 (82 bytes) |
|---|---|---|
| Ethernet | 14 | 14 |
| Outer IP Header | 20 | 20 |
| GRE | 4 | 4 |
| Original IP Header | 20 | 20 |
| ICMP | 1433 | 24 |
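To see why a 1477-byte packet cannot cross a 1476-byte tunnel IP MTU in one piece, here is a textbook IPv4 fragmentation sketch in Python, following the RFC 791 rules and assuming a 20-byte IP header with no options. It fills each fragment with as much data as fits, in 8-byte units. This is a model, not the IOS algorithm: the table above shows R2 split the 1457 bytes of ICMP data into 1433 and 24 bytes, so the exact split is implementation-specific. The names `Fragment` and `fragment` are hypothetical.

```python
from typing import List, NamedTuple

IP_HEADER = 20  # assumes no IP options


class Fragment(NamedTuple):
    offset: int           # byte offset of this fragment's data in the original payload
    data_len: int         # bytes of payload carried by this fragment
    more_fragments: bool  # MF flag


def fragment(packet_len: int, mtu: int) -> List[Fragment]:
    """Split one IPv4 packet of packet_len bytes for an interface with this MTU.

    Non-final fragments must carry a multiple of 8 data bytes, because the
    Fragment Offset field counts in 8-byte units (RFC 791).
    """
    payload = packet_len - IP_HEADER
    if packet_len <= mtu:
        return [Fragment(0, payload, False)]
    max_data = (mtu - IP_HEADER) // 8 * 8  # largest 8-byte-aligned chunk that fits
    fragments = []
    offset = 0
    while offset < payload:
        size = min(max_data, payload - offset)
        last = offset + size >= payload
        fragments.append(Fragment(offset, size, not last))
        offset += size
    return fragments


if __name__ == "__main__":
    for frag in fragment(1477, 1476):
        print(frag)
```

With these rules, `fragment(1477, 1476)` yields a 1456-byte first fragment and a 1-byte last fragment; in the GRE case each fragment is then encapsulated separately, which is why both frames in the table carry their own GRE and outer IP headers.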

When R3 receives the GRE packets, it removes the GRE and outer IP headers and forwards the fragments to H2 without reassembling them (Wireshark capture between R3 and H2):

| Layer (bytes) | Frame 4 (1467 bytes) | Frame 3 (58 bytes) |
|---|---|---|
| Ethernet | 14 | 14 |
| Original IP Header | 20 | 20 |
| ICMP | 1433 | 24 |
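A quick consistency check of the two tables: decapsulation removes only the 24 bytes of outer IP and GRE headers, while the 14-byte Ethernet header is rebuilt for the next hop at the same size. A minimal Python sketch with hypothetical helper names:

```python
ETHERNET = 14
OUTER_IP = 20
GRE = 4
INNER_IP = 20


def frame_len(*layers: int) -> int:
    """Total frame size as the sum of its layer sizes in bytes."""
    return sum(layers)


def after_decap(frame_size: int) -> int:
    """Frame size after the tail-end strips the outer IP and GRE headers."""
    return frame_size - (OUTER_IP + GRE)


if __name__ == "__main__":
    # Frames 319 and 318, captured between R2 and R3.
    big = frame_len(ETHERNET, OUTER_IP, GRE, INNER_IP, 1433)
    small = frame_len(ETHERNET, OUTER_IP, GRE, INNER_IP, 24)
    print(big, small)                            # 1491 82
    # Frames 4 and 3, captured between R3 and H2.
    print(after_decap(big), after_decap(small))  # 1467 58
```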

When the GRE headend fragments the packet before encapsulation like this, the receiving host (not the tunnel tail-end) reassembles the fragments; in this case, H2. This puts extra work on the receiving host, which has to hold the fragments in a buffer until all of them have arrived and the original packet can be reassembled.
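The buffering that H2 has to do can be sketched as a toy reassembly buffer in Python. It keys fragments by source, destination, IP identification, and protocol, as RFC 791 does, and returns the payload only once there are no gaps and the last fragment (MF flag clear) has arrived. This is an illustration under simplifying assumptions, not how any real IP stack works: it ignores reassembly timeouts, overlapping fragments, and memory limits, and the class name and addresses are hypothetical.

```python
from typing import Dict, Optional, Tuple

# (source, destination, IP identification, protocol) identifies one datagram.
FragKey = Tuple[str, str, int, int]


class Reassembler:
    """Toy IPv4 reassembly buffer for illustration only."""

    def __init__(self) -> None:
        self.pending: Dict[FragKey, Dict[int, bytes]] = {}
        self.total_len: Dict[FragKey, int] = {}

    def add(self, key: FragKey, offset: int, data: bytes,
            more_fragments: bool) -> Optional[bytes]:
        """Buffer one fragment; return the whole payload once it is complete."""
        frags = self.pending.setdefault(key, {})
        frags[offset] = data
        if not more_fragments:
            # Only the last fragment reveals the total payload length.
            self.total_len[key] = offset + len(data)
        total = self.total_len.get(key)
        if total is None:
            return None  # last fragment not seen yet

        payload = bytearray()
        for off in sorted(frags):
            if off != len(payload):
                return None  # gap or overlap; this toy does not resolve overlaps
            payload += frags[off]
        if len(payload) != total:
            return None

        # Complete: free the buffer and hand the datagram up the stack.
        del self.pending[key]
        del self.total_len[key]
        return bytes(payload)


if __name__ == "__main__":
    r = Reassembler()
    key = ("H1", "H2", 1, 1)  # hypothetical addresses and IP ID; protocol 1 = ICMP
    # Fragments may arrive in any order; nothing is delivered until both are in.
    print(r.add(key, 24, b"b" * 1433, more_fragments=False))  # None
    print(len(r.add(key, 0, b"a" * 24, more_fragments=True)))  # 1457
```

The fragment sizes (24 and 1433 bytes of ICMP data) echo the capture; the arrival order is made up to show that the buffer has to wait for every piece.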

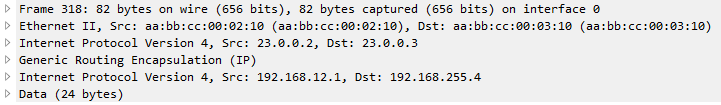

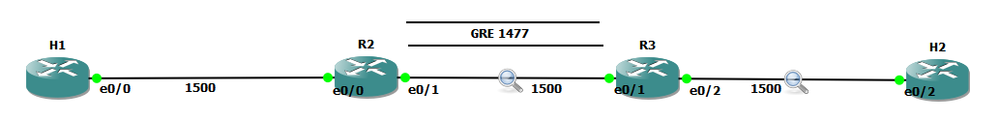

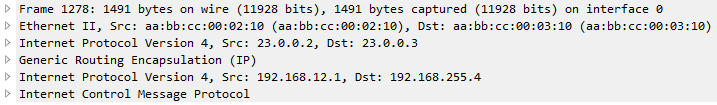

Another example: what if the GRE interface’s IP MTU were raised above 1476 bytes while the Ethernet MTU stays at 1500? Let’s say the GRE IP MTU is increased to 1477 bytes. A full-size packet handed over to Ethernet for transmission would then be 1501 bytes (1477 + 24), which exceeds the 1500-byte MTU and needs fragmentation. This time, the GRE packet itself is fragmented by the Ethernet interface for transmission.

```
R2(config-if)#int tunnel 0
R2(config-if)#ip mtu 1477
%Warning: IP MTU value set 1477 is greater than the current transport value 1476, fragmentation may occur
*Jul 22 02:17:09.542: %TUN-4-MTUCONFIGEXCEEDSTRMTU_IPV4: Tunnel0 IPv4 MTU configured 1477 exceeds tunnel transport MTU 1476
```
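The condition behind this warning is simple arithmetic: a full-size tunnel packet plus 24 bytes of GRE overhead must still fit in the physical MTU. A minimal Python sketch, assuming basic GRE over IPv4 without options; the function names are hypothetical, and this is not how IOS itself computes the transport MTU.

```python
GRE_OVERHEAD = 24  # 20-byte outer IPv4 header + 4-byte basic GRE header


def transport_mtu(physical_mtu: int) -> int:
    """Largest tunnel IP MTU whose GRE packets still fit the physical MTU."""
    return physical_mtu - GRE_OVERHEAD


def gre_packet_size(inner_len: int) -> int:
    """Size of the IP packet handed to the physical interface."""
    return inner_len + GRE_OVERHEAD


def outer_fragmentation_needed(tunnel_ip_mtu: int, physical_mtu: int) -> bool:
    """True if a full-size tunnel packet must be fragmented after encapsulation."""
    return gre_packet_size(tunnel_ip_mtu) > physical_mtu


if __name__ == "__main__":
    print(transport_mtu(1500))                     # 1476
    print(gre_packet_size(1477))                   # 1501
    print(outer_fragmentation_needed(1477, 1500))  # True
    print(outer_fragmentation_needed(1476, 1500))  # False
```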

Let’s send a 1477-byte packet from H1 to H2 (192.168.255.4):

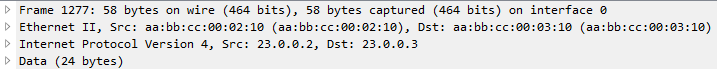

Note: Wireshark displays the inner IP header of frame 1278, but since frame 1277 has only one IP header, the source and destination IPs Wireshark shows for it are the tunnel endpoints.

| Layer (bytes) | Frame 1278 (1491 bytes) | Frame 1277 (58 bytes) |
|---|---|---|
| Ethernet | 14 | 14 |
| Outer IP Header | 20 | 20 |
| GRE | 4 | |
| Original IP Header | 20 | |
| ICMP | 1433 | 24 |

As you can see, the GRE packet was fragmented into two frames. However, only one of them carries the GRE header (frame 1278); the other (frame 1277) has no GRE header, only an IP header.

The problem with this kind of setup is that R3, the tunnel tail-end, has to do extra work: it must reassemble the fragments before it can decapsulate the GRE packet.

H1:

```
ping 192.168.255.4 size 1477 repeat 100
Type escape sequence to abort.
Sending 100, 1477-byte ICMP Echos to 192.168.255.4, timeout is 2 seconds:
!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!
!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!
Success rate is 100 percent (100/100), round-trip min/avg/max = 3/8/29 ms
```

R3:

```
sh ip traffic int eth0/1
Ethernet0/1 IP-IF statistics :
  Rcvd:  200 total, 152100 total_bytes
         0 format errors, 0 hop count exceeded
         0 bad header, 0 no route
         0 bad destination, 0 not a router
         0 no protocol, 0 truncated
         0 forwarded
         200 fragments, 100 total reassembled
         0 reassembly timeouts, 0 reassembly failures
         0 discards, 100 delivers
  Sent:  1 total, 84 total_bytes 0 discards
         1 generated, 0 forwarded
         0 fragmented into, 0 fragments, 0 failed
  Mcast: 0 received, 0 received bytes
         0 sent, 0 sent bytes
  Bcast: 0 received, 0 sent
```
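These counters line up with the capture. Assuming each of the 100 echo requests reaches R3 as the two outer fragments seen in frames 1278 and 1277 (1491 and 58 bytes on the wire, minus the 14-byte Ethernet header), a few lines of Python reproduce R3's received fragment and byte counts:

```python
PINGS = 100
ETHERNET = 14

# IP packet sizes of the two outer fragments per echo request,
# taken from frame 1278 (1491 bytes) and frame 1277 (58 bytes).
fragment_ip_sizes = [1491 - ETHERNET, 58 - ETHERNET]  # [1477, 44]

fragments_received = PINGS * len(fragment_ip_sizes)
bytes_received = PINGS * sum(fragment_ip_sizes)
datagrams_reassembled = PINGS  # two fragments -> one GRE packet each time

print(fragments_received, bytes_received, datagrams_reassembled)  # 200 152100 100
```

The result matches `Rcvd: 200 total, 152100 total_bytes` and `200 fragments, 100 total reassembled`: every echo request cost R3 one reassembly.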

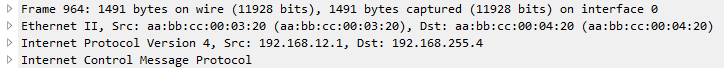

When R3 forwards the traffic to H2, the fragments have already been reassembled, so each packet is sent as a single frame:

| Layer (bytes) | Frame 964 (1491 bytes) |
|---|---|
| Ethernet | 14 |
| Original IP Header | 20 |
| ICMP | 1457 |

When H2 responds to the ICMP echo request, its reply is the same size, which causes the same scenario in the R3-to-R2 direction. Both R2 and R3 may therefore do double work: fragmentation and reassembly.

This is why we don’t want the GRE IP MTU and the interface MTU to be less than 24 bytes apart. Some implementations recommend setting the GRE IP MTU to 1400 bytes to cover additional overhead, especially when encryption comes into play (GRE/IPsec). We do not want the exit interface to do the fragmentation, because the tail-end of the GRE tunnel then becomes responsible for reassembling the fragmented data, and this may cause high CPU usage when there is a significant amount of traffic. As with H2, R3 has to allocate a buffer to hold the fragments until they can be reassembled. Not to mention that if there are security devices in the path of the GRE tunnel and the fragments arrive out of order, these devices may drop a fragment, which in turn causes the other fragments to be dropped too.
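One way to read the 1400-byte recommendation is as headroom: whatever is left of the physical MTU after GRE's 24 bytes and the tunnel IP MTU is available for further encapsulation such as IPsec. A minimal Python sketch, assuming basic GRE over IPv4; it deliberately does not model IPsec overhead, which depends on the transform, mode, and padding. The function name is hypothetical.

```python
GRE_OVERHEAD = 24  # 20-byte outer IPv4 header + 4-byte basic GRE header


def headroom(physical_mtu: int, tunnel_ip_mtu: int) -> int:
    """Bytes left for extra encapsulation; negative means full-size
    tunnel packets must be fragmented after encapsulation."""
    return physical_mtu - GRE_OVERHEAD - tunnel_ip_mtu


if __name__ == "__main__":
    for tunnel_mtu in (1400, 1476, 1477):
        print(tunnel_mtu, headroom(1500, tunnel_mtu))
    # 1400 76
    # 1476 0
    # 1477 -1
```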

Traffic with the DF bit set is not discussed here.


Very informative. MTU is always a confusing topic for me. I am still not clear on how the GRE tunnel learns the MTU of the output interface. The tunnel source is just an IP address, and it could be a loopback interface. The actual physical output interface may change dynamically according to routing. A lot of people set the MTU manually; I don't like that, as the MTU will just get smaller and smaller (if there is another router behind trying to establish its own tunnel, for example).

I also want to understand the DF-bit scenarios, as TCP sets its MSS using the result of Path MTU Discovery. With so many parties involved in a bidirectional connection, it is not clear who is responsible for sending the ICMP unreachable.


If we need to pass only IPv4 unicast traffic we can use IP-IP tunnel instead of GRE.

The overhead is 20 bytes, not 24 as with GRE.

Example IP-IP tunnel:

```
interface Tunnel0
 ip address 10.10.10.253 255.255.255.252
 tunnel source 1.1.1.1
 tunnel destination 2.2.2.2
 tunnel mode ipip
```
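For comparison, here is the same overhead arithmetic for the two tunnel types mentioned in this thread, as a minimal Python sketch. It only subtracts the stated header sizes (20 bytes for IP-IP, 24 for basic GRE) from the physical MTU; it is not a statement about the MTU IOS assigns by default, and the mode strings are hypothetical labels that merely echo `tunnel mode ipip`.

```python
OUTER_IP_HEADER = 20  # IPv4 header without options
GRE_HEADER = 4        # basic GRE header without optional fields

OVERHEAD = {
    "gre": OUTER_IP_HEADER + GRE_HEADER,  # 24 bytes
    "ipip": OUTER_IP_HEADER,              # 20 bytes
}


def max_inner_packet(physical_mtu: int, mode: str) -> int:
    """Largest inner packet that fits without fragmenting the outer packet."""
    return physical_mtu - OVERHEAD[mode]


if __name__ == "__main__":
    print(max_inner_packet(1500, "gre"))   # 1476
    print(max_inner_packet(1500, "ipip"))  # 1480
```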

If we need GRE/IPsec, it is better to use a VTI tunnel. VTI is an industry standard and all vendors support it.


Not sure if this answers the DF-bit question, but this blog post (from a few years ago) covers all the fragmentation/MTU/DF variations I could think of: https://sites.google.com/view/fieldnoted/fragments-mtu-and-gre

Hope it helps.


Hi,

You can, instead, use this document: https://www.cisco.com/c/en/us/support/docs/ip/generic-routing-encapsulation-gre/25885-pmtud-ipfrag.html

Regards,

Cristian Matei.


Very informative document. I've got to spend some more time reading it. I think a very important piece is that the local VPN router remembers the MTU error sent by the peer VPN router and uses that information to send the ICMP "too big" message to the source when it retransmits. That is some wicked magic happening there!


Networking is always like magic, especially for the end users, but sometimes for the engineer as well :)


Great, just one question: why, in the above examples, does Wireshark show the IP fragments in reverse order with respect to the fragmentation order performed by R2? Thanks.


Good question @CARLO CIANFARANI - they have been sent out of order.

My guess would be that the QoS configured on the Ethernet interface transmitted the smaller fragment before the larger one.


@Joseph W. Doherty the diagram shows e0/x interfaces, which means either very old hardware with 10M interfaces or an emulator (of that old hardware); either way it is running a very old IOS, so take your pick: wacky QoS or an IOS bug. I did say "my guess", and I was only aiming to answer Carlo's question directly, not critique the whole article <smile>


I don't really know if it is just the way Wireshark shows "logically reassembled fragments" of the same IP packet.


No - Wireshark shows the packets in the order they are captured in the pcap file.

So in this case you'd expect to see the full size packet first and the remaining fragment second.

Wireshark can only re-assemble the complete packet after the last fragment.


I know this thread has been around a while, but I have a question.

Instead of setting the tunnel interface to 1476, could you set the MTU for the traffic inbound to the router (the local LAN) to 1476, and then set the tunnel interface to 1500?

So, sort of the reverse. Wouldn’t that mean traffic hitting the router is at 1476, then 24 bytes of headers are added, and it hits the tunnel at 1500?


Yes, that would work, but it means you're giving up 24 bytes of maximum capacity all the time.
