Cisco Community > Technology and Support > Networking > Routing

Hierarchical QoS policy and priority vs bandwidth command, testing with iPerf

02-23-2021 07:13 AM - edited 02-23-2021 07:18 AM

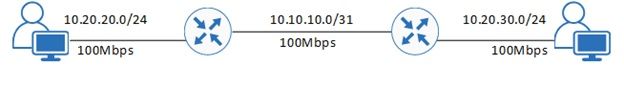

I am testing some QoS policy configurations on two Cisco 1841 routers.

In the real world, one side of this would terminate on a 10 Gbps NNI interface on an ASR9001 as an 802.1Q-tagged sub-interface, and the other on a C1111-4P with a 100 Mbps WAN termination. This particular setup also uses Q-in-Q, with two inner VLAN tags providing two VRFs: one is limited to 70 Mbps and the other to 30 Mbps. The 30 Mbps VRF needs to be further split to guarantee 10 Mbps to VoIP and 20 Mbps to everything else.
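As a quick sanity check of the production design, the rate hierarchy can be modelled as a tree and each level checked against its parent. This is a minimal Python sketch; the node names `VRF-A` and `VRF-B` are hypothetical labels for the 70 Mbps and 30 Mbps VRFs.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    rate_mbps: float
    children: list["Node"] = field(default_factory=list)


def oversubscribed(node: Node) -> list[str]:
    """Return the names of nodes whose children's rates add up to more than their own."""
    problems = []
    if node.children and sum(c.rate_mbps for c in node.children) > node.rate_mbps:
        problems.append(node.name)
    for child in node.children:
        problems.extend(oversubscribed(child))
    return problems


# Hypothetical model of the design: 100 Mbps WAN -> 70 + 30 Mbps VRFs,
# with the 30 Mbps VRF split into 10 Mbps VoIP and 20 Mbps for everything else.
wan = Node("WAN", 100, [
    Node("VRF-A", 70),
    Node("VRF-B", 30, [Node("VoIP", 10), Node("other", 20)]),
])

print(oversubscribed(wan))  # [] means every level fits inside its parent
```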

I have therefore stripped this back to two 1841 routers connected back to back with an Ethernet crossover cable on their FE0/0 interfaces. I have created a parent policy that shapes the traffic to 30 Mbps, with a child policy that provides a 10 Mbps LLQ for a voice class and 20 Mbps of bandwidth for class-default. I am using an ACL to match traffic for the voice class so that I can simulate voice and non-voice traffic.

I have a laptop on each side connected to a switchport, and I am using iPerf3 to generate traffic.

The QoS configuration on both routers is:

```
! Match the iPerf3 "voice" test traffic on TCP/65001 in either direction
ip access-list extended qos-acl
 permit tcp any any eq 65001
 permit tcp any eq 65001 any
!
class-map match-any VOICE-CLASS
 match access-group name qos-acl
!
! Child policy: priority and bandwidth values are in kbps
policy-map POLICY-QOS-CHILD-POLICY-30MB
 class VOICE-CLASS
  priority 10000
 class class-default
  set dscp default
  bandwidth 20000
!
! Parent policy: shape average is in bps (30 Mbps), then apply the child policy
policy-map POLICY-QOS-OUT-30M
 class class-default
  shape average 30000000
  service-policy POLICY-QOS-CHILD-POLICY-30MB
!
interface FastEthernet0/0
 ip address 10.10.10.1 255.255.255.254
 duplex auto
 speed auto
 service-policy output POLICY-QOS-OUT-30M
!
```
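The policy mixes units: `priority` and `bandwidth` take kbps, while `shape average` takes bps. A small Python sketch that normalises the values from the configuration above to Mbps shows that the child guarantees add up to the full shaped rate:

```python
# Rates exactly as written in the lab policy, with their IOS units.
SHAPE_AVERAGE_BPS = 30_000_000   # shape average 30000000 (bps)
PRIORITY_KBPS = 10_000           # priority 10000 (kbps), VOICE-CLASS
BANDWIDTH_KBPS = 20_000          # bandwidth 20000 (kbps), class-default


def kbps_to_mbps(kbps: float) -> float:
    return kbps / 1_000


def bps_to_mbps(bps: float) -> float:
    return bps / 1_000_000


parent = bps_to_mbps(SHAPE_AVERAGE_BPS)
children = kbps_to_mbps(PRIORITY_KBPS) + kbps_to_mbps(BANDWIDTH_KBPS)

print(f"parent shaper: {parent} Mbps, child guarantees: {children} Mbps")
```

Because the guarantees consume all 30 Mbps, there is no spare capacity left to redistribute when both classes are busy.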

On one side I have a PC running iPerf3 in server mode in two separate command prompt sessions: one listening on TCP/65001 (VoIP) and the other on TCP/65002.

On the other side, I run iPerf3 in client mode and connect to the server on either TCP/65001 or TCP/65002. If I run a single session to either port, it reaches around 30 Mbps. If I run both sessions simultaneously, I see around 6 Mbps for the traffic simulating VoIP and around 23 Mbps for the other (6.05 Mbps and 23 Mbps).

If I change the policy so that the VoIP class has a 'bandwidth' statement rather than a 'priority' statement, I see roughly a 10/20 Mbps split (10.2 Mbps and 19.3 Mbps).
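Putting the reported numbers side by side helps show what actually changes between the two variants. This is a Python sketch; the values are copied from the two tests above, and "voice"/"other" are just labels for the two iPerf3 sessions.

```python
# Per-class goodput reported for each child-policy variant (Mbps).
CONFIGURED = {"voice": 10.0, "other": 20.0}
MEASURED = {
    "priority": {"voice": 6.05, "other": 23.0},
    "bandwidth": {"voice": 10.2, "other": 19.3},
}


def summarize(result: dict[str, float]) -> tuple[float, dict[str, float]]:
    """Return total goodput and each class's goodput as a % of its configured rate."""
    total = sum(result.values())
    pct = {cls: 100 * mbps / CONFIGURED[cls] for cls, mbps in result.items()}
    return total, pct


for variant, result in MEASURED.items():
    total, pct = summarize(result)
    shares = ", ".join(f"{cls} {p:.1f}%" for cls, p in pct.items())
    print(f"{variant:>9}: total {total:.2f} Mbps; {shares}")
```

In both variants the total is just under the 30 Mbps shaped rate (29.05 vs 29.5 Mbps); what changes is how it is divided between the classes.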

I know the priority command operates differently from the bandwidth command; however, I was expecting to see a 10/20 split with both configurations. The leaky bucket concept always confuses me...

Is this a result of iPerf3 and TCP?
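Cisco describes both policing and shaping with a token bucket (closely related to the leaky bucket model): tokens arrive at the configured rate up to a maximum depth (Bc), and a packet needs tokens equal to its size. The difference is what happens when the bucket runs dry: a policer drops the packet, while a shaper holds it until enough tokens accumulate. The following is a simplified Python sketch of that idea, not IOS's actual implementation; the 10 Mbps rate and two-packet depth are arbitrary example values.

```python
class TokenBucket:
    def __init__(self, rate_bps: float, depth_bits: float) -> None:
        self.rate_bps = rate_bps        # refill rate (the CIR), bits per second
        self.depth_bits = depth_bits    # bucket depth (the burst, Bc), bits
        self.tokens = depth_bits        # start with a full bucket
        self.last_t = 0.0               # time of the last update, seconds

    def _refill(self, now: float) -> None:
        elapsed = max(0.0, now - self.last_t)
        self.tokens = min(self.depth_bits, self.tokens + elapsed * self.rate_bps)
        self.last_t = max(self.last_t, now)

    def police(self, now: float, size_bits: float) -> bool:
        """Policer: forward if enough tokens, otherwise drop. Never delays."""
        self._refill(now)
        if self.tokens >= size_bits:
            self.tokens -= size_bits
            return True
        return False

    def shape(self, now: float, size_bits: float) -> float:
        """Shaper: return the departure time. Excess packets wait instead of dropping."""
        assert size_bits <= self.depth_bits, "packet larger than the bucket"
        self._refill(now)
        start = self.last_t             # FIFO: cannot leave before the previous packet
        wait = max(0.0, (size_bits - self.tokens) / self.rate_bps)
        self._refill(start + wait)      # tokens accumulate while the packet is queued
        self.tokens -= size_bits
        return start + wait


PKT = 1500 * 8  # one 1500-byte packet, in bits
policer = TokenBucket(rate_bps=10e6, depth_bits=2 * PKT)
shaper = TokenBucket(rate_bps=10e6, depth_bits=2 * PKT)

# A burst of 10 back-to-back packets arriving at t = 0.
forwarded = [policer.police(0.0, PKT) for _ in range(10)]
departures = [shaper.shape(0.0, PKT) for _ in range(10)]
print(forwarded.count(True), "of 10 forwarded by the policer")
print("shaper departures (ms):", [round(t * 1000, 1) for t in departures])
```

With this burst, the policer forwards only the two packets that fit in the bucket, whereas the shaper forwards all ten, spaced 1.2 ms apart (12,000 bits at 10 Mbps).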

02-23-2021 07:28 AM

Hello @andrew.butterworth,

Your results are interesting:

>> If I run both sessions simultaneously I see around 6Mbps for the traffic simulating VoIP and around 23Mbps for the other (6.05Mbps & 23Mbps).

>> If I change the policy so that the VoIP class has a 'bandwidth' statement rather than a 'priority' statement then I see roughly a 10Mbps/20Mbps split (10.2Mbps & 19.3Mbps).

First of all, real VoIP traffic is UDP-based, so it does not suffer from TCP windowing effects.

The LLQ can have an implicit policer that prevents it from starving the other queues. In your case, the effect of this policer is to reduce the goodput of the "VoIP traffic", probably because of drops occurring during the test.
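To illustrate why drops from such a policer hurt a TCP flow more than a constant-rate (UDP-like) stream, here is a deliberately crude per-RTT Python model. It is an assumption-laden sketch, not how IOS implements LLQ: the rate and burst values are arbitrary, and TCP is reduced to additive increase / multiplicative decrease on any loss. It will not reproduce the 6.05 Mbps figure; it only shows the mechanism.

```python
def through_policer(rate: int, burst: int, rtts: int, aimd: bool) -> float:
    """Fraction of the policed rate delivered over `rtts` round trips.

    rate:  tokens (packets) added per RTT, i.e. the policed rate
    burst: bucket depth in packets; unused tokens are capped here
    aimd:  True for a TCP-like sender, False for a constant-rate sender at `rate`
    """
    tokens = burst
    cwnd = 1
    delivered = 0
    for _ in range(rtts):
        offered = cwnd if aimd else rate
        available = tokens + rate               # carried-over tokens + this RTT's refill
        sent = min(offered, available)
        tokens = min(burst, available - sent)   # unused tokens, capped at the depth
        delivered += sent
        if aimd:
            if sent < offered:                  # the policer dropped the excess
                cwnd = max(1, cwnd // 2)        # multiplicative decrease
            else:
                cwnd += 1                       # additive increase
    return delivered / (rate * rtts)


print(f"TCP-like (AIMD): {through_policer(100, 10, 10_000, aimd=True):.2f}")
print(f"constant rate  : {through_policer(100, 10, 10_000, aimd=False):.2f}")
```

The constant-rate sender never exceeds the policed rate and delivers all of it, while the AIMD sender repeatedly overshoots, loses packets, halves its window, and so averages noticeably below the policed rate.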

When you use the bandwidth command for both traffic classes, you get different results because a bandwidth class has no implicit policer.
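With `bandwidth` on both classes, a fluid weighted max-min (water-filling) model captures the expected outcome when both classes are backlogged. This Python sketch approximates CBWFQ rather than reproducing its scheduler, and it assumes the VoIP class was configured with `bandwidth 10000` and that spare capacity is shared in proportion to the configured bandwidths (the exact excess-sharing rule depends on the IOS release and configuration):

```python
def allocate(link: float, demand: dict[str, float],
             weight: dict[str, float]) -> dict[str, float]:
    """Weighted max-min allocation of `link` among classes with the given demands."""
    alloc = {c: 0.0 for c in demand}
    active = {c for c, d in demand.items() if d > 0}
    remaining = link
    while active and remaining > 1e-9:
        total_w = sum(weight[c] for c in active)
        share = {c: remaining * weight[c] / total_w for c in active}
        satisfied = {c for c in active if demand[c] <= share[c]}
        if not satisfied:                  # every class wants more than its share
            for c in active:
                alloc[c] = share[c]
            break
        for c in satisfied:                # small demands get exactly what they ask for
            alloc[c] = demand[c]
            remaining -= demand[c]
        active -= satisfied
    return alloc


# Assumed weights: bandwidth 10000 (voice) and bandwidth 20000 (class-default), in kbps.
WEIGHTS = {"voice": 10.0, "other": 20.0}
print(allocate(30.0, {"voice": 100.0, "other": 100.0}, WEIGHTS))  # both greedy
print(allocate(30.0, {"voice": 4.0, "other": 100.0}, WEIGHTS))    # voice needs less
```

When both flows are greedy, the model gives the configured 10/20 split, in line with the second test; when one class needs less than its guarantee, the other can use the leftover.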

Interestingly, your tests demonstrate the presence of this implicit policer, at least on the devices under test.

Hope this helps

Giuseppe

02-23-2021 08:23 AM

Ditto.

It's not uncommon for Cisco policers, using the default Tc/Bc values, to cause TCP flows to achieve a lower effective rate than the configured CIR. Basically, the impact is much like that of an interface whose FIFO queue is too small for TCP's BDP (bandwidth-delay product).
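The Tc/Bc point can be made concrete with two products: the policer burst Bc = CIR × Tc and TCP's bandwidth-delay product BDP = rate × RTT. If Bc is much smaller than the data TCP sends in one window, window-sized bursts overflow the bucket and the excess is dropped. A minimal Python sketch; the 10 Mbps CIR, 10 ms Tc and 50 ms RTT are hypothetical values, not IOS defaults or measurements from this lab:

```python
def bytes_for(rate_bps: float, seconds: float) -> float:
    """Bytes carried at `rate_bps` during `seconds` (rate x time / 8)."""
    return rate_bps * seconds / 8


def compare(cir_bps: float, tc_s: float, rtt_s: float) -> tuple[float, float]:
    bc = bytes_for(cir_bps, tc_s)     # policer burst: Bc = CIR x Tc
    bdp = bytes_for(cir_bps, rtt_s)   # window TCP needs to keep the path full
    verdict = "Bc < BDP: window-sized bursts can be policed" if bc < bdp else "Bc >= BDP"
    print(f"Bc = {bc:,.0f} B, BDP = {bdp:,.0f} B -> {verdict}")
    return bc, bdp


# Hypothetical values: 10 Mbps CIR, 10 ms Tc, 50 ms RTT.
compare(cir_bps=10e6, tc_s=0.010, rtt_s=0.050)
```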
