
using IPSLA and Track for multiple static routes


08-28-2019 02:04 PM

Here is our setup.

Datacenter1 = DC1

Datacenter2 = DC2

Point to Point1 = PTP1

Point to Point2 = PTP2

Internet = INET

We have some Cisco 3650s on which we want to configure a static route for a new point-to-point link being set up between our two datacenters. The switchports on each core that connect to the point-to-point link will be converted into L3 routed ports. We also have an internet connection.

My issue is how to handle three separate routes on cores that are not stacked.

On Core1 I would set up the following.

ip sla 1

icmp-echo 10.9.0.1 source-interface gig0/20

threshold 10

timeout 1000

frequency 2

ip sla schedule 1 life forever start-time now

ip route 10.9.0.0 255.255.0.0 x.x.x.x track 1 (x.x.x.x = L3 IP of PTP1)

ip route 10.9.0.0 255.255.0.0 10.219.254.10 10

This is easy enough, but I'm unclear on how to scale it out when PTP2 is on Core2. How do I make PTP1 the primary static route for 10.9.0.0 on Core1, but if 10.9.0.1 becomes unreachable, send traffic to PTP2 on Core2 rather than to my 10.219.254.10 route, and only if PTP2 on Core2 is ALSO unavailable send it out via 10.219.254.10?

That's my trouble. Am I explaining it correctly?


08-29-2019 09:54 AM - edited 08-29-2019 09:55 AM

You will need another route that points to the other next hop (PTP2) with an AD higher than the primary route but lower than the last resort. You will also need another IP SLA and track configuration to verify reachability for the secondary route.

This type of configuration tends not to scale very well. Is it possible to use dynamic routing in your environment?
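As a rough illustration of the three-tier approach described above, here is a minimal sketch for Core1. It is not a tested configuration. The addresses 198.51.100.2 (far end of PTP1), 198.51.100.6 (far end of PTP2) and 192.0.2.2 (Core2's address on the inter-core link) are hypothetical placeholders, and the distance of 5 is just one value that sits between the primary route (default AD 1) and the last-resort route (AD 10) from the original post.

```
! Hypothetical addressing, not taken from the thread:
!   198.51.100.2 = far end of PTP1 (directly attached to Core1)
!   198.51.100.6 = far end of PTP2 (attached to Core2)
!   192.0.2.2    = Core2's address on the inter-core link
!
! Host route so Core1 sends the PTP2 probe via Core2
! rather than via its default route
ip route 198.51.100.6 255.255.255.255 192.0.2.2
!
ip sla 1
 icmp-echo 198.51.100.2 source-interface GigabitEthernet0/20
 threshold 10
 timeout 1000
 frequency 2
ip sla schedule 1 life forever start-time now
!
ip sla 2
 icmp-echo 198.51.100.6
 threshold 10
 timeout 1000
 frequency 2
ip sla schedule 2 life forever start-time now
!
track 1 ip sla 1 reachability
track 2 ip sla 2 reachability
!
! Primary: PTP1 (default AD 1), installed only while track 1 is up
ip route 10.9.0.0 255.255.0.0 198.51.100.2 track 1
! Secondary: via Core2 toward PTP2, installed only while track 2 is up
ip route 10.9.0.0 255.255.0.0 192.0.2.2 5 track 2
! Last resort: untracked floating static toward the internet path
ip route 10.9.0.0 255.255.0.0 10.219.254.10 10
```

Core2 would need a matching set of routes that prefers its own PTP2 link whenever PTP1 is down, otherwise traffic handed over by Core1 could be sent straight back.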


08-29-2019 10:56 AM

Hello,

You do indeed need three static routes, two SLAs, and a boolean track list. Below is a configuration example. If you cannot work out how to translate it to your own configuration, post the full running configuration so we can customize it.

track 1 ip sla 1 reachability

!

track 2 ip sla 2 reachability

!

track 3 list boolean or

object 1

object 2

!

interface FastEthernet0/0

description ISP Primary

ip address 100.100.100.1 255.255.255.252

duplex auto

speed auto

media-type rj45

!

interface FastEthernet0/1

description ISP Secondary

ip address 150.150.150.1 255.255.255.252

duplex auto

speed auto

media-type rj45

!

interface FastEthernet0/2

ip address 200.200.200.1 255.255.255.252

description ISP Tertiary

duplex auto

speed auto

media-type rj45

!

ip route 0.0.0.0 0.0.0.0 100.100.100.2 track 1

ip route 0.0.0.0 0.0.0.0 150.150.150.2 100 track 2

ip route 0.0.0.0 0.0.0.0 200.200.200.2 200

!

ip sla 1

icmp-echo 8.8.8.8 source-interface FastEthernet0/0

threshold 1000

timeout 3000

frequency 3

!

ip sla schedule 1 life forever start-time now

!

ip sla 2

icmp-echo 8.8.8.8 source-interface FastEthernet0/1

threshold 1000

timeout 3000

frequency 3

!

ip sla schedule 2 life forever start-time now

!

event manager applet CLEAR_NAT

event track 3 state any

action 1.0 cli command "enable"

action 2.0 cli command "clear ip nat translation *"

action 3.0 cli command "end"


08-29-2019 02:04 PM

This is very helpful. The one thing that I don't think is addressed is the following.

MPLS1, MPLS2, and Internet are not all plugged into the same core.

I have 2 cores, which are not stacked.

So MPLS1 is plugged into core 1, MPLS2 is plugged into core 2, and Internet is plugged into both cores using an HSRP next hop IP.

The thing I'm having trouble wrapping my head around is how to configure for the following scenario.

For inter-datacenter traffic we want to use MPLS1 as the primary link. If MPLS1 goes down or its next hop is unreachable, we want to send inter-datacenter traffic out MPLS2. If MPLS2 also goes down or its next hop is unreachable, then use the Internet HSRP next hop IP.

What I'm struggling with is this: if MPLS1 on Core1 goes down, Core1 needs to send traffic to MPLS2 on Core2. But Core2 also needs to know that MPLS1 on Core1 is down, so that it does not try to redirect the traffic back to Core1's MPLS1.

Also, can you explain the event manager applet commands? What are they doing, and why are they needed?

Core1 L3 IP - 100.100.100.1 <> 100.100.100.2

Core2 L3 IP - 150.150.150.1 <> 150.150.150.2

Internet - 10.219.254.10

CORE1

!

track 1 ip sla 1 reachability

!

track 2 ip sla 2 reachability

!

int gig1/0/1

description CORE1-MPLS1

ip address 100.100.100.1 255.255.255.252

!

int gig1/0/3

description INTERNET

switchport mode access

switchport access vlan 999

!

ip route 10.119.0.0 255.255.0.0 100.100.100.2 track 1

ip route 10.119.0.0 255.255.0.0 150.150.150.2 100 track 2

ip route 10.119.0.0 255.255.0.0 10.219.254.10 200

ip route 0.0.0.0 0.0.0.0 10.219.254.10

!

ip sla 1

icmp-echo 100.100.100.2 source-interface gig1/0/1

threshold 10

timeout 1000

frequency 2

!

ip sla schedule 1 life forever start-time now

!

ip sla 2

icmp-echo 150.150.150.2 source-interface gig1/0/1

threshold 10

timeout 1000

frequency 2

!

ip sla schedule 2 life forever start-time now

CORE2

!

track 1 ip sla 1 reachability

!

track 2 ip sla 2 reachability

!

int gig1/0/1

description CORE2-MPLS2

ip address 150.150.150.1 255.255.255.252

!

int gig1/0/3

description INTERNET

switchport mode access

switchport access vlan 999

!

ip route 10.119.0.0 255.255.0.0 100.100.100.2 track 1

ip route 10.119.0.0 255.255.0.0 150.150.150.2 100 track 2

ip route 10.119.0.0 255.255.0.0 10.219.254.10 200

ip route 0.0.0.0 0.0.0.0 10.219.254.10

!

ip sla 1

icmp-echo 100.100.100.2 source-interface gig1/0/1

threshold 10

timeout 1000

frequency 2

!

ip sla schedule 1 life forever start-time now

!

ip sla 2

icmp-echo 150.150.150.2 source-interface gig1/0/1

threshold 10

timeout 1000

frequency 2

!

ip sla schedule 2 life forever start-time now


08-29-2019 11:48 PM

Hello,

Can you post a schematic drawing of your topology, so we can see exactly what is connected to what? By 'not stacked', do you mean not connected to each other?

The EEM script clears the NAT translations when a failover occurs, so you don't have to wait for them to time out.


08-30-2019 06:28 AM

Are you running HSRP to provide gateway redundancy for devices behind the core that need to be routed over the MPLS? As Georg mentioned, a diagram would be helpful.


09-09-2019 08:57 AM

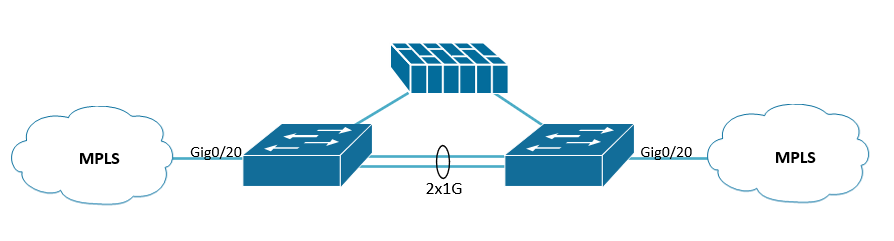

Relevant topology

The two switches are L3 switches, not stacked, just connected to each other via a port channel. The upstream device is a firewall that handles the internet static route. These switches are Core 1 and Core 2. The other datacenter is an exact mirror of this topology.

On Core1:

interface GigabitEthernet0/20

description MPLS

no switchport

ip address 10.255.255.1 255.255.255.252

!

interface Vlan150

description MPLS-TEST-VLAN

ip address 10.101.150.2 255.255.255.0

standby version 2

standby 150 ip 10.101.150.1

standby 150 priority 200

standby 150 preempt

!

ip sla 150

icmp-echo 10.255.255.2 source-interface gig0/20

threshold 10

timeout 1000

frequency 2

ip sla schedule 150 life forever start-time now

!

track 150 ip sla 150 reachability

!

ip route 10.101.0.0 255.255.0.0 10.255.255.2 track 150

ip route 10.101.0.0 255.255.0.0 10.219.254.10 100

! default route

ip route 0.0.0.0 0.0.0.0 10.219.254.10

!


09-09-2019 11:10 AM

Hello,

The config looks good. I guess you need the same, mirrored configuration on the Core 2 switch.


09-10-2019 08:30 AM - edited 09-10-2019 02:08 PM

I've had no trouble wrapping my head around this config for MPLS on a single core.

What I'm struggling with is how to configure this so that if Core1's MPLS goes down, Core1 sends the traffic over to Core2's MPLS. But Core2's IP SLA also needs to be aware that Core1's MPLS is down, so it doesn't route the traffic back to Core1 and create a routing loop.

One of my big questions is: if I'm using an IP SLA on Core1 to track a link on another switch, what would the source interface be for monitoring Core2's MPLS? Would it be the port channel from Core1 over to Core2?

The above example given by Georg assumes that both MPLS links are on the same switch.
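One possible way to handle the two-switch case is sketched below for Core2. It assumes MPLS1 on Core1 stays the preferred path, as described earlier in the thread. It is an untested sketch: 10.255.255.2 (far end of Core1's MPLS) and 10.219.254.10 come from the configs posted above, while 10.255.255.6 (far end of Core2's MPLS), 192.0.2.1 (Core1's address on the inter-core port channel), the interface name, the track numbers and the distance of 50 are hypothetical placeholders. The probe toward Core1's MPLS deliberately has no source-interface, so IOS sources it from whichever interface it leaves through, here the link toward Core1.

```
! Core2 sketch. Hypothetical values, not from the thread:
!   10.255.255.6 = far end of Core2's own MPLS link
!   192.0.2.1    = Core1's address on the inter-core port channel
!
! Host route so the probe for Core1's MPLS far end goes via Core1
ip route 10.255.255.2 255.255.255.255 192.0.2.1
!
ip sla 150
 icmp-echo 10.255.255.2
 threshold 10
 timeout 1000
 frequency 2
ip sla schedule 150 life forever start-time now
!
ip sla 151
 icmp-echo 10.255.255.6 source-interface GigabitEthernet0/20
 threshold 10
 timeout 1000
 frequency 2
ip sla schedule 151 life forever start-time now
!
track 150 ip sla 150 reachability
track 151 ip sla 151 reachability
!
! Preferred: hand traffic to Core1 while Core1's MPLS answers
ip route 10.101.0.0 255.255.0.0 192.0.2.1 track 150
! Next: Core2's own MPLS link
ip route 10.101.0.0 255.255.0.0 10.255.255.6 50 track 151
! Last resort: internet path via the firewall
ip route 10.101.0.0 255.255.0.0 10.219.254.10 100
```

Because each core probes both far ends, both withdraw the MPLS1 route at roughly the same time, so Core2 stops forwarding to Core1 once its own track 150 goes down. A short transient loop is still possible while the two probes disagree, which is one reason a dynamic routing protocol was suggested earlier in the thread.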


09-16-2019 06:36 AM

You also need to decrement the HSRP priority of the primary device when the track fails. This will allow the secondary to take over.
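A minimal sketch of that HSRP tracking, reusing Vlan150, group 150 and track 150 from the Core1 config posted on 09-09-2019. The decrement of 110 is an assumption: it drops Core1 from priority 200 to 90, which only helps if Core2 runs a higher priority (for example the default of 100, which the thread does not confirm) and has preempt enabled.

```
! Core1: lower the HSRP priority while track 150 is down
interface Vlan150
 standby 150 track 150 decrement 110
!
! Core2: must be allowed to preempt (priority assumed to be the default 100)
interface Vlan150
 standby version 2
 standby 150 preempt
```

Since Core1 already has preempt configured, it should reclaim the active role once track 150 comes back up and its priority returns to 200.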
