
09-30-2015 09:07 PM

Hi,

Dual-sided vPC:

ESX server NIC 1 and NIC 2 are connected to switch A and switch B respectively, where vPC is enabled.

After the cable to switch B was disconnected and reconnected, ESX shows the NIC as 'none'.

Is it because of orphan ports?

Please help.




10-01-2015 12:54 AM

Hi,

I'm not sure I understand your setup or question.

"Dual sided vpc"

This is a term normally used to refer to two vPC domains connected via vPC, e.g., an aggregation layer configured as one vPC domain connected via a vPC to an access layer configured as a second vPC domain.

Here you're referring to an ESX server, so I presume you have a single vPC domain made up of switches A and B, connected to the ESX server.

"after cable disconnected from switch b and reconnected , esx shows nic 'none '."

What does the Nexus switch show for the interface? Can you post the output of the `show interface <module>/<port>` and `show port-channel <port_channel>` commands? Can you also post the `show run int <module>/<port>` and `show run int port-channel <port_channel>` output?

I presume you have the ESX server configured with a distributed switch and LACP enabled? The standard switch does not support LACP.

Regards


10-01-2015 12:54 AM

Hi,

Now I am confused: what is the difference between dual-sided and double-sided?

Can you clarify, please? It would be a great help.

Thanks


10-02-2015 12:35 AM

Hi,

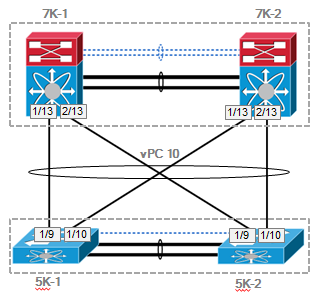

Apologies for causing any confusion. Dual or double sided vPC is typically where there are two vPC domains joined via a vPC. This might be used for example where you have Nexus 7000 as aggregation layer routers and Nexus 5000 as access layer switches. This is shown in the diagram below.

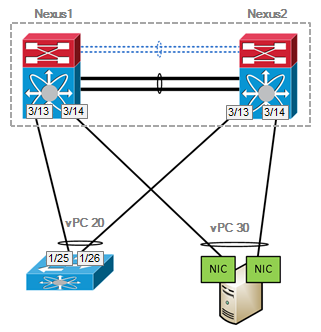

I think what you’re referring to is simply a vPC from one pair of switches to a single server connected via dual NIC as shown below.

A useful reference for vPC is the Quick Start Guide :: Virtual Port Channel (vPC) which has an example configuration for connecting devices as shown in the second image on slide 20. Does your configuration match that?

Are you able to post the output of the commands I mentioned in my previous response? Also, can you confirm whether the ESX server is using an LACP-capable virtual switch such as the VMware Distributed Virtual Switch or the Nexus 1000V?

Regards


10-07-2015 07:47 AM

Hi,

You asked:

" What does the Nexus switch show for the interface? Can you post the output of the show interface <module>/<port> and show port-channel <port_channel> commands? Can you also post the show run int <module>/<port> and show run int port-channel <port_channel> "

I don't know whether it would be helpful now; I have removed the vPC and port-channel from the switch.

I am attaching the topology (I don't know whether it falls under double-sided).

And here is the `show interface` output:

sw1:

```
sh int eth1/20
Ethernet1/20 is up
admin state is up, Dedicated Interface
  Hardware: 100/1000/10000 Ethernet, address:
  MTU 1500 bytes, BW 1000000 Kbit, DLY 10 usec
  reliability 255/255, txload 1/255, rxload 1/255
  Encapsulation ARPA, medium is broadcast
  Port mode is trunk
  full-duplex, 1000 Mb/s
  Beacon is turned off
  Auto-Negotiation is turned on
  Input flow-control is off, output flow-control is off
  Auto-mdix is turned off
  Switchport monitor is off
  EtherType is 0x8100
  EEE (efficient-ethernet) : n/a
  Last link flapped 2week(s) 1day(s)
  Last clearing of "show interface" counters never
  13 interface resets
  30 seconds input rate 72 bits/sec, 0 packets/sec
  30 seconds output rate 51352 bits/sec, 60 packets/sec
  Load-Interval #2: 5 minute (300 seconds)
    input rate 115.73 Kbps, 8 pps; output rate 50.47 Kbps, 45 pps
  RX
    11041327 unicast packets 43 multicast packets 1639 broadcast packets
    11043009 input packets 14784209289 bytes
    9147346 jumbo packets 0 storm suppression packets
    0 runts 0 giants 0 CRC 0 no buffer
    0 input error 0 short frame 0 overrun 0 underrun 0 ignored
    0 watchdog 0 bad etype drop 0 bad proto drop 0 if down drop
    0 input with dribble 0 input discard
    0 Rx pause
  TX
    3402175 unicast packets 47168194 multicast packets 32026733 broadcast packets
    82597102 output packets 7636866112 bytes
    5448 jumbo packets
    0 output error 0 collision 0 deferred 0 late collision
    0 lost carrier 0 no carrier 0 babble 0 output discard
    0 Tx pause
```

```
sh int ethernet 1/20
Ethernet1/20 is up
admin state is up, Dedicated Interface
  Hardware: 100/1000/10000 Ethernet,
  MTU 1500 bytes, BW 1000000 Kbit, DLY 10 usec
  reliability 255/255, txload 1/255, rxload 1/255
  Encapsulation ARPA, medium is broadcast
  Port mode is trunk
  full-duplex, 1000 Mb/s
  Beacon is turned off
  Auto-Negotiation is turned on
  Input flow-control is off, output flow-control is off
  Auto-mdix is turned off
  Switchport monitor is off
  EtherType is 0x8100
  EEE (efficient-ethernet) : n/a
  Last link flapped 2 week(s) 1day(s)
  Last clearing of "show interface" counters never
  4 interface resets
  30 seconds input rate 0 bits/sec, 0 packets/sec
  30 seconds output rate 40944 bits/sec, 51 packets/sec
  Load-Interval #2: 5 minute (300 seconds)
    input rate 0 bps, 0 pps; output rate 45.98 Kbps, 45 pps
  RX
    0 unicast packets 0 multicast packets 0 broadcast packets
    0 input packets 0 bytes
    0 jumbo packets 0 storm suppression packets
    0 runts 0 giants 0 CRC 0 no buffer
    0 input error 0 short frame 0 overrun 0 underrun 0 ignored
    0 watchdog 0 bad etype drop 0 bad proto drop 0 if down drop
    0 input with dribble 0 input discard
    0 Rx pause
  TX
    32108 unicast packets 33942008 multicast packets 25025565 broadcast packets
    58999681 output packets 5035049358 bytes
    3121 jumbo packets
    0 output error 0 collision 0 deferred 0 late collision
    0 lost carrier 0 no carrier 0 babble 0 output discard
    0 Tx pause
```

"I presume you have the ESX server configured with a distributed switch and LACP enabled? The standard switch does not support LACP."

Actually, I am using a standard switch, not a distributed switch.

Thanks


10-07-2015 09:53 AM

Hi,

The configuration you’re trying to use with `channel-group 250 mode active` is not compatible with the VMware Standard Switch (VSS). The keyword `active` means the Nexus switches will run IEEE 802.3ad (IEEE 802.1AX) Link Aggregation with the Link Aggregation Control Protocol (LACP). LACP is not supported on the VSS; you would need the VMware Distributed Switch or the Nexus 1000V to use this configuration.

You have two options here.

If you do want to use vPC and Link Aggregation, then you will need to use static mode, with the `channel-group 250 mode on` command under the Ethernet interface. On the VMware server you would then need to edit the vSwitch properties and, on the "NIC Teaming" tab, select “Route based on IP Hash” from the "Load Balancing" drop-down.
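As a rough sketch of this first option, the NX-OS side might look like the fragment below, applied on both vPC peers. It assumes the vPC domain, peer-keepalive and peer-link are already configured, reuses port-channel 250 and Ethernet1/20 from this thread, and uses `vpc 250` as an assumed vPC number; adjust these to your own interfaces. Lines starting with `!` are comments.

```
! Apply on BOTH switch A and switch B (the vPC peers)
! Assumes the vPC domain, peer-keepalive and peer-link already exist
interface port-channel250
  switchport mode trunk
  ! vPC number 250 is an assumption; it must match on both peers
  vpc 250
  spanning-tree port type edge trunk

interface Ethernet1/20
  switchport mode trunk
  ! "mode on" = static EtherChannel, no LACP negotiation,
  ! which pairs with "Route based on IP Hash" on the standard vSwitch.
  ! "mode active" would run LACP, which the standard vSwitch cannot do.
  channel-group 250 mode on
```

Because nothing is negotiated in static mode, both ends must agree on their own: the switch bundles the links unconditionally, so the ESX side must be set to IP hash before (or at the same time as) the channel is brought up.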

The other option is to simply configure both switch ports as trunk ports, as you’ve now done, and then on the VMware server select “Route based on the originating virtual port ID” from the "Load Balancing" drop-down. You also need to ensure that, in the "Failover Order" section of the vSwitch properties, both adapters are configured as “active”.
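For this second option, each switch simply has an independent trunk toward one NIC, with no port-channel and no `vpc` command. A minimal sketch, assuming Ethernet1/20 on both switches as in the output posted earlier and leaving the allowed VLAN list at its default:

```
! Apply on switch A and on switch B; no channel-group, no vpc
interface Ethernet1/20
  switchport mode trunk
  ! Host-facing trunk: skip the spanning-tree listening/learning delay
  spanning-tree port type edge trunk
```

Here ESXi handles load sharing and failover itself by pinning each virtual port to one uplink. If these trunks carry VLANs that also cross the vPC peer-link, NX-OS treats them as orphan ports (non-vPC ports on a vPC peer).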

The other point to be aware of is that on the Nexus switch, for either of the above options, you should add the `spanning-tree port type edge trunk` command under the port-channel interface, or under the physical interface if you decide not to use vPC.

This command ensures that the port starts forwarding traffic as soon as the link becomes operational. If this command is omitted, the port will not forward data for a period of ~30 seconds after link establishment.
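To check the result after reconnecting a cable, a few standard NX-OS show commands can help. The interface names below are the ones used in this thread and are assumptions for your setup; use the port-channel commands only if you went with the vPC option.

```
! vPC peer status and per-vPC consistency (vPC option)
show vpc brief
! Non-vPC ports carrying vPC VLANs (individual-trunk option)
show vpc orphan-ports
! Member state; a healthy member is flagged (P), protocol NONE for "mode on"
show port-channel summary
! STP state; the Type column should include "Edge" once the edge command is applied
show spanning-tree interface port-channel 250
show spanning-tree interface ethernet 1/20
```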

Regards


10-08-2015 10:03 AM

Thanks for the info.

Comparing the topology you provided (double-sided) with the topology I posted, are there any drawbacks or benefits to either?

Thanks