BNG deployment scale guidelines on ASR9000

06-10-2015 03:03 AM - edited 03-28-2018 05:15 AM


Update (March 2018)

This document was written when XR release 5.3.4 was about to be released. Since then, the software recommendation for BNG deployments has changed. Please refer to https://supportforums.cisco.com/t5/service-providers-documents/ios-xr-release-strategy-and-deployment-recommendation/ta-p/3165422.

The supported BNG scale specified in this document applies to all 32-bit (aka Classic) IOS XR releases, regardless of the line card generation (Typhoon, Tomahawk) or RSP generation (RSP440-SE, RSP880-SE), because the limit is imposed by the maximum memory that a process can address. Higher BNG scale requires 64-bit (aka Enhanced) IOS XR, which also implies Tomahawk line cards and RSP880-SE.

Introduction

The purpose of this document is to share information about the BNG scale tested within the System Integration Testing (SIT) cycle of IOS XR releases 5.2.4 to 5.3.4 on the Cisco ASR9000 platform. This is not the only profile in which BNG was tested at Cisco.

The scalability maximums for individual features may be higher than what is specified in this profile. The BNG SIT profile provides a multi-dimensional view of how features perform and scale together. The results in this document are closely tied to the setup used for this profile and may vary depending on the deployment and the optimizations tuned for convergence.

IOS XR release 5.2.4 is the suggested release for deploying BNG on the ASR9000 platform (until the XR release 5.3.3 is available).

IOS XR release 5.3.4 was posted on October 7th 2016. XR release 5.3.4 is the Cisco suggested release for all BNG deployments. If your deployment requires features not yet supported in 5.3.4, please reach out to your account team for a recommendation. The scale numbers for 5.3.4 are identical to those for 5.2.4; the difference between these releases is in the supported features and the BNG hardening cycles that we at Cisco have completed.

Note: starting with XR release 5.3.4 you can use the RSP880 in BNG deployments on ASR9000. The scale remains the same because it is dictated by line card capabilities. The supported BNG scale will increase with the introduction of BNG support on Tomahawk-generation line cards.

Feature And Scale Under Test

BNG With MSE (RP based subscribers)

| Scale parameter | Scale |
|---|---|
| Access ports | Bundle GigE/TenGigE |
| Number of members in a bundle | 2 |
| Uplink ports | TenGigE |
| Scale - PPP session PTA | 64k (without any other BNG sessions) |
| Scale - PPP sessions L2TP | 64k (without any other BNG sessions) |
| Scale - IPv4 sessions | 64k (without any other BNG sessions) |
| Scale - PPP v6 sessions | 64k (without any other BNG sessions) |
| Scale - IPv6 sessions | 64k (without any other BNG sessions) |
| Scale - Dual-stack sessions | 32k (without any other BNG sessions) |
| Sessions per port | 8k/32k |
| Sessions per NPU | 32k |
| Sessions per line card | 64k |
| Number of classes per QoS policy | 8 |
| Total number of unique QoS policies | 50 |
| Number of sub-interfaces | 2k |
| Number of LI taps | 100 |
| Number of CPLs | 50 |
| Session QoS | Ingress: policing; Egress: hierarchical QoS (Model F with 3 queues per session) |
| Number of IPv4 ACLs | 50 |
| Number of ACEs per IPv4 ACL | 100 |
| Number of IPv6 ACLs | 50 |
| Number of ACEs per IPv6 ACL | 100 |
| Number of IGP routes | 10k |
| Number of VRFs for subscribers | 100 |
| Number of BGP routes | 30k |
| Number of VPLS | 3000 |
| Number of VPWS | 2000 |
| Number of MAC addresses | 2000 |
| Number of sessions with multicast receivers | 8000 |
| AAA accounting | Start/Stop, with Interim every 10 min |
| Service accounting | 64k + 2 services |
| BNG over Satellite | 32k |
| CPS rate | 80 calls per second |
| CoA | 40 requests per second |
| DHCP (proxy or server) | 60 requests per second |
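As a rough illustration of how the per-NPU, per-line-card and system figures in this table interact, the sketch below takes the tightest of the three limits for a given layout. It is a simplification under stated assumptions: sessions are spread evenly across NPUs, no bundle spans NPUs, and values stay in "k" units as in the table (so it does not guess whether 32k means 32000 or 32768). The function name is hypothetical.

```python
# Limits from the "BNG With MSE (RP based subscribers)" table, in thousands ("k").
PER_NPU_K = 32   # sessions per NPU
PER_LC_K = 64    # sessions per line card
SYSTEM_K = 64    # tested PPP PTA / IPv4 / IPv6 session scale for this profile


def max_sessions_k(npus_per_lc: int, line_cards: int) -> int:
    """Upper bound on sessions (in k) for a layout: the tightest limit wins.

    Assumes sessions are spread evenly and no bundle spans several NPUs.
    """
    npu_bound = npus_per_lc * line_cards * PER_NPU_K
    lc_bound = line_cards * PER_LC_K
    return min(npu_bound, lc_bound, SYSTEM_K)


# A MOD80-SE has two NPUs.
print(max_sessions_k(npus_per_lc=2, line_cards=1))
# A hypothetical line card with a single NPU is capped by the per-NPU limit.
print(max_sessions_k(npus_per_lc=1, line_cards=1))
```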

BNG Only (RP based subscribers)

| Scale parameter | Scale |
|---|---|
| Access ports | Bundle GigE/TenGigE |
| Number of members in a bundle | 2 |
| Scale - PPP session PTA | 128k (without any other BNG sessions) |
| Scale - PPP sessions L2TP | 64k (without any other BNG sessions) |
| Scale - IPv4 sessions | 128k (without any other BNG sessions) |
| Scale - PPP v6 sessions | 64k (without any other BNG sessions) |
| Scale - IPv6 sessions | 64k (without any other BNG sessions) |
| DS PPPoE | 64k (without any other BNG sessions) |
| Static sessions | 8k (without any other BNG sessions) |
| Routed subscribers | 32k (without any other BNG sessions) |
| Service accounting | 64k with 2 services, 24 with 3 services |
| Routed packet-triggered subscribers IPv4/IPv6 | 64k |

CPU Utilisation (RP based subscribers; 128k IPoEv4)

| HW type | A9K-RSP-440-SE | MOD80-SE |
|---|---|---|
| Role | RSP | Subscriber-facing line card |
| Major features | Total of 128k RP-based IPoEv4 or PPPoE subscribers | Data plane |
| CPU steady state | 14% | 10-14% |
| CPU peak | 60-70% | 50-60% |
| Top processes | lpts_pa, iedged, ppp_ma/dhcpd, ifmgr | prm_server_ty, qos_ma_ea, prm_ssmh, netio |

CPU Utilisation (RP based subscribers; 64k Dual-Stack)

| HW type | A9K-RSP-440-SE | MOD80-SE |
|---|---|---|
| Role | RSP | Subscriber-facing line card |
| Major features | Total of 64k RP-based IPoE or PPPoE subscribers | Data plane |
| CPU steady state | 14% | 10-14% |
| CPU peak | 40-50% | 30-40% |
| Top processes | lpts_pa, iedged, ppp_ma/dhcpd, ifmgr/ipv6_nd | prm_server_ty, qos_ma_ea, prm_ssmh/ipv6_nd, netio |

BNG Only (LC based subscribers)

| Scale parameter | Scale |
|---|---|
| Access ports | GigE/TenGigE |
| Uplink ports | GigE/TenGigE |
| Scale - PPP session PTA | 256k (without any other BNG sessions) |
| Scale - IPv4 sessions | 256k (without any other BNG sessions) |
| Scale - PPP v6 sessions | 128k (without any other BNG sessions) |
| Scale - IPv6 sessions | 128k (without any other BNG sessions) |
| DS PPPoE | 128k (without any other BNG sessions) |
| Static sessions | 8k (without any other BNG sessions) |

CPU Utilisation (LC based subscribers; 256k IPv4 Sessions)

| HW type | A9K-RSP-440-SE | MOD80-SE |
|---|---|---|
| Role | RSP | Subscriber-facing line card |
| Major features | LC-based IPoE or PPPoE subscribers (256k) | LC-based subscribers, control and data plane (64k per LC) |
| CPU steady state | 10% | 10-14% |
| CPU peak | 10% | 80-90% |
| Top processes | dhcpv6/dhcp, iedged/ipv4_rib, ppp_ma/dhcpd | prm_server_ty, dhcpv6/dhcp, iedged, ppp_ma, qos_ma_ea |

PWHE With BNG Only (RP based subscribers)

| Scale parameter | Scale |
|---|---|
| Generic interface list members | Bundle, GigE/TenGigE |
| Number of members in a bundle | 2 |
| Scale - PPP session PTA | 128k (without any other BNG sessions) |
| Scale - IPv4 sessions | 128k (without any other BNG sessions) |
| PW scale | 1k |


Hi Xander,

Recently I have been pushing the ASR9k wherever possible, as it is so nice to work with.

Currently I am deploying BNGs on an ASR9010 and an ASR9006, where I am using a single MOD80-SE with two 4x10G MPA cards. I need your suggestion on how to deploy, as the MOD80 has 2 NPs and the subscriber limit is 32K per NP. I have some QinQ VLANs where one inner VLAN has more than 10K subscribers, but I see docs saying one VLAN supports only 8K max. I will also be using bundle interfaces, so how should the members be bound? I am planning 2x10G for LAN and 2x10G for WAN from each MPA. Please tell me if I am on the right track. Also, if I bind the 4x10G of one MPA for subscribers and the other 4x10G for WAN, will it create issues for max subscriber support? As per your doc, each NP can only go to 32K.

I hope you can suggest the best way to support the maximum number of sessions with bundling.

Regards,

Raaz


Hi Raaz,

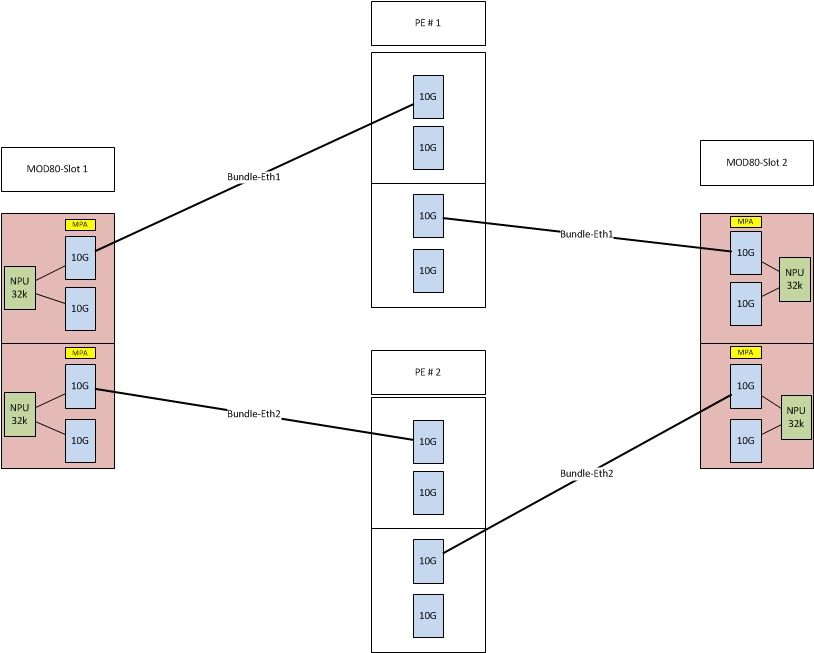

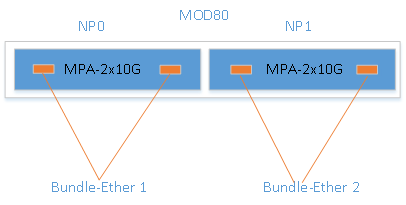

If you don't want to burn NPUs, you should not add ports from multiple NPUs into one BE (Bundle-Ether). You should connect the links like in my fancy illustration.

If you connect the links like that, you will get 64K sessions. If you connect two NPUs into one BE, you will get "only" 32K sessions.

You can use the same BE for WAN links; just use subinterfaces.

Xander or Aleksandar will correct me if I am wrong about that.
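A minimal sketch of the arithmetic behind this advice, assuming (as the thread describes) that every session on a bundle is programmed on each NPU hosting a member of that bundle, and that the limit is 32k sessions per NPU. The bundle and NPU names are hypothetical, and queue consumption is deliberately left out.

```python
from collections import defaultdict

PER_NPU_K = 32  # sessions per NPU, in thousands ("k"), from the scale table


def npu_load_k(bundles: dict[str, tuple[list[str], int]]) -> dict[str, int]:
    """Return the sessions (in k) each NPU must hold for the given bundles.

    Assumption: each session on a bundle is programmed on every NPU that hosts
    a member port, counted once per NPU for the session limit (queue usage
    per member port is a separate matter and is not modelled here).
    """
    load: dict[str, int] = defaultdict(int)
    for member_npus, sessions_k in bundles.values():
        for npu in set(member_npus):
            load[npu] += sessions_k
    return dict(load)


def fits(bundles: dict[str, tuple[list[str], int]]) -> bool:
    """True if no NPU exceeds the per-NPU session limit."""
    return all(v <= PER_NPU_K for v in npu_load_k(bundles).values())


# One bundle per NPU: 32k + 32k = 64k sessions in total.
per_npu = {"BE1": (["np0", "np0"], 32), "BE2": (["np1", "np1"], 32)}
# One bundle spanning both NPUs: each NPU would have to hold all 64k sessions.
spanning = {"BE1": (["np0", "np1"], 64)}
print(fits(per_npu), fits(spanning))
```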


Be very cautious, though: adding ports from the same NP to a bundle multiplies the number of queues consumed by the number of bundle member ports on that NP. Since BNG scaling is multidimensional, session count, queue count, policer count, etc. all have to be taken into account.

In the above scenario, using HQoS, each Bundle-Ether will use up its share of 32K parent queues with only 16K subscribers.
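To make the queue arithmetic concrete, the sketch below divides the 32K parent queues quoted above by the number of bundle members that share one NP. It assumes one parent queue per subscriber per member port, which is how the replication described above is modelled here; the function name is illustrative only.

```python
PARENT_QUEUES_PER_NP_K = 32  # parent queues per NP, in thousands, as quoted above


def max_hqos_subscribers_k(members_on_same_np: int) -> float:
    """HQoS is replicated per bundle member, so member ports sharing one NP
    divide that NP's parent-queue budget between them (assumed model)."""
    if members_on_same_np < 1:
        raise ValueError("the bundle needs at least one member on the NP")
    return PARENT_QUEUES_PER_NP_K / members_on_same_np


print(max_hqos_subscribers_k(1))  # one member per NP
print(max_hqos_subscribers_k(2))  # two members on the same NP
```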


Hi Smail, thank you very much for your suggestion. I came to the same conclusion, and I found the same suggestion from you in another topic too. Xander/Aleksandar and you have been helping so much with understanding and deploying BNG boxes. These docs and discussions have made it possible for me to deploy/demo so many boxes. Thumbs up to you guys.


hi Raaz,

Smail and Fredrik are both raising valid points.

If you need the QoS policy on subscriber interfaces to only police the traffic, Smail's recommendation stands.

If you need queuing/shaping on subscriber interfaces, Fredrik's recommendation stands. On bundles the QoS policy is replicated per member. If both members are on the same NP you are cutting in half the available QoS resources.

/Aleksandar


OK, so the 9001-S should handle 64k subs, as it's all on the RSP via PPPoE (using a bundle), and we use GEORED for redundancy.

We're applying AV pairs via RADIUS to apply QoS policing based on a set of policy maps, for example ingress 2 Mbit/egress 10 Mbit (examples below):

policy-map 2048kb -- police rate 2048 kbps burst 384 kbytes peak-rate 3072 kbps peak-burst 768 kbytes

policy-map 40960kb -- police rate 40960 kbps burst 7680 kbytes peak-rate 61440 kbps peak-burst 15360 kbytes

So when a subscriber connects, I see these policy maps on the subscriber interface:

Bundle-Ether100.48.pppoe32 input: 2048kb

Bundle-Ether100.48.pppoe32 output: 40960kb

We're currently just using static routes, but will enable OSPF to distribute the routes from the SRG.

So my question, based on the above QoS and PPPoE setup and the fact that we're on a 9001-S (the plan being 2x10G LAN and 2x10G WAN, with SRG to the secondary unit): will we be able to get the full 20 Gbit/s plus 64k subs (or will we at least be able to handle, say, 16k subs)? I'm a bit confused about how QoS affects the total number of PPPoE subscribers we can handle per box.


hi cchance01, yup you're fine with 64k subs, can be lc based or rsp based (bundle) and using geored. however the 9001-S has only one npu enabled, so then you are limited to 32k subs (32k sub per npu is the hard limit today).

the number of subs would not affect the pps performance so you can get 40G through the box as you desire. probably not at 64byte packets, but with imix it should.

xander
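The packet-size caveat follows from the fact that a fixed bit rate means far more packets per second at small sizes. This sketch computes the packets per second needed to fill a 40 Gbit/s link at a given Ethernet frame size, counting the standard 20 bytes of preamble, SFD and inter-frame gap per frame. The 350-byte size is only a rough stand-in for an IMIX average (IMIX definitions vary), and the sketch says nothing about the actual forwarding capacity of the 9001-S.

```python
ETH_OVERHEAD_BYTES = 20  # 7-byte preamble + 1-byte SFD + 12-byte inter-frame gap


def line_rate_pps(link_bps: float, frame_bytes: int) -> float:
    """Packets per second needed to fill a link with frames of one size."""
    return link_bps / ((frame_bytes + ETH_OVERHEAD_BYTES) * 8)


# 64-byte minimum frames vs an assumed IMIX-like average vs full-size frames.
for size in (64, 350, 1518):
    print(size, round(line_rate_pps(40e9, size) / 1e6, 2), "Mpps")
```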


Hi

We need to achieve the contention ratios below:

| Mbps | Guaranteed Mbps (Fibre Lite) | Number of users |
|---|---|---|
| 5 | 1.1 | 4 |
| 10 | 1.7 | 6 |
| 20 | 2.6 | 8 |
| 40 | 4.0 | 10 |

On the uplink from the BNG to the core, is it possible to have subinterfaces for each of the 4 profiles above (5 Mbps, 10 Mbps, 20 Mbps and 40 Mbps) and then cap each of the uplinks like this:

5 Mbps customers: uplink capped to 50 Meg, meaning that we can have a maximum of 10 customers before they start to experience anything less than the stipulated maximum bandwidth, and a maximum of about 45 users for that profile, each getting 1.1 Mbps.

10 Mbps customers: uplink capped to 100 Meg, meaning that we can have a maximum of 10 customers before they start to experience anything less than the stipulated maximum bandwidth, and a maximum of about 60 users for that profile, each getting 1.7 Mbps.

20 Mbps customers: uplink capped to 200 Meg, meaning that we can have a maximum of 10 customers before they start to experience anything less than the stipulated maximum bandwidth, and a maximum of about 80 users for that profile, each getting 2.6 Mbps.

40 Mbps customers: uplink capped to 400 Meg, meaning that we can have a maximum of 10 customers before they start to experience anything less than the stipulated maximum bandwidth, and a maximum of about 100 users for that profile, each getting 4.0 Mbps.

And then use some form of policy-based routing to direct the different traffic profiles to their respective links.

As the number of users in each profile increases, there will be some manual intervention to slightly increase the capped uplink bandwidth.

Is this possible? Or kindly suggest an alternative approach that could work.
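The per-profile user counts in this question are simple division: the cap divided by the peak rate gives the number of users at full speed, and the cap divided by the guaranteed rate gives how many users can each still receive the guaranteed rate. A sketch, assuming each uplink is capped at 10x its profile rate as in the question; the function name is illustrative.

```python
# Profiles from the table above: peak rate -> guaranteed rate, both in Mbps.
PROFILES = {5: 1.1, 10: 1.7, 20: 2.6, 40: 4.0}


def users_per_profile(peak_mbps: int, guaranteed_mbps: float, cap_mbps: int) -> tuple[int, int]:
    """Return (users at full peak rate, users at the guaranteed rate) for one capped uplink."""
    at_peak = cap_mbps // peak_mbps
    at_guaranteed = int(cap_mbps // guaranteed_mbps)  # round down to whole users
    return at_peak, at_guaranteed


for peak, guaranteed in PROFILES.items():
    cap = 10 * peak  # uplink capped at 10x the profile rate, as in the question
    print(peak, cap, users_per_profile(peak, guaranteed, cap))
```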


Hello Alex,

It would be nice if you could provide updated scale numbers for Tomahawk LCs, specifically the Powerglide ones. What happens with 24x10G Powerglide which has only one NPU? Are we limited to 32K per LC? Do we still have the QoS restrictions that support 8K per port?

Also I'm a bit concerned with the "The XR release 5.3.4 is the Cisco suggested release for all BNG deployments". Using Powerglide you have no choice other than 6.x. Would you change your BNG recommendation or should we avoid Powerglide?? :-)

Thanks,

George


Hello, based on smailmilak's post, I want to investigate the following scenario: a single chassis with 2 x MOD80 filled with 4 x MPA-4x10G (two in each MOD80), dual-homed to two PEs, with a license for a total of 64k subs. If one MOD80 fails, will we still have 64k subs?


yes, that is possible. if you make bundles between the 2 LCs, you can carry 64k sessions, 32k per npu, and have bundle redundancy between the 2 LCs.

xander
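A sketch of why losing a line card need not reduce capacity in this layout. It assumes two bundles, each with one member on the same-numbered NPU of each MOD80, 32k sessions per bundle, and that a bundle's sessions are programmed on every NPU hosting one of its members. The layout and names are hypothetical.

```python
PER_NPU_K = 32  # sessions per NPU, in thousands ("k")

# Hypothetical layout: members are (line_card, npu) pairs; the int is the
# bundle's sessions in k.
BUNDLES = {
    "BE1": ([(0, 0), (1, 0)], 32),
    "BE2": ([(0, 1), (1, 1)], 32),
}


def survives(bundles: dict[str, tuple[list[tuple[int, int]], int]], failed_lc: int) -> bool:
    """True if, with one line card down, every bundle keeps a member and
    no surviving NPU exceeds the per-NPU session limit."""
    load: dict[tuple[int, int], int] = {}
    for members, sessions_k in bundles.values():
        alive = {m for m in members if m[0] != failed_lc}
        if not alive:
            return False  # the bundle lost all of its members
        for npu in alive:
            load[npu] = load.get(npu, 0) + sessions_k
    return all(v <= PER_NPU_K for v in load.values())


total_k = sum(sessions_k for _, sessions_k in BUNDLES.values())
print(total_k, survives(BUNDLES, failed_lc=0), survives(BUNDLES, failed_lc=1))
```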


Hi Aleksandar, Xander,

Hope you are both doing fine!

I would like to ask whether the scalability charts are affected if we enable egress queueing on a subset of the sessions.

To be more specific, we are interested in LC-based (MOD80-SE) dual-stack PPPoE sessions.

Do the max values of 64K per LC/128K per chassis get reduced if we enable egress queueing on a subset of them, and if so, how can we calculate the max supported values for this case?

Best Regards,

Dimitris


hey dimitris,

adding qos doesn't affect subscriber scale as such, as long as you stay within the number of unique pmaps per LC (10k) and don't exhaust the number of queues per NP (512k).

cheers!

xander


Hey Xander,

Quick as always :) Thanks!

So, if I am not mistaken, we don't expect any scaling issues in the following setup, even if we apply the policy to all 64K sessions, right?

- 2 x A9K-RSP440-SE

- 1 x A9K-MOD80-SE

- 64K LC DS PPPoE sessions

    policy-map PARENT-POLICY
     class class-default
      service-policy CHILD-POLICY
      shape average 20 mbps
     !
     end-policy-map
    !
    policy-map CHILD-POLICY
     class CHILD-CLASS
      bandwidth remaining percent 30
     !
     class class-default
     !
     end-policy-map
    !

Regards,

Dimitris


you can have 8 shaped classes per policy and you’re also well within that!

cheers!

xander
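A rough checker for the limits quoted in this exchange (512k queues per NP, 10k unique policy-maps per LC, 8 shaped classes per policy). Two inputs are assumptions, not figures from the thread: how the hardware counts queues for this HQoS policy (modelled here as one parent plus one per child class, i.e. 3 per session for CHILD-CLASS and class-default), and an even split of the 64K sessions across the two NPs of the MOD80-SE. The function name is hypothetical.

```python
# Limits quoted in the thread.
QUEUES_PER_NP_K = 512            # queues per NP, in thousands
UNIQUE_PMAPS_PER_LC = 10_000     # unique policy-maps per line card
SHAPED_CLASSES_PER_POLICY = 8    # shaped classes per policy


def check_hqos(sessions_per_np_k: int, queues_per_session: int,
               unique_pmaps: int, classes_in_policy: int) -> list[str]:
    """Return the limits a setup would exceed (an empty list means none)."""
    problems = []
    if sessions_per_np_k * queues_per_session > QUEUES_PER_NP_K:
        problems.append("queues per NP exceeded")
    if unique_pmaps > UNIQUE_PMAPS_PER_LC:
        problems.append("too many unique policy-maps on the LC")
    if classes_in_policy > SHAPED_CLASSES_PER_POLICY:
        problems.append("too many classes in the policy")
    return problems


# 64k sessions on one MOD80-SE -> assumed 32k per NP, 3 queues each (assumption),
# one shared policy, 2 classes in CHILD-POLICY.
print(check_hqos(sessions_per_np_k=32, queues_per_session=3,
                 unique_pmaps=1, classes_in_policy=2))
```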
