Hyper-converged storage is a software-defined approach that is either embedded in a hypervisor or based on a controller virtual machine (VM) appliance. The benefit of this approach to storage management is that it combines compute, storage, networking, and virtualization in one managed system.

In this new release, VMware Virtual SAN 6.0 introduces support for an all-flash architecture. With support for both hybrid and all-flash configurations, this solution delivers enterprise-level scale and performance. Scalability is doubled, with up to 64 nodes per cluster and support for up to 200 virtual machines per host. VMware Virtual SAN 6.0 is ready to meet the performance demands of just about any virtualized application by delivering consistent performance with sub-millisecond latencies in both hybrid and all-flash architectures.

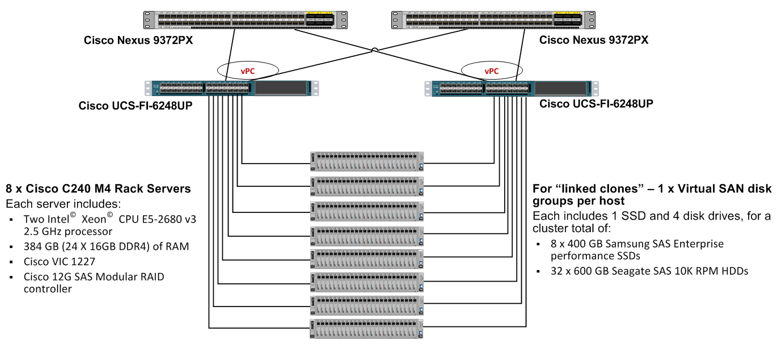

This technology appeals to data center managers and administrators because it combines strong performance with efficient manageability. A key factor in this solution is a distributed storage architecture built on the direct-attached storage (DAS) in each physical Cisco Unified Computing System (Cisco UCS) Rack Server. This gives data center staff greater control over provisioning storage in a virtualized environment, through a single pane of management in Cisco UCS Manager.

Data center managers also have the option to use automated, workflow-based provisioning with Cisco UCS Director. Cisco UCS Director improves consistency and efficiency while reducing delivery time from weeks to minutes. It accomplishes this by replacing time-consuming manual provisioning and de-provisioning of data center resources with automated workflows. As workload requirements increase, data center staff can scale the deployment out horizontally by adding more capacity to existing hyper-converged storage nodes, by adding more nodes, or by doing both.

Cisco UCS C240 M4 Rack Server with VMware Virtual SAN 6.0 Architecture

Here are some key points of note for this reference architecture:

- For this deployment, Cisco UCS firmware version 2.2 and later supports the direct connection of a Cisco UCS C-Series Rack Server to a Cisco UCS Fabric Interconnect. This allows Cisco UCS B-Series Blade Servers and Cisco UCS C-Series Rack Servers to be managed under the same Cisco UCS domain and within a single pane of management.

- Cisco UCS Director can help orchestrate and deploy VMware Virtual SAN with automated workflows customized for VMware Virtual SAN deployment on Cisco UCS hardware.

- This solution is scalable by adding more disks to existing Cisco UCS C240 M4 servers or by adding more servers. Here is a complete list of Cisco UCS Rack Servers certified for VMware Virtual SAN.

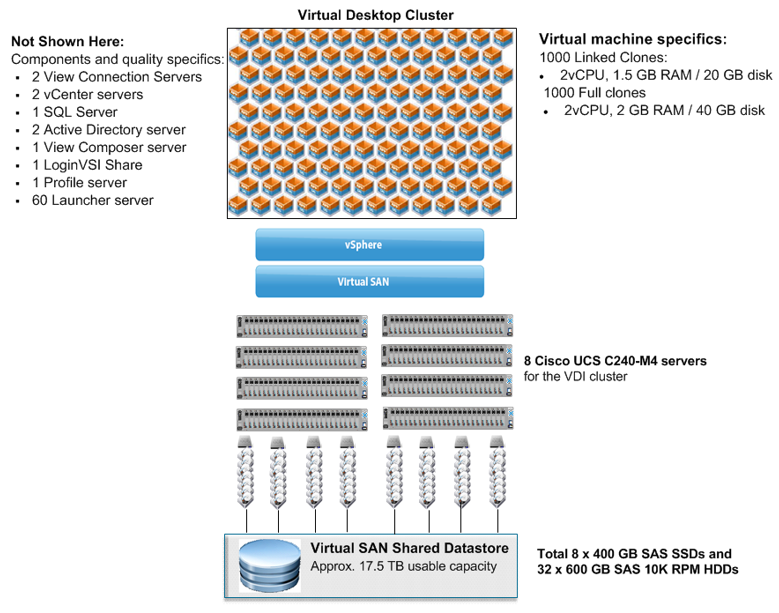

The reference architecture shown above was built using the Cisco UCS VMware VSAN Ready Node. To measure the solution’s performance, Cisco tested VMware Horizon 6 with View linked-clone virtual desktops using Login VSI 4.1.3.

VMware vRealize Operations for Horizon monitors and manages the health, capacity, and performance of the View environment. VMware Virtual SAN Observer captures performance statistics and provides access to live measurements of storage resource utilization.

Cisco UCS with VMware Virtual SAN running VMware Horizon 6 with View Deployed Desktops

Cisco UCS and VMware Virtual SAN Network Configuration Best Practices

Here are a few recommended best practices:

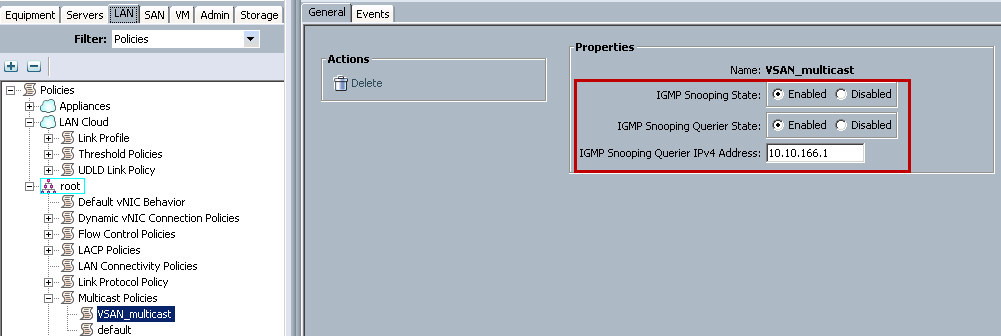

- The VMware Virtual SAN environment requires that multicast be enabled for Virtual SAN (VSAN) traffic. To achieve this, a multicast policy must be created as shown in the screenshot below:

- As a best practice, define the default gateway IP address of the VMware Virtual SAN traffic subnet as the IGMP Snooping Querier address.

- The recommended configuration steps for Nexus 1000V with VMware Virtual SAN can be found here.

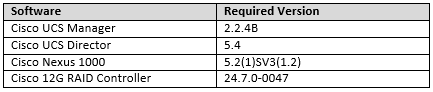

- The minimum software version requirement for Cisco UCS C220/C240 M4 and VSAN 6.0 is as follows:

Test Results and Key Takeaways:

For the reference architecture, Cisco performed five different tests with various scalability capabilities for up to 1000 VMware Horizon 6 with View deployed linked-clone desktops. The tests were based on real-world scenarios, user workloads, and infrastructure system configurations. Highlights of the test results include:

- This solution achieved linear scalability from 500 desktops on four nodes to 1000 desktops on eight nodes, with VMware Virtual SAN datastore latency under 15 ms according to VSAN Observer performance data.

- This solution maintained responsive end-user performance for practical workloads. In the study, average latency with standard office applications stayed below 15 ms across various failure scenarios, including SSD failure, HDD failure, and node failure.

- For optimal performance and to minimize the failure domain, disk group design and sizing are key factors.

- The solution also provides proven resiliency and availability, with high application uptimes.

- IT efficiency is improved with faster desktop operations throughout this deployment.
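The linear-scalability highlight above can be checked with simple arithmetic: if scaling is linear, the number of desktops per node should stay roughly constant as nodes are added. The short Python sketch below uses only the two data points quoted in this post. The function names and the 5% tolerance are illustrative assumptions and are not part of the Cisco test methodology.

```python
# Data points quoted in the test highlights (nodes and linked-clone desktops).
results = [
    {"nodes": 4, "desktops": 500},
    {"nodes": 8, "desktops": 1000},
]


def desktops_per_node(run):
    """Return the desktop density (desktops per node) for one test run."""
    return run["desktops"] / run["nodes"]


def is_linear(runs, tolerance=0.05):
    """Treat scaling as linear when every run's per-node density stays
    within `tolerance` (an assumed 5%) of the first run's density."""
    baseline = desktops_per_node(runs[0])
    return all(
        abs(desktops_per_node(run) - baseline) / baseline <= tolerance
        for run in runs
    )


densities = [desktops_per_node(run) for run in results]
print(densities)           # [125.0, 125.0]
print(is_linear(results))  # True
```

Both runs work out to 125 desktops per node, which is what linear scaling means in practice here: doubling the node count doubled the desktop count without reducing per-node density.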

To learn more about the complete system configuration, full test results, and recommended best practices, download this white paper: Cisco Unified Computing System with VMware Horizon 6 with View and Virtual SAN.

---

We would love to hear your thoughts on this article. Feel free to post your comments below and to share the article within your social networks.