
09-02-2014 12:13 AM

Hi all

I've got two ISR G1 (1811 and 1812) that have a GRE tunnel between them.

Without any crypto protection I was getting around 3.6 MB/s in regular Windows SMB transfers. After applying crypto protection to the tunnel I'm only getting 2.7 MB/s in the same transfers.

Any idea as to why this is?

My findings:

According to this document, http://www.cisco.com/web/partners/downloads/765/tools/quickreference/vpn_performance_eng.pdf, the AES crypto performance of the fixed 1800s is 40 Mbps.
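To compare the measured transfer rates with the 40 Mbps figure, here is a rough conversion sketch. It assumes that the "MB/s" shown by Windows means 10^6 bytes per second and that the SMB payload rate is a fair stand-in for the rate on the wire; both are assumptions, and the function name is only illustrative.

```python
# Rough unit conversion for the transfer rates quoted in the post.
# Assumption: 1 MB/s = 10^6 bytes/s = 8 Mbit/s (headers are ignored).

def mbytes_to_mbits(mb_per_s: float) -> float:
    """Convert megabytes per second to megabits per second."""
    return mb_per_s * 8

DATASHEET_AES_MBPS = 40.0   # figure quoted from the performance sheet

gre_only_mbps = mbytes_to_mbits(3.6)     # GRE without crypto
gre_ipsec_mbps = mbytes_to_mbits(2.7)    # GRE with tunnel protection

# Relative throughput loss after enabling crypto, in percent.
drop_percent = (3.6 - 2.7) / 3.6 * 100

# Share of the quoted AES figure that the encrypted transfer reaches.
share_of_datasheet = gre_ipsec_mbps / DATASHEET_AES_MBPS * 100

print(f"GRE only:  {gre_only_mbps:.1f} Mbps")
print(f"GRE+IPsec: {gre_ipsec_mbps:.1f} Mbps")
print(f"Drop:      {drop_percent:.0f} %")
print(f"Share of data-sheet figure: {share_of_datasheet:.0f} %")
```

Under these assumptions the encrypted transfer runs at roughly half of the quoted figure, and the drop from the unencrypted case is about a quarter.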

The increased overhead of the crypto protection shouldn't be the problem as I've tried testing the transfers on the tunnel without protection and "ip tcp adjust-mss 800" on the tunnel. There was only a little performance drop here, not as much as with crypto.

I've tried several crypto transform sets; they all give the same performance as long as they are done in hardware.

Is ISAKMP always done in software? I cannot seem to get it to show that it's being done in hardware, no matter the ISAKMP policy.

IP MTU on both tunnel interfaces is 1434 with crypto protection.
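The 1434-byte IP MTU can be cross-checked with a small overhead calculation. This is only a sketch under stated assumptions: a 1500-byte path MTU, ESP in transport mode with AES-CBC (16-byte IV and block size) and HMAC-SHA1-96 (12-byte ICV), a plain 4-byte GRE header without key or checksum, an IPv4 header without options, and no NAT-T encapsulation. The constant and function names are made up for illustration.

```python
# Per-packet size of GRE protected by ESP in transport mode (assumed layout):
# outer IP | ESP header | IV | [GRE | inner packet | padding | trailer] | ICV

OUTER_IP = 20       # IPv4 header, no options
ESP_HEADER = 8      # SPI (4 bytes) + sequence number (4 bytes)
AES_IV = 16         # AES-CBC initialisation vector
AES_BLOCK = 16      # encrypted part is padded to a multiple of this
ESP_TRAILER = 2     # pad-length byte + next-header byte
ICV_SHA1_96 = 12    # truncated HMAC-SHA1 integrity check value
GRE_HEADER = 4      # basic GRE header, no key/checksum/sequence
PATH_MTU = 1500

def encrypted_size(inner_len: int) -> int:
    """Size on the wire of one inner IP packet of inner_len bytes."""
    plaintext = GRE_HEADER + inner_len + ESP_TRAILER
    # Round up to the next multiple of the cipher block size.
    padded = -(-plaintext // AES_BLOCK) * AES_BLOCK
    return OUTER_IP + ESP_HEADER + AES_IV + padded + ICV_SHA1_96

def max_inner_mtu(path_mtu: int = PATH_MTU) -> int:
    """Largest inner packet that still fits into path_mtu after encryption."""
    return max(n for n in range(1, path_mtu + 1)
               if encrypted_size(n) <= path_mtu)

print(max_inner_mtu())        # largest inner packet that fits
print(encrypted_size(1434))   # wire size of a full-size tunnel packet
print(encrypted_size(1435))   # one byte more spills into another AES block
```

With these assumptions the result agrees with the 1434 bytes reported on the tunnel interfaces: a 1434-byte inner packet fills the last AES block exactly, while one more byte adds a whole 16-byte block and exceeds the path MTU.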

My config:

encr aes 256

hash sha512

authentication pre-share

group 20

crypto isakmp key *** address ***

!

crypto ipsec transform-set ESP-AES256-SHA esp-aes 256 esp-sha-hmac

mode transport

!

crypto ipsec profile VPN

set transform-set ESP-AES256-SHA

!

interface Tunnel10

ip address 10.251.251.1 255.255.255.0

no ip redirects

no ip proxy-arp

load-interval 30

tunnel source FastEthernet0

tunnel destination ***

tunnel path-mtu-discovery

tunnel protection ipsec profile VPN

!

Some output:

ISR1811#sh crypto ipsec sa

interface: Tunnel10

Crypto map tag: Tunnel10-head-0, local addr **

protected vrf: (none)

local ident (addr/mask/prot/port): (**/255.255.255.255/47/0)

remote ident (addr/mask/prot/port): (**/255.255.255.255/47/0)

current_peer ** port 500

PERMIT, flags={origin_is_acl,}

#pkts encaps: 683060, #pkts encrypt: 683060, #pkts digest: 683060

#pkts decaps: 1227247, #pkts decrypt: 1227247, #pkts verify: 1227247

#pkts compressed: 0, #pkts decompressed: 0

#pkts not compressed: 0, #pkts compr. failed: 0

#pkts not decompressed: 0, #pkts decompress failed: 0

#send errors 0, #recv errors 0

local crypto endpt.: ***, remote crypto endpt.: ***

path mtu 1500, ip mtu 1500, ip mtu idb FastEthernet0

current outbound spi: 0x8D9A911E(2375717150)

PFS (Y/N): N, DH group: none

inbound esp sas:

spi: 0xD6F42959(3606325593)

transform: esp-256-aes esp-sha-hmac ,

in use settings ={Transport, }

conn id: 45, flow_id: Onboard VPN:45, sibling_flags 80000006, crypto map: Tunnel10-head-0

sa timing: remaining key lifetime (k/sec): (4563208/1061)

IV size: 16 bytes

replay detection support: Y

Status: ACTIVE

inbound ah sas:

inbound pcp sas:

outbound esp sas:

spi: 0x8D9A911E(2375717150)

transform: esp-256-aes esp-sha-hmac ,

in use settings ={Transport, }

conn id: 46, flow_id: Onboard VPN:46, sibling_flags 80000006, crypto map: Tunnel10-head-0

sa timing: remaining key lifetime (k/sec): (4563239/1061)

IV size: 16 bytes

replay detection support: Y

Status: ACTIVE

outbound ah sas:

outbound pcp sas:

ISR1811#show crypto isakmp sa detail

Codes: C - IKE configuration mode, D - Dead Peer Detection

K - Keepalives, N - NAT-traversal

T - cTCP encapsulation, X - IKE Extended Authentication

psk - Preshared key, rsig - RSA signature

renc - RSA encryption

IPv4 Crypto ISAKMP SA

C-id Local Remote I-VRF Status Encr Hash Auth DH Lifetime Cap.

2015 ************** ********** ACTIVE aes sha5 psk 20 12:42:50

Engine-id:Conn-id = SW:15

2016 ************** ********** ACTIVE aes sha5 psk 20 12:42:58

Engine-id:Conn-id = SW:16

IPv6 Crypto ISAKMP SA

CPU usage during transfer with crypto:

ISR1811#sh proc cpu his

ISR1811 09:19:54 AM Tuesday Sep 2 2014 CET

[CPU history graph: CPU% per second over the last 60 seconds, ranging from 43% to 63%]

ISR1812#sh proc cpu history

ISR1812 09:19:24 AM Tuesday Sep 2 2014 CET

[CPU history graph: CPU% per second over the last 60 seconds, ranging from 46% to 68%]


Accepted Solutions


09-02-2014 01:23 AM

I think this performance matches what you should expect from the legacy 18xx ISR G1, although the performance degradation is perhaps a little too high.

ISAKMP being handled in software is not a problem. The amount of data it protects is too small to have any influence on throughput.

As a first test, I would remove the GRE encapsulation by configuring "tunnel mode ipsec ipv4" on the tunnel interface and check whether the results improve.



09-18-2014 12:36 AM

Hi Karsten

Thank you for the reply. I've finally gotten around to doing some more intensive testing and I believe that you are right: the limits of the ISR1800 have simply been reached.

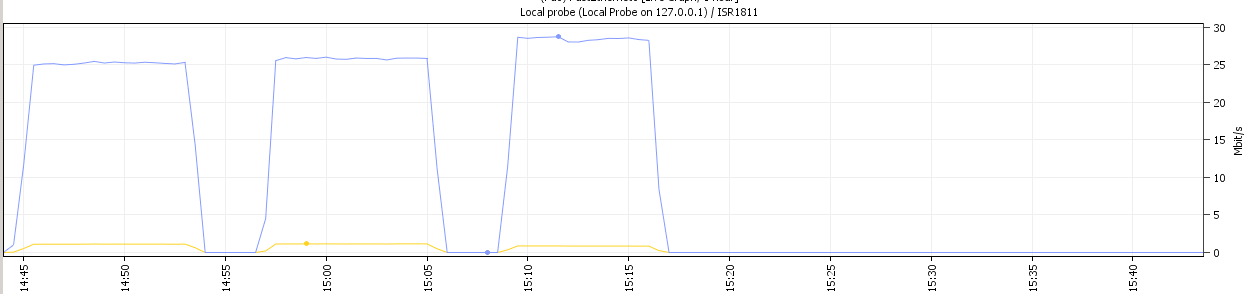

The above image displays the bandwidth usage during three different transfer tests:

Right side: GRE tunnel only

Middle: Crypto IPSEC tunnel only

Left: Crypto IPSEC+GRE tunnel

Thanks for the help!
