What is the preferred method for configuring management SVI on vPC pair

04-28-2020 05:50 AM

Hi,

I have 2 x 9k switches, connected by vPC. I have set up vPC and this is working fine, as per below:

Keepalive link is via the mgmt0 interface (192.168.200.10 & 20)

Peer-link is a port-channel using 2 x 40Gig links on each switch.

I can confirm this primary vPC environment seems to be working fine.

I have connected this vPC pair to my 6509 Core switch and the port-channel seems to be working fine.

What I now need to do is configure the vPC pair so I can remotely manage each of them on my network management subnet...what is the preferred method for configuring an SVI so I can access each switch via SSH?

My topology and config is attached.

Cheers

Neil

Labels: Nexus Series Switches

04-28-2020 09:27 AM

Hello @neilo,

The best way to manage the Nexus switches is through the mgmt0 interface connected to an OOB network. This is because the mgmt0 interface has a direct connection to the CPU, so management traffic does not go through CoPP (Control Plane Policing). This matters during network loops, broadcast storms or other inband disruptions, when a lot of traffic is punted to the Nexus CPU and CoPP starts dropping packets to protect it.

If you directly connected the mgmt interfaces between the two N9Ks, no worries: you can move them to an OOB switch without impacting vPC functionality. If only the vPC keepalive (KA) goes down, nothing changes in the vPC peers' state as long as the vPC peer-link is up.
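As a rough sketch of that OOB arrangement, the NX-OS fragment below puts mgmt0 in the management VRF and uses it for both SSH access and the vPC peer-keepalive. The addresses come from the original post; the /24 mask, the domain ID 10 and the gateway 192.168.200.1 are assumptions for illustration, not values confirmed in this thread.

```
! Switch 1 (mirror the addresses on switch 2)
! Assumed: /24 mask, vPC domain 10, OOB gateway 192.168.200.1
interface mgmt0
  vrf member management
  ip address 192.168.200.10/24

vrf context management
  ip route 0.0.0.0/0 192.168.200.1

vpc domain 10
  peer-keepalive destination 192.168.200.20 source 192.168.200.10 vrf management
```

With both mgmt0 ports plugged into an OOB switch rather than back to back, the same interface serves the keepalive and remote management.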

Let me know if you have any questions.

Best regards,

Sergiu

04-28-2020 12:55 PM

Hi Sergiu,

Many thanks for your response. At this stage I'm not able to utilise an OOB network...I guess I'm trying to understand how vPC controls the switches and I'm guessing it doesn't truly act in the same way VSS does. What is the next best preferred method, do I just configure an SVI on each Nexus and how will vPC manage that...will it not care because vPC is L2?

Regards

Neil

04-28-2020 01:53 PM

Hi @neilo,

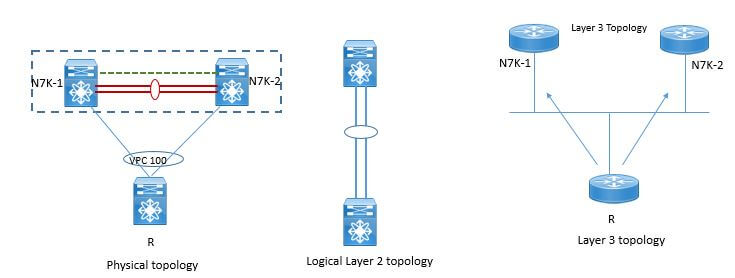

I am a bit lazy with the drawing, but I found this:

Reference: https://www.dclessons.com/vpc-and-l3-design-scenarios

So from an L3 perspective, the two vPC peers will act as individual routers. However, there is one small but important difference.

When you configure a vPC SVI with HSRP, both vPC peers will actively forward the routed traffic. With 'peer-gateway' configured under the vPC domain, a Nexus can even route traffic that is addressed to its peer's MAC address.
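A minimal sketch of such an SVI on one peer might look like the fragment below. VLAN 100, the 10.1.100.0/24 addressing, the HSRP group number and the domain ID 10 are all hypothetical; the second peer would use its own interface address (for example 10.1.100.3) with the same virtual IP.

```
feature interface-vlan
feature hsrp

vpc domain 10
  peer-gateway

! Hypothetical vPC VLAN 100 with an HSRP virtual IP of 10.1.100.1
interface Vlan100
  no shutdown
  ip address 10.1.100.2/24
  hsrp 100
    ip 10.1.100.1
```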

The second important thing you need to know is what happens during vPC failure scenarios. More precisely, when the peer-link goes down, the vPC SVIs and vPC port-channels on the vPC operational secondary will be suspended.

Which means you should not configure the inband mgmt SVI on a vPC VLAN. What is a vPC VLAN? It's a VLAN allowed over the peer-link. Thus, make sure you have dedicated ports for the inband mgmt VLAN and keep that VLAN off the peer-link; otherwise, you will not be able to manage your switches during a failure.
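One way to read that advice is sketched below: the management VLAN is pruned from the peer-link and carried on a dedicated, non-vPC uplink instead. VLAN 999, port-channel1 as the peer-link, Ethernet1/48 as the dedicated port and the 10.1.99.0/24 addressing are all assumptions made for illustration.

```
! Assumed: port-channel1 is the vPC peer-link; keep mgmt VLAN 999 off it
interface port-channel1
  switchport trunk allowed vlan remove 999

! Assumed: Ethernet1/48 is a dedicated (non-vPC) uplink for inband mgmt
interface Ethernet1/48
  switchport
  switchport mode trunk
  switchport trunk allowed vlan 999
  no shutdown

! Inband management SVI on the non-vPC VLAN
interface Vlan999
  no shutdown
  ip address 10.1.99.2/24
```

Because VLAN 999 is not a vPC VLAN in this sketch, its SVI should not be among the interfaces suspended on the operational secondary when the peer-link fails.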

Cheers,

Sergiu

05-06-2020 03:28 AM

Thank you Sergiu, I appreciate your help...I've managed to get things working. I've applied HSRP to my production management VLAN SVI on each switch and it's working as needed.

Thanks again

Neil

04-29-2020 11:30 AM - edited 04-29-2020 11:31 AM

Hello

It looks like you are using the mgmt port for your peer-keepalive link, which is a recommended best practice. I am aware of the 7K having an additional OOB port (CMP) which can be used, however I am not sure it's supported on the 9Ks. The next best thing would be to create a mgmt SVI on each physical box, add an HSRP/VRRP VIP address to it, and have that in its own VRF.

On a side note, I have just been looking at your configuration and am wondering whether you have an active peer-keepalive link?
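A hedged sketch of that suggestion, for one switch, is shown below. The VRF name INBAND-MGMT, VLAN 999, the 10.1.99.0/24 addressing and the gateway 10.1.99.254 are hypothetical. Note that on NX-OS `vrf member` clears any existing Layer 3 configuration on the interface, so it is applied before the IP address.

```
feature interface-vlan
feature hsrp

! Hypothetical dedicated VRF for inband management
vrf context INBAND-MGMT
  ip route 0.0.0.0/0 10.1.99.254

! Hypothetical mgmt SVI; the peer would use 10.1.99.3 with the same VIP
interface Vlan999
  no shutdown
  vrf member INBAND-MGMT
  ip address 10.1.99.2/24
  hsrp 99
    ip 10.1.99.1
```

Each switch stays reachable on its own interface address, while the virtual IP always lands on whichever peer is HSRP active.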

interface mgmt0

vrf member management

ip address 192.168.100.10/2

vpc domain 10

peer-keepalive destination 192.168.100.20 source 192.168.100.10 vrf management <--- this is missing

Please rate and mark as an accepted solution if you have found any of the information provided useful.

This then could assist others on these forums to find a valuable answer and broadens the community’s global network.

Kind Regards

Paul

05-06-2020 03:30 AM

Hi Paul,

Thank you for your message. I have tried to follow the recommended best practice where I can, as it's my first time using vPC. I've now added HSRP to my SVIs and all is working fine.

My peer-keepalive is up; the command has been applied, but for some reason it doesn't show in the show run output.
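A possible explanation, offered as an assumption rather than something confirmed in this thread: NX-OS hides default values in the running configuration, and `management` is the default VRF for the peer-keepalive. The two commands below are one way to check the keepalive status and to display the vPC configuration with defaults included.

```
! Keepalive status, addresses and the VRF in use
show vpc peer-keepalive

! vPC running configuration including default values
show running-config vpc all
```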

Many thanks

Neil
