- Cisco Community

Server side keepalive dropped by firewall

02-12-2024 06:34 AM

Hi,

In short: Are TCP keepalive packets blocked by default on an ASA firewall? Or are special settings required when NAT is used as well?

In long:

Background:

(server)----[outside](Natting firewall; ASA5555-X; managed by FMC)[inside]----(client)

We have a database application that sets up a connection from a client application to a database server and then (among other things) waits for a predefined trigger condition to which the client needs to react. During the night not much happens, and as long as the trigger condition has not been met, absolutely no data is sent.

The global idle TCP timeout is 60 minutes, but that shouldn't matter, because the server sends a keepalive packet exactly 120 minutes after the last data was sent. So for this application I've configured a custom idle TCP timeout of 125 minutes. This works: when I run `show conn address [client-IP]`, the session now stays up well past the 60-minute mark.

(Side note: we checked, and we can't change the keepalive settings on the database server.)

Issue:

But now the session is dropped after 125 minutes.

With a packet capture, I can see the server sending keepalive packets. But a trace on the client shows that the client never receives them. Additionally, the firewall seems to ignore them, since the idle timer for this connection is not reset. Am I missing some configuration that makes the firewall ignore keepalive packets?

I see no dropped connections in our FMC's connection events, nor anything in the intrusion events.

I could additionally configure Dead Connection Detection (DCD), but that means the firewall would send out its own keepalive packets. I would prefer that the firewall not inject its own traffic into this connection, especially since the server and client already exchange keepalives.

Any insights from someone more knowledgeable than me would be much appreciated.

Kind regards,

Wim.
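The timing described above can be sketched as a small model. This is a simplified illustration, not how the ASA implements its timers: it assumes the idle timer restarts only on packets the firewall treats as activity, and that the server's next keepalive follows a fixed 120 minutes of silence. `teardown_minute` is a hypothetical helper.

```python
from typing import Optional


def teardown_minute(idle_timeout: int, keepalive_interval: int,
                    horizon: int, keepalive_resets: bool) -> Optional[int]:
    """Return the minute at which an idle connection would be removed, or None
    if it survives until `horizon`. Minute 0 is the last real data packet.

    Simplified model (assumption): the firewall's idle timer restarts only when
    a packet counts as activity, and the server sends a keepalive after every
    `keepalive_interval` minutes of silence.
    """
    last_activity = 0
    next_keepalive = keepalive_interval
    while next_keepalive <= horizon:
        if next_keepalive - last_activity >= idle_timeout:
            break  # the idle timer already expired before this keepalive arrived
        if keepalive_resets:
            last_activity = next_keepalive  # keepalive counts as activity
        next_keepalive += keepalive_interval
    expiry = last_activity + idle_timeout
    return expiry if expiry <= horizon else None


# 125-minute custom timeout, keepalive every 120 minutes, simulate 10 hours.
print(teardown_minute(125, 120, 600, keepalive_resets=True))
print(teardown_minute(125, 120, 600, keepalive_resets=False))
print(teardown_minute(60, 120, 600, keepalive_resets=True))
```

In this model only the case where keepalives do not reset the timer ends at minute 125, which matches the drop described in the question; the last call shows why the 60-minute global default would expire before the first keepalive.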

02-12-2024 07:01 AM

Can I see the `show conn` output for this traffic?

MHM

02-12-2024 09:06 AM

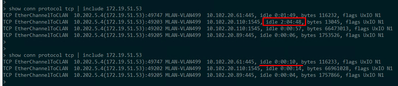

Please find the screenshot below.

The connection with the TCP idle time of about 40 minutes is the one in question.

The NAT idle timer never seems to reset (but the session remains) and can be used to see how long ago the session was created.

So at the time of the screenshot, the database connection was set up one hour and ten minutes ago, and the last trigger occurred 40 minutes ago.

Kind regards,

Wim.

02-12-2024 09:21 AM

From another troubleshooting session I've found the screenshot below, showing a connection that was still alive at just under 125 minutes and gone when I checked again.

02-12-2024 10:39 AM

I think the server does not send a TCP keepalive; it re-establishes a new connection instead.

I see two lines with the same source IP/L4 port and the same destination IP/L4 port.

Can you capture the traffic on the interface and check whether the server sends a TCP RST, a SYN, or a TCP keepalive?

MHM
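When going through such a capture, a keepalive can be told apart from other small segments by its sequence number. RFC 1122 (section 4.2.3.6) describes a keepalive as a segment with SEG.SEQ = SND.NXT - 1 carrying zero or one byte of data, and Wireshark's "TCP Keep-Alive" label uses a similar rule. The sketch below applies that rule to a hypothetical, simplified `Segment` record; reading packets from an actual capture file is left out.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """Hypothetical, simplified view of one captured TCP segment."""
    syn: bool
    ack: bool
    fin: bool
    rst: bool
    seq: int          # sequence number of this segment
    payload_len: int  # TCP payload length in bytes


def classify(seg: Segment, expected_next_seq: int) -> str:
    """Classify a segment sent by one side of the connection.

    expected_next_seq is the sequence number that side was expected to use
    next (its previous segment's sequence number plus that segment's length).
    """
    if seg.rst:
        return "RST"
    if seg.syn:
        return "SYN"
    if seg.fin:
        return "FIN"
    # RFC 1122 4.2.3.6: keepalive has SEG.SEQ = SND.NXT - 1 and 0 or 1 byte of data.
    # Sequence numbers wrap at 2**32, hence the modulo.
    if seg.ack and seg.payload_len <= 1 and seg.seq == (expected_next_seq - 1) % 2**32:
        return "keepalive"
    if seg.payload_len == 0:
        return "pure ACK"
    return "data"


# Example: the server's next expected sequence number is 1000.
print(classify(Segment(syn=False, ack=True, fin=False, rst=False, seq=999, payload_len=0), 1000))
print(classify(Segment(syn=False, ack=True, fin=False, rst=False, seq=1000, payload_len=0), 1000))
```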

02-13-2024 02:12 AM

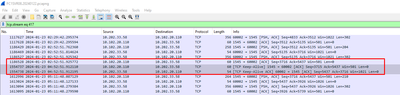

That's expected behaviour: there are indeed two sessions. One is a 'normal' database connection that runs a query every minute (so its idle timer never goes above 59 s), and the second is used to notify the client that the trigger condition has been met.

See the two screenshots below from the same netsh trace, taken on another machine that runs exactly the same application but whose traffic does not pass through our ASA5555 firewall (the images were recovered from an e-mail conversation with colleagues explaining what's going on, hence the highlights and red box).

02-13-2024 06:30 AM

I see the flags UxIO N1 in the conn output.

I think x (per-session NAT) is what makes this connection drop and forces the client and server to re-establish a new connection.

Can you disable per-session xlate (just for troubleshooting)?

MHM
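For reference, the flags in that output can be expanded using the legend that `show conn` prints on recent ASA/FTD releases. The mapping below is a partial, hand-copied subset and an assumption on my part: check it against the legend on your own device, since flag letters have changed between releases. `decode_flags` is a hypothetical helper.

```python
# Partial legend of `show conn` flags (hand-copied subset; verify on your device).
FLAG_MEANINGS = {
    "U": "up",
    "x": "per session",
    "I": "inbound data",
    "O": "outbound data",
    "N": "inspected by Snort",
}
# Digits that can follow N in the same legend.
N_SUFFIX = {
    "1": "preserve-connection enabled",
    "2": "preserve-connection in effect",
}


def decode_flags(flags: str) -> list[str]:
    """Expand a flag string such as 'UxIO N1' into readable meanings."""
    compact = flags.replace(" ", "")
    meanings = []
    i = 0
    while i < len(compact):
        ch = compact[i]
        meaning = FLAG_MEANINGS.get(ch, f"unknown flag {ch!r}")
        # N may carry a one-digit qualifier, e.g. N1.
        if ch == "N" and i + 1 < len(compact) and compact[i + 1] in N_SUFFIX:
            meaning += f" ({N_SUFFIX[compact[i + 1]]})"
            i += 1
        meanings.append(meaning)
        i += 1
    return meanings


for m in decode_flags("UxIO N1"):
    print(m)
```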

02-13-2024 03:33 AM

Hello,

The keepalive connection is between TCP ports 1545 (IBM machine?) and 60002 (which is in the dynamic/private port range, 49152-65535). I assume your ASA is allowing this connection? Can you post the running config of the ASA?

02-13-2024 03:53 AM

It is indeed the server that sends the keepalive packet and the client that acknowledges it. Our ASA is indeed allowing the connection: as long as the idle TCP timer for this connection has not expired (i.e. the trigger condition is met and the client is notified within my custom 125-minute idle TCP timeout), the connection works and keeps working, potentially indefinitely.

It's only when production slows down during the night shift that it can take much longer than those two hours before the next production lot action is taken (which meets the database trigger condition), and then the idle timer expires.

This shouldn't be a problem thanks to the keepalive the server sends, but that keepalive seems to be ignored by the ASA and never even reaches the client.

I'm sorry, but I can't post the running config of the ASA; our information security team would not allow it.

02-13-2024 08:43 AM

Hello,

Looking at your capture output, the TCP packets seem to be very small (60/54 bytes). The defaults for 'sysopt connection tcpmss' used to be 0 and 1300; maybe that has changed. You might want to try setting the minimum to 0 manually:

`sysopt connection tcpmss minimum 0`
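For reference, the 54- and 60-byte figures correspond to segments that carry little or no TCP payload. The arithmetic below assumes untagged Ethernet II, an IPv4 header without options and a TCP header without options; the helper names are hypothetical. Frames shorter than the 60-byte Ethernet minimum (excluding the 4-byte FCS) are padded, and a capture taken on the sending host often records the frame before that padding is added.

```python
ETH_HEADER = 14     # destination MAC (6) + source MAC (6) + EtherType (2)
IPV4_HEADER = 20    # IPv4 header without options
TCP_HEADER = 20     # TCP header without options
ETH_MIN_FRAME = 60  # minimum Ethernet frame size, excluding the 4-byte FCS


def unpadded_len(payload_len: int, tcp_options_len: int = 0) -> int:
    """Frame length before any Ethernet padding is added."""
    return ETH_HEADER + IPV4_HEADER + TCP_HEADER + tcp_options_len + payload_len


def wire_len(payload_len: int, tcp_options_len: int = 0) -> int:
    """Frame length once padded up to the Ethernet minimum."""
    return max(unpadded_len(payload_len, tcp_options_len), ETH_MIN_FRAME)


for payload in (0, 1):  # pure ACK, or keepalive with 0 or 1 byte of data
    print(payload, unpadded_len(payload), wire_len(payload))
```

Both sizes are consistent with segments that carry no payload, such as pure ACKs or keepalives.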
