ASR9000/XR: Load-balancing architecture and characteristics

Originally posted on 08-28-2012 07:08 AM; edited on 11-22-2022 08:23 AM by nkarpysh


Introduction

This document discusses how the ASR9000 decides which of multiple paths to take when it can load-balance. It covers IPv4 and IPv6, and both ECMP and Bundle/LAG/EtherChannel scenarios, in both L2 and L3 environments.

Core Issue

The load-balancing architecture of the ASR9000 can be a bit complex due to the platform's 2-stage forwarding. The scenarios in this article explain how a load-balancing decision is made, so you can architect your network around it.

This document assumes that you are running XR 4.1 at minimum (XR 3.9.x is not discussed); XR 4.2 enhancements are called out where applicable.

Load-balancing Architecture and Characteristics

Characteristics

ASR9000 has the following load-balancing characteristics:

- ECMP:
  - Non-recursive or IGP paths: 32-way
  - Recursive or BGP paths:
    - 8-way for Trident
    - 32-way for Typhoon
    - 64-way for Typhoon in XR 5.1+
    - 64-way for Tomahawk in XR 5.3+ (Tomahawk is only supported from XR 5.3.0 onwards)
- Bundle:
  - 64 members per bundle

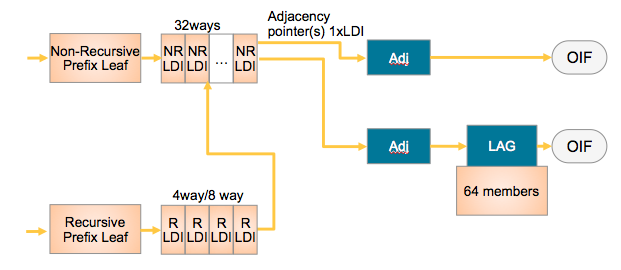

The way they tie together is shown in this simplified L3 forwarding model:

- NRLDI = Non-Recursive Load Distribution Index
- RLDI = Recursive Load Distribution Index
- ADJ = Adjacency (forwarding information)
- LAG = Link Aggregation, e.g. EtherChannel or Bundle-Ether interface
- OIF = Outgoing Interface, e.g. a physical interface like G0/0/0/0 or Te0/1/0/3

What this picture shows is that a recursive BGP route can have 8 different paths, each pointing to up to 32 potential IGP paths to that BGP next hop, and EACH of those 32 IGP paths can be a bundle of up to 64 members!

Architecture

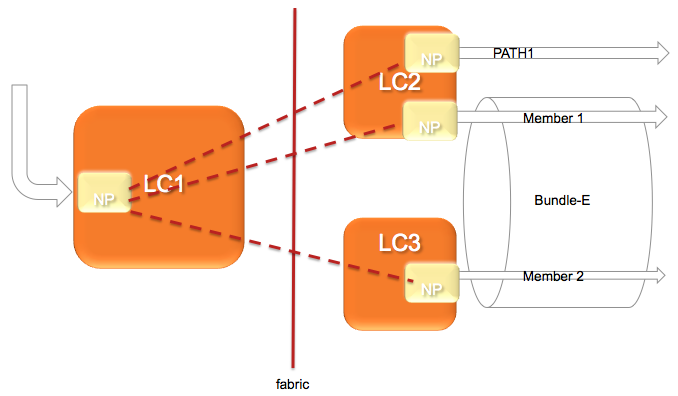

The architecture of the ASR9000 load-balancing implementation revolves around the fact that the load-balancing decision is made on the INGRESS linecard.

This ensures that we ONLY send the traffic to that LC, path or member that is actually going to forward the traffic.

The following picture shows that:

In this diagram, let's assume there are 2 paths: PATH-1 via LC2, and PATH-2 via a bundle with 2 members on different linecards.

(Note that this example is somewhat contrived, since equal-cost paths cannot normally be created from a 2-member bundle and a single physical interface.)

If the ingress NPU on LC1 determines, based on the hash computation, that PATH-1 is going to forward the traffic, the traffic is sent to LC2 only.

If the ingress NPU determines that PATH-2, the Bundle-Ether, is to be chosen, the LAG (link aggregation) selector points directly to the member, and traffic is sent only to the NP on the linecard of the member that is going to forward the traffic.

Based on the forwarding architecture, you can see that the ADJ points to a bundle, which can have multiple members.

With this model, LAG table updates (members appearing or disappearing) do NOT require a FIB update at all!
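To make the two-stage decision concrete, here is a minimal Python sketch of the idea, assuming a toy data model: the `Bundle` and `Fib` classes, the interface names, and the way the hash is sliced are all hypothetical and are not ASR9000 software structures. The FIB path list only holds a reference to the bundle, so member changes touch the bundle's LAG table and leave the FIB entry untouched.

```python
from dataclasses import dataclass, field


@dataclass
class Bundle:
    """Hypothetical LAG table: the list of active member interfaces."""
    name: str
    members: list[str] = field(default_factory=list)

    def select_member(self, hash_value: int) -> str:
        # Stage 2: pick a member from the LAG table using the hash.
        return self.members[hash_value % len(self.members)]


@dataclass
class Fib:
    """Hypothetical FIB entry: ECMP paths, each a physical OIF or a bundle."""
    paths: list

    def forward(self, hash_value: int) -> str:
        # Stage 1 (ingress linecard): pick the ECMP path. This toy uses the
        # low part of the hash for the path and the remaining bits for the
        # member; the real bit positions are system-defined.
        path = self.paths[hash_value % len(self.paths)]
        if isinstance(path, Bundle):
            # Resolve straight to the member, so traffic is only sent to the
            # linecard that owns the chosen member.
            return path.select_member(hash_value >> 1)
        return path


# PATH-1 is a single interface on LC2, PATH-2 is a 2-member bundle whose
# members sit on different linecards (all names are made up).
be1 = Bundle("Bundle-Ether1", ["TenGigE0/3/0/0", "TenGigE0/4/0/0"])
fib = Fib(["TenGigE0/2/0/0", be1])
```

Removing a member with `be1.members.remove(...)` changes which member some flows resolve to, while `fib.paths` still holds the same bundle reference, which mirrors the point that LAG updates need no FIB update.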

What is a HASH and how is it computed

In order to determine which path (ECMP) or member (LAG) to choose, the system computes a hash. Certain bits of this hash are used to identify the member or path to be taken.

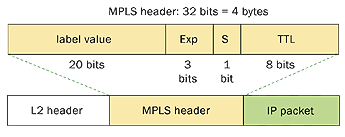

- Pre-4.0.x, Trident used a folded XOR method resulting in an 8-bit hash from which bits were selected

- Post-4.0.x, Trident uses a checksum-based calculation resulting in a 16-bit hash value

- Post-4.2.x, Trident uses a checksum-based calculation resulting in a 32-bit hash value

- Typhoon in 4.2.0 uses a CRC-based calculation of the L3/L4 info and computes a 32-bit hash

8-way recursive means that we are using 3 bits of that hash result.

32-way non-recursive means that we are using 5 bits.

64 members means that we are looking at 6 bits of that hash result.

Which bits are used for the load-balancing decision is system-defined, per load-balancing type (recursive, non-recursive or bundle member selection).
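As a worked illustration of the bit counts: addressing 8, 32 or 64 buckets takes log2(8) = 3, log2(32) = 5 and log2(64) = 6 bits. The Python sketch below slices those widths out of a 32-bit hash. The offsets in `FIELD_OFFSETS` are purely hypothetical, since the real bit positions are system-defined and not documented here.

```python
# Maximum fan-out per load-balancing type (figures from the text above).
WAYS = {"recursive": 8, "non_recursive": 32, "bundle_member": 64}

# HYPOTHETICAL bit offsets, for illustration only; the real positions
# are system-defined.
FIELD_OFFSETS = {"recursive": 0, "non_recursive": 8, "bundle_member": 16}


def bits_needed(ways: int) -> int:
    """Number of hash bits needed to address `ways` buckets (a power of 2)."""
    return ways.bit_length() - 1


def select_bucket(hash32: int, lb_type: str) -> int:
    """Extract the bucket index for `lb_type` from a 32-bit hash value."""
    width = bits_needed(WAYS[lb_type])
    mask = (1 << width) - 1
    return (hash32 >> FIELD_OFFSETS[lb_type]) & mask
```

For example, with the arbitrary hash value `0x1234ABCD`, the recursive selector (3 bits at offset 0) yields bucket 5, and the bundle member selector (6 bits at offset 16) yields bucket 52.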

Fields used in ECMP HASH

What is fed into the HASH depends on the scenario:

| Incoming Traffic Type | Load-balancing Parameters |
|---|---|
| IPv4 | Source IP, Destination IP, Source port (TCP/UDP only), Destination port (TCP/UDP only), Router ID |
| IPv6 | Source IP, Destination IP, Source port (TCP/UDP only), Destination port (TCP/UDP only), Router ID |
| MPLS - IP Payload, with < 4 labels | Source IP, Destination IP, Source port (TCP/UDP only), Destination port (TCP/UDP only), Router ID |
| From 6.2.3 onwards, for Tomahawk and later ASR9K LCs: MPLS - IP Payload, with < 8 labels | Source IP, Destination IP, Source port (TCP/UDP only), Destination port (TCP/UDP only), Router ID. Typhoon LCs retain the original behaviour of supporting IP hashing for only up to 4 labels. |
| MPLS - IP Payload, with 9 or more labels | If 9 or more labels are present, MPLS hashing is performed on labels 3, 4, and 5 (labels 7, 8, and 9 from 7.1.2 onwards). Typhoon LCs retain the original behaviour of supporting IP hashing for only up to 4 labels. |
| MPLS - IP Payload, with > 4 labels | 4th MPLS label (or innermost) and Router ID |
| MPLS - Non-IP Payload | Innermost MPLS label and Router ID |

* Non-IP payload includes Ethernet interworking, generally seen on Ethernet attachment circuits running VPLS/VPWS.

These have the following construction:

EtherHeader - MPLS (next-hop label) - MPLS (pseudowire label) - EtherHeader - Inner IP

In those scenarios the system uses the MPLS case with non-IP payload.

IP payload in MPLS is the common case for IP-based MPLS switching on LSRs, where an IP header directly follows the innermost label.
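The table can be read as a small decision function. The Python sketch below encodes only the baseline rows (plain IP, MPLS with IP payload, MPLS with more than 4 labels, and non-IP payload). It deliberately ignores the 6.2.3+ Tomahawk and 9+ label refinements, treats exactly 4 labels as IP hashing (following the statement elsewhere in this document that MPLS packets with up to 4 labels carrying IP get IP-based hashing), and the field names are descriptive labels, not ASR9000 identifiers.

```python
IP_FIELDS = ("src_ip", "dst_ip", "src_port (TCP/UDP only)",
             "dst_port (TCP/UDP only)", "router_id")


def hash_inputs(payload: str, label_count: int = 0) -> tuple[str, ...]:
    """Return the fields fed into the ECMP hash (baseline behaviour only).

    payload: "ip" if an IPv4/IPv6 header follows the label stack (or there
             is no label stack), otherwise "non-ip" (e.g. an Ethernet PW).
    label_count: number of MPLS labels; 0 means a plain IP packet.
    """
    if label_count == 0:
        if payload != "ip":
            raise ValueError("unlabelled non-IP traffic is not covered by the table")
        return IP_FIELDS
    if payload == "non-ip":
        # VPLS/VPWS style payload: hash on the label stack only.
        return ("innermost_label", "router_id")
    if label_count <= 4:
        # IP directly behind a short label stack: use the IP/L4 fields.
        return IP_FIELDS
    # Deep label stack: fall back to label-based hashing.
    return ("4th_label_or_innermost", "router_id")
```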

Router ID

The router ID is a value taken from an interface address on the system, in an attempt to provide some per-node variation.

This value is determined at boot time only, and you can see which value the system uses with:

sh arm router-ids

Example:

RP/0/RSP0/CPU0:A9K-BNG#show arm router-id

Tue Aug 28 11:51:50.291 EDT

Router-ID Interface

8.8.8.8 Loopback0

RP/0/RSP0/CPU0:A9K-BNG#

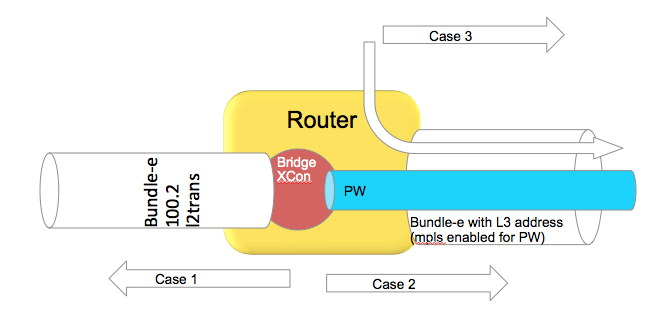

Bundle in L2 vs L3 scenarios

This section is specific to bundles. A bundle can either be an attachment circuit (AC), or it can be used to route over.

Depending on how the Bundle-Ether interface is used, different hash field calculations may apply.

When the Bundle-Ether interface has an IP address configured, we follow the ECMP load-balancing scheme described above.

When the Bundle-Ether interface is used as an attachment circuit, that is, it has the "l2transport" keyword associated with it and is used in an xconnect or bridge-domain configuration, L2-based balancing is used by default: source and destination MAC with router ID.

If you have 2 routers on each end of the ACs, the MACs do not vary much (in fact, not at all), so you may want to revert to L3-based balancing, which can be configured under l2vpn:

RP/0/RSP0/CPU0:A9K-BNG#configure

RP/0/RSP0/CPU0:A9K-BNG(config)#l2vpn

RP/0/RSP0/CPU0:A9K-BNG(config-l2vpn)#load-balancing flow ?

src-dst-ip Use source and destination IP addresses for hashing

src-dst-mac Use source and destination MAC addresses for hashing
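To see why src-dst-mac gives little spread between two routers, the Python sketch below hashes 100 IP flows that all share the same pair of router MAC addresses onto a 2-member bundle. It uses `zlib.crc32` purely as a stand-in hash (this is NOT the ASR9000 algorithm), and all addresses are made up.

```python
import zlib


def member_for(key: bytes, members: int) -> int:
    """Pick a bundle member index from a stand-in CRC32 hash of `key`."""
    return zlib.crc32(key) % members


def members_used(mode: str, flows, members: int = 2) -> set[int]:
    """Return the set of member indexes used by `flows` in the given mode.

    Each flow is (src_mac, dst_mac, src_ip, dst_ip) as strings.
    """
    used = set()
    for src_mac, dst_mac, src_ip, dst_ip in flows:
        if mode == "src-dst-mac":
            key = f"{src_mac}-{dst_mac}"
        elif mode == "src-dst-ip":
            key = f"{src_ip}-{dst_ip}"
        else:
            raise ValueError(f"unknown mode: {mode}")
        used.add(member_for(key.encode(), members))
    return used


# Two routers facing each other: the MAC pair never changes, the IPs do.
flows = [("0000.1111.aaaa", "0000.2222.bbbb", f"10.0.0.{i}", "10.1.0.1")
         for i in range(1, 101)]
```

With `src-dst-mac` every flow lands on the same member, while `src-dst-ip` spreads the same traffic over both members.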

Use case scenarios

Case 1 Bundle Ether Attachment circuit (downstream)

In this case the Bundle-Ether interface has a configuration similar to:

interface bundle-ether 100.2 l2transport

encap dot1q 2

rewrite ingress tag pop 1 symmetric

And the associated L2VPN configuration such as:

l2vpn

bridge group BG

bridge-domain BD

interface bundle-e100.2

In the downstream direction, we load-balance on the L2 information by default, unless load-balancing flow src-dst-ip is configured.

Case 2 Pseudowire over Bundle Ether interface (upstream)

The attachment circuit in this case doesn't really matter, whether it is bundle or single interface.

The associated configuration for this in the L2VPN is:

l2vpn

bridge group BG

bridge-domain BD

interface bundle-e100.2

vfi MY_VFI

neighbor 1.1.1.1 pw-id 2

interface bundle-ether 200

ipv4 add 192.168.1.1 255.255.255.0

router static

address-family ipv4 unicast

1.1.1.1/32 192.168.1.2

In this case neighbor 1.1.1.1 is found via routing, which happens to egress out of our Bundle-Ether interface.

This traffic is MPLS-encapsulated (PW), and therefore MPLS-based load-balancing is used.

Case 3 Routing through a Bundle Ether interface

In this scenario we are just routing out the bundle Ethernet interface because our ADJ tells us so (as defined by the routing).

Config:

interface bundle-ether 200

ipv4 add 200.200.1.1 255.255.255.0

show route (OSPF inter area route)

O IA 49.1.1.0/24 [110/2] via 200.200.1.2, 2w4d, Bundle-Ether200

Even if this Bundle-Ether interface is MPLS-enabled and we assign a label to reach the next hop or do label swapping, in this case the Ethernet header is followed by an MPLS header with IP directly behind it.

We are therefore able to do L3 load-balancing in this case, as per the table above.

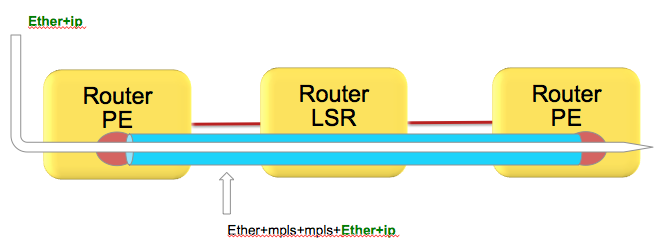

(Layer 3) Load-balancing in MPLS scenarios

As highlighted throughout this technote, whether load-balancing in MPLS scenarios is based on the MPLS label or on IP depends on the inner encapsulation.

The diagram below depicts an Ethernet frame with IP going into a pseudowire, switched through the LSR (P router) down to the remote PE.

In this case the pseudowire encapsulates the complete frame (including its Ethernet header) into MPLS, with an Ethernet header for the next hop from the left PE router to the LSR in the middle.

Although the number of labels is LESS than 4 AND there is IP available, the system cannot skip beyond the inner Ethernet header to read the IP, and therefore falls back to MPLS label-based load-balancing.

How the system differentiates between an IP header after the innermost label and a non-IP payload is explained here:

Just to recap, the MPLS header looks like this:

The important part of this picture is that it shows MPLS-IP. In the VPLS/VPWS case, this "GREEN" field is likely to start with an Ethernet header.

Because hardware forwarding devices are limited in the number of packets per second (PPS) they can handle, and this corresponds directly to the number of instructions needed to process a packet, we want to make sure we can handle a packet in the LEAST number of instructions possible.

In line with that thought process, we check the first nibble following the MPLS header, and if it is a 4 (IPv4) or a 6 (IPv6), we ASSUME that this is an IP header and interpret the data that follows as an IP header, deriving the L3 source and destination.

This works great in the majority of scenarios because, hey, let's be honest, MAC addresses for the longest time started with 00-0...

In other words, not a 4 or 6, so we'd default to MPLS-based balancing, which is what we want for VPLS/VPWS.

However, these days we see MAC addresses that no longer start with zeros, and in fact 4s and 6s are seen!

This fools the system into believing that the inner packet is IP, while in reality it is an Ethernet header.

There is no good way to classify an IP header with a limited number of instruction cycles without affecting performance.

In an ideal world you'd want to use an MD5 hash and all the checks possible to make the perfect decision.

Reality is different: no one wants to pay what it would cost to design ASICs that run such a comprehensive set of checks at high performance without affecting the PPS rate.

Bottom line: if your DMAC starts with a 4 or 6, you have a situation.

Solution

Use the MPLS control word.

The control word is negotiated end to end and inserts a special 4-byte word that starts with zeros, precisely for this purpose.

The system will now read a 0 instead of a 4 or 6 and default to MPLS based balancing.
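The first-nibble heuristic and the control-word fix can be modeled in a few lines. This Python sketch assumes the bytes passed in are whatever immediately follows the bottom-of-stack label; it only illustrates the decision described above, not NP microcode. The MAC addresses are made up, and the control word is simplified to 4 zero bytes.

```python
def payload_looks_like_ip(after_bos: bytes) -> bool:
    """First-nibble check on the bytes after the bottom-of-stack label.

    A first nibble of 4 or 6 is ASSUMED to start an IPv4 or IPv6 header.
    """
    first_nibble = after_bos[0] >> 4
    return first_nibble in (4, 6)


def ethernet_header(dmac: str, smac: str, ethertype: int = 0x0800) -> bytes:
    """Build a 14-byte Ethernet header from colon-separated MAC strings."""
    return (bytes.fromhex(dmac.replace(":", ""))
            + bytes.fromhex(smac.replace(":", ""))
            + ethertype.to_bytes(2, "big"))


# Simplified control word: 4 zero bytes, so the first nibble is always 0.
CONTROL_WORD = bytes(4)

# PW payloads without a control word (made-up MAC addresses).
old_style = ethernet_header("00:1b:54:aa:bb:cc", "00:1b:54:11:22:33")  # DMAC starts with 0
new_style = ethernet_header("42:ab:cd:00:00:01", "00:1b:54:11:22:33")  # DMAC starts with 4
```

Here `old_style` is correctly treated as non-IP, `new_style` is misread as IPv4, and `CONTROL_WORD + new_style` is treated as non-IP again, so MPLS-based balancing is used.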

Configuration

To enable the control word, use the following template:

l2vpn

pw-class CW

encapsulation mpls

control-word

!

!

xconnect group TEST

p2p TEST_PW

interface GigabitEthernet0/0/0/0

neighbor 1.1.1.1 pw-id 100

pw-class CW

!

!

!

!

Alternative solutions: Fat Pseudowire

Since you might have little control over the inner label (the PW label), and you probably want to ensure some sort of load-balancing, especially on P routers that have no knowledge of the offered service or of the MPLS packets they transport, another solution is available, known as FAT Pseudowire.

FAT PW inserts a "flow label" whose value is computed like a hash, to provide some hop-by-hop variation and more granular load-balancing. Special care is taken to ensure that there is variation (based on the l2vpn command, see below), that no reserved values are generated, and that the values don't collide with allocated label values.

FAT PW is supported starting with XR 4.2.1 on both Trident- and Typhoon-based linecards. From 6.5.1 onward, FAT labels are supported over PWHE.
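As a rough illustration of the flow-label idea, the Python sketch below derives a label from the source and destination IP addresses (in the spirit of `load-balancing flow src-dst-ip`) and maps it into the 20-bit label space while skipping the reserved values 0-15. The CRC32 hash and the simple range mapping are assumptions for illustration; the actual NP computation, and how it avoids allocated label values, is not described here.

```python
import zlib

MPLS_LABEL_MAX = (1 << 20) - 1  # MPLS labels are 20-bit values
RESERVED_MAX = 15               # labels 0-15 are reserved


def flow_label(src_ip: str, dst_ip: str) -> int:
    """Hypothetical flow-label computation for one flow (src-dst-ip style)."""
    h = zlib.crc32(f"{src_ip}->{dst_ip}".encode())  # stand-in hash
    usable = MPLS_LABEL_MAX - RESERVED_MAX          # number of non-reserved labels
    # Map the hash into 16 .. 2^20-1 so no reserved label value is produced.
    return RESERVED_MAX + 1 + (h % usable)
```

All packets of a flow get the same label, so the flow stays on one path, while different flows get different labels that P routers can hash on without any knowledge of the PW payload.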

Packet transformation with a Flow Label

Configuration of FAT Pseudowire

The following is a configuration example:

l2vpn

load-balancing flow src-dst-ip

pw-class test

encapsulation mpls

load-balancing

flow-label both static

!

!

You can also affect the way that the flow label is computed:

Under L2VPN configuration, use the “load-balancing flow” configuration command to determine how the flow label is generated:

l2vpn

load-balancing flow src-dst-mac

This is the default configuration, and will cause the NP to build the flow label from the source and destination MAC addresses in each frame.

l2vpn

load-balancing flow src-dst-ip

This is the recommended configuration, and will cause the NP to build the flow label from the source and destination IP addresses in each frame.

FAT Pseudowire TLV

The Flow-Aware Transport (FAT) PW signalled sub-TLV ID originally carried the value 0x11, as specified in draft-ietf-pwe3-fat-pw. This value was later corrected in the draft to 0x17, which is the flow label sub-TLV identifier assigned by IANA.

This matters when interoperating between XR 4.3.1 and earlier and XR 4.3.2 and later: all XR releases 4.3.1 and prior that support FAT PW default to 0x11, while all XR releases 4.3.2 and later default to 0x17.

Solution:

Use the following config on XR 4.3.2 and later to configure the sub-TLV ID:

pw-class <pw-name>

encapsulation mpls

load-balancing

flow-label both

flow-label code 17

NOTE: I got a lot of questions about the apparent conflict between the 0x11 to 0x17 change (as driven by IANA) and the config requirement for the number 17 in this example.

The crux is that the flow label code is configured in DECIMAL, while the IANA/draft numbers mentioned are HEX.

So 0x11, the old value, is 17 decimal, which is confusingly similar to 0x17, the new IANA-assigned number. Very annoying, thank IANA

(or we could have made the knob in hex, I guess)
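The arithmetic behind the note, as a quick Python check: the CLI knob takes a decimal value, so the old hex identifier 0x11 corresponds to 17, while the IANA value 0x17 is 23 in decimal.

```python
OLD_SUB_TLV = 0x11   # sub-TLV value from the original draft
IANA_SUB_TLV = 0x17  # sub-TLV value assigned by IANA

# The flow-label code is entered in decimal, so convert the hex identifiers.
print(OLD_SUB_TLV, IANA_SUB_TLV)  # prints: 17 23
```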

Load-balancing and priority configurations

In the case of VPWS or VPLS, at the ingress PE side, it’s possible to change the upstream load-balancing toward the MPLS core in three different ways:

1. At the L2VPN sub-configuration mode with “load-balancing flow” command with the following options:

RP/0/RSP1/CPU0:ASR9000(config-l2vpn)# load-balancing flow ?

src-dst-ip

src-dst-mac [default]

2. At the pw-class sub-configuration mode with “load-balancing” command with the following options:

RP/0/RSP1/CPU0:ASR9000(config-l2vpn-pwc-mpls-load-bal)#?

flow-label [see FAT Pseudowire section]

pw-label [per-VC load balance]

3. At the Bundle interface sub-configuration mode with “bundle load-balancing hash” command with the following options:

RP/0/RSP1/CPU0:ASR9000(config-if)#bundle load-balancing hash ? [For default, see previous sections]

dst-ip

src-ip

It’s important to understand not only these commands but also their precedence: 1 is weaker than 2, which is weaker than 3.

Example:

l2vpn

load-balancing flow src-dst-ip

pw-class FAT

encapsulation mpls

control-word

transport-mode ethernet

load-balancing

pw-label

flow-label both static

interface Bundle-Ether1

(...)

bundle load-balancing hash dst-ip

Because of the priorities, on the egress side of the ingress PE (to the MPLS Core), we will do per-dst-ip load-balance (3).

If the bundle-specific configuration is removed, we will do per-VC load-balance (2).

If the pw-class load-balance configuration is removed, we will do per-src-dst-ip load-balance (1).

with thanks to Bruno Oliveira for this priority section
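The precedence rule can be expressed as a small resolver. This Python sketch uses hypothetical argument names and returns the strongest configured option, mirroring the three outcomes described above; it models only which setting wins, not what each option actually hashes on.

```python
from typing import Optional


def effective_lb(l2vpn_flow: Optional[str] = None,
                 pw_class_lb: Optional[str] = None,
                 bundle_hash: Optional[str] = None) -> str:
    """Resolve which load-balancing setting wins: 3 > 2 > 1.

    l2vpn_flow:  option 1, "src-dst-ip" or "src-dst-mac" (default src-dst-mac)
    pw_class_lb: option 2, e.g. "pw-label"
    bundle_hash: option 3, e.g. "dst-ip" or "src-ip"
    """
    if bundle_hash is not None:
        return f"bundle hash {bundle_hash}"
    if pw_class_lb is not None:
        return f"pw-class {pw_class_lb}"
    return f"l2vpn flow {l2vpn_flow or 'src-dst-mac'}"
```

Calling it with all three settings, as in the example configuration, yields the bundle setting; dropping arguments from the right reproduces the per-VC and per-src-dst-ip fallbacks.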

P2MP MPLS TE Tunnels

Only one bundle member will be selected to forward traffic on the P2MP MPLS TE mid-point node.

Possible alternatives that would achieve better load balancing are: a) increase the number of tunnels or b) switch to mLDP.

IPv6

In releases before 4.2.0, the IPv6 hash calculation uses only the last 64 bits of the address, folded and fed into the hash together with the regular router ID and L4 info.

In 4.2.0 we made further enhancements so that the full IPv6 address is taken into consideration, along with the L4 info and router ID.
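One consequence of the pre-4.2.0 behaviour is that addresses differing only in their upper 64 bits (for example, the same interface ID in different /64 prefixes) contribute identical input to the hash. The Python sketch below shows just that masking step, using the standard `ipaddress` module; the subsequent folding into the hash is not modeled.

```python
import ipaddress

LOW_64 = (1 << 64) - 1


def pre_420_input(addr: str) -> int:
    """Portion of an IPv6 address used before 4.2.0: the last 64 bits."""
    return int(ipaddress.IPv6Address(addr)) & LOW_64


def full_input(addr: str) -> int:
    """From 4.2.0 onwards the full 128-bit address is considered."""
    return int(ipaddress.IPv6Address(addr))
```

For instance, `2001:db8:1::1` and `2001:db8:2::1` give the same pre-4.2.0 input but different full inputs.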

Determining load-balancing

You can determine the load-balancing on the router by using the following commands:

L3/ECMP

For IP:

RP/0/RSP0/CPU0:A9K-BNG#show cef exact-route 1.1.1.1 2.2.2.2 protocol udp ?

source-port Set source port

You have the ability to only specify L3 info, or include L4 info by protocol with source and destination ports.

It is important to understand that the ASR9K does FLOW-based hashing; that is, all packets belonging to the same flow take the same path.

If one flow is more active or requires more bandwidth than another flow, path utilization may not be perfectly equal.

UNLESS there is enough variation in the L3/L4 fields, this cannot be alleviated; it is commonly seen in lab tests due to the limited number of flows.
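A quick simulation illustrates flow-based hashing. Assuming a stand-in CRC32 hash (not the ASR9000 one) and made-up addresses and ports, the Python sketch below counts how many UDP flows land on each of 4 equal-cost paths: 2 flows can never use more than 2 paths, while a few thousand flows spread across all of them.

```python
import zlib
from collections import Counter


def path_for(src: str, dst: str, sport: int, dport: int, paths: int) -> int:
    """Flow-based choice: every packet of the same 5-tuple maps to one path."""
    key = f"{src},{dst},17,{sport},{dport}".encode()  # 17 = UDP
    return zlib.crc32(key) % paths


def spread(num_flows: int, paths: int = 4) -> Counter:
    """Count flows per path for `num_flows` UDP flows between two hosts."""
    return Counter(path_for("10.0.0.1", "10.1.0.1", 10000 + i, 5000, paths)
                   for i in range(num_flows))
```

Even with many flows the per-path counts are only roughly equal, and a single heavy flow still stays on one path, which is why lab tests with a handful of streams often look unbalanced.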

For MPLS-based hashing:

RP/0/RSP0/CPU0:A9K-BNG#sh mpls forwarding exact-route label 1234 bottom-label 16000 ... location 0/1/cpu0

This command gives the output interface chosen as a result of hashing with MPLS label 16000. The bottom-label (in this case '16000') is either the VC label (for PW L2 traffic) or the bottom label of the MPLS stack (for MPLS-encapsulated L3 traffic with more than 4 labels). Note that for regular MPLS packets (with <= 4 labels) encapsulating an L3 packet, only IP-based hashing is performed on the underlying IP packet.

Also note that the MPLS hash algorithm differs between Trident and Typhoon. The more varied the labels, the better the distribution. However, on Trident there is a known behavior of the MPLS hash on bundle interfaces: if a bundle interface has an even number of member links, the MPLS hash causes only half of these links to be utilized. To get around this, you may have to configure the "cef load-balancing algorithm adjust 3" command on the router, or use an odd number of member links in the bundle interface. This limitation applies only to Trident line cards, not Typhoon.

Bundle member selection

RP/0/RSP0/CPU0:A9K-BNG#bundle-hash bundle-e 100 loc 0/0/cPU0

Calculate Bundle-Hash for L2 or L3 or sub-int based: 2/3/4 [3]: 3

Enter traffic type (1.IPv4-inbound, 2.MPLS-inbound, 3:IPv6-inbound): [1]: 1

Single SA/DA pair or range: S/R [S]:

Enter source IPv4 address [255.255.255.255]:

Enter destination IPv4 address [255.255.255.255]:

Compute destination address set for all members? [y/n]: y

Enter subnet prefix for destination address set: [32]:

Enter bundle IPv4 address [255.255.255.255]:

Enter L4 protocol ID. (Enter 0 to skip L4 data) [0]:

Invalid protocol. L4 data skipped.

Link hashed [hash_val:1] to is GigabitEthernet0/0/0/19 LON 1 ifh 0x4000580

The hash type (L2 or L3) depends on whether the Bundle-Ether interface is used as an attachment circuit in a bridge domain or VPWS cross-connect, or whether it is used to route over (e.g. it has an IP address configured).

Polarization

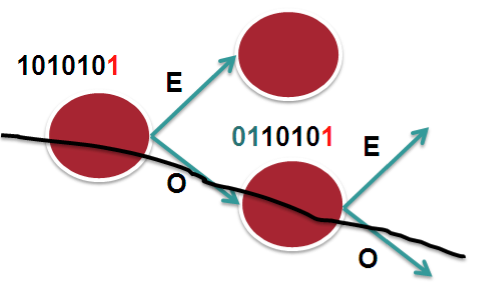

Polarization pertains mostly to ECMP scenarios and is the effect of routers in a chain making the same load-balancing decision.

The following picture tries to explain that.

In this scenario we assume 2 bucket, 1 bit on a 7 bit hash result. Let's say that in this case we only look at bit-0. So it becomes an "EVEN" or "ODD" type decision. The routers in the chain have access to the same L3 and L4 fields, the only varying factor between them is the routerID.

In the case that we have RID's that are similar or close (which is not uncommon), the system may not provide enough variation in the hash result which eventually leads to subsequent routers to compute the same hash and therefor polarize to a "Southern" (in this example above) or "Northern" path.

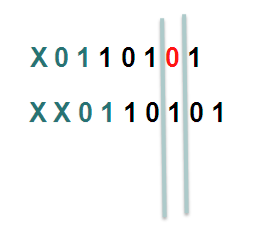

In XR 4.2.1 via a SMU or in XR 4.2.3 in the baseline code, we provide a knob that allows for shifting the hash result. By choosing a different "shift" value per node, we can make the system look at a different bit (for this example), or bits.

In this example the first line shifts the hash by 1, the second one shifts it by 2.

Considering that we have more buckets in the real implementation and more bits that we look at, the member or path selection can alter significantly based on the same hash but with the shifting, which is what we ultimately want.
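The polarization effect and the shift knob can be simulated. The Python sketch below assumes the worst case described above: both routers in the chain compute the same hash for a flow (identical L3/L4 fields, router IDs adding no variation), and each makes a 2-way decision from a single bit. The CRC32 hash and flow names are stand-ins, not the ASR9000 algorithm.

```python
import zlib


def flow_hash(flow_id: int) -> int:
    """Stand-in hash shared by both routers (worst case: identical result)."""
    return zlib.crc32(f"flow-{flow_id}".encode())


def choose(h: int, shift: int = 0) -> int:
    """2-way ECMP decision: look at one bit of the hash after shifting."""
    return (h >> shift) & 1


def second_hop_choices(flows: int, shift_r2: int) -> set[int]:
    """Paths that R2 picks for the flows R1 sent on path 0 (the "even" ones)."""
    choices = set()
    for f in range(flows):
        h = flow_hash(f)
        if choose(h, shift=0) == 0:            # R1 looks at bit 0
            choices.add(choose(h, shift_r2))   # R2 looks at bit `shift_r2`
    return choices
```

Without a shift, every flow R2 receives from R1's "even" path also goes down R2's "even" path, leaving the other path idle; with a shift of 1 on R2, it looks at a different bit and those flows spread over both paths again.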

HASH result Shifting

- Trident allows a maximum shift of 4 (for performance reasons)

- Typhoon allows a maximum shift of 32.

Command

cef load-balancing algorithm adjust <value>

The command accepts values larger than 4 on Trident; if you configure a value larger than 4 for Trident, it is effectively applied as a modulo, so a shift of 1 is the same as a shift of 5.

Fragmentation and Load-balancing

When the system detects fragmented packets, it no longer uses L4 information. The reason is that if L4 info were used, subsequent fragments, which no longer contain the L4 info (they have the L3 header only!), would produce a different hash result from the initial fragment and could take a different path, resulting in out-of-order delivery.

Regardless of release, regardless of hardware (ASR9K or CRS), when fragmentation is detected we only use L3 information for the hash computation.
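The rule can be sketched as a hash-key builder for the IPv4 case. This Python example uses hypothetical field names and treats a packet as fragmented when the More Fragments flag is set or the fragment offset is non-zero (the standard IPv4 definition); it shows why dropping L4 keeps all fragments of a datagram on the same path.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Ipv4Packet:
    src: str
    dst: str
    more_fragments: bool = False
    frag_offset: int = 0
    sport: Optional[int] = None  # L4 ports: absent in non-first fragments
    dport: Optional[int] = None


def hash_key(p: Ipv4Packet) -> tuple:
    """Build the hash input: L3 only for fragments, L3 + L4 otherwise."""
    fragmented = p.more_fragments or p.frag_offset > 0
    if fragmented:
        return (p.src, p.dst)
    return (p.src, p.dst, p.sport, p.dport)
```

The first fragment (which still carries the ports) and the later fragments (which do not) produce the same key, so they hash to the same path; an unfragmented packet keeps the full L3 + L4 key.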

Hashing updates

- Starting with release 6.4.2, when a layer 2 interface (EFP) receives MPLS-encapsulated IP packets, the hashing algorithm, if configured for src-dst-ip, picks up the IP addresses from the ingress packet to create the hash. Before 6.4.2, the hash was based on MAC.

- Starting with XR 6.5, layer 2 interfaces receiving GTP-encapsulated packets automatically pick up the TEID to generate a hash when src-dst-ip is configured.

Related Information

Xander Thuijs, CCIE #6775

Sr Tech Lead ASR9000


Hi

What about Tomahawk linecards on 5.3.3? The cli only mentions Typhoon and Trident. What are the valid values for "cef load-balancing algorithm adjust" on a Tomahawk LC?

Thanks.

AA


hi Alexandre, it is the same for TH as with typhoon, 32 position shift max (the hash is only 32 bits, so shifting more wouldn't help much :)

xander


Hi

Thanks a lot for your help.

AA


Hi, Xander

A question here. If the hash is computed in the Parse stage, how can EoMPLS (AC -> PW) LB be based on the VC label?

The label imposition should be in the Modify/Rewrite stage, isn't it ?

Thanks


That's because by default we don't use the hash to load balance EoMPLS across ECMP. We only use the PW label. If you configure this:

l2vpn

load-balancing flow {src-dst-mac | src-dst-ip}

then we load-balance PW traffic across ECMPs based on L2 or L3 payload info; i.e. flows from a PW are distributed over ECMPs.


Hi, Xander

More confusion here.

1/

The command "load-balancing flow {src-dst-mac | src-dst-ip}" should only affect LB for downstream traffic (PW ->AC), right ?

This command should not change the LB behavior for AC-PW upstream traffic ??

In your "Case 1", you did mention this "In the downstream direction by default we are load-balancing with the L2 information, unless the load-balancing flow src-dest-ip is configured". But it didn't say if it also applies to upstream direction or not.

2/As you said by default we only use PW label for EoMPLS, does it mean the LB decision here is in Modify/Rewrite stage in NP?

If this is the case, what's the difficulty that Flow label cannot be used like VC label?

Thanks


The BRKSPG-2904 slide deck from Cisco Live San Diego 2015 or Cisco Live Berlin 2016 has a section dedicated to L2VPN load-balancing. It explains all scenarios with very good illustrations. I'm sure all will be clear when you go through it. Please look for the slide deck on the ciscolive.com portal and let us know afterwards if you still have questions.

regards,

Aleksandar


Hi Xander,

Thanks again for this information.

Regarding this topic, an issue has come to our attention: we have two ASR9010s, which transport PW traffic for CESoPSN services from connected ASR903 devices. The problem is that no load-balancing is achieved between the ASR9010 and the ASR903 (which have bundled interfaces).

Load-Balancing is only achieved between ASR903 and other ASR903 in the topology. The uplinks from the ASR903 towards the ASR9010 are L3 interfaces and show no load balancing.

We have configured the "control-word", "load-balancing in the pw-class" (FAT PW), but it is no use. Added to that, we configured "bundle load-balancing hash src-ip", and played with the hash to see any change, but with no other result.

Any suggestions?

Looking forward to your advice. Thanks in advance.

Alejandro Rivera


if there is no load balancing in the upstream direction (asr903 to asr9010), you obviously have to troubleshoot that on the asr903 side. For asr9k L2VPN troubleshooting lookup BRKSPG-2904 from Cisco Live Berlin 2016 or San Diego 2015. There's a very good section on L2VPN load-balancing on asr9k.


Hi Xander,

this document is great. I have one question because we will probably use Tomahawk LCs.

You wrote this:

Typhoon 4.2.0 uses a CRC based calculation of the L3/L4 info and computes a

Is it the same with Tomahawk hardware?


hey smail! thanks :) and that is correct, TH has the same as typhoon.

xander


Hello Xander,

What do you recommend to achieve effective ECMP with Auto-Mesh TE tunnels? I have set up an environment where the overlay topology handled by MPLS TE provides the forwarding plane for IPv4 and VPNv4 traffic. All the CEs are multi-homed to both PEs, so I would expect traffic to be properly load-balanced across the transit network.

In summary:

- No DS-TE

- Auto-Mesh TE tunnel with 02 mesh groups with the same priority

- LDP tunneling through TE tunnel

Results: ECMP non-effective for IPv4 and VPNv4 traffic

nyc-ce1# traceroute 172.27.4.2 source 172.19.4.2 vrf BOA

traceroute to 172.27.4.2 (172.27.4.2) from 172.19.4.2 (172.19.4.2), 30 hops max, 40 byte packets

1 172.19.192.89 (172.19.192.89) 1.336 ms 1.003 ms 0.932 ms

2 172.16.0.109 (172.16.0.109) 1.573 ms 1.654 ms 1.64 ms

[Label=24086 E=4 TTL=1 S=0, Label=24008 E=4 TTL=1 S=1]

[Label=24032 E=4 TTL=1 S=0, Label=24008 E=4 TTL=1 S=1]

[Label=24086 E=4 TTL=1 S=0, Label=24008 E=4 TTL=1 S=1]

3 172.16.0.9 (172.16.0.9) 1.777 ms 1.692 ms 1.731 ms

[Label=24148 E=4 TTL=1 S=0, Label=24008 E=4 TTL=2 S=1]

[Label=24148 E=4 TTL=1 S=0, Label=24008 E=4 TTL=2 S=1]

[Label=24115 E=4 TTL=1 S=0, Label=24008 E=4 TTL=2 S=1]

4 172.16.0.42 (172.16.0.42) 1.777 ms 1.617 ms 1.671 ms

[Label=24008 E=4 TTL=1 S=1]

5 172.27.192.30 (172.27.192.30) 1.829 ms 1.755 ms 172.27.4.2 (172.27.4.2) 2.003 ms

lax-ce1# traceroute 172.19.4.2 source 172.27.4.2 vrf BOA

traceroute to 172.19.4.2 (172.19.4.2) from 172.27.4.2 (172.27.4.2), 30 hops max, 40 byte packets

1 172.27.192.25 (172.27.192.25) 1.277 ms 1.022 ms 0.942 ms

2 172.16.0.41 (172.16.0.41) 1.726 ms 1.636 ms 1.646 ms

[Label=24023 E=4 TTL=1 S=0, Label=24010 E=4 TTL=1 S=1]

[Label=24024 E=4 TTL=1 S=0, Label=24010 E=4 TTL=1 S=1]

[Label=24023 E=4 TTL=1 S=0, Label=24010 E=4 TTL=1 S=1]

3 172.16.0.14 (172.16.0.14) 1.696 ms 1.637 ms 1.626 ms

[Label=24143 E=4 TTL=1 S=0, Label=24010 E=4 TTL=2 S=1]

[Label=24144 E=4 TTL=1 S=0, Label=24010 E=4 TTL=2 S=1]

[Label=24143 E=4 TTL=1 S=0, Label=24010 E=4 TTL=2 S=1]

4 172.16.0.138 (172.16.0.138) 1.853 ms 1.651 ms 1.619 ms

[Label=24010 E=4 TTL=1 S=1]

5 172.19192.30 (172.19192.30) 1.884 ms 1.74 ms 1.778 ms

6 172.19.4.2 (172.19.4.2) 2.223 ms 2.066 ms 2.065 ms

RP/0/RP0/CPU0:lax-pe1#show cef vrf BOA 172.16.106.5/32 detail

172.16.106.5/32, version 5187, internal 0x5000001 0x0 (ptr 0x72261a68) [1], 0x0 (0x0), 0x208 (0x729549c0)

Updated Jul 14 18:18:43.760

Prefix Len 32, traffic index 0, precedence n/a, priority 3

gateway array (0x71e8ef2c) reference count 5, flags 0x404038, source rib (7), 0 backups

[1 type 1 flags 0x40441 (0x72386e90) ext 0x0 (0x0)]

LW-LDI[type=0, refc=0, ptr=0x0, sh-ldi=0x0]

gateway array update type-time 3 Jul 17 18:40:29.912

LDI Update time Jul 17 18:40:29.912

via 172.16.108.7/32, 6 dependencies, recursive, backup [flags 0x6100]

path-idx 0 NHID 0x0 [0x72426eb0 0x0]

recursion-via-/32

next hop VRF - 'default', table - 0xe0000000

next hop 172.16.108.7/32 via 24076/0/21

next hop 172.16.108.7/32 tt106 labels imposed {ImplNull 24003}

next hop 172.16.108.7/32 tt115 labels imposed {ImplNull 24003}

via 172.16.108.2/32, 6 dependencies, recursive [flags 0x6000]

path-idx 1 NHID 0x0 [0x72425638 0x0]

recursion-via-/32

next hop VRF - 'default', table - 0xe0000000

next hop 172.16.108.2/32 via 24077/0/21

next hop 172.16.108.2/32 tt119 labels imposed {ImplNull 24003}

next hop 172.16.108.2/32 tt121 labels imposed {ImplNull 24003}

Load distribution: 0 (refcount 1)

Hash OK Interface Address

0 Y Unknown 24077/0

RP/0/RP0/CPU0:lax-pe1#traceroute 172.16.108.2 source 172.16.108.1

Sun Jul 17 19:07:19.874 MST

Type escape sequence to abort.

Tracing the route to 172.16.108.2

1 172.16.0.41 [MPLS: Label 24152 Exp 0] 1 msec 1 msec 0 msec

2 172.16.0.10 [MPLS: Label 24047 Exp 0] 0 msec 0 msec 0 msec

3 172.16.0.110 1 msec * 0 msec


Hi Xander,

We use ASR9k as a BNG and terminate the sessions on PW-Eth headends. The BNGs (9001) are connected to the rest of the network over two 4x10G bundles. The rest of network is also based on Eth-bundles (various sizes). At this stage we have a handful (5-10) of PWE3 per BNG with each of them being under 10G of traffic. Some of those PWE3 will reach 10G shortly (once that happens the egress PEs will start using PW-Eth as the AC).

How can we ensure good distribution of traffic between the BNGs and the Ps, and then further down all the way to egress PEs? I don't think the FAT label is supported on the BNG in PW-Eth mode. Also, as the traffic continues to grow we end up with less PWE3 per BNG (so the number of labels drops).


with PWHE there is indeed no possibility to use FAT labels. the load-balancing will be based on the pw label.

you'd have to add more pw's to get more labels to get some spread over members and paths between PE's.

another option is to have a pw popper before the bng so you can use fat label between the PE's and do plain ip balancing between the PE and the BNG.

so while the PWHE simplifies the topology, it has that restriction in terms of (traffic) distribution.

we are working on some next gen topologies like evpn based, but that is not until 6.2 or later when that becomes mainstream.

cheers!

xander


Isn't EVPN just control-plane technology? How does that solve data-plane issue where current NP code doesn't load-balance (PWHE, considering local FAT label, etc)?
