Building your own underlay - spine/leaf

12-05-2017 07:54 AM - edited 03-08-2019 01:00 PM

Hi,

I'm working for a client that wants to implement VMware NSX as the overlay but also wants a new network for the underlay. I'm working on this underlay. They will add VXLAN with NSX after this.

He wants a spine/leaf architecture. The easiest way would be to use ACI, but he doesn't want it (and it won't happen).

So, I need to build a spine/leaf architecture manually as underlay. All links between spine and leaf must be active.

In the past, I did this with a FabricPath setup with L3 at the spine. Now this client wants Nexus 9000 (NX-OS mode), so I think there is no FabricPath.

So right now, I need to build a custom fabric from scratch. If I had only two spines, I could do vPC from the leafs to them, but as soon as they add a new spine, the setup won't work.

How would you configure the fabric (between spine and leaf)? Should I use L3 interfaces between spine and leaf and run a routing protocol? In that case, the default gateway for the VMware subnets (VTEP) would be on the leaf. Is that a good setup?

As you can tell, I'm at the beginning and trying to unravel all this.

Does anyone have documentation? I don't see much documentation about building your own fabric from scratch without using FabricPath.

To sum up:

- Spine/leaf architecture

- All links active

- Serves as the underlay for VMware NSX

- Nexus 9000 in NX-OS mode

- Can't really use vPC because the spines would be limited to two

Any input would be great.

Thanks

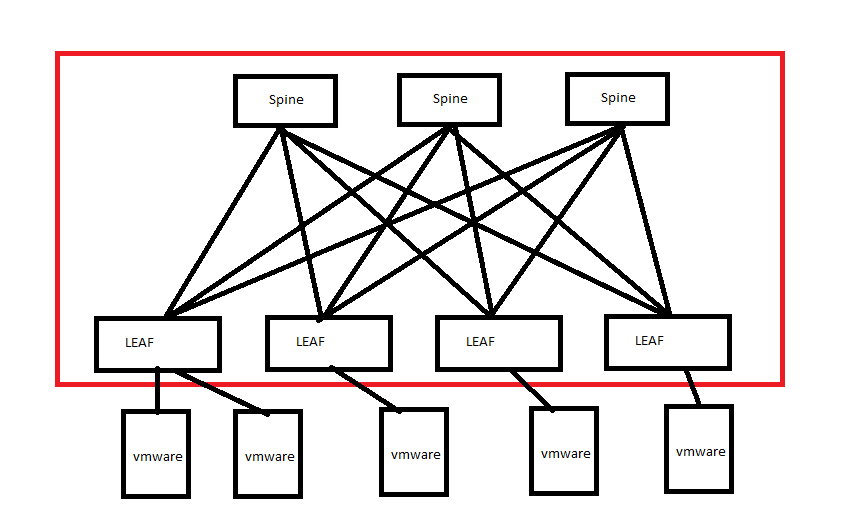

EDIT: I added a wonderful MS Paint drawing. I'm looking to configure what is in the red box. I didn't draw it, but it's possible that some servers will have to connect to two leafs (vPC) for redundancy.

Labels: Other Switching

12-05-2017 08:51 AM

Check:

12-05-2017 10:23 AM - edited 12-05-2017 10:30 AM

Thanks for the links.

I'll have to take a deeper look at the first one, but the last two are more about the overlay than the underlay.

They talk a lot about VXLAN, but since NSX is going to do the VXLAN (overlay), I just want to be sure the underlay is OK.

I found this:

http://d2zmdbbm9feqrf.cloudfront.net/2015/eur/pdf/BRKDCT-3378.pdf

On page 39, there is a section about the underlay with some information on ways to build it, such as OSPF, IS-IS and BGP.

12-05-2017 12:01 PM

I found the video recording of this PowerPoint presentation. Everything is very clear now.

To summarize:

- Connect spines and leafs through routed ports (no switchport)

- Configure a loopback address on every node

- Use ip unnumbered on all fabric interfaces and bind it to the loopback address

- Use a protocol like OSPF and set the links to point-to-point

With this, you have a beautiful fabric with your own configuration. All leafs become the default gateway for your VTEP networks (VMware hosts), and everything is routed with ECMP, with all links active!
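As a rough sketch of the four steps above, the hypothetical Python helper below renders an NX-OS-style underlay snippet for one switch. Several details are my own assumptions and do not come from the thread: the OSPF process tag `UNDERLAY`, area `0.0.0.0`, `loopback0` as the unnumbered source, MTU 9216, and the interface names. Treat the output as a starting point to check against the Nexus 9000 configuration guide, not a validated config.

```python
"""Hypothetical helper: render an NX-OS-style underlay snippet for one switch.

Assumptions (not from the thread): OSPF tag "UNDERLAY", area 0.0.0.0,
loopback0 as the unnumbered source, MTU 9216, caller-supplied port names.
"""
from typing import List


def render_underlay(hostname: str, loopback_ip: str, fabric_ports: List[str],
                    ospf_tag: str = "UNDERLAY", area: str = "0.0.0.0") -> str:
    lines = [
        f"hostname {hostname}",
        "feature ospf",
        "",
        f"router ospf {ospf_tag}",
        f"  router-id {loopback_ip}",
        "",
        # The loopback is the only address this node needs for its fabric links.
        "interface loopback0",
        f"  ip address {loopback_ip}/32",
        f"  ip router ospf {ospf_tag} area {area}",
        "",
    ]
    for port in fabric_ports:
        lines += [
            f"interface {port}",
            "  no switchport",            # routed port, not L2
            "  mtu 9216",                 # assumed jumbo MTU for NSX VXLAN headers
            "  medium p2p",               # unnumbered Ethernet links use p2p medium
            "  ip unnumbered loopback0",  # borrow the loopback address
            "  ip ospf network point-to-point",
            f"  ip router ospf {ospf_tag} area {area}",
            "  no shutdown",
            "",
        ]
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical leaf with two uplinks toward the spines.
    print(render_underlay("leaf1", "10.0.0.11", ["Ethernet1/49", "Ethernet1/50"]))
```

Because every fabric port borrows the loopback address, adding a spine only means adding ports to the list. No per-link /30 or /31 subnets have to be planned.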

04-05-2018 08:12 PM

Hi Vinny,

You mentioned "All leafs will become default gateways for VTEP networks". Could you please explain the following items? I have a similar requirement for one of my projects.

1. How many uplinks did you configure from one leaf to one spine?

2. What IP addressing did you use for the VTEPs? On which leaf pair did you configure the SVIs for the VTEPs?

3. If SVIs are configured on one leaf pair and the VMware hosts are connected to another leaf that does not have an SVI, how would the full benefit of ECMP be used?

Thanks in advance.

04-06-2018 12:12 PM

Hi !

First, I want to tell you that this project was cancelled, so it was never built and I don't know if everything would have worked. But this was the plan:

1. How many uplinks did you configure from one leaf to one spine?

Two in our case, but that depends only on your traffic needs.
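"Depends on your traffic needs" usually comes down to the leaf oversubscription ratio: host-facing bandwidth divided by spine-facing bandwidth. The small Python sketch below does that arithmetic. The port counts and speeds in the example (48 × 10G host ports, 40G uplinks) are hypothetical and were not given in the thread.

```python
def oversubscription(host_ports: int, host_gbps: float,
                     spines: int, links_per_spine: int, uplink_gbps: float) -> float:
    """Ratio of leaf downlink (host) bandwidth to leaf uplink (spine) bandwidth."""
    downlink = host_ports * host_gbps
    uplink = spines * links_per_spine * uplink_gbps
    return downlink / uplink


# Hypothetical leaf: 48 x 10G host ports, two 40G links to each spine.
print(oversubscription(48, 10, spines=2, links_per_spine=2, uplink_gbps=40))
print(oversubscription(48, 10, spines=3, links_per_spine=2, uplink_gbps=40))
```

With these assumed numbers, two spines give 480/160 = 3.0 (3:1). A third spine gives 480/240 = 2.0. This is the scaling path that a two-spine vPC design would block.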

2. What IP addressing did you use for the VTEPs? On which leaf pair did you configure the SVIs for the VTEPs?

It was cancelled before we designed an IP addressing scheme.

All leaf pairs would have SVIs configured as the default gateway (DG) for the machines connected to them.
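Because each leaf pair is the gateway only for its own hosts, each pair needs its own VTEP subnet. The Python sketch below carves one subnet per leaf pair out of a supernet using the standard `ipaddress` module. The supernet `10.20.0.0/16`, the /24 size, the pair names, and the "first usable address is the SVI gateway" convention are all hypothetical choices, since the thread never reached an addressing scheme.

```python
import ipaddress
from typing import Dict, List


def plan_vtep_subnets(supernet: str, leaf_pairs: List[str],
                      prefix: int = 24) -> Dict[str, Dict[str, str]]:
    """Assign each leaf pair its own VTEP subnet; first usable host = SVI gateway."""
    net = ipaddress.ip_network(supernet)
    available = 2 ** (prefix - net.prefixlen)
    if len(leaf_pairs) > available:
        raise ValueError(f"{supernet} only holds {available} /{prefix} subnets")
    plan = {}
    for pair, subnet in zip(leaf_pairs, net.subnets(new_prefix=prefix)):
        gateway = next(subnet.hosts())  # assumed convention: .1 is the gateway
        plan[pair] = {"subnet": str(subnet), "gateway": str(gateway)}
    return plan


if __name__ == "__main__":
    for pair, info in plan_vtep_subnets("10.20.0.0/16", ["leaf1-2", "leaf3-4"]).items():
        print(pair, info)
```

Since the subnets never span leaf pairs, VTEP-to-VTEP traffic between pairs is always routed through the spines, which is what lets ECMP spread it.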

3. If SVIs are configured on one leaf pair and the VMware hosts are connected to another leaf that does not have an SVI, how would the full benefit of ECMP be used?

You should have SVIs on all leafs in this design. All links between the leafs and spines are L3, so you can't reach an SVI on another pair directly. Traffic is routed to the destination, not just switched.

ECMP is used between the spines and leafs, not between the leafs and the VMware hosts.
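To show what "ECMP between spines and leafs" means per flow, here is a simplified Python model. It hashes a flow's 5-tuple and picks one of the equal-cost uplinks, so a given flow always stays on one link while different flows spread across all of them. This is only an illustration: Nexus hardware uses its own hash functions and fields, not SHA-256. The uplink names and flow values are hypothetical. VXLAN (RFC 7348) recommends deriving the outer UDP source port from a hash of the inner frame, and that is what gives the underlay hash varied input.

```python
import hashlib
from collections import Counter
from typing import List, Tuple

# (src IP, dst IP, IP protocol, src port, dst port)
FiveTuple = Tuple[str, str, int, int, int]


def pick_uplink(flow: FiveTuple, uplinks: List[str]) -> str:
    """Deterministically map a flow to one equal-cost uplink (illustrative hash)."""
    key = "|".join(map(str, flow)).encode()
    digest = int.from_bytes(hashlib.sha256(key).digest()[:4], "big")
    return uplinks[digest % len(uplinks)]


if __name__ == "__main__":
    # Hypothetical leaf with two links to each of two spines.
    links = ["spine1-a", "spine1-b", "spine2-a", "spine2-b"]
    flows = [("10.20.0.10", "10.20.1.10", 17, port, 4789)
             for port in range(49152, 49352)]
    print(Counter(pick_uplink(f, links) for f in flows))
```

Per-flow hashing keeps packets of one flow in order. The trade-off is that a single large flow can never use more than one link.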

04-08-2018 09:02 AM

Thanks a lot for your explanation.

09-11-2018 04:17 AM

Hi, does anyone know of a guide, paper, video or installation guide that describes HyperFlex installation with NSX?
